From virtual sets and on-air graphics to 8K video editing, NVIDIA will be at NAB 2019 powering demos for over 85 partners on the show floor. Here is a guide to some of the shows you absolutely must see.

What do The Weather Channel, RED Digital Cinema, Colorfront, Blackmagic Design, EVS, Autodesk, Zero Density and Mellanox have in common in their presentations at NAB 2019? One simple answer: they all use NVIDIA RTX technology to make it happen, whether it’s mixed reality graphics for a TV show or 8K video editing and color grading in real time at full resolution. It’s happening, and for a few days all this “magic” is demonstrated in one place: the NAB Show in Las Vegas.

https://youtu.be/SvHS8jPL6C4

As the industry moves quickly toward new ways to distribute content, RTX professional solutions are helping broadcasters and streaming services adapt to the mounting pressures of creating and delivering high-quality content faster than ever. Streaming is growing, and it’s not just aimed at your TV set: it has to reach people anytime, anywhere, on multiple platforms and in multiple formats, from tablets to smartphones.

New ways to distribute content

Distributing content is no longer just sending a signal and hoping for audiences to get it. The use of Artificial Intelligence solutions brings unlimited possibilities to enhance consumer experiences and deliver audience analytics to broadcasters. At the same time, broadcasters are discovering the advantages of integrating technologies like mixed reality to immerse audiences in the nightly news. Virtual Reality, Augmented Reality and Mixed Reality are no longer terms known only by a generation of geeks but something audiences expect to have served at home, with extreme quality.

https://youtu.be/q01vSb_B1o0

Watching the news has changed drastically in recent months, and experiences like those from The Weather Channel have demonstrated how audiences can be put in the heart of the story. To convey the severity of life-threatening weather conditions, the channel used a popular game engine, Unreal Engine 4, and NVIDIA graphics to show audiences what a nine-foot storm surge looks like. Used to illustrate the forecast for the Hurricane Florence storm surge, the sequence is a good example of how broadcasters can use mixed reality.

Mixed Reality in the news

With RTX ray tracing, cinematic-quality visuals are possible for ultra-realistic, live on-air graphics and virtual sets. We’re seeing more and more of these presentations, and not just for weather-related news. At NAB, see this technology powered by Epic Games’ Unreal Engine 4.22, Zero Density, Z by HP and NVIDIA Quadro RTX in the StudioXperience booth (SL3824) and in the Brainstorm booth (SL2812).

https://youtu.be/9UO0M2Eomqs

For the first time in the history of the industry, a ray traced virtual studio will be live in booth SL3824 in collaboration with Epic Games, NVIDIA and Zero Density. Zero Density and NVIDIA have partnered with Fox Sports for daily presentations in the StudioXperience to showcase the very latest advances in photo-realistic real-time virtual sets and on-air graphics.

The world’s first raw keyer

Zero Density offers the next level of virtual studio production with real-time visual effects. It provides an Unreal Engine native platform, “Reality”, with advanced real-time compositing tools and its proprietary keying technology. Reality is presented as the most photo-realistic real-time 3D Virtual Studio and Augmented Reality platform in the industry.

The StudioXperience booth is the place to go if you want to see the future happening. Following the release of Reality 2.7 in mid-March, Zero Density will showcase at NAB 2019 a preview of the 2.8 “Haytham” release with real-time ray tracing, in collaboration with NVIDIA and Epic Games. The company says it’s a “historical milestone” to see the world’s first raw keyer in action.

https://youtu.be/9K7qa3HK-pI

Brainstorm is another company present at NAB 2019, in booth SL2812, showcasing its complete product range featuring solutions for motion graphics, virtual sets and augmented reality for a variety of applications such as news, sports, entertainment, elections and presentations. In its 25th anniversary year, the company will highlight new developments focused on delivering the best rendering quality, including real-time ray tracing with NVIDIA RTX GPUs.

Changing the game in the news arena

InfinitySet, Brainstorm’s virtual set and augmented reality solution, will be shown in its latest version, 3.1, which includes powerful new features like real-time ray tracing, Unreal Engine 4.22 compatibility, HDR I/O, PBR, new effects and new 360-degree output. Last March, Brainstorm was selected among the winning projects of the 2019 run of Google’s Digital News Initiative (DNI).

The DNI Fund is a European programme that’s part of the Google News Initiative, an effort to help journalism thrive in the digital age. According to Francisco Ibáñez, Brainstorm’s R&D Projects Director, “we are excited to be part of Google’s Digital News Initiative, which will allow us to expand our advanced AR technology to new fields such as journalism, which will surely change the game in the news arena by providing additional tools for newsmakers.”

8K video editing and color grading

Video editing is rapidly moving to new solutions and ProVideo Coalition has revealed some of those changes in recent months, like RED’s 8K video editing using NVIDIA. At NAB 2019 it is possible to watch NVIDIA RTX supercharge 8K video editing and color grading in real time at full resolution in RED Digital Cinema’s meeting room (N201MR). As the industry moves to 8K UHD as the standard for creating ultra-high-quality 4K UHD content, more companies are exploring the new options, and during NAB 2019 there is another example to see, at the Colorfront suite at the Renaissance (Ren Deluxe I).

https://youtu.be/MRZiya1DW3c

Colorfront will showcase at NAB 2019 its Transkoder 2019 with 8K HDR playback of camera RAW files, as well as IMF workflows with Dolby Atmos / immersive audio, a brand new subtitling engine, support for IMSC1.1, multiple subtitle tracks, audio solo/mute and a new HDR GUI. The suite at the Renaissance Hotel is also the place to go to see On-Set Dailies 2019 demos with support for the latest camera formats including the ALEXA Mini LF, Sony Venice V3, RED 8K Decompression on NVIDIA GPUs, Blackmagic RAW and others.

AJA’s solutions at NAB 2019

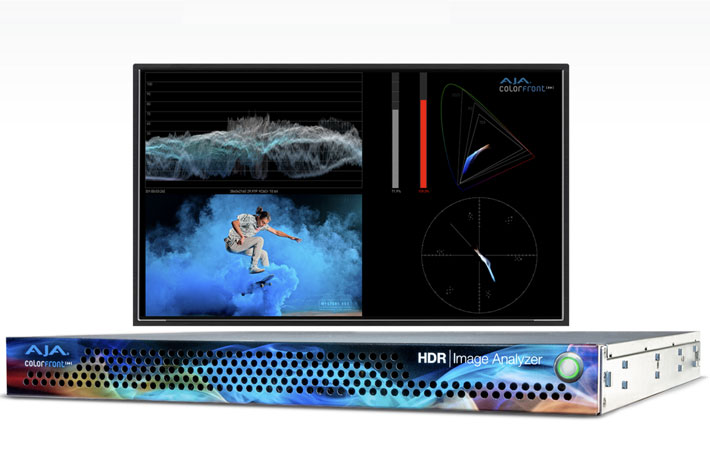

To discover the HDR Image Analyzer developed by Colorfront in partnership with AJA, you have to visit the AJA Booth #SL2416 at the Las Vegas Convention Center. AJA’s HDR Image Analyzer supports a wealth of inputs from camera LOG formats to SDR (REC 709), PQ (ST 2084) and HLG and offers color gamut support for BT.2020 alongside traditional BT.709. AJA’s hardware prowess ensures high reliability and performance, with 4x 3G-SDI input and output, and DisplayPort connections.

https://youtu.be/bi79vUO0GMk

AJA’s booth is also the place to see another product powered by Colorfront Engine proprietary video processing algorithms. The FS-HDR, a 1RU, rackmount, universal converter/frame synchronizer, is designed specifically to meet the High Dynamic Range (HDR) and Wide Color Gamut (WCG) needs of broadcast, OTT, production, post and live event AV environments, where real-time, low-latency processing and color fidelity are required for 4K/UltraHD and 2K/HD workflows.

DaVinci Resolve Super Scale

The task of reviving archived content is also easier now, thanks to NVIDIA RTX. If you want to see the new solutions available for broadcasters to easily up-res SD or HD content to 4K or 8K using AI, go directly to the South Hall (SL216) to see how Blackmagic Design’s DaVinci Resolve uses Super Scale technology.

To support super-resolution workflows and faster content delivery, Quadro RTX’s high-performance transcoding uses dedicated NVENC/NVDEC hardware to enable faster-than-real-time encoding and decoding of 8K video, according to NVIDIA.

ProVideo Coalition recently mentioned one example of machine learning used to give artists more creative options, and NAB is the place to go to see what Autodesk’s Flame 2020 does. The new GPU-accelerated machine learning feature set, along with a host of new capabilities, brings Flame artists significant creative flexibility and performance boosts.

Delivering 4K and 8K content faster

At the booth of Mellanox (SL6025), a company that NVIDIA announced last March it is acquiring, it is possible to see how its intelligent interconnect solutions increase data center efficiency by providing the highest throughput and lowest latency, delivering 4K and 8K content even faster to applications.

Delivering content to the home is no longer just a brute force task, and it has evolved to become highly intelligent. NVIDIA’s acquisition of Mellanox is a sign of the company’s understanding of those changes. AI has enabled broadcasters and live streaming services to make individualized recommendations to match content to people based on past likes or dislikes, or using a similarity index.

Broadcasters can further improve consumer experiences with AI by allowing personalized content filtering or using new inputs such as mood or specific multi-dimensional parameters (for example, “show me a quirky movie with a female lead in Paris”), writes Rick Champagne at the NVIDIA blog, adding that “With new AI solutions appearing every day, the possibilities are limitless. Broadcasters can now gain much deeper viewer insights using advanced data analytics with NVIDIA RAPIDS, and then visualize these insights in real time using an NVIDIA Data Science Workstation.”

Generating missing frames

To see how some of these new systems work, there is nothing better than going to the EVS booth (SL3816). The company is using NVIDIA GPUs as a hardware platform to execute the AI solutions it is developing for smarter and more efficient live production. From automatically steering robotic cameras using action prediction to creating super-slow motion footage by generating missing frames, EVS is demonstrating the power and flexibility of AI at NAB.

At the 2019 NAB Show EVS will demonstrate the centralized production workflow implemented by the University of Miami (UM) for its live sports production. Home to the Hurricanes and 17 varsity sports, the university is the first educational institution to deploy EVS’ software-defined Dyvi switcher for high-quality programming of multiple sports from a centralized media production hub.

EVS will also debut at NAB its XNet-VIA, an ethernet-based live media sharing network that facilitates ultra-fast sharing of high-resolution content between XT-VIA and XS-VIA servers. This matches the needs of the world’s largest and most demanding event producers, stadiums and arenas, and outside broadcast providers.
