After Effects is the industry standard application for motion graphics design, but like any major app, it can take years to understand all of its idiosyncrasies. Over 18 parts, the “After Effects & Performance” series looks at exactly what After Effects does, how it works, and why it has very different performance characteristics to 3D animation software.

Every design-related industry will see trends come and go, and market forces can also change over time, affecting the types of work that artists are requested to do. Only ten years ago, motion graphics design was clearly separate from 3D animation. The hardware requirements of a computer intended for 2D motion graphics were different to one intended for 3D. But since then, with Cinema 4D playing a significant role, motion design has increasingly adopted 3D animation as a fundamental tool. After Effects is now regularly used as a companion app to Cinema 4D, and computers intended for motion graphics design are now heavily geared towards 3D animation.

Parallel to this evolution in the creative world, real-time 3D engines have advanced to the point where we now have real-time ray tracing. Real-time 3D engines have a completely different technical approach to traditional 3D rendering, and their development has been mostly driven by the demands of the gaming industry. However, real-time 3D has just started to be used in film & television production, paving the way for future workflows that are radically different to those which have been used for the past 20 to 30 years.

Despite the rapid evolution of design trends, workflows, rendering technology and hardware capabilities, After Effects has steadfastly continued to do what it has always done – animate layers in a timeline. Unfortunately, it doesn’t always feel as though the performance of After Effects has improved in the same way that performance has improved for 3D animation. Many Cinema 4D users have spent loads of money on hardware to improve their 3D rendering speed, while discovering that After Effects isn’t really any faster.

A lot of the motivation behind this series came from me seeing people make poor purchasing decisions. Roughly ten years ago a colleague told me they’d bought an expensive “Quadro” video card, expecting After Effects to suddenly render everything incredibly fast. It didn’t, and I still flinch at the memory. With only a little bit of homework, thousands of dollars could have been saved, or better spent. When Apple released the “trashcan” Mac Pro, it was a similar situation. You could spend over $10,000 on one, but it wouldn’t make After Effects that much faster.

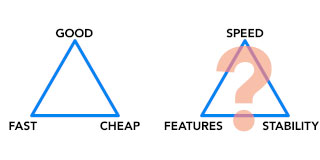

For many users, the notion of “performance” comes down to a purchasing decision, such as “what GPU should I buy”. But this series begins by looking at the many facets of “performance”, then takes us through the various hardware elements that make up our computers, and ends with an interview with the head of the After Effects Performance Team. Nearly all of these articles have a historical element to them, putting the problems facing current AE users into the context of an application that’s over 25 years old, and one that was designed for a different task than it’s generally used for.

Here’s a collated table of all the articles in the series, along with a quick summary of what they cover and why.

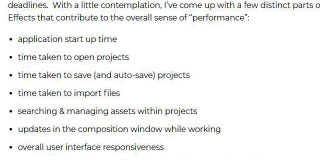

1. What is “Performance”?

The first article in the series introduces the concept of “Performance” as much more than raw rendering speed. It also lists a variety of different After Effects users who are all using After Effects for different things – and thus have their own unique idea of “performance”.

2. What After Effects actually does

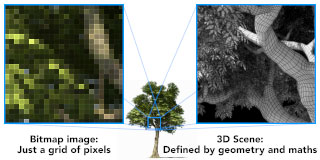

After Effects was first released in 1993, and was originally intended to be a desktop compositing application. Since then, it’s become the industry standard for motion graphics. But understanding its compositing origins gives us an insight into what After Effects can and can’t do. Part 2 is all about understanding what After Effects is doing when we hit that “render” button, and how it’s very different to rendering a 3D scene.

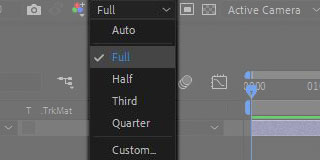

3. It’s numbers, all the way down

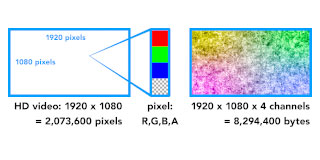

After Effects works with bitmap images, and to use a quote from Adobe: “it renders all the pixels, all the time”. Unfortunately, those pixels can quickly add up. In Part 3 we see how changes in resolution and bit depth can have a dramatic impact on rendering times. When After Effects was released in 1993, television was “standard definition”, while today we have cameras that can record in 8K and even 12K resolutions. A single frame of 8K video contains the same number of pixels as 80 frames of PAL, and 94 frames of NTSC video. A single frame of HDR 8K video requires 320 times more memory than a frame of 8-bit SD video. With multiple layers of video, the memory and storage requirements, as well as rendering times, can quickly add up.
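The ratios quoted above can be checked with a few lines of arithmetic. This is only a rough sketch: the frame sizes (720x576 for PAL, 720x486 for NTSC, 7680x4320 for 8K UHD), the four-channel RGBA layout and the 32-bit float reading of “HDR” are my own assumptions, not figures taken from Part 3.

```python
# Frame sizes in pixels. These rasters are assumptions for illustration:
# common 720-wide digital SD sizes, and 8K UHD for "8K".
FRAME_SIZES = {
    "PAL": (720, 576),
    "NTSC": (720, 486),
    "8K UHD": (7680, 4320),
}


def pixel_count(name):
    """Number of pixels in a single frame of the named format."""
    width, height = FRAME_SIZES[name]
    return width * height


def frame_bytes(name, bits_per_channel, channels=4):
    """Uncompressed size of one frame in bytes (RGBA assumed by default)."""
    return pixel_count(name) * channels * bits_per_channel // 8


# How many SD frames hold the same number of pixels as one 8K frame?
pal_ratio = pixel_count("8K UHD") / pixel_count("PAL")
ntsc_ratio = pixel_count("8K UHD") / pixel_count("NTSC")

# 32-bit float 8K frame compared with an 8-bit PAL frame.
memory_ratio = frame_bytes("8K UHD", 32) / frame_bytes("PAL", 8)

print(f"8K vs PAL pixels:  {pal_ratio:.1f}x")
print(f"8K vs NTSC pixels: {ntsc_ratio:.1f}x")
print(f"32-bit 8K vs 8-bit PAL memory: {memory_ratio:.0f}x")
```

Under these assumptions the pixel ratios come out at 80 and just under 95, and the memory ratio at 320: the 80x from resolution multiplied by the 4x from moving between 8 and 32 bits per channel.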


4. Bottlenecks and busses

Having established that After Effects processes bitmap images, and those bitmap images can contain a lot of pixels, it’s time to look at what this means for your computer hardware. Unlike 3D animation, working with video is very bandwidth intensive – After Effects spends a lot of time moving data around different parts of your computer. The way that After Effects performs can be heavily influenced by the speed of your storage, network and system bandwidth. Jumping back to the late 90s, we can learn a lesson about busses from The Brady Bunch.
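To get a feel for why bandwidth matters, here is a back-of-the-envelope sketch of how much data a single uncompressed stream of frames represents each second. The function name, the RGBA layout and the 25 fps frame rate are assumptions for illustration; footage on disk is usually compressed, so this describes decoded frames moving through memory rather than a disk read rate.

```python
def stream_rate_mb_per_s(width, height, fps, bits_per_channel=8, channels=4):
    """Data rate of one uncompressed video stream, in megabytes per second.

    Assumes every pixel is stored in full (RGBA by default), with no
    compression and no padding.
    """
    bytes_per_frame = width * height * channels * bits_per_channel // 8
    return bytes_per_frame * fps / 1_000_000


# One layer of 8-bit HD at 25 frames per second.
hd = stream_rate_mb_per_s(1920, 1080, 25)

# One layer of 32-bit float UHD at 25 frames per second.
uhd_float = stream_rate_mb_per_s(3840, 2160, 25, bits_per_channel=32)

print(f"8-bit HD:   {hd:.2f} MB/s per layer")
print(f"32-bit UHD: {uhd_float:.2f} MB/s per layer")
```

Multiply those figures by the number of layers in a composition and it becomes clearer why storage, network and system bandwidth can all end up being the bottleneck.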

5. Introducing the CPU

For most users, the CPU is the most important part of their computer. But unfortunately, choosing a CPU can be very difficult. One of the most confusing aspects of modern CPUs is that the clock speed – measured in GHz – doesn’t always relate to real-world performance. From the moment the first CPU was released in the early 1970s, manufacturers improved CPU performance by increasing their clock speed, and also by including additional instructions for specific purposes. Apple tried and failed to convince the public that CPU clock speed wasn’t that important – they called it the “megahertz myth”. But Intel kept pumping out faster and faster Pentium 4s, and ultimately Apple gave up arguing and switched to Intel processors.

6. Begun, the core wars have

Over the 20-year period following the release of the first CPU, CPU clock speeds slowly increased from 1MHz to 50MHz. But with the Pentium 4, Intel rapidly increased the average clock speed to 4GHz in only a few years. The public became accustomed to high clock speeds, and software performance improved dramatically without any special effort from programmers. But in 2006 the future of desktop computing was changed forever, when Intel discontinued the Pentium 4, and replaced it with their new dual-core CPU. The future of performance would come from more CPU cores, not faster ones.

7. Introducing AErender

One of the simplest ways to improve your productivity with After Effects is to begin background rendering. AErender is the key to rendering in the background while being able to continue working with After Effects. While you can do it for free, there are several 3rd party options available to make it simpler.
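As a rough idea of what the free, do-it-yourself route looks like, here is a minimal sketch that builds an aerender command and launches it without blocking. The install path, project path, comp name and output path are all hypothetical placeholders; `-project`, `-comp`, `-output`, `-s` and `-e` are aerender's options for the project file, composition, output path, and start and end frames.

```python
import subprocess

# Hypothetical install location; the real path depends on OS and version.
AERENDER = "/Applications/Adobe After Effects 2024/aerender"


def build_aerender_command(project, comp, output, start=None, end=None):
    """Build the argument list for rendering one comp with aerender."""
    cmd = [AERENDER, "-project", project, "-comp", comp, "-output", output]
    if start is not None:
        cmd += ["-s", str(start)]  # first frame to render
    if end is not None:
        cmd += ["-e", str(end)]    # last frame to render
    return cmd


def render_in_background(cmd):
    """Start the render as a separate process and return its handle."""
    return subprocess.Popen(cmd)


# Placeholder project, comp and output names, for illustration only.
cmd = build_aerender_command(
    "/projects/title.aep",
    "Main Comp",
    "/renders/title_[####].png",
    start=0,
    end=99,
)
print(" ".join(cmd))
```

Calling `render_in_background(cmd)` hands the job to a separate process, which is what leaves the main After Effects application free to keep working.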

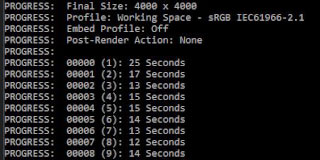

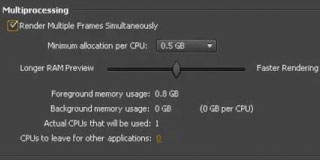

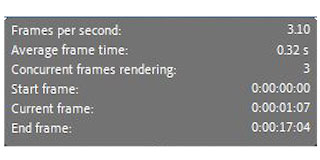

8. Multiprocessing (kinda sorta)

When After Effects CS3 was released in 2007, it included a new feature called “render multiple frames simultaneously”. This feature attempted to use multiple CPU cores to speed up rendering performance. Did it work? Sometimes… While the “RMFS” feature was dropped after CC 2014, Part 8 looks at how it worked, why it didn’t always work, and what that might mean for the future.

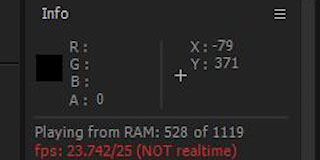

9. Cold, hard cache

After Effects really, really loves RAM. The more memory your machine has, the more frames After Effects can cache away, so it doesn’t have to re-render. To put it simply – storing a frame in RAM is much faster than rendering it from scratch. While having more memory might not make your final renders faster, it can definitely improve your productivity by reducing the amount of processing After Effects needs to do while you’re working.
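To put some numbers on that, here is a simple sketch of how many uncompressed frames would fit in a given amount of RAM. It is an upper bound under my own assumptions: RGBA frames, no compression, and all of the stated memory available for cached frames, which is never quite true in practice.

```python
def frames_that_fit(ram_gb, width, height, bits_per_channel=8, channels=4):
    """Upper bound on how many uncompressed frames fit in ram_gb gigabytes.

    Assumes RGBA frames and that all of the memory is available for
    cached frames, ignoring the OS, the application and other overhead.
    """
    bytes_per_frame = width * height * channels * bits_per_channel // 8
    return int(ram_gb * 1024**3) // bytes_per_frame


# HD frames in 16 GB of RAM, at 8 bits and at 32 bits per channel.
hd_8bit = frames_that_fit(16, 1920, 1080)
hd_32bit = frames_that_fit(16, 1920, 1080, bits_per_channel=32)

print(f"8-bit HD frames in 16 GB:  {hd_8bit}")
print(f"32-bit HD frames in 16 GB: {hd_32bit}")
```

Moving from 8-bit to 32-bit cuts the number of frames that can be cached to a quarter, which is one reason the same machine can feel so different from one project to the next.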

10. The birth of the GPU

The GPU – or graphics card – has had an incredible impact on computer graphics. But how did we get to where we are today, with real-time ray tracing and GPU rendering? The simple answer is games. But the longer version is far more interesting, and includes a few guys who left their prestigious jobs at Silicon Graphics with the dream of bringing 3D animation to cheap home computers. OK, so there’s not much to do with After Effects in this article, but it’s a great story!

11. The rise of the GPGPU

The first GPUs weren’t even called GPUs, they were just 3D graphics cards – and most people bought them to play games like Quake. In 1999 nVidia released the first graphics card with the “GPU” label, and in 2006 they released the “GPGPU” – a graphics card that could be programmed with a specific language called CUDA. The era of “GPU acceleration” arrived, and “graphics cards” would never be the same.

12. The Quadro conundrum

If you’re shopping for a new GPU, then both nVidia and AMD have two distinct product lines. nVidia have their gamer orientated range which they brand “GeForce”, but they also have a “professional” line under the “Quadro” name. AMD have the same approach, with their “Radeon” range for gamers and the “FirePro” range for graphics professionals. But what’s the difference, and why are Quadros / FirePros so much more expensive? Unfortunately, the answers aren’t clear at all…

13. The wilderness years

When After Effects became a 64-bit application in 2010, and was freed from the 4 gigabyte memory limit, it represented the single biggest leap in performance since version 1. But in the years following the release of CC 2014, After Effects seemed to stagnate – just as GPUs were taking off and 3D animation was advancing in leaps and bounds. Most significantly, with the release of CC 2015 the RAM preview function was broken – and remained broken for the next few years.

14. Make it faster for free

Buying expensive hardware isn’t the only way to make After Effects faster – in fact, it’s quite easy to spend money on CPUs and GPUs that won’t make any difference to After Effects at all. But by changing your workflow, and understanding the “all the pixels, all the time” approach of After Effects, you can dramatically improve your productivity without spending any money at all. Some file formats, such as PNGs, are inherently slow. Colour management, and Log / Raw file formats are also performance hogs.

15. 3rd Party Opinions

It’s pretty easy to find After Effects users who think it’s slow, and the Adobe user-voice forum has popular feature requests for improved multi-threading and GPU support. But how easy and feasible is it for Adobe to improve performance? I interviewed a range of professional software developers for their opinions.

16. A few bits and bobs

With the series drawing to a close, there were a few bits and pieces that had slipped through the cracks, or had been left on the cutting room floor. Part 16 looks back on the range of After Effects users listed in Part 1, and details how the projects they work on place different demands on their computer hardware. We also revisit CPUs and basic purchasing advice – with cheaper CPUs limiting RAM options, and cheaper motherboards limiting bandwidth. But perhaps the most significant section of the article focuses on how real-time 3D engines work totally differently to conventional 3D software.

17. Interview with Adobe’s Sean Jenkin

In Part 15 I interviewed a range of professional software developers for their thoughts and opinions on After Effects. Now, it’s Adobe’s turn to talk. Sean Jenkin heads up the “Performance” team at Adobe, and he sat down to discuss all aspects of After Effects and Performance. The resulting chat was so long I had to split the article into 2 parts. We begin by discussing performance in a more general sense, and hear directly from Adobe about what’s happening behind the scenes with After Effects development.

18. Interview with Sean Jenkin, part 2: Multi-Frame Rendering

Finally, we wrap up the series with a look at “Multi-Frame Rendering” – the most exciting new feature After Effects has had in over 10 years. Sean continues our chat by detailing all of the work that his team have done to take advantage of multi-core CPUs, and what that means for the future.

Have you enjoyed this series? I posted my first After Effects tutorial in 2002, and I’ve been writing for the ProVideo Coalition for over 10 years. Check out some of my other After Effects articles.
