Introducing the CPU – Confusing Products, Unfortunately…

At the heart of every modern computer is the CPU – the central processing unit. For several decades the CPU has been the main focus of the computer, with the name of the CPU sometimes used as the name for the computer itself. In the 1990s Windows users were introduced to the “Pentium”, and Apple would go on to release a range of G3, G4 and G5 computers. The CPU has become synonymous with the actual computer, the definitive part of the machine.

Choosing a CPU for a new computer can be a daunting task, aside from the huge variation in cost. The majority of desktop computers are using CPUs made by Intel, and they have a truly overwhelming range. To begin with, Intel group their desktop processors into five “families” – often compared to how BMW group their cars. The basic families are i3, i5, i7, i9 and then the X-series (named “series” but listed as a distinct “family”, to make it more confusing).

Once you’ve got your head around the “families”, you move onto the “series”, including the “T-series”, the “K-series”, the “E-series”, the “H-series” and the “P-series” – but not the “X-series”, as that’s a family… Ultimately, within each family there may be dozens of options – if you want a list then it’s not just a simple chart on a website, you need to download an Excel spreadsheet. I’m not even sure how many variations there are – one list I found included 212 distinct products in Intel’s current “Core” lineup, but that may not be the final figure.

Trying to understand the differences between them plunges you into a world of technical jargon: core counts, clock speeds, cache sizes and terms like “hyperthreading”, turbo boost and overclocking. Comparing all of the options – along with all of the prices – is the domain of dedicated hardware and CPU websites, and even then many only begin to scratch the surface.

It’s hard enough with the standard Intel desktop CPUs. Apart from the five families listed above, Intel also have their separate “Xeon” range, and that’s before we consider AMD. AMD have done a remarkable job with their “Threadripper” range of CPUs, and every new release gives Intel a gentle kick up the rear. For the first time in many years, Intel has serious competition on the desktop. Anecdotally it seems that 3D artists are leaning towards Threadrippers in new machines, and competition can only benefit consumers over the next few years.

Sweeping generalisations

This series is looking at the history of After Effects and performance, and it’s reasonable to assume that the CPU will be a major part of that. For the average After Effects user, the main thing we’re interested in is how CPUs keep getting faster and faster, and whether After Effects is getting faster with them. We don’t need to understand exactly how they work, although we want to know just enough to help us understand marketing terms. So the intention is to find a balance between giving enough detail to explain the relevance of a CPU to After Effects, but not so much that we lose focus.

In fairness, there’s not much After Effects in this article, and most of it hovers somewhere between trivia, mildly interesting, and slightly boring. But the point of publishing these articles regularly is to prevent me from getting bogged down by spending months editing and re-editing. Instead, I’ll just hit the “publish” button and you can choose to read as much or as little as you want. At this stage it looks like I’ll be posting 3 separate articles on the CPU, and this one is more of a general overview.

TL;DR 1: If you’re reading this article (or even this series) in the hope of a simple recommendation as to what’s the best CPU, then let me disappoint you now. I can’t make such a sweeping generalisation. What I can do is help you begin to cut through the jargon, but most importantly try to give an indication of how the CPU relates to After Effects. The main thing is not to spend too much money. If you have a set budget then it’s much easier to overspend on the CPU than it is to overspend on RAM or SSD storage.

If you just want to build a workstation for After Effects, this is all very confusing. For the CPU alone, you can spend about $50 on a brand new Intel i3 processor, or over $3,000 for a Xeon. Does the $3,000 Xeon W-3175X processor render things 60 times faster than the cheapest i3?

While it’s reasonable to consider the CPU the “heart” of a computer, there’s one important thing to note when talking about the performance of a specific application such as After Effects.

A CPU is designed to be able to do pretty much anything.

You only have to look at the bottom of your screen to see that. Mac users have the dock, Windows users have the taskbar, and both are used as shortcuts for the apps you use the most on your computer. It doesn’t matter how few or how many icons you’ve got down there, all those icons represent a very wide range of things you use your computer for. That’s because the same CPU can be programmed to do all sorts of different things. CPUs aren’t limited to desktop computers, either. The same CPU you use for office work, to play games and watch cat videos on YouTube, can also be used to drive a car, run your TV and even your fridge. If you’re the proud owner of a Segway, you might not have known that the first generation used a Pentium III CPU to stop you falling over – possibly the same CPU that was in your computer.

This might sound obvious, but the point is that a CPU is not specifically designed to do one thing. CPUs, even though the latest models are fast and powerful, are still general purpose items. With the right software, a CPU can do anything.

1971 was 48 years ago

In Part 6 of this series (the next one, this is part 5), I’ll be looking at the more recent history of desktop CPUs and explaining why 2006 was a huge milestone in the history of computing – After Effects included. In 2006 everything changed, and is still changing. But before we look at what those changes were and why they’re so significant, let’s begin by looking at CPUs before 2006.

TL;DR 2: Go to the library (or Amazon)

The term “CPU” has become so common that it’s easy to overlook the history behind the term, and how computers developed. To avoid getting excessively sidetracked, I’m going to recommend two books which go into much more detail. Together, they cover everything you’d ever want to know about CPUs and how computers really work. The first is “Code”, by Charles Petzold. “Code” begins with very basic concepts that are easy to understand – such as how a simple switch can turn a light on and off. Blinking lights lead to Morse code: turning one switch on and off can represent letters & numbers. The next step is to use more than one switch; by using a collection of switches to represent numbers we’ve developed binary – the ones and zeroes that computers use.

From here, “Code” takes you step by step through all of the principles that make a computer possible. Switches can be combined together to form logic gates, logic gates can be combined together to do binary arithmetic, and by synchronizing enough parts together we can create an autonomous calculator – a computer. If you accidentally time travel back before the 1940s, “Code” is the book you want to have with you, so you can construct a recognizably modern computer and presumably use it for good and not evil.
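To make the “switches become gates, gates become arithmetic” idea concrete, here is a minimal sketch in Python. It is not taken from the book; the function names and the choice of 4-bit numbers are assumptions made purely to keep the example small. Each “gate” is a one-line function, and the adder is built only from those gates.

```python
# Toy model: build binary addition out of nothing but logic gates.
# All function names are illustrative; none of this comes from "Code" itself.

def AND(a, b):
    return a & b

def OR(a, b):
    return a | b

def XOR(a, b):
    return a ^ b

def full_adder(a, b, carry_in):
    """Add three single bits; return (sum_bit, carry_out)."""
    partial = XOR(a, b)
    sum_bit = XOR(partial, carry_in)
    carry_out = OR(AND(a, b), AND(partial, carry_in))
    return sum_bit, carry_out

def add_4bit(x, y):
    """Add two 4-bit numbers by chaining four full adders together."""
    carry = 0
    result = 0
    for i in range(4):
        a = (x >> i) & 1          # pick out bit i of each number
        b = (y >> i) & 1
        s, carry = full_adder(a, b, carry)
        result |= s << i
    return result, carry          # carry is 1 if the answer overflowed 4 bits

print(add_4bit(5, 6))   # (11, 0)
print(add_4bit(9, 9))   # (2, 1): 18 does not fit in 4 bits
```

The second result shows why the number of bits matters: with only four “switches” to hold the answer, anything above 15 spills over into the carry.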

The book begins with a torch and ends with the Intel 4004 – the first microprocessor. The Intel 4004 was released in 1971, made with 2,300 transistors, and was the first product that contained all of the necessary components for a CPU on a single chip.

For comparison, a current desktop CPU has about 20 billion transistors.

From the moment that Intel released the 4004, the battle was on to make CPUs that were faster and more powerful. Again, because this series is aimed at After Effects users, I’ll simply recommend another book to anyone who wants more details. “Inside the Machine” by Jon Stokes picks up where Code finishes, and details all of the improvements and enhancements made to microprocessors up until Intel released the Core Duo in 2006 (remember above when I said that 2006 was a milestone year?). The book covers many of the chips used in popular desktop computers, including the G3, G4 and G5s used by Apple, as well as the Pentium series used in Windows machines.

We don’t need to go through every single CPU released over the last 40+ years, but we do want a rough outline of what’s happened over that time to make computers faster. To understand this, and to understand how CPUs got to where they are today, we need to jump right back to the beginning – when they were first invented.

An Absolute Unit

The short version is that a CPU is just a very fancy calculator. Everything your computer does – from browsing the internet to working in After Effects – can be broken down to different types of calculations. The name “central processing unit” came about because in the very early days of computers, these calculations were not done by a single microchip (they hadn’t been invented yet) but by a collection of distinct circuit boards that worked together as a “unit”.

Modern computers still allow expansion “cards” to be plugged in to add functionality to your computer. Your desktop computer will have a video card, and you might need one for 10-gig Ethernet or a Thunderbolt connection. However in the very early days of computers, even basic functions such as multiplication relied on specific “cards” being added to the “central processing unit”. The overall CPU might be the size of a room, or at least a filing cabinet. Wikipedia notes that by 1968, CPU design had advanced to the point that the latest models needed “only” 24 individual circuit boards.

The first microprocessor, the Intel 4004, was a big deal because it took a whole load of separate circuit boards and stuck them together on a single chip.

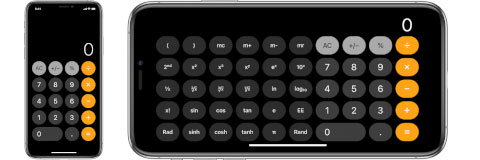

An Abacus with buttons

If we think of a computer as a fancy calculator, it’s easy to understand that some calculators have more features than others. While the most basic calculators only have 4 functions – addition, subtraction, multiplication and division – others have many more. You can buy “scientific calculators” with dedicated functions for things like trigonometry and logarithms. CPUs are the same: there are many different types and some have more features than others. The list of built-in functions that a CPU can do is referred to as the “instruction set”. The first microprocessor, the 4004, had 46 unique instructions – you could think of it as a calculator with 46 different functions.

The desktop calculators that we’ve grown up with only perform one calculation at a time, usually when we press the “=” button. What differentiates a computer from a desktop calculator is the ability to perform sequences of calculations, automatically. We can think of a computer program as a list of instructions for a calculator to work through without us having to press the “=” button after each one.

Devising a sequence of instructions that will do something useful is the basis of computer programming.
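As a rough illustration of “a list of instructions for a calculator to work through”, here is a small Python sketch. The instruction names (LOAD, ADD and so on) are invented for this example and don’t correspond to any real CPU’s instruction set.

```python
# A "program" is just a list of instructions worked through in order.
# The instruction names are made up for illustration only.

def run(program):
    accumulator = 0  # think of this as the calculator's display
    for operation, value in program:
        if operation == "LOAD":
            accumulator = value
        elif operation == "ADD":
            accumulator += value
        elif operation == "SUB":
            accumulator -= value
        elif operation == "MUL":
            accumulator *= value
        elif operation == "DIV":
            accumulator /= value
        else:
            raise ValueError(f"unknown instruction: {operation}")
    return accumulator

# (3 + 4) * 5 - 10, with nobody pressing "=" along the way
program = [("LOAD", 3), ("ADD", 4), ("MUL", 5), ("SUB", 10)]
print(run(program))  # 25
```

The toy “instruction set” here has five entries. Adding a sixth would mean adding another branch to `run`, which is loosely what it means for a CPU to gain a new instruction.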

Compilation Tape

This article is intended to give a general overview of how CPUs developed for the first 30+ years, but before we go any further we need to stop for a second. It’s natural to read about new features and technical developments and wonder if After Effects has benefitted from these changes, and complain if we think they haven’t. The simple answer is that using all of the features in a CPU is mostly an automatic process, and not necessarily something that the programmers at Adobe have to do manually.

Very early computers were programmed by hand. Each individual instruction was entered by flipping switches on a control panel. This was just as tedious as it sounds. The next step was to program computers by punching holes in paper cards, which could be fed into an electronic card reader, and at some point we got to where we are today, with keyboards and screens.

While it’s obviously much easier to type on a keyboard than it is to flick switches on a box that doesn’t have a screen, an equally revolutionary step took place in the early 1960s. Up until that point, each individual computer – whatever it was, and however the CPU was built – needed to have software written just for it. Computer programs were written using the specific set of instructions that the CPU had – and as long as CPUs were a collection of individual circuit boards, each computer could be more or less unique. There was no idea of compatibility. By 1960 IBM had five different computer lines for sale, but they all needed their own distinct software.

To underline how chaotic this was, imagine going to an Apple store today and buying an iMac, a Macbook, a Mac Mini, and a Mac Pro, and then discovering that none of them can run the same software as another. Each one has their own different app store and their own apps. If you purchase a program for your iMac, it won’t work on any of the others. And they all have completely different operating systems, too.

That’s how it was in the 1950s. It was crazy.

IBM changed this forever in the 1960s with their revolutionary 360 line, a family of computers designed to be compatible with each other, and with programming languages such as “Fortran”, which IBM had first released in 1957. Computer programs didn’t have to be custom written for a specific computer. Instead, programmers could write programs using more friendly and easy to understand “languages”. These computer programs were then converted into the set of instructions that each CPU could run, a process called compiling. The same program could be compiled for a range of different computers. This is still how the computer industry works today.

Thousands of different programming languages have been developed, all designed for specific tasks. The first “high level” language – Fortran – was specifically designed for scientific and engineering calculations. It’s still used today on the fastest supercomputers in the world. Many, many other languages soon followed. BASIC was a language aimed at beginners, and ended up being used to save the world in an episode of “Stranger Things”. After Effects users will recognize JavaScript as the language used for After Effects expressions, while many 3D apps use a language called Python in a similar manner.

After Effects itself has been written in a few different languages since 1993, for at least three separate processor families (68k, PowerPC, x86).

There was a period of time when some Apple computers used Intel chips and others used PowerPC chips, two totally different products by different, competing companies. Any program made for Apple computers actually contained programming code for two different CPUs – one for Intel chips and one for PowerPC – and the computer would work out which version to use.

The main point, before we dive back into the hardware stuff, is to understand that computer programmers write software – such as After Effects – using one language, and this is compiled into actual computer code by a computer. The different features of different chips & CPUs are automatically utilized where necessary.
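The idea of one program being compiled for different CPUs can be sketched with a deliberately tiny “compiler”. Everything here is hypothetical: the two target names and their instruction lists are invented, and a real compiler is vastly more sophisticated. The point is only that the programmer writes one line, and the compiler decides which instructions each chip gets.

```python
# Toy "compiler": the same source line is translated into the instruction
# lists of two imaginary CPUs. Both instruction sets are hypothetical.

def compile_for(source, target):
    """Translate 'a + b' or 'a * b' into instructions for the named target."""
    left, operator, right = source.split()
    if target == "cpu_with_multiply":
        opcode = {"+": "ADD", "*": "MUL"}[operator]
        return [f"LOAD {left}", f"{opcode} {right}"]
    if target == "cpu_without_multiply":
        if operator == "+":
            return [f"LOAD {left}", f"ADD {right}"]
        # No multiply instruction on this chip: fall back to repeated addition.
        return ["LOAD 0"] + [f"ADD {left}"] * int(right)
    raise ValueError(f"unknown target: {target}")

source = "6 * 3"
print(compile_for(source, "cpu_with_multiply"))     # ['LOAD 6', 'MUL 3']
print(compile_for(source, "cpu_without_multiply"))  # ['LOAD 0', 'ADD 6', 'ADD 6', 'ADD 6']
```

The source line never changes. The chip with the extra feature simply ends up with a shorter list of instructions.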

While we take the concept of a “microchip” for granted, we shouldn’t overlook how significant it was to take a collection of individual components the size of a refrigerator and combine them all together into something the size of a postage stamp.

I got rhythm, I got cycles

From the general public’s perspective, there’s one single factor that indicates the speed of a CPU over any other: the clock speed. Bigger numbers sound better, and so it’s reasonable to assume that a 3 GHz CPU is faster than a 2 GHz CPU. Right?

Even though “Code” demonstrates how to build a basic computer using only relays and light bulbs, all the different parts still need to be synchronized together. This is done with a very precise clock, and the speed of the clock is – quite obviously – referred to as the “clock speed”. The clock co-ordinates all of the calculations and other data movement around the CPU. It’s like the drummer in a band, keeping all of the other band members in time with each other. The clock inside the CPU is there to keep all the parts of the CPU synchronized by providing very precise beats. But while the clock speed is widely advertised, e.g. 3 GHz, that doesn’t mean that every part of the CPU is doing something new on every single beat. If we compare a CPU to a string quartet playing Pachelbel’s Canon, some parts are like the violin players, whizzing through calculations on every beat while others are like the cello players, who only change notes every four beats while secretly wishing they learned guitar instead. Even though they’re all playing the same song at the same tempo, by the time the piece has finished the cello players will have played 224 notes, while the violin players will have played many thousands.

So although the clock speed is the most widely advertised aspect of a CPU, it’s misleading to assume that something always happens on every tick of the clock. In practice, different calculations take different amounts of time – a different number of beats – to complete. Ideally, every instruction that a CPU can do would take only 1 beat of the CPU’s drum, but even with modern processors some of the more complex instructions can take many beats (in computer terms, a “beat” is called a “clock cycle”).

The clock in the CPU keeps everything running smoothly, but it’s only a rough indication of how many calculations the CPU is doing. The first microprocessor, the Intel 4004, used a clock with a speed of 740 kHz – that’s the equivalent of 740,000 drum beats every second. However that equated to anything from roughly 46,000 to 92,000 actual instructions per second, because different instructions took different amounts of time to complete – the fastest instructions took 8 clock cycles to calculate while the slowest took 16.

If you’re in marketing and you wanted to make the Intel 4004 sound fast, you would look for the instruction that took the fewest clock cycles. The add instruction only took 8 clock cycles to calculate, and 740,000 divided by 8 is 92,500. So you’d write up some fancy ads claiming that the Intel 4004 could do 92,500 instructions per second.

But if you work for the competition, and you want to make the processor sound slow (and their marketing department look like liars) you find the slowest calculation and do the same thing. The slowest instructions actually took 16 cycles to complete, and 740,000 divided by 16 is 46,250. So you’d tell everyone that Intel was wrong, and that it only did 46,250.

Both of these claims are misleading, because you can’t write a program with only one command. To get a realistic figure, you’d need to consider all of the commands that a CPU can do, in the same proportions that programmers use them. This is really hard, and lots of people try to do it, and it seems to be a good way to start online arguments.
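The arithmetic behind the two marketing claims, and a more “realistic” figure, can be sketched in a few lines of Python. The 740 kHz clock and the 8 and 16 cycle counts come from the text above. The 70/30 split between fast and slow instructions is an invented assumption, only there to show how the proportions change the answer.

```python
CLOCK_HZ = 740_000  # Intel 4004 clock speed, as quoted in the article

def instructions_per_second(cycles_per_instruction):
    return CLOCK_HZ / cycles_per_instruction

best_case = instructions_per_second(8)    # the flattering advert
worst_case = instructions_per_second(16)  # the competitor's version

# Hypothetical mix: 70% of the instructions in a program take 8 cycles
# and 30% take 16. These proportions are invented for illustration.
mix = {8: 0.70, 16: 0.30}
average_cycles = sum(cycles * share for cycles, share in mix.items())
realistic = instructions_per_second(average_cycles)

print(best_case)         # 92500.0
print(worst_case)        # 46250.0
print(round(realistic))  # 71154
```

Change the proportions in `mix` and the “realistic” figure moves anywhere between the two extremes, which is exactly why people argue about it.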

Making dubious claims about the speed of a CPU while debunking the same claims made by your competition is something that still goes on today.

The need for speed

The clock provides the timing required to keep all of the parts of a computer synchronized. It sounds reasonable that using a faster clock will result in a faster processor, just as a band will play songs at a faster tempo if the drummer starts playing the drums faster. Increased clock speeds of CPUs are the most obvious improvements since 1971. The original 4004 had a clock speed of 740 kHz, and over the next 30 years the average clock speed increased from 1 MHz (1 million cycles per second) to 1 GHz (1 billion cycles per second), and now the average is around 3 GHz.

However, making the clock faster is not the only way to make a processor faster and more powerful. Remembering that most instructions take several “drum beats” to calculate, then reducing the number of beats required to complete each one would mean more instructions are completed in the same time. If the Intel 4004 took an average of 8 clock cycles to complete an instruction, then a chip that can do 1 instruction every clock cycle will be 8 times faster, even at the same clock speed.

Another way to make processors faster is to add new instructions. A CPU that only has a small set of functions, like the 4004, will need to combine many of them together in complex algorithms to calculate anything that it can’t do itself. One example is finding the “square root” of a number. In older CPUs there was no single instruction for the processor to find the square root of a number, and programmers had to use various algorithms and programming techniques that involved dozens of separate calculations. This could be very slow. But if a different CPU had a “square root” function built in, then it would be much simpler and much faster. Multiple lines of programming code could be replaced with a single command.
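To give a feel for the difference, here is a sketch of one classic technique, Newton’s method, next to Python’s built-in `math.sqrt`. Newton’s method is my choice of example and not necessarily what programmers of any particular chip used. The function name, the starting guess and the number of steps are all assumptions.

```python
import math

def square_root_by_hand(number, steps=20):
    """Newton's method using only add and divide.

    Assumes a non-negative input; 20 steps is an arbitrary choice that is
    plenty for small numbers.
    """
    if number == 0:
        return 0.0
    guess = number / 2 if number > 1 else 1.0
    for _ in range(steps):
        guess = (guess + number / guess) / 2  # refine the estimate
    return guess

print(square_root_by_hand(2.0))  # many separate calculations
print(math.sqrt(2.0))            # one call
```

Each pass through the loop is several separate instructions for the processor, repeated over and over. On most desktop machines `math.sqrt` typically ends up using the processor’s own square root instruction, so the whole loop collapses into a single step.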

This concept leads to “co-processors”. While a “CPU” is the main chip inside a computer that does most of the work, you can also get specialized chips for specific tasks. One of the earlier examples of this was an “FPU” – or “floating point unit”. Even in the early 1990s, many common desktop CPUs did not have the built-in capability to deal with floating point numbers – that’s fractional numbers, or numbers with decimal places. With clever programming, it’s possible to do floating point maths using only integer numbers, but it’s very slow.
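As a taste of what “clever programming” with integers looks like, here is a sketch of fixed-point arithmetic, a simpler relative of full software floating point. Every value is stored as a whole number of thousandths. The scale of 1000 and all of the function names are assumptions for this example, and it only handles non-negative numbers.

```python
# Fixed-point sketch: store every value as an integer count of 1/1000ths.
SCALE = 1000  # three decimal places; an arbitrary choice for this example

def to_fixed(text):
    """Convert a decimal string like '2.5' into a scaled integer (2500)."""
    whole, _, fraction = text.partition(".")
    fraction = (fraction + "000")[:3]   # pad or trim to three digits
    return int(whole) * SCALE + int(fraction)

def fixed_multiply(a, b):
    # The raw product is scaled twice, so divide one scale back out.
    return (a * b) // SCALE

def to_text(value):
    return f"{value // SCALE}.{value % SCALE:03d}"

first = to_fixed("2.5")     # stored as 2500
second = to_fixed("1.25")   # stored as 1250
print(to_text(first + second))                 # 3.750
print(to_text(fixed_multiply(first, second)))  # 3.125
```

Even this simplified version needs extra steps for every multiplication. Proper floating point done in software needs far more bookkeeping, which is where the slowness comes from.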

Alternatively, you could purchase an optional FPU: a dedicated chip designed specifically for dealing with decimals. The FPU would work alongside the CPU, with the FPU calculating maths with decimal places many, many times faster than the CPU could by itself. A square root can be calculated on an FPU with a single instruction, in a fraction of the time. These days all desktop CPUs have an FPU built in. But if you’re trying to compare the speed of two different processors and one has an FPU and the other doesn’t, then you get different results depending on what sort of calculations you’re doing. If you’re comparing the time needed to calculate a square root, then the CPU with a square root instruction built in will be much faster than one that doesn’t. But if you’re comparing speeds of instructions that both CPUs have, then the results will be more similar.

Having a dedicated chip to process specific types of tasks is massively faster. Without jumping too far ahead, these days most computers also have some sort of “GPU” – a “graphics processing unit”, and every so often games developers talk about a “PPU” – or “physics processing unit”, a dedicated chip for processing physics simulations in games.

For complete details on all the history and methods employed to make faster and more powerful chips since the original 4004, I recommend “Inside the Machine”.

Reading the instructions

While it’s easy to generalize computers by saying that they’re simply doing a bunch of calculations on a bunch of numbers, there have been many different approaches to exactly how those calculations are done, and even what they are.

The Intel processors used in most computers today use a set of instructions called “x86”, dating back to the 8086 processor released in 1978. The 8086 had a total of 117 unique instructions, and even the most complex computer programs were created using only those 117 instructions. Over time, more and more instructions have been added to the original x86 list. While it’s surprisingly difficult to know exactly how many instructions a current Intel CPU has, it’s well over 1,500.

The “square root” function is an example of a single new instruction replacing dozens of lines of programming code. It’s rare for a single new instruction to be added in isolation. Usually when new instructions are added to a processor they’re part of an overall collection designed for a specific purpose, or they already exist as a separate product. The FPU mentioned earlier is an example of an existing product – a floating point unit – being merged with the main CPU. The “square root” function wasn’t added by itself; it was added as a part of a whole suite of instructions for floating point maths. The “Pentium” processor, released in 1993, was the first Intel x86 chip to include an FPU across all models. The previous model, the 80486, had several variations – some included an FPU but cheaper models didn’t, with the FPU available to purchase as a separate chip.

Around the same time – the mid 90s – the internet was taking off, and home computers were beginning to include sound and graphics capabilities as standard. While it’s almost impossible to imagine a home computer that can’t play music, videos, or even show a photo in full colour, for a long time all of these capabilities were only available with a range of add-on hardware. If a computer had a CD-Rom drive, a sound card and a graphics card that could display more than 16 colours then it was marketed as a “multimedia” computer.

After Intel released the “Pentium”, which included a FPU with all models, attention turned to features that would help computers to process sound and image files. Dedicated processors for working with video already existed, and they used a fundamentally different design to a basic CPU. As we looked at in earlier articles, images and videos are just very large collections of numbers (pixels), and in many cases processing an image involves repeating exactly the same calculation to every pixel in the image. While computers are generally very good at repetitive tasks, there’s still a lot of wasted overhead in having a general purpose CPU load up each pixel individually, before performing exactly the same calculation on each one.

As far back as the 1970s, specialized chips were developed for just this type of processing. When they were being developed they were often called “vector processors”, but the more modern term is SIMD – meaning Single Instruction, Multiple Data. A SIMD processor is specifically designed to do the same calculation to lots of numbers together, which is where the term “SIMD” comes from.

As an example of how SIMD works, let’s look at a single HD frame that we want to brighten. In this example, to brighten the image we will just add a constant number (e.g. 10) to every pixel. However with 3 channels of a 1920×1080 image, we have 6.2 million numbers that all need to be increased by 10 (we covered all of this in part 3). You have a Single Instruction (add 10), and Multiple Data (6.2 million values). A SIMD processor can be told to take 6.2 million numbers and add 10 to each one, and because it’s designed to do just that, it will be much, much faster than a CPU. It’s not that a CPU can’t do it, but that there’s always potential to make a specialized tool with higher performance for one specific task.
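The difference between the two approaches can be modelled in Python, with the caveat that this is only a sketch of the bookkeeping: Python itself still loops over every value, so there is no real speed gain here. The lane count of 16, the 8-bit clamp at 255 and the function names are all assumptions made for illustration.

```python
WIDTH, HEIGHT, CHANNELS = 1920, 1080, 3
print(WIDTH * HEIGHT * CHANNELS)  # 6220800 values in one HD frame

def brighten_one_at_a_time(values, amount):
    """General-purpose style: fetch one value, add, store, repeat."""
    result = []
    for value in values:
        result.append(min(value + amount, 255))  # clamp at 8-bit white (assumed)
    return result

def brighten_in_lanes(values, amount, lanes=16):
    """SIMD style: one 'add' is issued per group of `lanes` values.

    Returns the brightened values and how many adds were issued.
    The lane count is hypothetical.
    """
    result = []
    instructions_issued = 0
    for start in range(0, len(values), lanes):
        group = values[start:start + lanes]
        # Conceptually a single instruction applied to the whole group.
        result.extend(min(v + amount, 255) for v in group)
        instructions_issued += 1
    return result, instructions_issued

pixels = [0, 100, 250, 255] * 8  # 32 sample values standing in for a frame
scalar = brighten_one_at_a_time(pixels, 10)
vector, issued = brighten_in_lanes(pixels, 10)
print(scalar == vector, len(pixels), issued)  # True 32 2
```

Both functions produce identical results. The difference is that the first needs 32 separate “add” steps for the sample data, while the second needs only 2.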

Intel were the first to add SIMD features to a mainstream desktop processor, when they added the “MMX” set of functions to the Pentium line in 1997, first in the “Pentium MMX” and then in the Pentium II. The new MMX feature was heavily advertised, and TV ads made all sorts of colourful but vague claims about how amazing it was. Unfortunately, despite the heavy advertising campaign, the new MMX instructions were really pretty useless. The technical details aren’t important, but MMX had little impact on software. Intel learned from their mistakes and planned a completely new set of SIMD instructions, which they included with the Pentium III in 1999. Because the MMX features had proved to be a flop, Intel wanted a completely new, completely different name for their second attempt. Called SSE, for “Streaming SIMD Extensions”, the new functions were much more useful than MMX.

PowerPC chips – as used by Apple around the same time – added their own SIMD implementation, starting with the G4 processor in 1999. For legal reasons the same SIMD feature was given different names by different companies – initially referred to as “AltiVec”, Apple renamed it the “Velocity Engine” and IBM called it “VMX”. Regardless of the name, the SIMD features on the G4 processor were more elegant and powerful than Intel’s equivalent, and included features specifically designed to process images. However perhaps a bigger difference between the two competing platforms was the support and integration that Apple was able to provide, especially at the operating system level.

Directly comparing two different processors is very difficult, and comparing the G4 with the Intel Pentium III is a good example of this. Steve Jobs famously claimed that the G4 was up to twice as fast as the Pentium III (and also the Pentium 4), with on-stage demonstrations to prove it. However the benchmarks that Apple used were always carefully selected to use the G4’s SIMD features (the “Velocity Engine”, aka “AltiVec”), because that was where the biggest performance difference between the two chips lay.

Apple had invented the term “megahertz myth” in 1984, to try and persuade customers that a 4.7 MHz PC was not 4.7 times faster than a 1 MHz Apple II. Now they resurrected the term, actively demonstrating that a 500 MHz G4 was faster than a 700 MHz Pentium III, and later on that a 733 MHz G4 was faster than a 1.5 GHz Pentium 4.

At the time, the claims made by Jobs and the differences between the G4 and the Pentiums were heavily analyzed by the tech media, and argued about in various online forums, because benchmark results could vary so much depending on whether or not they used SIMD instructions.

This all happened about 20 years ago and the details don’t matter any more. The take-home point is that just as desktop computers were being used for serious video production work – the late 1990s – the most common CPUs used in desktop computers now contained a new set of features specifically designed to speed up the processing of images and video.

Intel continued to improve and extend the SSE functions in future versions of their chips, but as Apple switched to Intel processors in 2006, the days of arguing about Mac vs PC benchmarks were over.

This is part 5 in a long-running series on After Effects and Performance. Have you read the others? They’re really good and really long too:

Part 1: In search of perfection

Part 2: What After Effects actually does

Part 3: It’s numbers, all the way down

Part 4: Bottlenecks & Busses

Part 5: Introducing the CPU

Part 6: Begun, the core wars have…

Part 7: Introducing AErender

Part 8: Multiprocessing (kinda, sorta)

Part 9: Cold hard cache

Part 10: The birth of the GPU

Part 11: The rise of the GPGPU

Part 12: The Quadro conundrum

Part 13: The wilderness years

Part 14: Make it faster, for free

Part 15: 3rd Party opinions

And of course, if you liked this series then I have over ten years worth of After Effects articles to go through. Some of them are really long too!
