3rd Party Opinions

After Effects was first released in 1993, and since then it’s established a healthy ecosystem of 3rd party support. Plugins, scripts, tutorials, templates and even articles like this one are all an indication of the size and scope of the After Effects market. In this article, I’ll be reaching out to the extended After Effects world and chatting to other software developers for their input and opinions on the topic of “After Effects and Performance”.

Node points

In November last year I travelled to Melbourne for Node – Australia’s annual motion graphics conference. One of the presentations was given by the guys from Plugin Everything, and after they concluded their presentation, the audience was given the opportunity for some Q&A. Someone took the mic and asked a question along the lines of “How long would it take you to re-write After Effects from scratch?” I’m not sure what was funnier: Matt’s response that he could do it in about six months, or the panicked look on James’ face when he realized that people might think that Matt was serious.

What was very clear, however, was that the question really struck a nerve with the audience. Throughout the conference After Effects was the butt of many jokes. Roughly 600 people sat in a sold-out theatre, in a blissful pre-covid celebration of motion graphics, and giggled at random screenshots of unusual After Effects error messages punctuated by the occasional bleating sheep sound. Many of the speakers at Node referenced After Effects, with at least one presenter mentioning that although they used After Effects, they wished they didn’t have to.

An old proverb says “Never let the truth get in the way of a great story.” Node was fun, but were the jokes warranted? Or was the truth pushed aside for some cheap laughs? At the time of the conference I’d already published the first 3 articles in this series on performance, but I’d been concentrating on explaining what After Effects actually does. I hadn’t got as far as questioning how well it did it. But there was no mistaking the vibe in the air. The motion graphics zeitgeist was pretty clear when it came to After Effects, and the zeitgeist was not happy.

So the question has to be asked: “Is After Effects really that bad?”

After fourteen articles looking at what After Effects does, how CPUs and GPUs have evolved over time, and all sorts of other bits and pieces, it’s time to focus on the software itself.

FWIW

I’ve always been quite forgiving of After Effects when it comes to performance. My opinion is that there wasn’t much to complain about before the release of CS 5 in 2010. Before then, all software was limited to using 4 gig of RAM, and that was the biggest performance constraint. The 4 gig limit wasn’t Adobe’s fault, and all software faced the same restriction, so it wasn’t a case of “oh app X is much better than After Effects”. In the years immediately after 2010, the cost of multi-core CPU systems with lots of RAM was so high that only a small fraction of After Effects users would actually own hardware where multiple CPU performance would be an issue (see part 8).

BUT…

But now, ten years after being freed from the 4 gig RAM limit in 2010, the hardware landscape has changed significantly. The average computer has multiple CPU cores, RAM is cheap enough that having more than 64 gigabytes is not unusual, and the power of the latest GPUs is simply breathtaking (if you can find one in stock to buy…)

We’re not in 2010 any more.

What After Effects users want

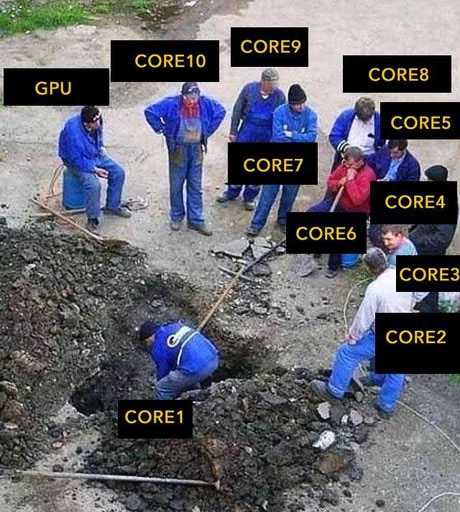

We’re at a point now where the current release of After Effects is simply not utilizing the full potential of the average machine. Exactly what the average machine is doesn’t need to be precisely defined, but to put it simply: After Effects does not take full advantage of every CPU core available in multi-core machines. And it certainly doesn’t utilize a GPU to the same extent as 3D software does.

And for many users, that’s the heart of the matter. In 2010 2D and 3D were very different worlds. Motion graphics designers in 2010 weren’t buying computers based on their 3D rendering performance. But now, 3D animation has become tightly integrated with motion graphics. There’s all sorts of 3D software out there that will utilize every CPU core and every GPU that your system has, and many motion designers are running that software alongside After Effects.

In 2010, you’d have to spend an awful lot of money to build a machine that had more theoretical power than After Effects actually used, but even now the most basic desktop machine will have CPU cores sitting idle when After Effects renders heavy comps.

So if we quit living in the past, and look at the top feature requests on the AE uservoice forum in 2020, then it’s pretty clear that users want After Effects to take advantage of all those CPU cores. As detailed in part 8, the software term is multi-threading. In a time where you can buy a CPU with 64 cores (and presumably more will come in the future), users expect After Effects to be able to utilize all of those cores. And as we’ve covered in many of the previous articles here, currently it doesn’t. Unlike the situation in 2010 where ALL software was limited to 4 gig of RAM, when it comes to graphics software in 2020 After Effects is the odd one out.

On a similar note, users are also asking for GPU acceleration. While Adobe have been quietly adding GPU acceleration to individual plugins over the past few years, buying the most expensive GPU you can get your hands on doesn’t mean After Effects will benefit. In many cases, there’s no performance difference between old GPUs and the very latest ones.

So if After Effects users are so vocal about wanting support for multi-core CPUs and GPU acceleration, then what’s the hold up?

3rd party help

I’m not a software engineer, and although I’m interested in the latest geek news I’m hardly qualified to comment on the technical quality of an application like After Effects. So over the past few months I’ve reached out to a few people for their help – some After Effects developers, other software developers, and even an old high-school friend who’s just really bloody clever. I wanted their help and insight into the current state of After Effects, and whether or not it’s really fair to blame Adobe for slow software.

I do not think it means what you think it means

Way back in part 1 of this series, I asked the question “What is performance?” The word “performance” means a lot of different things to different people. In that first article, I even itemized ten different aspects of After Effects which I felt contributed to the overall sense of “performance”, including the time taken to open After Effects as well as the overall user interface responsiveness.

Looking back at part 1, which is nearly a year old, I can see that all of the ten items I list are related to speed. For many people this makes sense – when we talk about performance, we’re usually talking about speed. My point in part 1 was that there were different types of speed, not just final rendering speed but also things like the time taken to open After Effects, import footage items, or to save a project. I’m glad to report that in the months since part 1 was published, Adobe have already improved the time it takes to open After Effects.

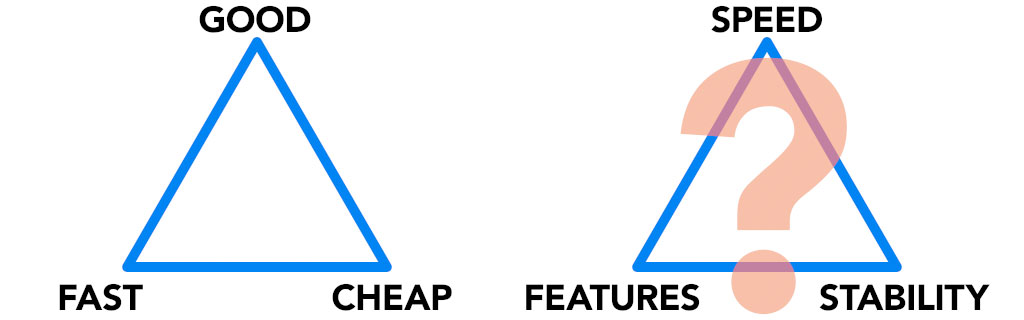

After speaking to a bunch of software developers, I learned that speed is only one side of software performance. There are two others, and all three are related: overall stability, and new features.

Stability is pretty clear – how often are you prepared to accept an application crashing? Or bugs? Would you be happy with an application that was “faster” but randomly crashed once an hour?

Features might not be something you think of as being related to performance, but new features can improve productivity. Productivity isn’t the same type of performance as raw rendering speed, but it’s still performance.

After interviewing several software developers, it’s become clear that the notion of “performance” is a balancing act between speed, stability and features. But are these qualities similar to the famous “good, fast, cheap” triangle, where you can only have two, and not all three?

It was interesting to reach out to professional After Effects developers, because several of them were initially happy to help out, before changing their minds. In some cases this was because they discovered that it would violate the terms of their NDAs with Adobe, and in other cases they simply didn’t want to upset anyone at Adobe. A few agreed to help out as long as they could remain anonymous. I found this slightly surprising, because this was never intended to be some sort of After Effects character assassination, but I can understand the notion that you don’t want to bite the hand that feeds you.

Meet the team

While some of the people I contacted preferred to stay anonymous, a few were happy for their opinions to be public.

Chris Vranos is relatively new to the After Effects plugin scene, but he’s certainly made a bang with both “Composite Brush” and “Lockdown”, two incredible plugins that have quickly established a large fan base.

Antoine and Nicolas are from Autokroma, who’ve created the popular AfterCodecs plugin as well as the BRAW Studio plugin, which allows native Blackmagic files to be imported into Premiere and After Effects.

Last year, a team from Mainframe in England made a huge splash in the motion graphics world with their new app “Cavalry”. Cavalry was the first major new motion graphics app to be released in, well, a very long time. Chris Hardcastle and Ian Waters were happy to be interviewed about creating Cavalry – an app which many users see as an alternative to After Effects.

Andrew Bromage is an old high-school friend of mine, and is the odd one out here because he’s not a part of the After Effects world. He’s just one of those people who’s really bloody clever. Andrew’s been a professional software developer for a long time, and it’s always been great to pick his brains about technical matters. Andrew’s worked with 3D graphics and high-performance clusters in the past, and is a great font of technical wisdom and funny quotes.

He’s not the Messiah, he’s a VFX artist who can code

Here’s a thought exercise for you: Try to imagine a piece of graphics software that’s over 20 years old, produced by one of the largest software companies on the planet. For a number of years now, its huge global user base has been growing increasingly dissatisfied with the lack of development. Visualize users all around the world asking for features such as support for multiple CPU cores, and GPU acceleration. Let’s try to imagine that one feature in particular is especially slow, unstable and basically outdated. The user base is frustrated by paying annual subscriptions for updates that they feel are not worth it, and specific requests for GPU acceleration are either ignored or dismissed as being too difficult, while the software company continues to post huge annual profits.

Now let’s imagine that one single VFX artist, working in his spare time, single-handedly writes a brand new plugin that does everything the users have been asking for and more. This brand new plugin is incredibly powerful, super fast, fun to use and most of all it runs on multi-core CPUs and is GPU accelerated. After years of the global software company saying it was impossible, out comes a plugin that is fast, responsive, feature-rich, all written by someone who was still working full-time as a VFX artist.

There’s good news and bad news about this thought exercise. The good news is that it’s true, but the bad news is that I’m not referring to Adobe and After Effects. The software package I’m referring to is Autodesk’s 3D Studio Max, specifically the “Particle Flow” feature, and the new plugin is TyFlow.

For years Autodesk included a particle simulation plugin with 3DS Max called Particle Flow. It is, as I alluded to above, relatively slow, sluggish and unstable. From all reports Autodesk ignored requests to improve it, with some users suggesting that Autodesk claimed that GPU acceleration would simply be too difficult.

Last year, visual FX artist Tyson Ibele released a new plugin for 3DS Max called “TyFlow”. It did everything that Particle Flow did, and much more. It was fully multi-threaded and therefore very fast to use. And Tyson developed it by himself, in his spare time between regular VFX work.

In the studio where I was working, Tyson’s name was mentioned with a reverence usually reserved for people like Steve Jobs, Einstein and God. It wasn’t just that TyFlow was a great plugin. It is, but that’s not the point. The real point is that one guy in his bedroom was able to achieve something that one of the world’s largest software companies had not.

The bolt of lightning that was TyFlow raises the question: could the same thing happen with After Effects?

I didn’t know, so I approached Tyson for some insight as well.

Virtual Chat

Over the past few months I collected the thoughts of everyone involved, and I’m presenting them here grouped roughly by topic. Although this is the easiest way to read everything, it’s probably worth pointing out that everyone was interviewed individually.

The first thing I wanted to do was to check that what I’ve written in previous articles was accurate. One recurring topic is the evolution of desktop CPUs, especially when Intel retired the “Pentium 4” and introduced the Intel “Core Duo” in 2006. The new multi-core architecture in the Core line of CPUs is what I refer to as a “generational change” (all of this is covered in part 6). From this point, increased CPU performance would come by adding more CPU cores, not by increasing their clock speed. Part 8 in this series begins with a bunch of quotes about how difficult it is to write software for multiple CPUs (and multi-core CPUs), but they date back over ten years.

So was the launch of the Core Duo in 2006 really that significant?

Andrew: “It signified the demise of Moore’s Law giving you a freebie. There was a time from about the 60s to the mid noughties where every time there was an increase in transistor density, you got an increase in everything. You got an increase in speed, in RAM, in pipelining, and so you could now waste transistors by adding sequential tasks on the CPU. The rise of multi-core CPUs meant the end of that freebie.”

Anon AE: “The speed increase each year really diminished. We’re seeing leaps and bounds made in regards to GPU hardware but not much at all with CPUs. Power efficiency is always getting better but processing increases have slowed dramatically.”

Let’s pause for a second and clarify what Andrew is saying. During our chat, Andrew mentioned Moore’s Law a number of times, so it’s worth a quick refresher. The Moore in question is Gordon Moore, a co-founder of Intel. Moore’s Law is often quoted incorrectly, with the most common misquote being something like “CPUs double in speed every two years”. That’s not what he originally said. The actual quote is a lot longer, but the most common interpretation is that “the number of transistors on a chip will double every two years, for the same price.”

Andrew is confirming the point I made in parts 5 & 6 – that when CPU manufacturers increased the clock speed of their products, software automatically ran faster without programmers having to do anything. Software developers were given a “free ride” by faster CPUs, and from the moment that CPUs were invented up until the Core Duo in 2006, clock speeds were constantly being increased. As CPU manufacturers added more and more transistors to the chips (doubling every two years, as Moore predicted), there were so many transistors available that they could be “wasted” by adding more and more new features to the CPU, such as FPUs and vector units (this is what Andrew meant by “sequential tasks”, all of this was explained in part 6).

When the Pentium 4 reached the end of its life, and was replaced by the Core line of CPUs that we still have today, Moore’s Law didn’t stop. The number of transistors on subsequent CPUs has still continued to increase as predicted. It’s just that those transistors are no longer being used to add more and more new features to a single CPU. Now, they’re used to fit multiple copies of a CPU onto the one chip. As I write this, AMD have a desktop chip available with 64 individual CPU cores.

So having been reassured that the introduction of the Core Duo really was a generational change (Andrew referred to it as the “commoditization of multiprocessing”), the next step is to look at how to utilize the power of these newer CPUs.

In previous articles, I’ve frequently commented that writing software to efficiently utilise all of those cores is very difficult. But just how difficult?

Chris Vranos: “Think of it like a bunch of people building a pyramid. If you just have one person (1 core) doing it, you just tell them to stack the blocks, then paint the blocks, in order, as fast as they can, and they’re done.

In some abstraction, giving a human an order is a lot like giving a CPU an order. But now let’s say you have 20 people. Well now you have to tell them to pick up the blocks in a certain order, and place them in a certain order without each trying to put the blocks in the same place. Amidst placing the blocks, you may need to paint them as you go. You might need to tell 19 of them to STOP and wait until 1 person has finished painting the previous row of blocks. Either that or maybe you tell them all to drop the blocks they’re holding, go grab paint brushes, and help him paint? Or do you tell each of them to place 10 blocks, then paint 10 blocks? No matter how you try to organize these people, you’re wasting time as they’re either waiting for each other, or switching between one task and another.

Working with CPU cores is like working with the dumbest people you’ve ever met. They just follow your orders exactly as you give them, as if they’re blindfolded and don’t know why they’re doing it. If your instructions aren’t perfect, they’re going to trip into each other and destroy everything. So while this is a simplistic explanation, you’re going to get a lot more bugs and inefficiency with multi-core code. It’s much harder than single core programming.”
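Chris’s pyramid can be turned into a very small Python sketch. This is only an illustration of the general idea, not anything from After Effects: the function name and the numbers are made up, and the “pile” is just a counter that every worker shares. The lock is the instruction that makes everyone else stop and wait while one worker updates the pile.

```python
import threading


def build_pyramid(workers: int, blocks_per_worker: int) -> int:
    """Each worker places blocks on one shared pile; return the final block count."""
    placed = 0                     # shared state that every worker touches
    pile_lock = threading.Lock()   # only one worker may update the pile at a time

    def worker() -> None:
        nonlocal placed
        for _ in range(blocks_per_worker):
            # Without the lock, two workers could read the same value of
            # `placed` and both write back value + 1, silently losing a block.
            with pile_lock:
                placed += 1

    threads = [threading.Thread(target=worker) for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()                   # wait until every worker has finished
    return placed


if __name__ == "__main__":
    print(build_pyramid(workers=20, blocks_per_worker=1000))
```

The result is correct because of the lock, but the lock is also where the time goes: while one worker holds it, the other 19 are standing around waiting, which is exactly the inefficiency Chris describes.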

One of the reasons I chatted to Andrew was because he wasn’t tied to After Effects, he’s just a mate from high school. I can ask him simplistic questions without feeling silly. The problem I faced was that I was getting mixed messages about the ease of programming for multiple CPUs. Even the most basic Google searches revealed loads of articles suggesting it was very difficult. And yet some of the people I spoke to suggested otherwise:

Ian: “It’s very easy these days to work with multiple CPU cores. There were certain nodes that we worked with where we changed one line of code, and suddenly everything you do is spread out across all the cores available. It used to not be easy, now it’s very very easy.”

Tyson: “Once you get the hang of it, multi-CPU programming is quite straightforward. And the fact that particle simulations tend to involve modifications to large numbers of separate elements means it is ideal for parallel processing.”

So here’s the deal. Writing software that utilizes multiple CPUs is called parallel processing, because multiple CPUs process the data in parallel. This is different to the traditional way software is executed, which is referred to as sequential processing. Parallel processing refers to the overall concept of running software on multiple processors. There are a number of different approaches to parallel processing, one of which is multi-threading – where the core software itself has multiple separate parts running simultaneously.

To summarise a few decades worth of computer science, some types of tasks are more suited to parallel processing than others. When you think of everything that a computer is used for, only some of those things will benefit from multi-core CPUs. And even the tasks that can be sped up with parallel processing don’t keep increasing their performance with more CPUs forever. In other words, if you have a task that takes 1 minute to process on 1 CPU, you can’t expect it to take 30 seconds if you have 2 CPUs. It might only speed up slightly, maybe taking 45 seconds. And adding a 3rd CPU might not make any difference at all. It depends on what you’re doing.
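The arithmetic behind that example can be sketched with a standard textbook model known as Amdahl’s law, which I’m bringing in here purely as an illustration. It assumes a task splits into a part that can be shared between CPUs and a part that can’t. The function name and the 50% figure below are assumptions chosen to match the 1-minute example, not measurements of any real software.

```python
def run_time(single_cpu_seconds: float, parallel_fraction: float, cpus: int) -> float:
    """Estimate run time when only `parallel_fraction` of the work can be shared.

    The serial part always takes the same time; only the parallel part
    is divided between the available CPUs.
    """
    serial_part = single_cpu_seconds * (1.0 - parallel_fraction)
    parallel_part = single_cpu_seconds * parallel_fraction / cpus
    return serial_part + parallel_part


if __name__ == "__main__":
    # Assumption: half of a 60 second task can run in parallel.
    for cpus in (1, 2, 3, 4, 64):
        print(cpus, "CPUs:", round(run_time(60.0, 0.5, cpus), 1), "seconds")
```

With half the work stuck in the serial part, a second CPU takes the task from 60 seconds to 45, and no number of extra CPUs can ever get it below 30. How much of a real task can be shared is the part that “depends on what you’re doing”.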

Chris Vranos: “I’d say if you’re looking at the total speed, I’d rather have a magical fantasy CPU that is just one core, but moves as fast as all the other cores combined. There’s complication each time you break out instructions to multiple cores. After Effects splits instructions between the UI, and the render, which is a fairly logical way to do it. But once you get to 4 cores or more, at that point it’s not going to be as easy to just dedicate a core to a separate task, and instead you’re going to want all cores working on the same task.

Luckily for video, there are a few relatively simple ways to split it up. You can send a frame to each core for example. But in short, you lose efficiency going from single core to multi-core, and the only reason we’re going to multi-core is because we don’t have the ability to make single cores that much faster.

You can expect multi-core to be faster, but it won’t be a linear increase per core added. In other words, 2 cores won’t be twice as fast as one core of the same speed.”

Ian: “There are restrictions with thread management for certain things, like the UI. The UI should really only be running on a single thread. You can have 64 cores, but it won’t speed up the UI. It will only be as fast as your fastest core.

For certain problems, scaling up to multiple CPU cores is fabulous and wonderful – particle systems for example. Using all 64 cores would be great for a flocking simulation. But if I’ve got a node in Cavalry that’s just doing simple addition then I can’t spread that across 64 cores, because it’s just simple addition. It depends on the problem you’ve got, and sometimes just throwing cores at it doesn’t help, and in some cases it can even make it slower.”

But there’s good news for digital animators. There’s one particular type of task that is unusually well suited to parallel processing. And that’s 3D rendering. In fact, 3D rendering is about the only thing that scales across multiple CPUs with almost perfect efficiency – it does keep getting faster with more and more CPUs. But it’s the exception to the rule. When you try to think of every conceivable thing that computers are used for – web browsing, email, spreadsheets & databases, playing music, playing games and so on – none of them will continually get faster and faster if you keep adding more and more CPUs…

…except 3D rendering!

It’s so unique that computer scientists call it “embarrassingly parallel”. Particle simulations, which often go hand-in-hand with 3D rendering, are another very niche example of something that scales very well with multiple CPUs.

Andrew: “What Moore’s law has enabled, is thinking about problems in terms of what is “embarrassingly parallel”. A good example is ray tracing. Everyone’s going from scanline rendering to raytracing now. Each pixel, or sample, is independent – that’s something that we can throw an awful lot of CPUs at.

When it comes to video processing, you can make it embarrassingly parallel if you want to. If you have enough CPUs you could assign a pixel to each one. In practice you wouldn’t do that, you’d assign pixels in a block, but the point is that it’s all to do with how you design the software to begin with.

Even something as simple as a rotation – let’s say you have a rectangular video and you just want to rotate it. There was a whole load of research done in the 1980s on how to rotate an image efficiently, and get it all filtered correctly. You don’t have to do it that way any more. In theory, each pixel can be processed independently. You can just assign a CPU to a small block of pixels and get them working independently. Multi-core CPUs have changed the way we think about that, and video is a good example of something that has the potential to benefit.”
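Andrew’s idea of handing each CPU its own block of pixels can be sketched in a few lines of Python. This is a minimal illustration under some loud assumptions, not how After Effects or any real renderer does it: the function names are invented, it only handles a 90-degree clockwise turn with no filtering at all, and it uses threads from Python’s standard library, which show the structure but typically won’t deliver real multi-core speed in standard CPython. Swapping `ThreadPoolExecutor` for `ProcessPoolExecutor`, which offers the same `submit` call, is the usual way to get separate cores involved.

```python
from concurrent.futures import ThreadPoolExecutor


def rotate_rows(image, first_row, last_row):
    """Compute output rows first_row..last_row-1 of a 90-degree clockwise turn.

    Each output pixel is read straight from one input pixel, so no block
    needs to know anything about what the other blocks are doing.
    """
    height = len(image)
    return [[image[height - 1 - c][r] for c in range(height)]
            for r in range(first_row, last_row)]


def rotate_parallel(image, block_height=2, workers=4):
    """Split the output into blocks of rows and hand each block to a worker."""
    out_height = len(image[0])  # the output is as tall as the input is wide
    starts = range(0, out_height, block_height)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [
            pool.submit(rotate_rows, image, s, min(s + block_height, out_height))
            for s in starts
        ]
        # Collect blocks in submission order so the rows stay in sequence.
        return [row for f in futures for row in f.result()]


if __name__ == "__main__":
    picture = [[1, 2, 3],
               [4, 5, 6]]
    for row in rotate_parallel(picture, block_height=1):
        print(row)
```

Because the blocks never touch each other’s pixels, there are no locks and nobody waits, which is what makes this kind of work so friendly to multiple cores.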

The difference in how well some tasks lend themselves to parallel processing is why you have different opinions about how easy it is. If you’re talking about one of the very few tasks that is “embarrassingly parallel”, like 3D rendering and particle simulations, then the software development tools are relatively mature. There have been increasing developments in the best ways to write more conventional software for parallel processing, but only in recent years.

A major area of development has been the emergence of what is called “lock-free” programming, which aims to eliminate the classic problem where some parts of a multi-threaded program are “locked up”, waiting for another thread to finish first. The theory behind lock-free programming dates back decades, and most of the research comes from developing telephone exchanges – who knew, right? But once multi-core CPUs began appearing in games consoles like the Xbox 360 and PlayStation 3, and then regular Intel CPUs in the mid 2000s, there was a huge surge in research and development on more common desktop platforms. Still, many books, courses and articles on lock-free parallel processing are less than ten years old.

Anyone writing new software from scratch has the benefits of the latest research and technology, and parallel processing is one area that continues to advance quickly. By starting with an initial design that’s suited to parallel processing, and adopting the most recent software development toolsets, anyone writing a completely new application can utilize multiple CPUs much more easily than in the past.

Andrew: “Moore’s law has allowed us to think in these terms. People with a code base before multi-core happened weren’t thinking in those terms, and it’s very hard to retro-fit, especially when you have a huge plug-in community. It’s easier now. The problem was that if you had an existing application back in 2005, like a word processor, then making the old code multi-core is hard, if not impossible.”

Andrew has raised two hugely significant points. Firstly, that retro-fitting an existing software app to utilize multiple CPUs is difficult, if not impossible. Andrew uses 2005 as an example because it’s the year before Intel released the Core Duo. But in 2005 After Effects was already 12 years old. Andrew’s second point is that retro-fitting code that wasn’t designed for parallel processing will impact on plug-ins and other 3rd party software. This was something I hadn’t thought of before.

So instead of discussing the difficulties of multi-core CPU programming from a general perspective, what specific problems are Adobe facing?

Andrew: “Being the market leader is a great position to be in but also not a great position to be in. Being the platform that everyone relies on and uses is great, but it limits how fast you can move.

Your Windows machine supports the same API that I was using on my summer job in 1988. To some extent, Windows still supports DOS. Backwards compatibility limits what you can do. No-one ever used 80286 protected mode, yet your CPU supports it for backwards compatibility. They’ve got to. Adobe is in a similar situation here.

Adobe have painted themselves into a corner. They are at the mercy of their 3rd party developers. They can’t move fast because a lot of other companies depend on Adobe for their livelihood, and Adobe depend on them for their market share. There’s only so much they can do.

Adobe have resources, but they also have corporate interests. If Adobe have to choose between re-writing their software to utilize 64-core CPUs, or keeping their market share, which one do you think they’re going to pick? If they’d spent the intervening 20 years keeping their core product up to date, then it wouldn’t be a choice. But I would imagine that their plugin API has evolved more slowly than the main application, because plugins are part of their eco-system and if they change it too frequently that’s going to annoy a lot of people.”

This is something that I hadn’t considered before, and it was interesting to hear the thoughts of someone who isn’t at all involved with the After Effects world. Andrew’s point is that when you’re looking at a piece of software like After Effects, that’s been around for over 25 years, then it’s not enough to think of it as a single software application. It’s more of a platform. When looking at the topic of After Effects and Performance, we have to look at the overall After Effects ecosystem. That includes a huge range of 3rd party support, and inter-operability with other software. As Andrew says, Adobe and its developers rely on each other. After Effects wouldn’t be what it is without the incredible range of 3rd party plugins and scripts available for it, and those plugin developers rely on Adobe as the source of their livelihood.

“After Effects isn’t just a piece of animation software, it’s a platform”

-Andrew Bromage

In order for 3rd party developers to write plugins and scripts for After Effects, Adobe maintain a software development kit, which revolves around the After Effects API. An API, or “Application Programming Interface”, is like a language that After Effects uses to talk to plugins. If you want to write plugins for After Effects, you’re programming them using Adobe’s API.

As I mentioned in part 2, one of the major features of After Effects is its backwards compatibility. If there are major changes to the API, then backwards compatibility with existing plugins can break. Changing the API is a big deal. It means all software developers have to update the code for their plugins, which is no small feat for developers with a large range of products. Customers then have to update their plugins and make sure they have the latest version. Not to be overlooked is the significant amount of effort that needs to go in to communicating these updates to users, and dealing with the increase in support requests from people who experience compatibility issues.
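To make the idea of a breaking API change a bit more concrete, here is a toy sketch. Everything in it is hypothetical: the class names, the version numbers and the render calls are invented for illustration and have nothing to do with Adobe’s actual SDK. It imagines a host application that changes how it asks plugins to render, so that it can request pieces of a frame separately.

```python
HOST_API_VERSION = 2  # hypothetical: bumped when the host's rendering contract changed


class OldDimPlugin:
    """Written against the imaginary version 1 contract: whole frame in, whole frame out."""
    api_version = 1

    def render(self, frame):
        return [pixel // 2 for pixel in frame]


class NewDimPlugin:
    """Rewritten for the imaginary version 2 contract: the host requests slices,
    so it is free to ask for different slices from different threads."""
    api_version = 2

    def render_region(self, frame, start, end):
        return [pixel // 2 for pixel in frame[start:end]]


def load_plugin(plugin):
    """Refuse plugins built for a different contract rather than guess how to call them."""
    if plugin.api_version != HOST_API_VERSION:
        raise RuntimeError(
            f"{type(plugin).__name__} targets API v{plugin.api_version}, "
            f"host needs v{HOST_API_VERSION}: plugin must be updated"
        )
    return plugin


def host_render(plugin, frame):
    """The host renders a frame in two halves using the version 2 call."""
    middle = len(frame) // 2
    return (plugin.render_region(frame, 0, middle)
            + plugin.render_region(frame, middle, len(frame)))


if __name__ == "__main__":
    print(host_render(load_plugin(NewDimPlugin()), [10, 20, 30, 40]))
    try:
        load_plugin(OldDimPlugin())
    except RuntimeError as error:
        print(error)
```

The old plugin does exactly the same job as the new one, but the host can no longer talk to it. Multiply that by every plugin a studio owns and it’s easier to see why nobody changes an API lightly.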

This also raises some difficult questions for software companies. Updating existing products to a new API can take a significant amount of time, and obviously this has a cost attached to it. Do plugin developers update their products and make them available to existing customers for free, or do they pass the costs on? Customers don’t want to have to pay again for products they already own, but at the same time software developers can’t be expected to work for free. There’s no easy solution.

To put it simply, changing the API is a big deal.

The point of this is that Adobe can’t necessarily add major new features, such as support for multi-core CPUs, without breaking all of the existing plugins that are available. As much as After Effects users want new features, they definitely don’t want to have to update all of their plugins – and potentially pay for updates – every time there’s a new version of After Effects.

Andrew (who hasn’t used After Effects) asked me if I knew how often Adobe had made major updates to the After Effects API for plugin developers. Despite the fact that After Effects itself is up to version 17, he was speculating that Adobe would not have updated the API nearly as often. I asked around to see who knew, and there seem to have only been two major API changes that required updated plugins – one to include 64 bit support with CS 5, and the other with CC 2015, to incorporate Adobe’s first steps at multi-threading.

This would tend to support Andrew’s comment that large developers like Adobe can’t move too fast, because they risk alienating the 3rd party developers they rely on. And if every new release of After Effects requires users to update their plugins (possibly for a price), then the users will complain either way.

Chris Vranos: “I think users want it NOW, not at a reasonable pace. After Effects is pretty amazing in that you can open a project made 10 years ago in an ancient version, and it STILL opens and renders correctly. If you work in broadcast, where templates and graphics may be needed for years, this is 100% necessary.

However that presents huge complications in development, because it takes a ton of work to overhaul the performance of a program, changing how it renders, but keep backwards compatibility so it renders exactly the same visually.

I think Adobe is doing a much better job than the public gives them credit for. They could remake After Effects, blazing fast, in a year or two if they wanted to, but they’d have to scrap backwards compatibility to do it. Everyone would scream and cry in horror as they lost all their old projects. Adobe is making the reasonable decision.”

Both Chris and Andrew are speculating that any major changes to After Effects will result in breaking the backwards compatibility that After Effects has. Andrew thinks that if Adobe add significantly improved multi-threading in the future, such a change will require a major update to the plugin API, which in turn will require all developers to update their existing plugins. Because this is such a massive undertaking for everyone involved – including all After Effects users who’ll need to update too – presumably Adobe will wait until they have a suitably large update in store before making such a big change. But judging from the grumblings in the motion graphics community, users will be happy to update their plugins if it means they get improved multi-threaded performance.

Enough about the CPU, on to the GPU

As the general software industry has slowly come to terms with dealing with multi-core CPUs, the GPU has really come to the forefront of 3D rendering. The latest GPUs are incredibly powerful. As detailed in Part 11, modern GPUs are no longer limited to rendering 3D graphics for games. Programming languages like nVidia’s CUDA, and Open CL, enable a much broader range of software to be processed by the GPU. Does that mean we should all be asking for After Effects to be GPU accelerated, instead? And are they mutually exclusive – for software developers, is it a choice between programming for multiple CPUs vs the GPU?

Ian: “Something we discovered with Mash is that just putting something on the GPU does not automatically make it faster, regardless of how fast the GPU is. There’s quite an overhead in shepherding the data to the GPU and then getting it back again. It means that it can be a lot cheaper to do certain calculations on the CPU, as opposed to the GPU, because of that overhead.”

Andrew: “For software developers, a CPU is a CPU, you have the same CPU on Macs, Windows, and Linux. Unless it was an obvious GPU problem, like ray tracing, I’d personally favor the CPU. Everyone’s got more or less the same type of CPUs in their machines. You can assume that an Intel-esque CPU is an Intel-esque CPU. If it supports the instruction set then that gives you a set of guarantees. It’s reasonably open, everyone’s programming for the same instruction set, for the same registers, for the same capabilities.

But there are competing GPUs, and they’re all different. And they’re different in subtle ways. One of the biggest problems for games developers is driver incompatibility. They have to test games on every sort of hardware that their game might run on. It’s why consoles are much easier to work with, the hardware is fixed.

If you care about performance for customers, the wide variety of hardware you have to deal with is a real pain. If it’s just for yourself, and you’re only programming for the specific GPU you have in your machine, then that’s OK. But if you’re working on something to distribute then that’s a huge problem you need to deal with.”

Chris Vranos: “GPUs are a total mess, but this problem is not unique to animation. The funny thing I first noticed about game development is that a $400 console often seriously outperforms the same game on a $2,000 PC. I came to understand later that when a game is made for a console, it’s targeted specifically to that hardware, and has maximum efficiency, using all the hardware to its fullest potential. But when you develop for an unknown PC, you just have to play it safe and just make sure it’ll run. You can’t put too much effort into targeting specific hardware that not all users have.

So for us, we do as much as possible on the CPU, since CPU instructions are very easy and standardized to send to any CPU. We do use the GPU, but we only use standardized calls that work on all types of graphics cards. It’s too much maintenance to write code for nVidia and AMD separately, so often times our products are much slower than they could be. This is a necessary evil because our team is small, and we would be re-writing and testing every time a new GPU came out.”

Andrew: “The one area where Macs and Windows are really different is the way you directly program the GPU. Apple uses Metal, and if you’re doing GPU programming for a Mac you write it in Metal. If you’re doing it for Windows you’re using Direct 3D. If it needs to run on Linux you use Open GL and hope for the best. You could use CUDA but then you need to hope it’s running on the right hardware.”

Ian: “Working with the GPU on Mac and Windows, there are some differences there. We’re somewhat abstracted from that. They both cost us time for different reasons. Thankfully we don’t have too much trouble. We don’t program directly to the GPU, we program to an intermediate language that then gets sent out to whatever backend requires it, which sends things to the drivers. If we had to deal with that stuff directly, then Cavalry would have practically no features.”

Anon AE: “Currently we don’t have issues with cross platform CPU code, but this may change soon due to Mac based ARM machines. In regards to GPU, this is a real pain. Initially, the AESDK (After Effects Software Developers Kit) came with a single GPU template that ran on Open GL. But because Apple has been deprecating Open GL, Adobe has provided their own updated framework but it’s not cross platform. If you want it to run on Windows you have to write your code for Open CL (and/or CUDA for nVidia hardware). On Mac, both these frameworks are deprecated and will soon be phased out completely in favour of Apple’s own Metal platform. So developers will need to know at least two GPU frameworks if they want their GPU code to run on Windows and Mac. This is all due to Apple, not Adobe. Microsoft is so chill in this regard, as they have their own framework (DirectX) but they don’t force it down anyone’s throats.

GPUs have made certain tasks much more accessible to programmers. Since no 3D transform functions are provided in the AESDK, developers must implement their own CPU based functions, or use the GPU which is much easier. With GPU architecture being much more suited to certain pixel processing tasks, some effects that have previously been prohibitively expensive on the CPU have now been made possible.”

Tyson: “The biggest challenge I’ve faced when writing single-GPU software is just how difficult it can be to wrap your mind around GPU programming paradigms. Interfacing with a GPU is quite different than a CPU, and trying to debug complex GPU kernels can take a long time. Instead of a handful of threads operating completely independently (in a CPU), you’re dealing with thousands of threads organized into blocks and warps which all need to be properly synchronized, directed to use shared memory, etc. Even the simplest GPU kernels can require a ton of work to debug and optimize.”

Andrew: “Compared to CPUs, the way we currently code for GPUs is rudimentary. It’s the one thing which is vendor dependent and OS dependent. There are technologies which are emerging to address the problem, such as ISPC. The way people are programming for GPUs today is the way people were programming for multi-core CPUs in 2004, before they were commoditized. It’s a different way of thinking. Lots of people do it because we’re starting to think along those lines, but the cross-platform tools aren’t really there yet.”

Tyson: “It’s not as simple as just flipping a switch in order to use a GPU over a CPU. I’m not sure if a GPU is ideal for image/video processing in all cases, due to VRAM limitations and the computational cost of transferring huge numbers of uncompressed images to and from the GPU as the data is manipulated. For example, if I’m working with 4k uncompressed frames, it may be faster to process each frame locally on a multi-CPU system, than to transfer each frame to a GPU to be manipulated and then transfer it back in order for the result to be stored in a playback cache. I guess each attempt to optimize processing with a GPU would need to be benchmarked accordingly.

For example, one bad situation to avoid that would cripple performance is to mix CPU and GPU effects all the time, because the pixels have to be transferred between CPU RAM and GPU RAM each time (a GPU effect needs to read pixels from the GPU RAM and a CPU effect needs to read pixels from your usual RAM). It could lead to a slower result just because the two are too much intertwined in the project.”

Anon AE: “Since many effects are now GPU accelerated, it’s a waste that each effect’s rendered result has to go back to the CPU, then back to the GPU for the next GPU effect applied. If effects could send their GPU buffers without going back through the CPU each time, this would speed things up tremendously. Of course this only applies to a GPU effect followed by another GPU effect. And not all effects are suitable candidates for GPU acceleration.”

The issue of bandwidth has raised its head, something which we covered in part 4. The problem with CPU vs GPU is amplified when you need to combine processing between them. Certain types of rendering lend themselves to GPU acceleration, other types to CPU. But shuffling data between the two incurs a very significant performance hit. Even if one effect in isolation renders faster on a GPU than on a CPU, combining that effect with one that renders using the CPU may be slower overall than if they were both rendered on the CPU, together.
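The cost of mixing CPU and GPU effects can be sketched with a rough model. Everything in it is an assumption made for illustration: the bus bandwidth, the per-effect render times and the `Effect` class are hypothetical, and none of it is measured from After Effects or from real hardware. The model is also optimistic, in that consecutive GPU effects keep the frame on the GPU, which (according to the comments above) is not what currently happens.

```python
from dataclasses import dataclass

# All numbers here are hypothetical, chosen only to illustrate the trade-off.
FRAME_BYTES = 3840 * 2160 * 4 * 4      # one 4K frame, RGBA, 32-bit float per channel
BUS_BYTES_PER_SEC = 8e9                # assumed effective CPU<->GPU transfer rate


@dataclass
class Effect:
    name: str
    cpu_ms: float   # assumed render time on the CPU
    gpu_ms: float   # assumed render time on the GPU


def transfer_ms() -> float:
    """Time to move one frame across the bus in one direction."""
    return FRAME_BYTES / BUS_BYTES_PER_SEC * 1000


def chain_ms(effects, devices) -> float:
    """Total time for a stack of effects, each run on 'cpu' or 'gpu'.

    The frame starts and ends in main memory, so every switch between
    devices costs one transfer.
    """
    total, location = 0.0, "cpu"
    for effect, device in zip(effects, devices):
        if device != location:
            total += transfer_ms()
            location = device
        total += effect.gpu_ms if device == "gpu" else effect.cpu_ms
    if location != "cpu":
        total += transfer_ms()   # bring the result back for caching or saving
    return total


blur = Effect("blur", cpu_ms=20.0, gpu_ms=5.0)     # hypothetical GPU-friendly effect
grain = Effect("grain", cpu_ms=12.0, gpu_ms=12.0)  # hypothetical effect run on the CPU

all_cpu = chain_ms([blur, grain, blur], ["cpu", "cpu", "cpu"])
mixed = chain_ms([blur, grain, blur], ["gpu", "cpu", "gpu"])
print(f"all CPU: {all_cpu:.1f} ms, mixed: {mixed:.1f} ms")
```

With these assumed figures the mixed stack comes out slower than the all-CPU stack, even though the blur on its own is four times faster on the GPU, because the frame crosses the bus four times.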

Identity crisis

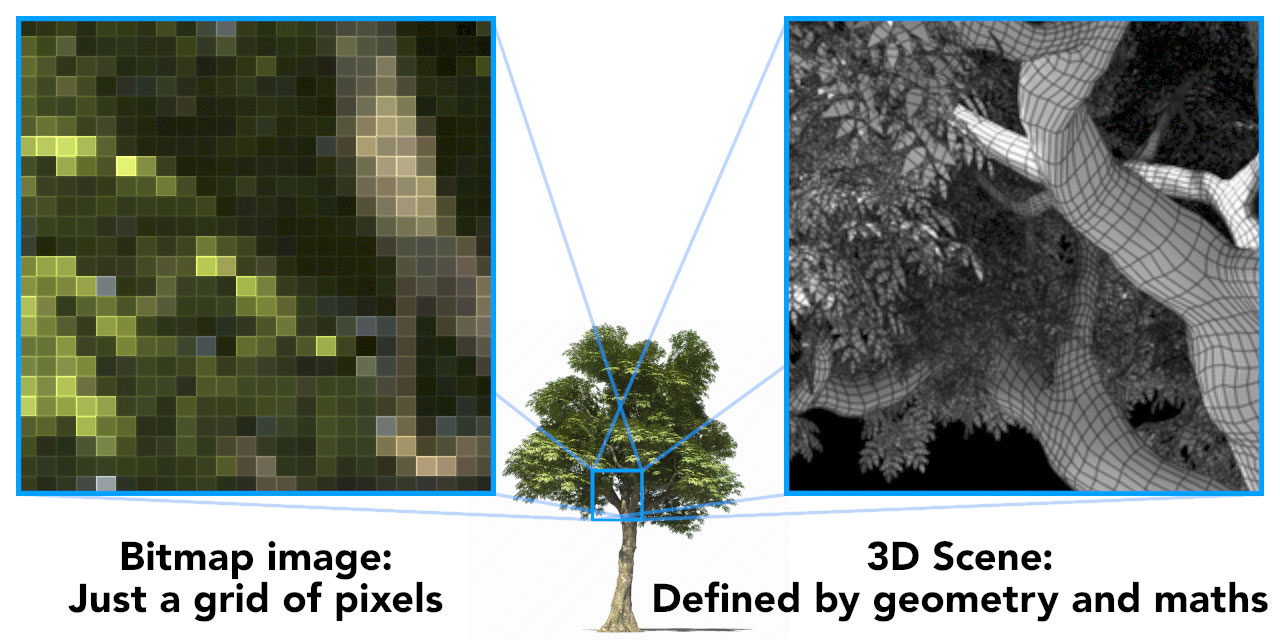

If this sounds complicated then in some ways it is. It brings us right back to the issue that this series started with: What After Effects actually does. And to summarise the first few articles in this series, After Effects is an application that combines bitmap (raster) images together.

For some developers, this is a far bigger issue than simply choosing between the CPU or GPU. For the team who developed Cavalry, the primary problem facing After Effects isn’t just a technical feature like multi-threading or GPU acceleration, but a fundamental identity crisis:

After Effects just isn’t suited to the type of work most of its users are doing.

-Chris Hardcastle

It is broken, and you can’t fix it

As software developers, Chris Hardcastle and Ian Waters didn’t see the problems facing Adobe being fixed with a new plugin, or through a technical advancement such as GPU processing. Instead, they saw a much larger problem facing After Effects – what it was designed to do. Feeling that the issues facing After Effects are too big for Adobe to solve, they created their own new motion graphics software – Cavalry. Cavalry certainly made a splash when it first launched, but despite the huge surge of interest from established After Effects users all around the world, Chris doesn’t see it as being a replacement.

Chris: “We love After Effects and we love using it. But there’s lots of things that it doesn’t do well because of its heritage. The architecture that it’s built on is very very old, and it’s just not engineered to do certain things.”

Ian: “It became apparent to us that what people were using After Effects for is not what it was designed for. In our (design) world, anyway. There were people who use it for compositing which is great, but it had drifted from its original usage.”

Chris: “We’re not trying to replace After Effects. We have people telling us: if you want to be taken seriously, you’ve got to do compositing. And we’re like – After Effects does good compositing. Fusion, Nuke do good compositing. There’s lots of options there already. We’d like people to understand where we’re coming from.”

Ian: “The big problem for Chris was speed. And that stems from the fact that After Effects is raster-based, and all the work we’re doing is geometry based. These days, all of the innovation is happening in the geometry domain.”

Chris: “After Effects is built on a platform where there’s nowhere to go. It’s start again.”

What’s worth emphasizing here is how elegantly Ian has summed up the main problem facing Adobe. This series on “performance” has 15 parts and is somewhere around the 100,000 word mark, and yet the executive summary could well read:

“After Effects is raster based… all of the innovation is happening in the geometry domain.”

-Ian Waters

The difference between raster / bitmap images and geometry is so crucial that it was the topic of part 2 in this series. They’re completely separate worlds. After Effects was designed from the ground up to composite bitmap images together. 3D animation software is designed from the ground up to process geometry. All of the major technical developments over the past ten+ years have revolved around the world of 3D geometry.

So what challenges are there for software developers as they write software for bitmap / raster images in a world optimized for 3D geometry?

Chris Vranos: “It’s mostly just that bitmaps are big and heavy, and you’re always thinking about optimizing, compressing, caching, and parallelizing. When we created the motion vector pass for Lockdown, we initially thought we might store those vectors in the plug-in somehow. But we realized it would be a ton of work, and we would really be much better off letting After Effects handle the render and re-import, since it already does it so well.”

Ian: “Cavalry’s fast, and the viewport is fast, but the problem with working with giant files is getting the image out to a file.”

The biggest problem with bitmap images is that the number of pixels to be processed increases with the square of the resolution: doubling the width and height of a frame quadruples the number of pixels. This was the topic of part 3. Over the 25-ish years that After Effects has been around, working resolutions have gone from standard definition to high definition, now to 4K, and only a few months ago Blackmagic released a 12K camera. What challenges are there for bitmap software like After Effects as resolutions keep increasing – how would current computers cope with 16K video?
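A quick calculation shows how fast the pixel count grows. The frame sizes below are commonly quoted figures, assumed here for illustration (the 12K entry uses the 12,288 × 6,480 frame of Blackmagic’s camera, and 16K is taken as 15,360 × 8,640); exact dimensions vary by standard.

```python
# Frame sizes are assumptions for illustration; exact dimensions vary by standard.
FORMATS = {
    "SD (PAL)": (720, 576),
    "HD": (1920, 1080),
    "4K UHD": (3840, 2160),
    "8K UHD": (7680, 4320),
    "12K": (12288, 6480),
    "16K": (15360, 8640),
}


def pixel_count(width: int, height: int) -> int:
    """Number of pixels in a single frame."""
    return width * height


hd_pixels = pixel_count(*FORMATS["HD"])
for name, (width, height) in FORMATS.items():
    pixels = pixel_count(width, height)
    print(f"{name:>8}: {pixels:>11,} pixels, {pixels / hd_pixels:6.2f}x HD")
```

With these sizes, a 4K UHD frame has 4 times the pixels of an HD frame, and a 16K frame has 64 times.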

Chris Vranos: “We just try to target 4K as a standard, and ensure everything feels generally smooth and operable at that resolution. Our general hope is that people working on 8K footage work at half-res most of the time! I still occasionally freelance as an animator and compositor, and we may shoot at 8K, but we almost always transcode to 4K right away.

To answer the question a bit more thoroughly, we’re approaching the end of Moore’s law, and to be able to just rip through 16k footage in the same way we do 1080, it might not happen for decades. The more reasonable solution would be to compress 16k footage in such a way that subsets of its resolution are easily accessible. So for example, aligning the data so it’s easy to access every 4th pixel, effectively storing the same image in 4k, 4 times, each offset a pixel. Then the user just works with the 4k subset, since rotoing at 4k is good enough for most situations anyway. Then the final 16k render calls all the remaining pixels.

There are tools that already do this, and I believe After Effects does this very well with certain image types. I’d imagine to make working with 16k footage smooth, we’d have to really think about a new format that specifically saves 16k images in a way that a lower resolution can be accessed quickly, and make tools to take advantage of this.”
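The layout Chris describes could be sketched roughly as follows. This is only an illustration of the idea, not an existing file format or anything After Effects is known to do: the function names are hypothetical, and a tiny list of lists stands in for a frame. Each sub-image holds every `step`-th pixel at a different offset, so any one of them works as a low-resolution proxy, and all `step * step` of them together rebuild the full frame.

```python
def split_interleaved(image, step):
    """Split a 2D image into step*step sub-images.

    Sub-image (dy, dx) holds the pixels at rows dy, dy+step, ... and
    columns dx, dx+step, ..., so each one is a low-resolution proxy.
    """
    return {
        (dy, dx): [row[dx::step] for row in image[dy::step]]
        for dy in range(step)
        for dx in range(step)
    }


def merge_interleaved(subimages, step, height, width):
    """Rebuild the full-resolution image from its sub-images."""
    image = [[None] * width for _ in range(height)]
    for (dy, dx), sub in subimages.items():
        for y, row in enumerate(sub):
            for x, value in enumerate(row):
                image[dy + y * step][dx + x * step] = value
    return image


# A tiny 4x4 "frame" whose pixel values are just their index.
frame = [[y * 4 + x for x in range(4)] for y in range(4)]
subs = split_interleaved(frame, step=2)

proxy = subs[(0, 0)]                        # quarter-size working copy
restored = merge_interleaved(subs, 2, 4, 4)
print(proxy)
print(restored == frame)
```

In this sketch the user would work on `proxy` alone, and only the final render would need to read the remaining sub-images.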

Antoine: “From what I understand so far, 12K has no point in broadcast / diffusion (unless for VR / 360?) but could be useful for shooting and post production (VFX). The first benefit is to get the cleanest / sharpest 4K and 8K images possible. Theoretically it makes sense; if professionals really need it I have no idea. And there is also the question of if they can afford it (storage space and more computing time of projects). The second benefit is for better control (pan, crop, zoom, more info for chroma keying etc.)

We need to investigate what people need it for, but it would seem reasonable for me that Blackmagic RAW API has resize procedures to 4K and 8K and our importer would use them to give our users the best image possible without ever going to 12K in the whole pipeline of Adobe (only at the import stage with our BRAW Studio importer).

In any case it’s possible to implement 12K import and we will do it but it will come with cost in terms of speed, exporting time, storage space, troubles with codecs etc.”

Tyson: “The problem is that the display of images – which the GPU is built for – is quite different than the direct manipulation of images.”

Just as Ian eloquently summarised the main problem facing Adobe (raster images vs geometry), Tyson has eloquently summarised the problem with GPU processing. To re-word him slightly:

The display of images on a GPU is quite different to the manipulation of images using a GPU.

-Tyson Ibele

This is a point that I touched on in Part 12, when looking at the differences between games and CAD. When playing a game, for example, the GPU renders each frame and outputs it directly to the monitor. The actual image itself is not stored anywhere as a file. It isn’t processed by the main CPU. When you play a game each frame is rendered, displayed, and then it’s gone. Even though a modern game with a top graphics card might be able to render a 4K image at 60 fps, that’s not the same as processing and saving those frames as data.

The latest GPUs have phenomenal performance when it comes to rendering 3D games in real-time, but it doesn’t mean they’re ideally suited to processing and manipulating bitmap images.

Feature Creep

The evolution of multi-core CPUs and powerful GPUs are clearly hardware developments. They’re the two most common things people think of when it comes to their computer’s performance. But as I noted earlier on, another key factor – both to the user and to developers – is features. Most of the developers I spoke to mentioned how the notion of “performance” was intrinsically linked to developing, incorporating and supporting new features. The Cavalry team took this to an extreme level by writing a brand new application from scratch. For existing After Effects developers, the issue is more difficult.

Chris Vranos: “How could Adobe make After Effects faster? The answer is the same for any company. Feature Freeze! I battle with that a lot personally. You want to keep bringing new features, but they halt optimization work.”

Antoine: “There are other areas where we could improve performance but we would also like to bring more features so that’s a tradeoff we need to continuously make. Speed is not everything!”

Chris Hardcastle: “Features, by their nature, disrupt stability. You’re introducing new things that may allow users to do something you’ve not thought about, and that can expose parts of the app that mightn’t work that way. Features can definitely affect speed.”

Ian: “You could make an app that’s fast and full of features, but it will be impossible to use because the UI will be skeletal.”

Andrew: “Adobe have the problem of things like language support, not just the user interface but all of the supporting documentation as well. So a simple change to something can have so many flow-on effects.”

Earlier I suggested that overall software performance is a balance between speed, features and stability. As After Effects users have been vocal about their desire for improvements in speed, this suggests that Adobe have been prioritising new features and stability. But new features are another area where the user base – me included – have felt that After Effects is lagging behind. For anyone who’s missed it, an older article of mine looks at a range of modern animation tools which After Effects lacks.

This leaves us with stability – and while I personally find After Effects to be very stable, there are still plenty of users out there who regularly encounter unique glitches.

So according to the After Effects user base and general motion graphics zeitgeist, AE isn’t especially fast, lacks new features in key areas, and isn’t always that stable.

Is this a case of the user base being unrealistic and unfair in their judgements? What insights can professional software developers provide into working on an application the size of After Effects, for a company the size of Adobe?

Tyson: “In my experience, After Effects is stable, but very slow. It’s not optimized for multi-CPU systems (in fact, development has regressed in that regard – with the removal of support for multi-CPU caching that existed in older versions) and is a pain to use on complex projects.

I wish Adobe cared more about performance than they do. The problem with these monolithic developers is that there is little pressure to push performance because the software is so ubiquitous and it’s not users you have to answer to – it’s their shareholders.

The movement towards subscription-based licensing also hurts users; why elevate the quality of your software when you’ve got people paying for eternity just for the privilege of being able to open it? In the past, you had to incentivize users in order to get them to upgrade to the next version. Now you’ve got them on the hook forever, regardless of whether or not the codebase ever changes again. C’est la vie, I guess.”

When I asked Andrew about the challenges facing large software development teams, he laughed and mentioned Conway’s Law. The basic concept is that software companies design software that mirrors their own internal organization.

Any organization that designs a system (defined broadly) will produce a design whose structure is a copy of the organization’s communication structure.

— Melvin E. Conway

Andrew: “I think there’s a lot of waste in the software industry. The way we make software is unnecessarily inefficient. The methodologies result in scaling up to lots of people, but not to lots of functionality. If not enough people on the team can think of the product holistically, then the product will be designed in a compartmentalized way, and communication between different components will kill performance.”

Ian: “I’ve been on a development team that was 100+ people. What tends to happen at large companies is that everything gets bogged down with a ridiculous number of stakeholders, product designers, product managers, product owners, then you’ve got development managers. You’ve got all these layers, and meetings and meetings and meetings. And so the person who has the vision for an idea is so far removed from the implementation of that idea that things just go around in circles.

We used to astonish the people at Autodesk at how quickly we could develop, because the same person who had the idea is the one who implements it. Or, if we needed to have a conversation about it, the only person we needed to speak to was Chris.”

Adobe is one of the largest software companies on the planet. In terms of sheer size it sits alongside industry behemoths such as Microsoft, Google, Apple, Oracle and Autodesk. With so many resources available to it, couldn’t Adobe just throw loads of developers onto After Effects and “fix” it? When I asked Andrew he laughed again, and mentioned Brooks’ Law. Brooks’ Law comes from Fred Brooks, who wrote a famous (and very well respected) book on software project management in the 1970s. His famous law is:

Adding manpower to a late software project makes it later.

-Fred Brooks

While this might not seem 100% relevant to After Effects, there are definitely users who would consider features such as multi-processing to be late. If we agree that the lack of comprehensive multi-threading in After Effects is a feature that is late to market, then according to Brooks, simply throwing more and more software developers at the problem will only make it even later.

Brooks’ first book, “The Mythical Man-Month”, has a fascinating Wikipedia page, and it appears that many of the ideas in his book are still just as relevant today. Somewhat disappointingly for After Effects users, Brooks argues that no single breakthrough will deliver a tenfold improvement in software development within a decade – there is, as he puts it, no “silver bullet”.

“there is no single development, in either technology or management technique, which by itself promises even one order of magnitude improvement within a decade in productivity, in reliability, in simplicity.”

– Fred Brooks

Unfortunately, it seems that sometimes software development just takes as long as it takes. Another well-known quote from Fred Brooks compares software development to pregnancy:

“The bearing of a child takes nine months, no matter how many women are assigned.”

-Fred Brooks

In other words, if it takes a woman nine months to have a baby, you can’t make it happen faster by getting nine women to have the baby in a month. This is a good example of “programmer humour”. Some tasks are, as Brooks would say, “sequentially constrained”. They’re going to take a certain amount of time no matter what, and the time can’t be reduced by having more people work on them.
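Brooks’ argument can be put into rough numbers. In the book he notes that if every member of a team has to coordinate with every other member, the communication effort grows as n(n - 1)/2. The sketch below combines that with a task that has a fixed sequential minimum. The `schedule_months` function and all of its figures are made up for illustration; they aren’t taken from Brooks or from Adobe.

```python
def communication_paths(team_size: int) -> int:
    """Pairwise communication paths in a team: n(n - 1) / 2."""
    return team_size * (team_size - 1) // 2


def schedule_months(work_months, team_size, sequential_months, overhead_per_path):
    """Toy model: divisible work shrinks with team size, but the schedule
    can never drop below the sequential part, and every communication
    path adds a little overhead. All parameters are illustrative."""
    divided = work_months / team_size
    overhead = overhead_per_path * communication_paths(team_size)
    return max(divided, sequential_months) + overhead


for size in (1, 3, 9, 27):
    months = schedule_months(work_months=36, team_size=size,
                             sequential_months=9, overhead_per_path=0.05)
    print(f"{size:>2} developers: {communication_paths(size):>3} paths, "
          f"{months:5.2f} months")
```

In this toy model the schedule improves up to a point and then gets worse again, because the nine-month sequential part can’t be divided and the coordination overhead keeps growing with every developer added.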

Do it again

This article began with an audience member at Melbourne’s Node Fest asking how long it would take to re-write After Effects. Despite the naiveté of the question, it was pretty clear that the audience was keen for an answer. So what do the professionals think? While the technical challenge is obvious, it’s interesting that the guys from Autokroma see the real challenge in replicating the user base, and not the software itself:

Antoine: “If right now Adobe stopped improving After Effects it might take 10 years to fully die. And by the Lindy effect if Adobe continues to support and improve it, as they should, the best guess we have is that After Effects is going to be relevant for another two decades.

So yes I have quite a lot of ideas of how I would write After Effects from scratch, but none of them matters. Competing with After Effects, to me, is more of a UX & building a community challenge, rather than a technical challenge.”

Tyson: “After Effects is a pretty gigantic piece of software, so it would likely take a huge amount of work to create something competitive. There are alternative pieces of compositing software which rely on a different node-based paradigm (like Nuke or Fusion), but I’m not sure if an analogous layer-based NLE exists with the same scope of features, and it would take many, many years to create one from scratch.”

Chris Vranos: “It would take millions of dollars, and many years. After Effects does a lot.”

The sheer size of After Effects makes it unlikely that any one single new application will appear that directly competes with all of After Effects’ features. After Effects simply does too many different things – After Effects Product Manager Victoria Nece has often said that there isn’t a single After Effects user out there who has used every one of After Effects’ features. Instead, competition will continue to appear via a range of apps that focus on specific features. This has been going on for as long as After Effects has been around, it’s just that After Effects is the only all-in-one app, so not all competition can feel like competition. But if you look at individual features, including video compositing, rotoscoping, editing / playback, character animation, 2D vector animation as well as type animation, then there’s a whole range of software out there that can be considered competition. What makes After Effects unique is that it manages to address all of these features together, in one package. And this bulk simultaneously makes After Effects difficult to compete with directly, but also slow to advance.

After Effects is simply so big that it’s unlikely to be challenged directly, but it’s also unlikely to change dramatically.

Chris Hardcastle: “We’ve tried to build something (Cavalry) that is good at the things which After Effects wasn’t originally designed for. We’re definitely not focused on compositing. We do have some raster support, so you can bring in JPGs, Quicktimes and MP4s, but we’re definitely not focused on that world.”

Instead of praying for someone to come along and completely re-write a new version of After Effects, are there technical changes that Adobe could implement to improve the speed of the current application? If our developers were given a job at Adobe, and tasked with making After Effects friendlier for developers, what technical approaches would they introduce?

Tyson: “If I had to re-write After Effects I would take the same approach that I take for tyFlow: speed and efficiency should take priority over everything else. It might sound silly or obvious but so many pieces of Adobe software – AE and Photoshop especially – have become horribly inefficient, bloated messes over time.”

Chris Vranos: “I would get away from resolution and bitmap images as a foundation for a project. There would be no 1920×1080 timeline. A composition wouldn’t exist in that sense, it would just be a 3D space. Your renderer would just be a camera with a certain aspect ratio, and chosen resolution, and everything would sit out in 3D space, full vector. Rasterized images would live on planes as textures, and may sit in front of a camera. Masks would live in camera space. Perhaps each “composition” would be its own independent 3D space with its own 3D camera. All coordinate systems would be based around a 0,0,0 origin. Any paint work I suppose could still be rasterized on top of a plane. Unreal is a good example for a lot of this stuff. There’s a lot to think about!”

Ian: “We like open software – open meaning accessible APIs, scripting and so on, so you can sellotape your own things on the side of it, like we did with Mash for Maya. We had some trouble here with After Effects, we raised our eyebrows and thought “wouldn’t it be great if there was a 2D app that had the openness of Maya”.”

Chris Vranos: “I don’t feel very restricted by Adobe, it’s a hard question to answer. AE saves us tons of development time by handling stuff that would take us months to write. Our products would not be even remotely possible without Adobe, and I think they’re doing a very good job keeping a good environment for the developers.”

Anon AE: “I’m not sure how feasible this is, but since many effects are now GPU accelerated, it’s a waste that each effect’s rendered result has to go back to the CPU, then back to the GPU for the next GPU effect applied. If effects could send their GPU buffers without going back through the CPU each time, this would speed things up tremendously. Of course this only applies to a GPU effect followed by another GPU effect. And not all effects are suitable candidates for GPU acceleration.”

3rd act from the 3rd party developers

With all of the technical discussion out of the way, I asked if anyone had any final thoughts on the general topic of “performance”.

Chris Hardcastle: “Performance isn’t exactly crucial to the artistic side of the job, but it really helps you play, experiment and find out what’s wrong before you find what’s right. A lot of time in the design process is working out where you’re going. Choosing colours isn’t exactly computationally expensive.”

Ian: “You don’t want to feel like you’re fighting the software. You want fluidity, the frictionless workflow. It’s not just about playback.”

Progress Bar

If performance wasn’t an issue with After Effects then this series wouldn’t have been written. Improved performance – specifically through CPU multi-threading – is the top feature request on the Adobe uservoice forums, and GPU acceleration is also high on the list. But talking about the issue with a range of software developers – including those who’ve created a new motion graphics app from scratch – has helped illuminate how complicated the issue is.

Having spent the past few months swapping emails and chatting with professional software developers, here’s a summary of the more notable points.

- Software performance is a balance of speed, new features, and stability.

- Writing software to take advantage of multiple CPUs (and multi-core CPUs) is called parallel processing; implementing it involves writing multi-threaded software.

- Certain tasks are more suited to parallel processing than others. 3D rendering and particle simulation are unusually well suited to multiple CPUs.

- In order for other types of tasks to benefit from multiple CPUs, the software needs to be designed for parallel processing from the start. This is a relatively recent development for everyday desktop computers.

- Some of the more recent innovations in parallel processing have only emerged over the last ten years.

- While the tools for writing software for multi-core CPUs have advanced significantly since 2005, adapting / retro-fitting / re-writing older software for parallel processing is difficult, if not impossible.

- Considering that After Effects was first released in 1993, and was over 12 years old by the time Intel released their first multi-core CPU, Adobe must have a huge existing code-base that pre-dates the current generation of parallel processing software.

- If (when) Adobe make significant updates to After Effects, and improve parallel processing, then it’s highly likely that existing plugins will break, and will need to be updated.

- According to Fred Brooks, simply adding more developers to a software team will not make development faster. So it’s not reasonable to expect Adobe to simply add more programmers to the team and speed up progress.

- While programming for multiple CPUs has become much easier, and doesn’t differ greatly between different platforms, programming for GPUs is much more difficult.

- Similarly to parallel processing with CPUs, processing on GPUs suits some types of tasks more than others.

- Unlike CPUs, programming GPUs directly is something that differs greatly between OS platforms (i.e. Windows / Mac) as well as hardware vendors (i.e. NVIDIA, AMD, Intel). This makes it much more difficult to support, test and debug. In some cases, developers need to write completely different code for different platforms, making GPU support much slower and costlier.

- Because there’s a large performance hit when transferring data between a GPU and the CPU, spreading the processing between both can result in slower performance overall. Despite the obvious speed benefits that GPUs have for “embarrassingly parallel” tasks like ray-tracing, in many cases overall performance is higher when using CPU cores only.

- All of the major innovation in GPU performance has come in the separate world of 3D animation and rendering, i.e. geometry as opposed to bitmaps, and this is simply not something that After Effects does.

- Ultimately, the biggest problem facing After Effects is that its core functionality – compositing bitmap images – is not ideally suited to parallel processing.
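Several of the points above come down to how much of a job can actually be split across cores. One standard way to reason about that is Amdahl's law, which none of the developers quoted here named directly; it is brought in purely as an illustration. The sketch below is Python, and the parallel fractions used (95% and 50%) are invented examples, not measurements of After Effects or any renderer.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Theoretical speedup from Amdahl's law.

    parallel_fraction -- share of the work that can run in parallel (0.0 to 1.0)
    cores             -- number of CPU cores available (1 or more)
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part never gets faster; only the parallel part is divided up.
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# Hypothetical workloads on a 16-core machine.
print(round(amdahl_speedup(0.95, 16), 2))  # 9.14 (a task that splits up very well)
print(round(amdahl_speedup(0.50, 16), 2))  # 1.88 (a task that is half serial)
```

Under these assumptions, a job that is only half parallel gets less than a 2x speedup from 16 cores, which is why adding cores helps some kinds of work far more than others.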

There’s a lot of information here that I didn’t know, and hadn’t thought about before. Thinking of After Effects as a “platform” and not just a piece of software is one example.

Now that we’ve heard from software developers about the real-world issues stemming from supporting multi-core CPUs and GPUs, the next step is to hear directly from the After Effects development team at Adobe.

Hopefully that will be soon!

Special thanks to everyone who was happy to chat and share their knowledge for this article – thanks to Andrew Bromage, Chris Hardcastle & Ian Waters, Chris Vranos, Antoine & Nicolas @ Autokroma, Tyson Ibele, and a handful of others who let me pick your brains.

This is part 15 in a long-running series on After Effects and Performance. Have you read the others? They’re really good and really long too:

Part 1: In search of perfection

Part 2: What After Effects actually does

Part 3: It’s numbers, all the way down

Part 4: Bottlenecks & Busses

Part 5: Introducing the CPU

Part 6: Begun, the core wars have…

Part 7: Introducing AErender

Part 8: Multiprocessing (kinda, sorta)

Part 9: Cold hard cache

Part 10: The birth of the GPU

Part 11: The rise of the GPGPU

Part 12: The Quadro conundrum

Part 13: The wilderness years

Part 14: Make it faster, for free

Part 15: 3rd Party opinions

And of course, if you liked this series then I have over ten years’ worth of After Effects articles to go through. Some of them are really long too!
