The days of setting exposure with zebras set at white clip are most likely over. Here’s a new technique I’m trying out that works very well, particularly when viewing a log image…

Early in my career I shot a lot of “run and gun” projects. When I made the move from camera assistant to operator and second unit DP I had a hard time finding film work as I was considered “too young” for those jobs. The video world didn’t have that hangup, so I filled the time between film jobs by shooting TV magazine shows such as “Inside Edition” and “A Current Affair,” as well as dozens and dozens of karaoke videos. At that time, Betacam cameras had black and white viewfinders and about six stops of total dynamic range. Ironically, it was easier to set a “proper” exposure back then than it is to do that same thing today.

The reason is pretty simple, but it takes a little bit of explaining.

The original Rec 709 spec was designed to hold about six linear stops of dynamic range. A theoretical matte white object that reflected 100% of the light striking it fell at 100% on a waveform monitor. Later cameras were able to retain detail up to the equivalent of 120% reflectance, which fell at 109% on the waveform. Because it’s impossible for a matte object to reflect more light than is striking it, this extra 20% was strictly overexposure headroom for such things as specular highlights or objects struck by additional light beyond that illuminating the immediate scene. (A matte, or diffuse, surface reflects light equally in all directions; a piece of copy paper is a good example. This is different from a shiny surface with a specular highlight, which is basically just a mirror reflecting the image of the light source back at the observer. That image, understandably, is much brighter than a matte surface reflecting diffuse light.)
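The 100% and 109% figures above can be checked against the standard ITU-R BT.709 camera transfer function (OETF). Below is a minimal Python sketch. It assumes an idealized camera in which reflectance maps directly to scene-linear light, with 100% reflectance at 1.0. Real cameras apply their own knees and highlight processing, so treat this as an illustration rather than a model of any particular camera. The function name `bt709_oetf` is only a label for this example.

```python
def bt709_oetf(reflectance: float) -> float:
    """ITU-R BT.709 OETF applied to an idealized scene-linear value
    (1.0 = 100% diffuse reflectance). Returns the signal level as a
    waveform percentage."""
    if reflectance < 0.018:
        signal = 4.5 * reflectance                      # linear segment near black
    else:
        signal = 1.099 * reflectance ** 0.45 - 0.099    # power-law segment
    return 100.0 * signal


for r in (0.18, 0.90, 1.00, 1.20):
    print(f"{r:.0%} reflectance -> {bt709_oetf(r):.1f}% on the waveform")
```

Under this idealized mapping, 100% reflectance lands at 100% and 120% lands at about 109.4%, which lines up with the headroom described above. For comparison, 18% gray comes out at roughly 41%.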

The only common field exposure tool available back then was zebras. Some camera people—mostly in the news world—set zebras for a general flesh tone value. I refused to do that as flesh tone covers a wide range of lightness and I didn’t want to make dark skinned people look lighter or vice versa. Instead I followed what was then the more traditional route: I set zebras for 100%, so I knew when a white object clipped.

This worked really well for quite a long time. Then camera technology changed, A LOT.

I don’t often reference the standard Macbeth chart other than to wonder why anyone still uses it for video (it means nothing in the video world: look at it on a vectorscope and the resulting pattern looks more like a shotgun blast than anything else), but it works very well to illustrate a point I want to make.

The Macbeth Color Checker Classic. This is a great chart to use as a printing reference for stills, but it doesn’t have much usefulness in the video world. The white and black chips, however, illustrate very well the points I’m trying to make in this article.

The white chip in this chart reflects about 72% of the light striking it. When I read a Macbeth chart with my spot meter, that chip comes up two stops brighter than 18% gray. Using the same technique, the black chip reads about two stops under 18% gray. Given the dynamic range of both film and the better HD cameras of today, that’s a little startling. If an Arri Alexa or Sony F55 can capture 14 stops of dynamic range, isn’t it a little nuts that these two chips, which sit only two stops above and below 18% (middle) gray, trigger the visual sensation of white and black?

I thought about this on a recent run-and-gun shoot I did for a major fashion design company. We shot with Sony FS7s, recording SLog3 and viewing the image through the LC709 Type A LUT. One of the many quirks of this camera (as well as the F5 and F55) is that the waveform isn’t available in all shooting modes. Zebras generally are, but in this case, with that configuration, the in-camera waveform monitor refused to turn on. Even if it had turned on, it would have been too small and too low in resolution to use critically and quickly.

My old trick of setting zebras at 100% didn’t work, for two reasons:

The first is that I rate the FS7 at ISO 1000 to reduce noise, and at that ISO the LC709 Type A gamma curve tops out at 95 IRE. Setting zebras at a value higher than 95 IRE means they’ll never come on. (White clip level changes with ISO and LUT settings in Cine-EI mode.)

The second is that using zebras at 95 IRE to place a white object just below clip means seriously overexposing the image. This brings us back to the white chip in the Macbeth chart, which is two stops brighter than 18% gray. Each doubling or halving of light is equivalent to a one-stop change:

2 x 18% = 36% (or +1 stop)

2 x 36% = 72% (or +1 stop)

Therefore two stops brighter than 18% gray is 72%. Following that same methodology, 100% reflectance is about +2.5 stops above 18% gray (1.4 x 72% ≈ 100%, and a factor of 1.4, roughly the square root of two, is half a stop). Back in the old days, when cameras clipped at about 2.5 stops above 18% gray, setting zebras at 100 IRE resulted in very accurate exposures because no matte surface in the world reflects more light than is striking it. Are you shooting outdoors and see a cloud in the sky? Set that cloud’s exposure to just below 100 IRE using zebras and everything else that’s lit by sunlight falls into line. Are you shooting in an office and see white paper sitting on someone’s desk? Expose the paper at just under 100 IRE using zebras and everything else falls into place.
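The stop arithmetic above is just a base-2 logarithm of the ratio between two reflectances. Here is a small Python sketch. The helper name `stops_from_gray` is hypothetical, and the 4.5% entry is simply what “two stops under 18%” works out to, not a measured value for the black chip.

```python
import math

MIDDLE_GRAY = 0.18  # 18% reflectance


def stops_from_gray(reflectance: float) -> float:
    """Exposure difference, in stops, between a diffuse reflectance and
    18% gray. Positive means brighter than middle gray, negative darker."""
    return math.log2(reflectance / MIDDLE_GRAY)


for label, r in [("two stops under gray", 0.045),
                 ("Macbeth white chip", 0.72),
                 ("90% white", 0.90),
                 ("100% reflectance", 1.00)]:
    print(f"{label:>22} ({r:.1%}): {stops_from_gray(r):+.2f} stops")
```

This gives +2 stops for 72%, -2 for 4.5%, about +2.32 for 90%, and about +2.47 for 100%, which is why 100% reflectance sits roughly two and a half stops above middle gray.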

What happens, though, when your camera sees five, six or seven stops above 18% gray before white clips? The aforementioned system doesn’t work so well anymore. Exposing a cloud or a piece of paper just below white clip overexposes it by several stops.

18% (middle) gray has been a universal film reference since the invention of the light meter, and yet it is a surprisingly difficult tone to recognize in the real world. When I shot a lot of film I was completely enamored of my spot meter, as I thought it was the only tool that told me exactly how my images would turn out (although I’ve since learned that this is not always the case!). I’d look for an 18% gray reference in every scene, such as a white wall in shadow or gray asphalt, and use that as the basis for my exposure. The problem is that 18% gray is surprisingly hard to quantify visually. As we saw above, two stops above and below middle gray is the difference between white and black: at those values we stop perceiving gray values as gray and instead think of them as the lower limit of white or the upper limit of black. We are extraordinarily sensitive to slight variations in tonality in mid-tones, but our visual system is also extremely adaptive: our perception of middle gray can change depending on whether that tone is surrounded by white, black, or a solid color. Misplacing 18% gray by as little as half a stop in either direction can significantly distort the cinematographer’s intent.

While my brain easily tells me when it perceives something as white or black, it does not do the same for middle gray.

And then it struck me. Instead of using 18% (middle) gray as a zebra reference, why not use white?

Barium sulfate is a standard diffuse white reference that reflects 97% of the light striking it. It appears to the eye as a very bright white. Almost no diffuse object in the real world is that bright, so the white standard in the video world is 90% reflectance instead of 100%. Log curve graphs published by most manufacturers mark where 90% white falls on the curve, and 85-90% reflectance is the brightest white you’ll typically see on a glossy camera test chart.

While I can’t always put my finger on when a gray value hits exactly 18%, I can much more easily recognize when a diffuse object is white. I picked this up partly by learning to calibrate a viewfinder to bars: I was taught to turn contrast down and then slowly bring it up until the white chip at the bottom of SMPTE bars starts to look white. It’s an abrupt transition: one second it’s light gray, and the next my brain says it’s white.

SMPTE Color Bars. I was taught to lower contrast and then increase it to the point where the white chip just started to appear white. Going any farther would make highlights bloom in the viewfinder and present the image as brighter than it was being recorded.

In the case of my run-and-gun adventure, I decided to set one of the camera’s zebras to 90% white. The FS7 has two sets of zebras that work in different ways. Zebra 2 is very traditional: one sets an IRE level, and zebras appear wherever a portion of the frame exceeds it. Zebra 1 instead requires one to specify an IRE level around which one defines a window, or “aperture.” If I set zebra 1 at, say, 60 IRE, with an “aperture” setting of +/- 5, then zebra 1 will come on when a portion of the image falls between 55 IRE and 65 IRE.
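The difference between the two zebra types can be modeled as two simple tests on a signal level. This is a hypothetical Python sketch based only on the behavior described above, not on Sony’s firmware. In particular, whether the camera treats the window edges and the zebra 2 threshold as inclusive is an assumption, and the sample levels are made up for illustration.

```python
def zebra2_on(level_ire: float, threshold_ire: float) -> bool:
    """Traditional zebra: stripes wherever the signal goes beyond the threshold.
    (Assumed strict '>' at the boundary.)"""
    return level_ire > threshold_ire


def zebra1_on(level_ire: float, center_ire: float, aperture_ire: float) -> bool:
    """Windowed zebra: stripes wherever the signal falls within
    center +/- aperture. (Assumed inclusive at both edges.)"""
    return center_ire - aperture_ire <= level_ire <= center_ire + aperture_ire


# Hypothetical pixel levels, in IRE, sampled from a frame.
samples = [12.0, 41.0, 57.0, 63.5, 75.0, 96.0]

print([s for s in samples if zebra1_on(s, center_ire=60, aperture_ire=5)])  # [57.0, 63.5]
print([s for s in samples if zebra2_on(s, threshold_ire=95)])               # [96.0]
```

With zebra 1 centered at 60 IRE and an aperture of 5, only the samples between 55 and 65 IRE are striped, while zebra 2 set at 95 IRE flags only the sample above it.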

Knowing this, I set zebra 2 at 95 IRE, where white clips when using the LC709 Type A LUT at ISO 1000. Zebra 1’s bottom limit was 50 IRE, so I bottomed it out and set an aperture of 7 or 8 units. I then found a neutral white object and underexposed it so it fell just below the zebra 1 window, exposing the white object at around 40-43 IRE, as though it were middle gray. Then I opened up 2 1/4 stops, which should make an object that was exposed at 18% gray now appear 90% white, and reset zebra 1 to an aperture of +/-5 while moving its center point so that zebra 1 came on at the new exposure.
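Opening up by N stops multiplies the light reaching the sensor by 2^N, so a white object first exposed as though it were 18% gray ends up exposed like a surface of 18% x 2^N reflectance. A short Python sketch of that arithmetic follows. The helper name `reflectance_after_opening` is hypothetical.

```python
import math

MIDDLE_GRAY = 0.18
TARGET_WHITE = 0.90


def reflectance_after_opening(base: float, stops: float) -> float:
    """Reflectance an object is exposed 'as' after opening up by `stops`,
    given that it was first exposed as though it were `base` reflectance."""
    return base * 2 ** stops


exact = math.log2(TARGET_WHITE / MIDDLE_GRAY)
print(f"exact stops from 18% to 90%: {exact:.2f}")
print(f"2 1/4 stops: {reflectance_after_opening(MIDDLE_GRAY, 2.25):.1%}")
print(f"2 1/3 stops: {reflectance_after_opening(MIDDLE_GRAY, 7 / 3):.1%}")
```

The exact figure from 18% to 90% is log2(5), about 2.32 stops. Opening 2 1/4 stops lands at roughly 85.6%, and 2 1/3 stops at roughly 90.7%, so 2 1/4 stops falls less than a tenth of a stop short of the ideal.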

I think the new zebra 1 IRE value turned out to be 75 when viewed through the LC709 Type A LUT. If I’d done this same trick while viewing the image in SLog3, the value would have been around 61 IRE (Sony’s stated 90% white point for SLog3).
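The SLog3 numbers can be reproduced from Sony’s published S-Log3 formula. The Python sketch below follows that formula as given in Sony’s S-Log3 technical summary (double-check the constants against Sony’s document before relying on them). It assumes the “IRE” values quoted here map 10-bit code value 64 to 0 and 940 to 100. The function names are hypothetical.

```python
import math


def slog3_code_value(reflectance: float) -> float:
    """S-Log3 encoding: scene-linear reflectance (0.18 = middle gray)
    to a 10-bit code value on a 0-1023 scale."""
    if reflectance >= 0.01125:
        # Logarithmic segment, anchored so 18% gray lands at code value 420.
        return 420.0 + math.log10((reflectance + 0.01) / (0.18 + 0.01)) * 261.5
    # Linear segment near black; 0% reflectance lands at code value 95.
    return reflectance * (171.2102946929 - 95.0) / 0.01125 + 95.0


def code_value_to_ire(cv: float) -> float:
    """Express a 10-bit code value on a 0-100 scale where 64 = 0 and 940 = 100."""
    return (cv - 64.0) / (940.0 - 64.0) * 100.0


for label, r in [("0% black", 0.0), ("18% gray", 0.18), ("90% white", 0.90)]:
    print(f"{label}: {code_value_to_ire(slog3_code_value(r)):.1f} IRE")
```

This puts 18% gray at about 40.6 IRE and 90% white at about 60.9 IRE, consistent with the roughly 61 IRE figure above, while 0% black lands near 3.5 IRE.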

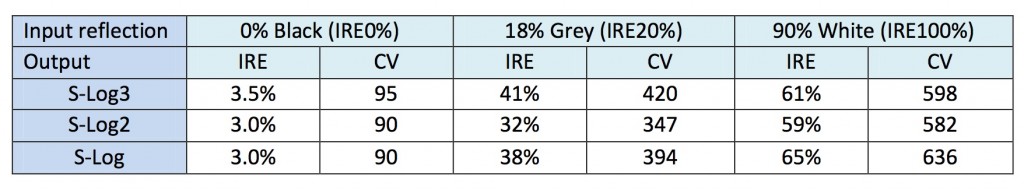

Comparison of white, black and 18% (middle) gray values in SLog, SLog2 and SLog3. I don’t know why this chart states that 90% white is 100 IRE; according to a formula given to me by Charles Poynton and published in several of his books, 100% reflectance falls at 100 IRE.

I still set exposure largely by eye using a calibrated viewfinder, but if I saw a diffuse white object on the set I could check my exposure using zebra 1 and see whether it was actually exposed as 90% white (75 IRE on that particular gamma curve). If not, I made a small aperture tweak to place that object at the 90% white value for whatever mode I was in, and the result was almost always a slight improvement.

Where this really comes in handy is when I have to expose an image using zebras while viewing log directly. The F5/F55/FS7 cameras can’t engage LUTs while shooting slow motion, and estimating exposure in log can be a bit difficult. SLog3’s middle gray point is 40 IRE, which makes mid-tones appear “right” when viewing log directly, but the rest of the image is still flat and gray. Bright values, in particular, look dull. Setting one of the zebras to trigger at 90% white may help to dial in a correct log exposure, avoiding the tendency to overexpose because the image doesn’t otherwise “pop.” 90% white falls around 60 IRE on a waveform monitor in SLog3, so while that looks light gray on a calibrated display it will appear as a bright and diffuse white object when graded or viewed later through one of Sony’s standard LUTs.

Knowing that a diffuse white object falls at 60 IRE in SLog3, and being able to place it there without using a waveform—which Sony doesn’t give us under certain circumstances—can instill a lot of confidence while looking at a flat log image that’s never meant to be viewed directly. Sadly, with some of Sony’s most powerful yet affordable cameras, this can’t be avoided.

This was a bit of a revelation for me. My brain has a much easier time recognizing diffuse white than a perfect 18% gray reference. Knowing that the brightest diffuse white objects tend to be, at most, 90% reflective, and knowing how to find that on an IRE scale, gives me one more tool to bail myself out of questionable situations when I have to work quickly, with cheap but powerful cameras, and I don’t have the time or the tools to do things the “right” way. Just find a diffuse white surface, expose it for middle gray, open up 2 1/4 stops, and set zebras accordingly.

Disclosure: I have worked as a paid consultant to both Sony and to DSC Labs, maker of high-end camera test charts.

Explanation: Yes, I know that the label “IRE” has been effectively retired, but it makes sense to use it in this case to prevent confusion between waveform percentages and percentages of reflectance.

Art Adams, Director of Photography
