Adrenaline. High drama. Zero failure tolerance. Beta software. Which one of these things is not like the others? This is the story of how even a flagship broadcast brand can take an honest risk once in a while, and a glimpse of how it all played out behind the scenes this past weekend, as relayed to me by one David Simons, co-originator of After Effects.

Now, software developers have tackled many exciting challenges since the era when coder Margaret Hamilton put humans on the moon and changed the notion of what humans could do, or be. After all, no one knew whether it could be done until the mission succeeded, and had it not, the failure would have played out on an international stage.

Which is more or less exactly the situation Simons and fellow O.G. After Effects computer scientist Dan Wilk found themselves in Sunday evening, in a broadcast equivalent of mission control, Fox Studios in Century City.

From left, Dan Wilk, Dave Simons, Allan Giacomelli and Adobe Sr. Strategic Development Manager Van Bedient celebrate a job well done.

(For the rest of the article, David Simons is referred to as DaveS, a nickname he has held since the early “There’s a 50% Chance My Name is Dave” days of After Effects, and Dan Wilk is Wilk, as he says he is typically called now that the team is full of guys named Dan.)

The moon-shot in question was an opportunity to improvise on live television, via animation, for an audience of several million viewers, running on software that is technically still pre-release. Moreover, the feat had to be completed twice, once each for the Eastern and Pacific time zones.

How does an opportunity like this even come about?

The initial invitation came to Adobe a few months earlier, in February. The plan involved a sequence for the final three minutes of episode 595 (entitled “Simprovised”) that would be acted and animated in real time. The idea was to feature skilled improviser Dan Castellaneta, as Homer Simpson, responding to questions from live callers (real ones, who dialed a toll-free number) as a series of other animations played around him. The beginning and ending lines would be scripted but would still be performed in real time. The production team of The Simpsons had contemplated trying such a feat before, but only once Character Animator was in preview release did they feel that going through with it was actually possible.

Technically, the challenge was unprecedented. The software wasn’t even designed for real-time rendering. In early tests, the team was not satisfied with the lip sync quality, so Adobe Principal Scientist Wil Li went to work overhauling the way phonemes (distinct units of sound in speech) were mapped to visemes (mouth shapes). “We dropped one of our mouths,” says DaveS, “added two more, and renamed one… ending up with around 11” (technically 15 total, adding in four corresponding exaggerated mouth-shape versions). Translation of phonemes into mouth shapes created in a Photoshop file is at the core of what Character Animator does.
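To make the phoneme-to-viseme idea concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the phoneme labels, the mouth-shape names, and the choice of which shapes have an exaggerated variant are hypothetical placeholders, not Character Animator's actual mouth set or API. The only thing it borrows from the account above is the shape of the problem: many phonemes collapse onto a small set of mouth layers, a few of which also come in an exaggerated version.

```python
# Hypothetical phoneme -> viseme table. The labels are illustrative only
# and are NOT the mouth set that Character Animator actually uses.
PHONEME_TO_VISEME = {
    "AA": "Ah", "AE": "Ah",
    "IY": "Ee", "EH": "Ee",
    "OW": "Oh", "AO": "Oh",
    "UW": "W-Oo", "W": "W-Oo",
    "M": "M", "B": "M", "P": "M",
    "F": "F", "V": "F",
    "L": "L",
    "S": "S", "Z": "S",
    "D": "D", "T": "D", "N": "D",
}

# Assumed subset of mouth shapes that also have an exaggerated variant.
EXAGGERATED = {"Ah", "Oh", "Ee", "W-Oo"}


def viseme_for(phoneme: str, loud: bool = False) -> str:
    """Return the name of the mouth-shape layer to show for one phoneme.

    Unknown phonemes (or silence) fall back to a neutral mouth.
    """
    viseme = PHONEME_TO_VISEME.get(phoneme.upper(), "Neutral")
    if loud and viseme in EXAGGERATED:
        return viseme + " (exaggerated)"
    return viseme


def visemes_for(phonemes, loud: bool = False):
    """Map a sequence of phonemes to a sequence of mouth-shape names."""
    return [viseme_for(p, loud) for p in phonemes]
```

In a rig like the one described, each returned name would correspond to one mouth layer in the Photoshop file, with only that layer visible on a given frame.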

What worked?

Although the software can use video to determine facial expressions and body positions, in this case, contrary to what has been reported elsewhere, Castellaneta was only on a microphone. No video of his facial or physical movements was captured. “They decided they didn’t want Dan to have to worry about any performance other than what he was saying, no camera on him.”

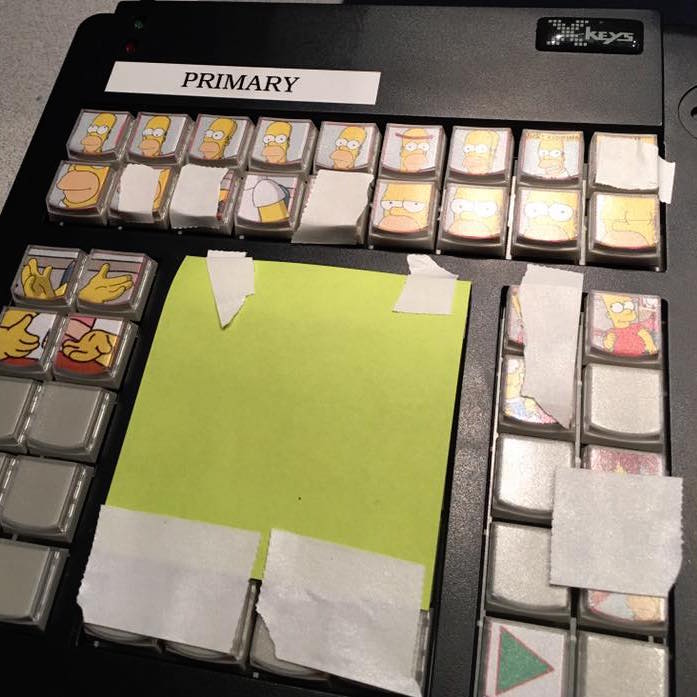

For Homer’s body, longtime Simpsons producer David Silverman operated a keyboard with preset actions from the audio mixing room. Allan Giacomelli from Fox sat next to him, operating a second system, ready to take over if the need arose. The keyboard, DaveS explains, was also set up to trigger camera views (a wide and a close-up, which had to be rendered with separate source due to details like line thickness) as well as everything else that happened in the scene: Homer answering the phone, a series of other characters moving across the frame in cameos, and the big finale in which the walls of the “bunker” collapse to reveal Marge in curlers, gently burping Maggie on the couch.

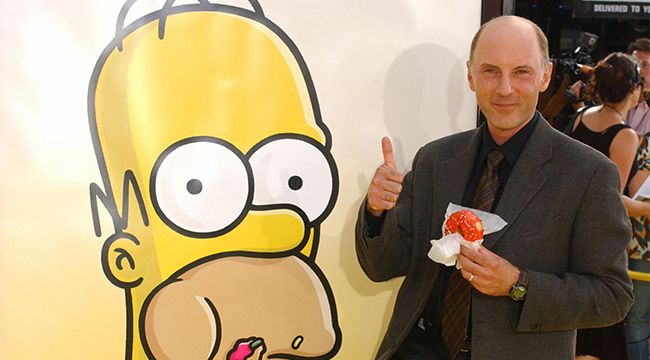

Of course, none of this would be possible without the extensive improv experience of the voice of Homer Simpson, Dan Castellaneta. (Photo: Getty Images)

One huge question was how to adapt the software to operate in real time. It was designed as a module for After Effects, which of course is render-only, and so real-time usage had not come into play other than for previews. “The first big challenge is that live lip-sync isn’t as good as when your timeline can see into the future,” DaveS elucidates. “This isn’t just smoothing or interpolation, the software knows the odds of what’s going to happen” by using the future information to derive the most likely mouth shape.

This, in turn, led directly to a second major improvement that was fast-tracked for Character Animator: “We made it so you can (use the future-looking lip sync) mode live, with just a half-second delay.” This is a special mode that won’t be in the upcoming preview release of Character Animator, but which is likely to be in the next version. “Anyone who wants access to it can contact us to get into our pre-release program.”
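The trade described here, a small fixed delay in exchange for knowledge of what comes next, can be sketched in a few lines of Python. This is not how Character Animator does it: DaveS is explicit that the real feature is more than smoothing, and the majority vote below is a deliberately simple stand-in for whatever likelihood model the software uses. The class name, the helper, and the 24 fps frame rate are all assumptions for illustration.

```python
from collections import Counter, deque


def frames_for_delay(delay_seconds: float, fps: float) -> int:
    """Number of future frames that fit into the allowed delay."""
    return round(delay_seconds * fps)


class LookaheadLipSync:
    """Decide each frame's mouth shape only after `lookahead` later
    frames have arrived, so the decision can use 'future' information.

    Hypothetical sketch: a majority vote stands in for a real model.
    """

    def __init__(self, lookahead: int):
        self.lookahead = lookahead
        # Holds the frame being decided plus the frames that follow it.
        self.window = deque(maxlen=lookahead + 1)

    def push(self, raw_viseme: str):
        """Feed the newest raw per-frame guess.

        Returns the decision for the frame `lookahead` frames ago,
        or None while the buffer is still filling.
        """
        self.window.append(raw_viseme)
        if len(self.window) <= self.lookahead:
            return None
        counts = Counter(self.window)
        best = max(counts.values())
        # On a tie, prefer the label that appears earliest in the window.
        for viseme in self.window:
            if counts[viseme] == best:
                return viseme


# Usage: a stray one-frame "M" inside a run of "Ah" is voted away.
sync = LookaheadLipSync(lookahead=2)
decisions = [sync.push(v) for v in ["Ah", "M", "Ah", "Ah", "Oh", "Oh", "Oh"]]
```

At an assumed 24 frames per second, `frames_for_delay(0.5, 24)` gives a window of 12 future frames for the half-second delay mentioned above.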

The render systems for show day were the fastest available Mac Pros, two of them for fail-safe redundancy. Since Castellaneta had no visual monitor, a short delay was not a concern; it would be added to the 7-second delay needed to meet FCC requirements for a live event (to allow for bleeping of foul language; the closest thing to this was the word “Drumpf” in the second airing, a subject widely anticipated beforehand and left in, unedited).

What could possibly go wrong?

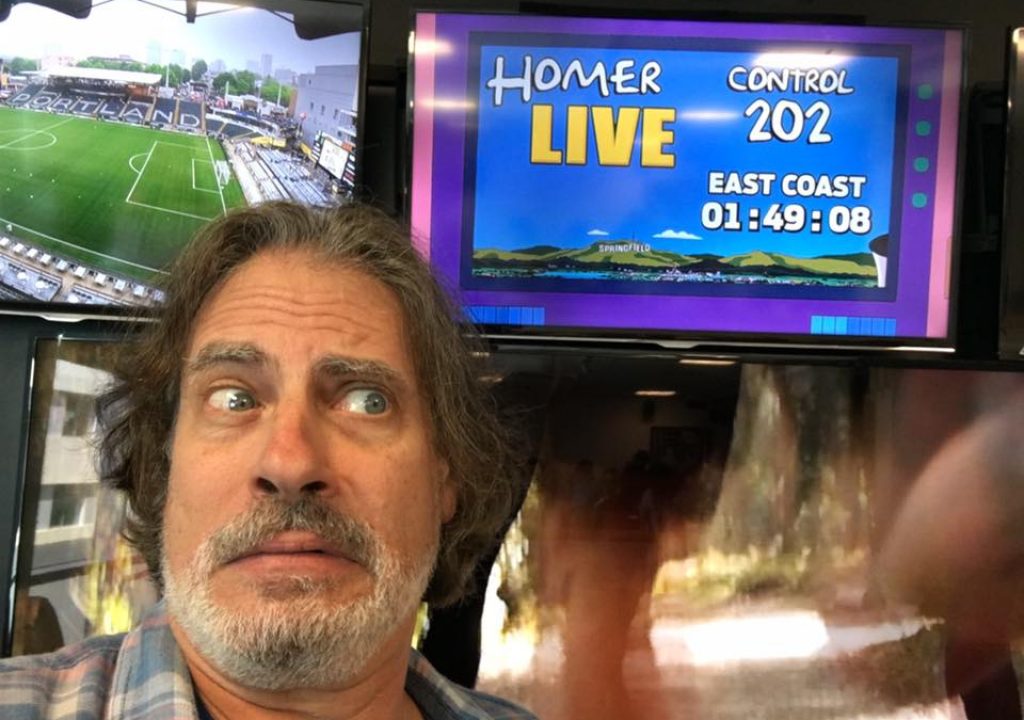

Simpsons veteran David Silverman prepares for everything to go perfectly according to plan as showtime approaches. (Photo: David Silverman)

There were just three rehearsals with Castellaneta at Fox Sports in the two weeks prior to the show. A rehearsal with employees lobbing questions was recorded, and an international version was recorded earlier last week that was ready to be cut to as a backup.

The main concern was that the puppet being animated was “the largest we had in terms of memory by far.” The 478 MB .psd file’s 2659 layers, delivered last Friday morning, included not only Homer’s rig but the full animations of all of the supporting characters as well as of the scene itself. Optimizations had been done to make this one enormous “puppet” created by the Simpsons artists operate properly; it could have been rendered just as quickly if created as a set of layers, but “they were just putting everything into the Homer puppet, one enormous Photoshop document. It was working despite the possibility that it was just too big.”

The principal line of defense against surprises was to have two Macs set up identically, each with its own special keyboard to trigger the animations, with audio fed to both. “If one crashed, we could switch to the other Mac. If he was in the middle of a special movement, it might glitch” but the show would go on.

In the trenches: the X-Keys keypad with customized Homer-keys at the ready (Photo: David Silverman).

It wasn’t until 4:00 pm on Sunday, one hour prior to airtime, that an issue emerged with the backup machine: “the gags were all running slowly. The main machine was running fine, but the backup one was clearly running seconds too late. The Macs were identical, so we were thinking, how do we know the main one isn’t gonna suddenly bog down?” There was no way DaveS and Wilk could know for sure whether some previously unseen glitch would also emerge on the main system and prevent subsequent animations from being triggered, including the wall collapse that ends the episode.

“We had to go to air without knowing what was wrong.”

The backup machine wasn’t required for the east coast broadcast, which came off without a major hitch (if you look for excerpts on YouTube, you may see stuttering motion which has been confirmed as the result of faulty capture, and did not appear in the actual transmission).

That left the other shoe to drop in 3 hours. “We got some pizza, had a drink, and then went to work.” After Wilk kicked around various unlikely theories with DaveS and Allan, he had a sudden flash of insight: “it had to be App Nap.” This feature, introduced in OS X Mavericks, causes inactive applications to go into a paused state, helping to reduce power usage. As it turns out, App Nap had been disabling the software that ran in the background for the custom keyboard. “We used the terminal command that forces that machine to kill the feature.” Problem solved!

Except… the question remained what to do about the main machine, which had performed fine, with App Nap actively running, in the first performance. “We decided if it ain’t broke don’t fix it.” The two developers were also able to confirm that the main machine lagged too if it was left idling, which, naturally, it hadn’t been.

And so, the west coast broadcast came off without a hitch.

How will this change the way an animator works, or even what it’s possible for animation to do?

An odd parallel: After Effects & the Simpsons (as a Fox series) are the same age, give or take 3 or 4 years; more to the point, each has demonstrated staying power that has extended far beyond the odds (or the competition). While the cartoon was a juggernaut nearly from its inception in 1989, the software that debuted in 1993 was anything but; yet just like the show, it soon found its passionate fans, myself very much included in both cases.

Character Animator is an application in its own right, both in terms of its power and the learning curve involved to rig a character and make full use of it, and it appears destined to become that rarest of entities, a new desktop video application developed entirely within Adobe.

He couldn’t tell me about other series or studios that are interested in Character Animator yet, but when I asked DaveS what type of show he thought was a good fit for the technology, he named Archer, a dialog-heavy series with a clean straightforward artistic look.

“Straightforward” doesn’t mean the character needs to literally face straight toward the camera, but each new angle requires a separate puppet rig unless a production is very clever about warping a flat-shaded character. While the Simpsons has been and will continue to be animated by hand in Korea, it seems it’s only a matter of time before another major show adopts Character Animator, whether or not animation for live television becomes a bona fide trend.

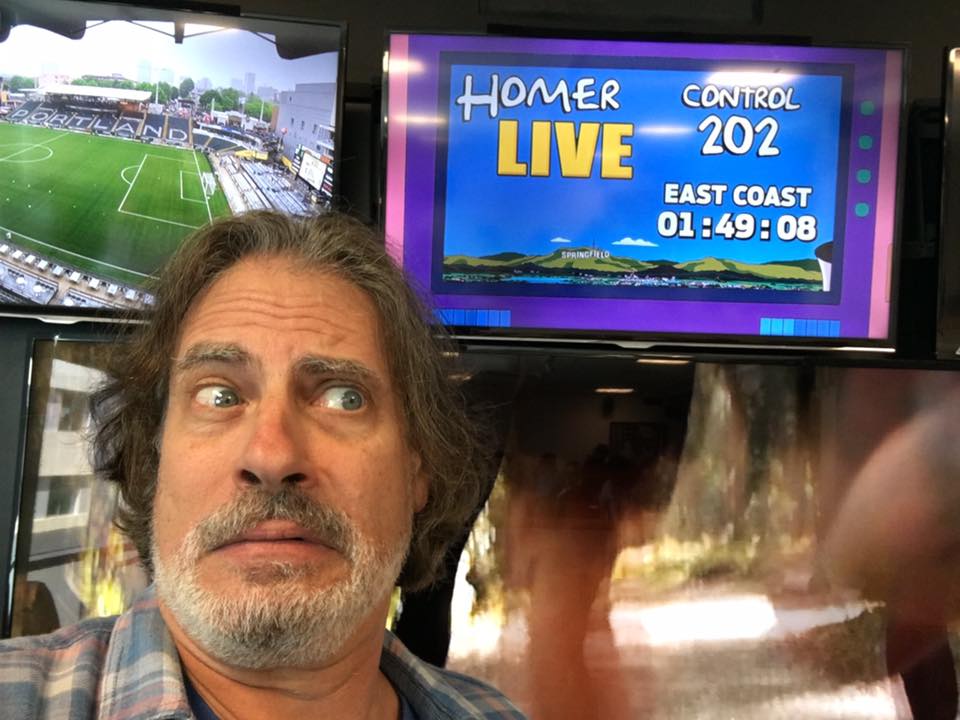

Homer stands by.
