Yes, it’s true: your camera has no color gamut. Don’t believe me? Good, ’cause I’m going to try to lay it out for you. I’m not sure I’ve got it all down yet, but let’s see how far I get.

Ready? Brace yourself.

SPECTRAL SENSITIVITIES

A camera is a measuring device. That’s not sexy, but it’s true. It’s basically a piece of lab equipment that we use to capture images. It doesn’t have a native color gamut. “Color gamut” doesn’t even apply, because the only things that can have color gamuts are things that can display color.

Projectors have color gamuts. Monitors have color gamuts. Printers have color gamuts. Cameras do not have color gamuts.

All a sensor does is measure how many photons strike its surface. Sure, that surface is covered by thousands of tiny filters that attempt to limit the wavelengths of light that reach any given spot, but it’s still just a measuring device. At the sensor level, color doesn’t exist.

COLOR MODEL

A color model is a way of representing colors in numerical form. RGB is a color model. HSL and HSV are color models. Y’CbCr is a color model, although it is often referred to as a color space. Color models encode hue values in different ways, depending on what is desired, and they each have their strengths and weaknesses depending on their encoding scheme.

In RGB, 125,64,23 is a color. (We don’t know what color yet. That comes later.)

Color models don’t come into play until the number of photons striking each photo site on the sensor has been converted from a voltage to a number.

A color model is simply a way of assigning numbers to color values, but those numbers don’t mean anything… yet.
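To make that concrete, here is a small sketch using Python's standard `colorsys` module (my choice for illustration; nothing in this article depends on it). It writes the same 125,64,23 triplet in RGB, HSV and HLS, which is Python's name and ordering for HSL. The numbers change from model to model, but none of them tells us what the color actually looks like.

```python
import colorsys

# The RGB triplet from the text, as 8-bit code values.
rgb_8bit = (125, 64, 23)

# colorsys works on floats in the 0..1 range.
r, g, b = (c / 255 for c in rgb_8bit)

# Same triplet, re-expressed in two other color models.
h, s, v = colorsys.rgb_to_hsv(r, g, b)
hls_h, hls_l, hls_s = colorsys.rgb_to_hls(r, g, b)

print(f"RGB: {rgb_8bit}")
print(f"HSV: hue={h * 360:.1f} deg, saturation={s:.3f}, value={v:.3f}")
print(f"HLS: hue={hls_h * 360:.1f} deg, lightness={hls_l:.3f}, saturation={hls_s:.3f}")
```

All three lines describe the same thing. Without a color space behind them, they are still just numbers.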

COLOR SPACE

A color space is a color model with some additional attributes that relate color to something. In an absolute color space (what we’re interested in here), hue values encoded in a color model are related directly to human vision. Typically a color space will have a set white point: Rec 709, for example, uses D65 (roughly 6500K) as its version of pure white, while P3, the digital cinema standard, uses a white point of roughly 6300K (often informally called D63), which is slightly warmer and greener.

When we white balance a camera, we are attempting to define what white is for the purposes of capturing neutral color in a scene. That measurement reflects only what the camera has to do in order to make your white reference appear white on an output device. If I white balance a camera and it tells me that the white balance is 4300K, all that means is that the camera has had to make some adjustments in order to make the white reference in the scene look white later on a monitor or screen. That white will always appear to be D65 in color on an HD monitor and D63 in a P3 theater.
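As a rough illustration of the kind of adjustment involved, here is a deliberately simplified sketch. It scales the red and blue channels so that a white reference measures equal in all three channels. The raw values are made up and the function names are mine, and real cameras do considerably more than this, so treat it as a cartoon of the idea rather than anyone's actual pipeline.

```python
def white_balance_gains(white_raw):
    """Per-channel gains that make a white reference neutral, normalized to green."""
    r, g, b = white_raw
    return (g / r, 1.0, g / b)


def apply_gains(rgb, gains):
    """Multiply each channel by its gain."""
    return tuple(c * k for c, k in zip(rgb, gains))


# Hypothetical raw reading of a white card under warm light:
# plenty of red, not much blue.
white_card_raw = (0.80, 0.60, 0.30)

gains = white_balance_gains(white_card_raw)
balanced = apply_gains(white_card_raw, gains)

print("gains:", gains)
print("white card after balancing:", balanced)
```

After the gains are applied, the card reads the same in red, green and blue. What that neutral value looks like is still up to the display.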

A color space will also employ scale information. A color model is simply a way to numerically define a color, but without some sort of scale, and a way of relating that color designation to human vision, we can’t reliably know what it is or reproduce it.

Remember the RGB color I “encoded” above? Here’s what 125,64,23 looks like:

We can know this because it is likely being displayed on your monitor in sRGB. sRGB has a defined white point, scale, and primary colors, so it’s possible to (somewhat accurately) reproduce this color across many displays because we know what 125,64,23 relates to. On their own, those three numbers mean nothing.

An absolute color space relates a color model directly to human vision.
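Here is a sketch of what "relating numbers to human vision" looks like in practice, assuming the triplet really is sRGB. It undoes the sRGB transfer curve and then applies the standard sRGB-to-CIE XYZ matrix (D65 white, coefficients rounded to four places). The function names are my own.

```python
def srgb_to_linear(code_value):
    """Undo the sRGB transfer curve for one 8-bit code value."""
    c = code_value / 255.0
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


# Linear sRGB -> CIE XYZ (D65), coefficients rounded to four places.
SRGB_TO_XYZ = (
    (0.4124, 0.3576, 0.1805),
    (0.2126, 0.7152, 0.0722),
    (0.0193, 0.1192, 0.9505),
)


def srgb_to_xyz(rgb_8bit):
    linear = [srgb_to_linear(c) for c in rgb_8bit]
    return tuple(sum(m * c for m, c in zip(row, linear)) for row in SRGB_TO_XYZ)


def xyz_to_xy(xyz):
    """Project XYZ onto the 2D chromaticity plane used in gamut diagrams."""
    total = sum(xyz)
    if total == 0:
        raise ValueError("black has no defined chromaticity")
    return (xyz[0] / total, xyz[1] / total)


xyz = srgb_to_xyz((125, 64, 23))
print("XYZ:", tuple(round(v, 4) for v in xyz))
print("xy: ", tuple(round(v, 4) for v in xyz_to_xy(xyz)))
```

Feed the same three numbers through a different color space's curve and matrix and you get a different XYZ, which is the whole point: the color space is what gives the numbers meaning.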

COLOR GAMUT DIAGRAMS, AND HOW THEY LIE

A “gamut” is a range. When we talk about color gamuts, we’re really talking about the range of colors that a display can reproduce within a color space. The limits of that range are defined by “color primaries,” which are the most saturated hues that a device can display. The trick, though, is that these primaries are often plotted at a middle luminance value, so they don’t show us the entire gamut: the colors a display can reproduce change with luminance.

One of the big differences between a television and a film print, for example, is that an OLED television can display a very bright, pure blue (0,0,255). That’s because its surface is covered with tiny light emitters, and turning on the blue emitters only results in our seeing a very bright, pure blue. Film, on the other hand, can only filter light. We start with white light and carve away everything that isn’t blue. By the time we’re done, what’s left is a pure but dark blue. It simply isn’t possible to create a bright-yet-pure blue because of film’s subtractive nature.

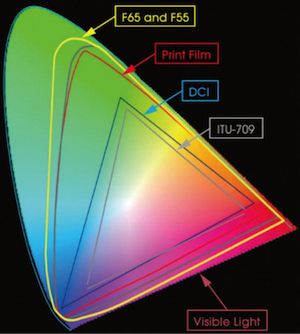

You wouldn’t be able to tell this from looking at a film color gamut in relation to a video gamut:

2D gamut representations don’t tell you the full story, as gamuts are 3D.

Gamut representations also don’t necessarily show you what the camera can do. They are often a mathematical representation of how colors are coded for storage, but that’s it. They’re a handy working container. Sometimes they are built bigger than they need to be, because that gives camera engineers more space in which to store saturated hues.

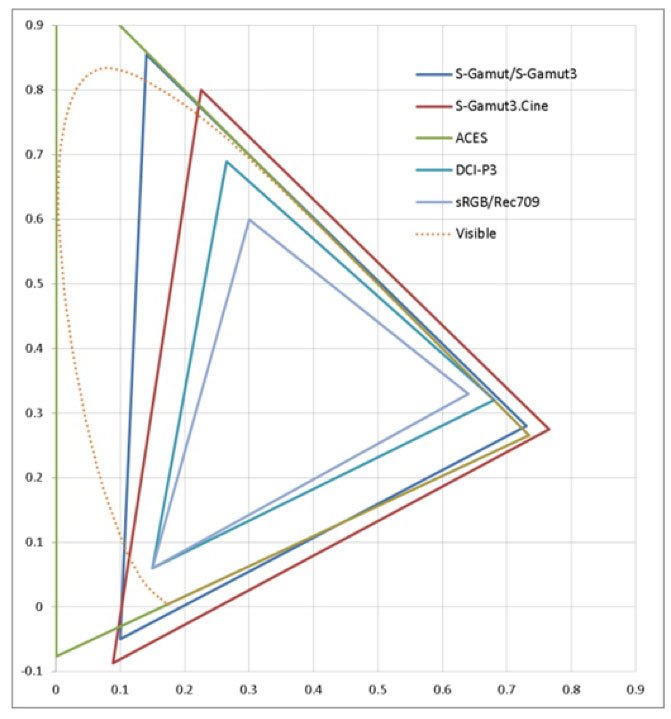

In the diagram above, SGamut, SGamut3 and SGamut3.cine represent the same range of camera spectral sensitivities. SGamut came out first, and there was a bit of a backlash. Many colorists don’t like grading through LUTs as they can cause color errors, but they also saw unexpected hue shifts when working with lift, gamma and gain in SGamut. This was something new.

As you can see above, the SGamut/SGamut3 green primary was shifted to the left in relation to the green primaries in Rec 709 and P3. It is possible to take footage encoded in the P3 gamut and desaturate it to fit into Rec 709 without too much trouble, because their green primaries fall in line with white (in the center of the triangle) but SGamut/SGamut3 doesn’t work that way.

Sony’s solution was to create SGamut3.cine, whose green primary mathematically aligned with those of P3 and Rec 709. Colorists could now use lift/gamma/gain to create predictable results in P3 and Rec 709. Does it matter that SGamut3.cine’s green primary is shifted outside of the boundaries of human vision? Not really. The gamut of encoded colors is smaller to accommodate this shift, not because anything about the camera has changed but because of how the color values have to be encoded to make things easier.

Take a look at how the green points of the color gamut triangles line up between SGamut3.cine, P3 and Rec 709/sRGB. When primaries line up like that, one can reduce the larger gamuts to fit within the smaller ones simply by desaturating the color. (This isn’t a complete solution, but it’s possible.)

2D gamut representations don’t tell you what the camera sees. They show you an abbreviated version of what colors the camera engineers can store and make available.

In a similar way, ACES (in its various incarnations) is only meant as a storage medium. It’s built to encode colors right to the edge of human vision. At the bottom left of the ACES triangle (above), the blue primary drifts into negative number territory but that’s only to ensure that it can encapsulate human vision at the top left. See how the spectral locus bulges on the left side? The human visual color gamut isn’t a perfect triangle, as you can see by the dotted line, but when we make containers in which to store that color range it’s easiest to do so mathematically within a triangle. It’s much harder to come up with a model that perfectly emulates the curves in the human spectral locus, and the locus only represents average human vision anyway. By defining our three color primaries—or “anchor points”—outside of the range of human vision, we can code anything that falls within it. That doesn’t mean we’re capturing and recording invisible colors, but it does mean we can encode everything that falls within human visual limits—if a camera can see it.
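The "container" idea can be sketched as a point-in-triangle test on the 2D chromaticity diagram. The primaries below are the published xy coordinates for ACES (the AP0 set) and Rec 709. The 520 nm spectral locus point is an approximate value I'm supplying for illustration, and the helper names are my own.

```python
def edge(a, b, p):
    """Signed area test: which side of the line a->b the point p falls on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def inside_triangle(p, primaries):
    """True if chromaticity p lies within the triangle formed by three primaries."""
    r, g, b = primaries
    signs = (edge(r, g, p), edge(g, b, p), edge(b, r, p))
    return all(s >= 0 for s in signs) or all(s <= 0 for s in signs)


# CIE 1931 xy chromaticities of the red, green and blue primaries.
ACES_AP0 = ((0.7347, 0.2653), (0.0000, 1.0000), (0.0001, -0.0770))
REC_709 = ((0.640, 0.330), (0.300, 0.600), (0.150, 0.060))

# Approximate spectral locus point for pure 520 nm green (illustrative value).
GREEN_520NM = (0.0743, 0.8338)
D65_WHITE = (0.3127, 0.3290)

for name, point in (("520 nm green", GREEN_520NM), ("D65 white", D65_WHITE)):
    print(f"{name}: in ACES AP0 = {inside_triangle(point, ACES_AP0)}, "
          f"in Rec 709 = {inside_triangle(point, REC_709)}")
```

Note the negative y value on the ACES blue primary: that's the "negative number territory" mentioned above. The test only says whether a chromaticity can be encoded, not whether any camera can see it or any display can show it.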

That extra room also helps in color grading. If the gamuts above only show what colors it is possible to encode, then they need to leave room in which to push those colors around. Also, hue shifts are apparently unavoidable when grading RGB footage, but the larger the color space and the finer its scale, the smaller those errors will appear.

There’s an amazing discussion of this topic here.

WHAT DO CAMERA COLOR GAMUTS MEAN?

To a DP, probably nothing. We can infer things from the storage space that a manufacturer designs around their camera’s sensor, but these diagrams don’t tell us anything real about the limits of what a camera can reproduce. And, indeed, we can’t see what a camera will do until its footage is displayed, because cameras don’t have color gamuts. Displays do.

In single sensor cameras, color is math. How “good” or “bad” a camera looks has everything to do with how camera engineers can add, subtract and bend the raw data that comes off the sensor. It’s a combination of artistry, technology, and making do. It’s also hugely complicated.

Until recently, I knew that color gamut charts were of limited usefulness but I still thought they presented information about a camera’s capabilities. Now I know that they only represent a small portion of the working space that a camera manufacturer thinks they need in order to capture the best color their sensor can offer. In other words, I know more than I did a short while ago, but less than I thought I did. A lot less.

In the end, there’s no replacement for a trained pair of eyes, a calibrated display, and a keen awareness of color to figure out what a camera can do for you.

I’ll be teaching two Arri Academy classes in mid-March, 2018 in Brooklyn and Chicago. If you’d like to learn the ins-and-outs of Arri cameras, accessories, color processing, LUTs, recording formats, etc., this is worth checking out.
