My last article resulted in some new input on color gamuts. Here’s what I think I know now.

Before I start: if you haven’t read my previous article, it might be worth taking a look, as I’ll be expanding on those ideas without completely recapping them.

CAMERA RGB: THE COLOR GAMUT WITH PERSONALITY

There are a lot of different perspectives on color science. The details are hard to nail down, as the information is fairly spread out and there are different philosophies of how it works. For example, I’ve been told by some color scientists that cameras don’t really see a color gamut; they see “spectral sensitivities” that can then be bent into a color gamut. After my last article (linked above), a color scientist got in touch and told me that they believe cameras do have native color gamuts, but that those gamuts aren’t very well defined.

I’ve whipped up some diagrams that I very much hope will be helpful.

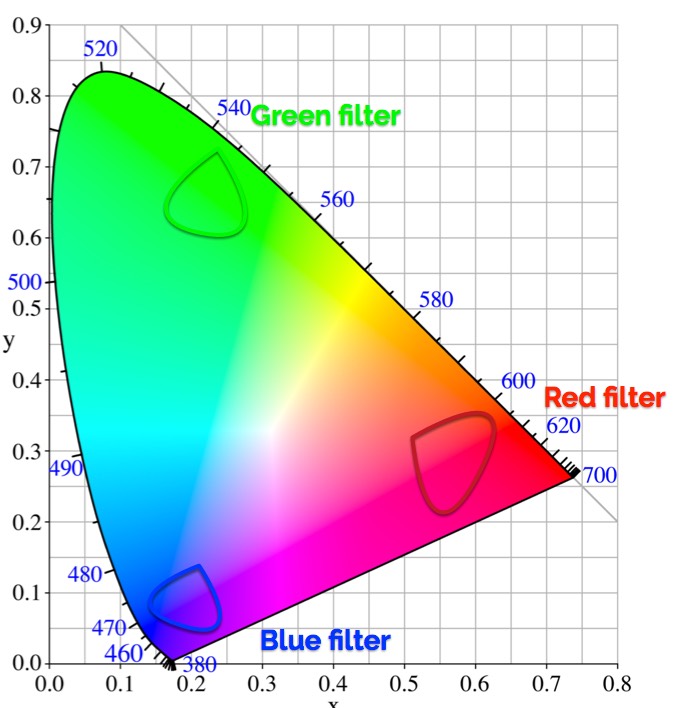

This is the CIE 1931 graph representing the gamut of human vision. It’s not a great representation because it’s a flat chart that shows hue and saturation but leaves out brightness entirely, but we’ll use it for convenience. Generally, color gamuts are represented as a triangular subset of these colors, although please notice that the gamut of human vision is not itself a triangle.
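To make the relationship between this chart and brightness a little more concrete, here is a minimal sketch of how a color lands on it. It assumes the standard projection from CIE XYZ values to xy chromaticity coordinates. The function name `xyz_to_xy` is my own, and the D65 white values are the commonly published ones.

```python
def xyz_to_xy(X, Y, Z):
    """Project CIE XYZ tristimulus values onto the xy chromaticity chart."""
    total = X + Y + Z
    if total == 0:
        raise ValueError("pure black has no defined chromaticity")
    return X / total, Y / total

# Commonly published XYZ values for D65 white, with Y normalized to 1.0.
d65 = (0.95047, 1.0, 1.08883)
x, y = xyz_to_xy(*d65)

# The same white at 18% of the brightness lands on the same spot,
# because the projection divides the overall level back out.
x_dim, y_dim = xyz_to_xy(*(0.18 * c for c in d65))

print(round(x, 4), round(y, 4))
print(round(x_dim, 4), round(y_dim, 4))
```

Both lines print the same pair of coordinates, which is why a flat chart like this can only ever tell part of the story.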

This is my semi-competent attempt to show how camera filters work. It is by no means terribly accurate, but hopefully this will illustrate the general idea.

No filter is perfect, and perfection isn’t desired: without overlap, a camera wouldn’t be able to reproduce any hue other than pure red, blue or green. The trick is to have enough overlap that a color scientist can pull out secondary hues, but not so much that the channels can never be completely separated, because then colors will appear dull.

For example, in order to see a pure saturated red, a real world red would have to fall within the red filter’s area but outside of the blue and green areas.

Also, the fake camera shown in this (crappy) diagram would have a hard time seeing purple, because there’s very little area where red and blue overlap that doesn’t contain some green as well. Too much green results in a low saturation purple.

Color is made by adding and subtracting color channels from each other in a way that creates someone’s idea of accurate or attractive color. Not enough overlap means no secondary or tertiary colors. Too much overlap means dull, unsaturated color. And if you’ve ever wondered what a camera’s color matrix is, well, it’s the mathematical function that does the adding and subtracting to bend colors into a defined color gamut.
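Here is a rough sketch of what that adding and subtracting looks like in practice. The matrix values are invented for illustration and do not come from any real camera; the only deliberate property is that each row sums to 1.0, so neutral grays stay neutral. The names `HYPOTHETICAL_MATRIX` and `apply_matrix` are my own.

```python
# Hypothetical 3x3 color matrix, made up for this example.
# Each row sums to 1.0 so that equal R, G and B in gives equal R, G and B out.
HYPOTHETICAL_MATRIX = [
    [ 1.60, -0.45, -0.15],  # output red   = mostly red, minus some green and blue
    [-0.25,  1.50, -0.25],  # output green = mostly green, minus some red and blue
    [-0.05, -0.55,  1.60],  # output blue  = mostly blue, minus some red and green
]

def apply_matrix(matrix, rgb):
    """Multiply a 3x3 matrix by an (R, G, B) triple."""
    return [sum(m * c for m, c in zip(row, rgb)) for row in matrix]

# A neutral gray passes through unchanged.
gray = apply_matrix(HYPOTHETICAL_MATRIX, [0.5, 0.5, 0.5])

# A dull red (lots of green and blue contamination from filter overlap)
# comes out with more red and less green and blue, so it is more saturated.
dull_red = apply_matrix(HYPOTHETICAL_MATRIX, [0.60, 0.30, 0.25])

print([round(v, 4) for v in gray])
print([round(v, 4) for v in dull_red])
```

The negative numbers are the "subtracting" part: they remove the contamination that overlapping filters let in. Push them too far and noise gets amplified along with the color, which is one reason the filter overlap can't simply be fixed with math.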

Because camera filters aren’t pure, I’ve often heard camera color primaries described not as points but as “clouds.” A camera’s red, green or blue primary is a region of color, not a specific hue. That’s because a specific hue would require such a pure filter that it wouldn’t overlap at all with any of the other color filters, and we’d have a camera that could see very pure reds, blues and greens—and nothing else. And this would be problematic as the real world doesn’t really contain pure reds, blues and greens.

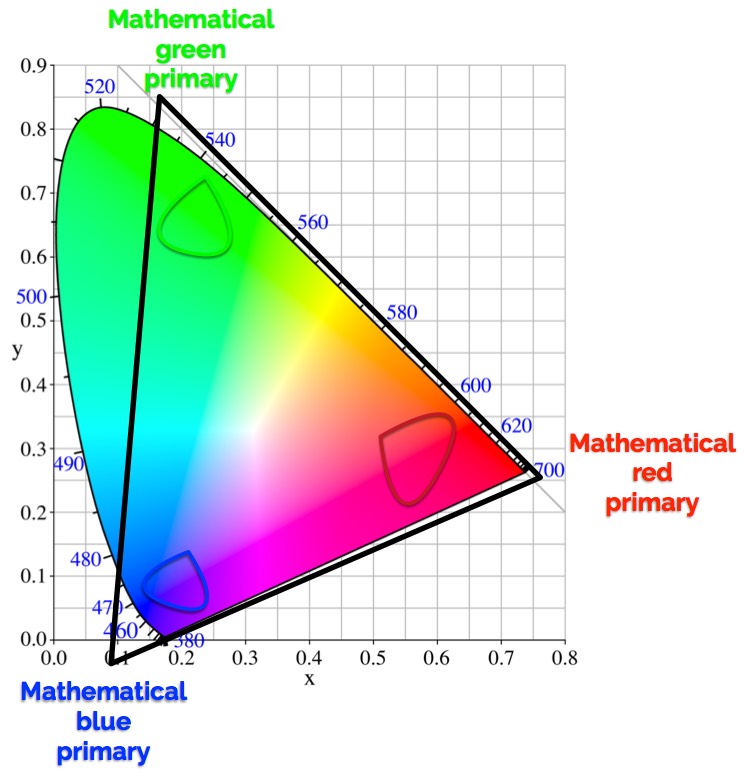

A color space, though, has to have defined primary colors. What a color scientist does is define a color space that will encompass those “clouds” and give them some room to work their color magic. That gamut is defined mathematically, in a computer.

Here, our fake camera’s primary “clouds” are encapsulated by a mathematically-defined color gamut that should contain all of the hues that a camera can reasonably reproduce. Sometimes the camera can see colors that fall outside of this color gamut, or it can’t see all the hues that fall within it. That’s fine: this is just a model in which a camera’s colors will be crafted depending on how much they stimulate the camera’s red, green and blue channels.
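Since a gamut drawn on this chart is just a triangle between three primaries, checking whether a hue falls inside it is simple geometry. The sketch below is my own illustration, not anyone's production code. My fake camera has no real numbers, so I've used the published Rec 709 primaries as a stand-in triangle; the function names are made up.

```python
# Published Rec 709 primaries as (x, y) chromaticities: red, green, blue.
REC709 = ((0.64, 0.33), (0.30, 0.60), (0.15, 0.06))

def _cross(o, a, b):
    """Z component of the cross product of vectors o->a and o->b."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

def in_gamut(xy, primaries):
    """Return True if chromaticity xy lies inside (or on) the triangle."""
    r, g, b = primaries
    d1 = _cross(r, g, xy)
    d2 = _cross(g, b, xy)
    d3 = _cross(b, r, xy)
    has_neg = d1 < 0 or d2 < 0 or d3 < 0
    has_pos = d1 > 0 or d2 > 0 or d3 > 0
    # Inside means the point is on the same side of all three edges.
    return not (has_neg and has_pos)

d65_white = (0.3127, 0.3290)
rec2020_green = (0.170, 0.797)  # the Rec 2020 green primary

print(in_gamut(d65_white, REC709))      # True
print(in_gamut(rec2020_green, REC709))  # False
```

A hue that fails this test isn't invisible to the camera. It just can't be described inside that particular triangle without being clipped or remapped.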

Sometimes this color gamut will be made larger than it needs to be in order to more accurately reproduce hues that otherwise might be compromised. For example:

This color gamut uses red, blue and green primaries that don’t exist in the real world. This bumps out the left edge of the triangle to include more saturated hues between blue and green.

Color spaces are color models with some additional aspects to them: a reference white, some sort of gamma or tone mapping curve, and a numerical way of relating colors to human vision in a predictable way. Often those numbers extend beyond what humans can see. That doesn’t mean that those colors exist, but only that a color scientist can expand their working color gamut to try to maximize the number of hues that they can reproduce using a camera’s unique sensor and color filter array. This is commonly known as the “camera RGB” color gamut, and it is different for every manufacturer—and sometimes different between different camera models from the same manufacturer.

The placement of those “virtual” primary colors has a large impact on the look of a camera, because it affects everything about how colors will relate to each other and to display color gamuts.

There are trade-offs. The larger the color gamut, the greater the number of code values necessary to designate all possible hues. If the color gamut is too big, then the number of code values that actually get used might be fairly small. For example, if a color scientist pushes the red, green and blue virtual primaries WAY out, they can potentially reproduce a wider range of hues while only using, say, 50% of the available code values. That could mean a lot of wasted camera and post computer processing power, as well as steps between hues that are too coarse to prevent banding across gradients.

It’s a delicate balance between making the working color gamut big enough to reproduce most of the possible hues while not making it so big that the numbers involved become wasteful and inefficient. For example, if your color gamut has (arbitrary number) 16,000 possible code values for each color channel, but there’s no real world color that will result in a color more saturated than code value 8,000 in all three channels, then that extra gamut needs to pay off spectacularly because the cost of making the camera will be higher and image file sizes may be larger.
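To put a number on that waste, here is a quick back-of-the-envelope sketch using the same arbitrary figures. It assumes code values are spread linearly, which real cameras generally don't do, so treat it as an illustration of the idea rather than a measurement. The function name `wasted_bits` is my own.

```python
import math

def wasted_bits(total_codes, highest_used_code):
    """Bits per channel spent on code values that never get used."""
    return math.log2(total_codes / highest_used_code)

total_codes = 16000       # arbitrary number from the example above
highest_used_code = 8000  # nothing in the real world pushes a channel past this

fraction_used = highest_used_code / total_codes
bits_lost = wasted_bits(total_codes, highest_used_code)

print(fraction_used)  # 0.5
print(bits_lost)      # 1.0
```

Using half the code values costs one bit per channel, so in this simplified picture the oversized gamut would need one extra bit per channel just to match the gradations of a tighter one.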

It’s important to understand, though, that every camera has its own unique color gamut that a color scientist uses as their color palette. From there those hues can be mapped, twisted, tweaked and shoved into a variety of other color gamuts (Rec 709, Rec 2020/2100, and DCI-P3, for example).

None of this is revealed, however, in a simple CIE chart purporting to depict a camera’s color gamut.

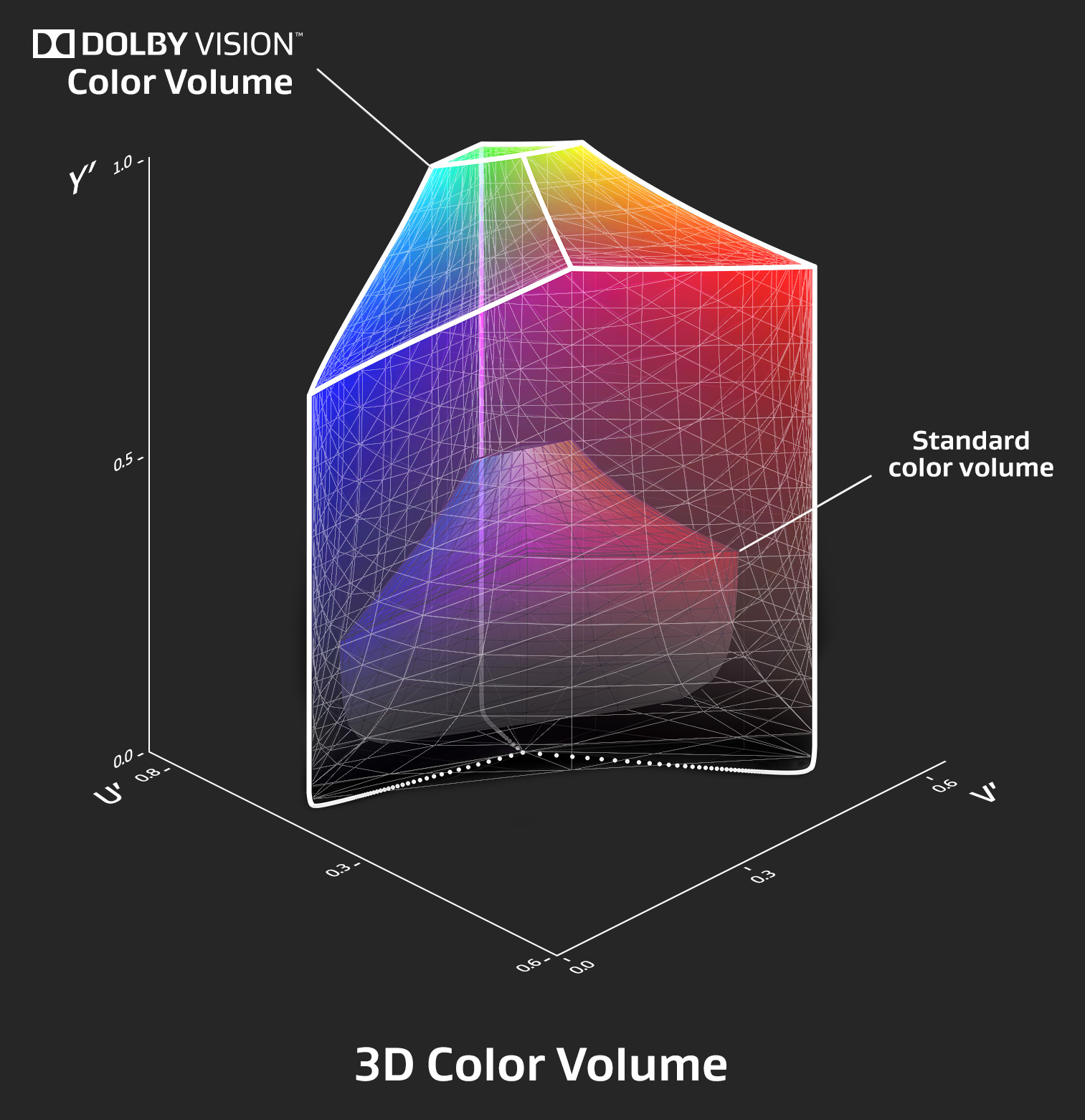

This is an illustration I used in an article I wrote about HDR last year. It depicts an SDR display vs. an HDR display, but even so it gives you an idea of what a color gamut really looks like. It’s a three-dimensional shape that extends from blackest black to whitest white. A simple pie slice is not going to tell you what a camera or display can reproduce.

This should also show you how complex it is to build a color gamut that encompasses the full range of a camera’s color possibilities.

A NOTE ABOUT HDR

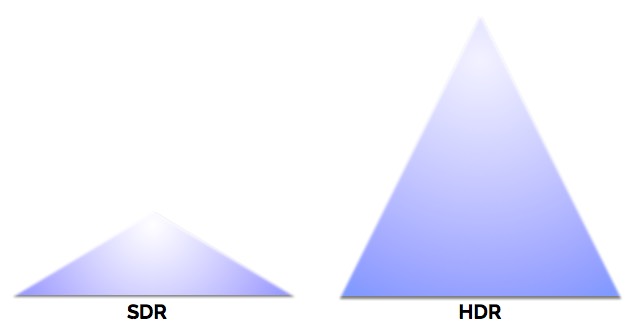

One color scientist I spoke to made an interesting point about HDR. He said that most of the extended color gamut we see in HDR has more to do with dynamic range than color gamut. For example:

Here’s a crude illustration showing the possible blue hues, from most saturated to white clip, in SDR and HDR. SDR has a dynamic range of only about 2.4 stops from middle gray to white clip, so there’s not a lot of room for color to breathe and highlights are heavily compressed. HDR, on the other hand, has up to 5ish stops from middle gray to white clip (for a 1,000 nit display), so there’s lots of room for colors to breathe.
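Those stop counts are just base-2 logarithms of a ratio, so they are easy to sanity-check. In the sketch below, the SDR case treats middle gray as 18% of white clip. For HDR I've assumed middle gray sits at about 26 nits, a commonly cited reference level that is my assumption here and not something the color scientist gave me. The function name is made up.

```python
import math

def stops_above_mid_gray(mid_gray, white_clip):
    """Number of stops (doublings of light) from middle gray up to white clip."""
    return math.log2(white_clip / mid_gray)

# SDR: middle gray at 18% of white clip.
sdr_stops = stops_above_mid_gray(0.18, 1.0)

# HDR: ASSUMED middle gray of 26 nits on a 1,000 nit display.
hdr_stops = stops_above_mid_gray(26.0, 1000.0)

print(round(sdr_stops, 2))
print(round(hdr_stops, 2))
```

Move the assumed middle gray up or down and the HDR figure shifts with it, which is why "5ish" is about as precise as it makes sense to be.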

A hue can be both very saturated and very bright, which effectively increases the color gamut of any HDR display. It is possible for an HDR display to be limited to Rec 709 color and it would look very similar to a Rec 2020 display simply due to the increased dynamic range.

Rec 709 encompasses a significant number of the hues that we see on reflected surfaces in the real world. Rec 2020 expands that a bit, but it’s not likely that we’d see those hues outside of animated content (or real world footage with the saturation cranked to 11). Rec 2100 (which is Rec 2020’s much larger color gamut but with HDR dynamic range added in) simply reintroduces the dynamic range and contrast that we’d see in the real world to hues that we’d see in the real world, before being smashed into the limited dynamic range of Rec 709.

If you’d like to read more about camera color gamuts, I’d recommend taking a look here. It’s mostly about still camera color gamuts, but the principles are exactly the same. This page is less comprehensive but deals with digital cinema color gamuts.

The things to remember are:

- Primary colors in single sensor cameras are, natively, “clouds” of spectral information. There are no specific camera red, green and blue primaries as such.

- Part of a camera’s unique look is based on what kind of color gamut a color scientist creates mathematically in which those colors can exist. This includes defining primary colors (that may or may not be real), choosing primary colors that work with a sensor’s strengths, and scaling that color gamut to include as many hues as possible without losing the ability to represent them in the fine steps needed to prevent banding and other artifacts.

- Once all that is done, a color scientist can then focus on transferring those colors into a common color space (Rec 709, Rec 2020/2100, DCI-P3) that displays and projectors can understand.

I’ll be teaching two Arri Academy classes in mid-March, 2018 in Brooklyn and Chicago. If you’d like to learn the ins-and-outs of Arri cameras, accessories, color processing, LUTs, recording formats, etc., this is worth checking out.
