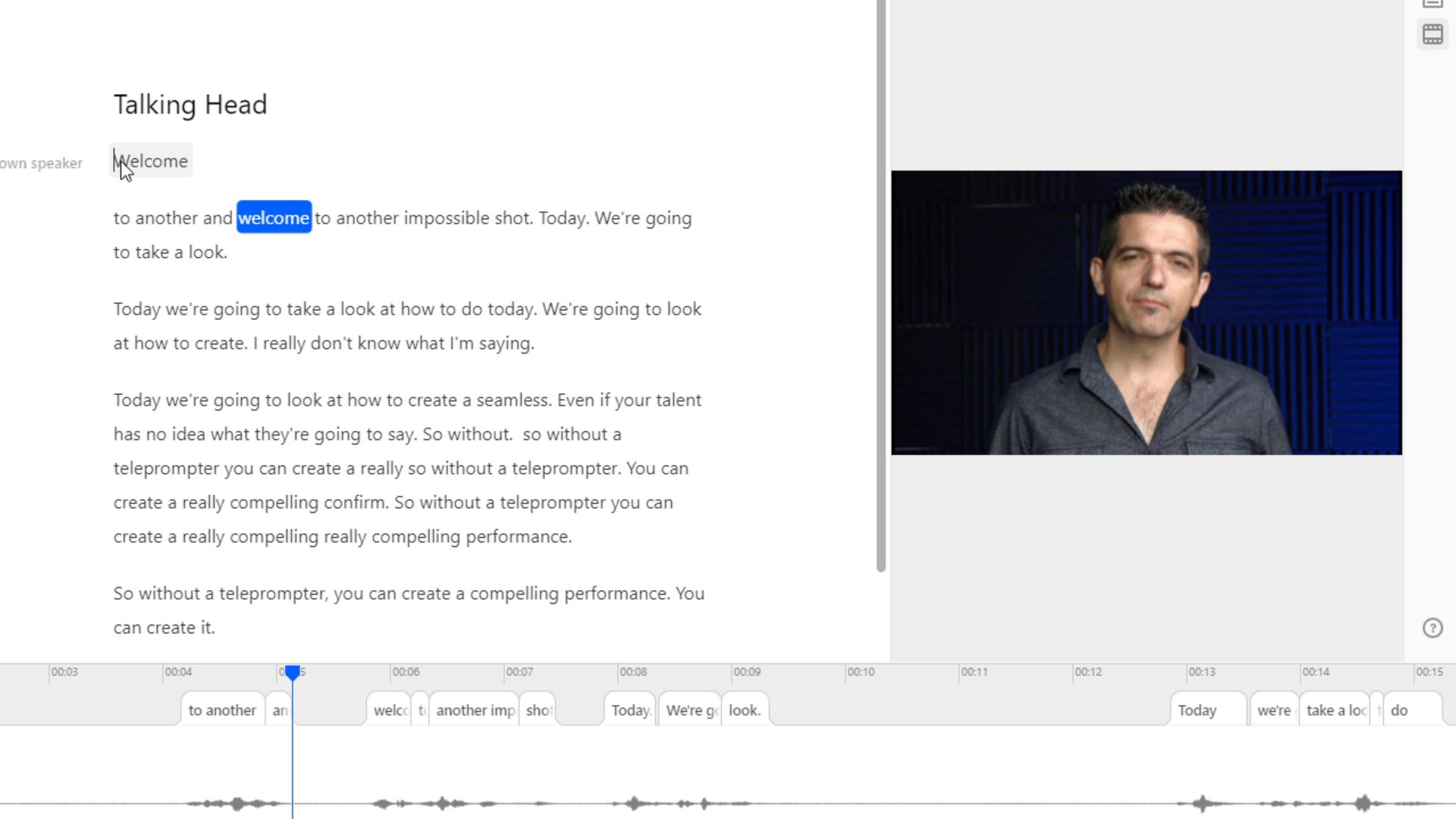

Ethics aside, editors often have a legitimate need to hide a jump cut. Many editors are still unaware of morphing transitions, while others don’t know what to do when they go wrong. In this week’s Impossible Shot, I work through the process of applying and tweaking morphing transitions (Fluid Morph in Media Composer, Morph Cut in Premiere Pro, Smooth Cut in Resolve, and Flow in FCP X).

Step 1: The radio edit

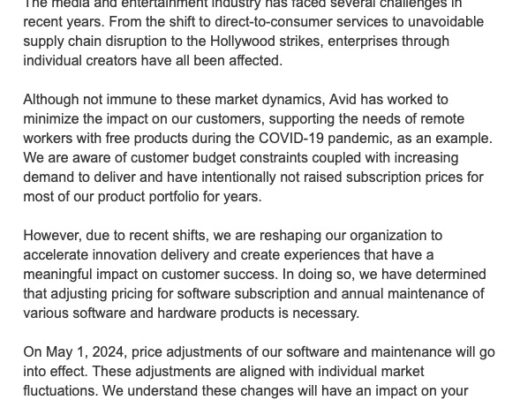

The free video lesson actually covers two topics: morph cuts and using Descript to build the radio edit. I’m such a rabid evangelist for Descript right now that I’m covering it in a separate article. The first step in crafting a single take from multiple clips or takes is cutting together a “radio edit” of the monologue. If you’re unfamiliar with the term, watch this video.

Step 2: Apply the morph transition and trim

When you apply a transition to a cut, it is usually added with a default length of half a second to one second. Unless your talent is particularly lifeless, there’s often too much movement over the course of a second for the morph cut to convincingly blend the action at the tail of the A side clip with the action of the incoming B side clip. Morph cuts often work best with 8-10 frames of cross dissolve, so simply trimming the length of the transition may dramatically improve the “invisibility” of the edit.
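To put numbers on that trim, here is a quick Python sketch that converts transition lengths between seconds and frames. The frame rates are assumptions chosen for illustration (the right value depends on your project), and the helper names are hypothetical.

```python
def seconds_to_frames(seconds: float, fps: float) -> int:
    """Transition length in whole frames for a duration in seconds."""
    return round(seconds * fps)


def frames_to_seconds(frames: int, fps: float) -> float:
    """Duration in seconds of a transition that is `frames` long."""
    return frames / fps


# Assumed frame rates, for illustration only.
for fps in (24, 25, 30):
    default_frames = seconds_to_frames(1.0, fps)
    trimmed_seconds = frames_to_seconds(8, fps)
    print(f"{fps} fps: 1 s default = {default_frames} frames; "
          f"8 frames = {trimmed_seconds:.2f} s")
```

At 24 fps, for example, an 8-10 frame transition lasts roughly 0.33 to 0.42 seconds, well under a one-second default.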

Step 3: Roll the edit point

Usually there are several frames of pause at an edit point, since we tend to cut between words and phrases. So if your initial morph doesn’t work, roll the edit point forwards and backwards and try to find a transition point where the head and body are a closer match. You’re actually looking for two things: matching position in frame, and matching direction of motion. If the head is turning to the left in the A side clip but turning to the right in the B side clip, the morph won’t work even if the head is in the identical position in both clips at the cut point. That’s because the head will appear to pivot unnaturally, and the frames of the A and B side clips will quickly misalign spatially as you move away from the cut point, causing the morph to fail. In fact, I would say that of the two, it’s more important to match the momentum of the motion than to find a perfectly matching pose between the clips.
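The two criteria can be sketched as a simple scoring function. This is only an illustration of the reasoning, not something any of the editing tools expose: it assumes you somehow have a tracked head position per frame for each clip, and the function names, the made-up tracks, and the `motion_weight` value (set above 1 so that motion counts for more than pose) are all hypothetical.

```python
from math import hypot


def velocity(track, i):
    """Per-frame head velocity at frame i (central difference)."""
    (x0, y0), (x1, y1) = track[i - 1], track[i + 1]
    return ((x1 - x0) / 2, (y1 - y0) / 2)


def roll_score(track_a, track_b, a_out, b_in, offset, motion_weight=3.0):
    """Lower is better: position mismatch plus weighted motion mismatch."""
    ia, ib = a_out + offset, b_in + offset
    (ax, ay), (bx, by) = track_a[ia], track_b[ib]
    (avx, avy), (bvx, bvy) = velocity(track_a, ia), velocity(track_b, ib)
    position_gap = hypot(ax - bx, ay - by)
    motion_gap = hypot(avx - bvx, avy - bvy)
    return position_gap + motion_weight * motion_gap


def best_roll(track_a, track_b, a_out, b_in, max_roll):
    """Try rolling the cut by up to max_roll frames in either direction."""
    candidates = [
        k for k in range(-max_roll, max_roll + 1)
        if 1 <= a_out + k < len(track_a) - 1
        and 1 <= b_in + k < len(track_b) - 1
    ]
    return min(candidates,
               key=lambda k: roll_score(track_a, track_b, a_out, b_in, k))


# Made-up tracks: A drifts right; B drifts left, then turns right after frame 8.
track_a = [(float(i), 0.0) for i in range(13)]
track_b = ([(16.0 - i, 0.0) for i in range(9)]
           + [(i + 0.5, 0.0) for i in range(9, 13)])
print(best_roll(track_a, track_b, a_out=8, b_in=8, max_roll=2))
```

In this toy data the head positions match exactly at the original cut, yet the sketch prefers rolling the cut two frames later, where the two clips are half a pixel apart but moving in the same direction at the same speed.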

Step 4: Do some custom surgery

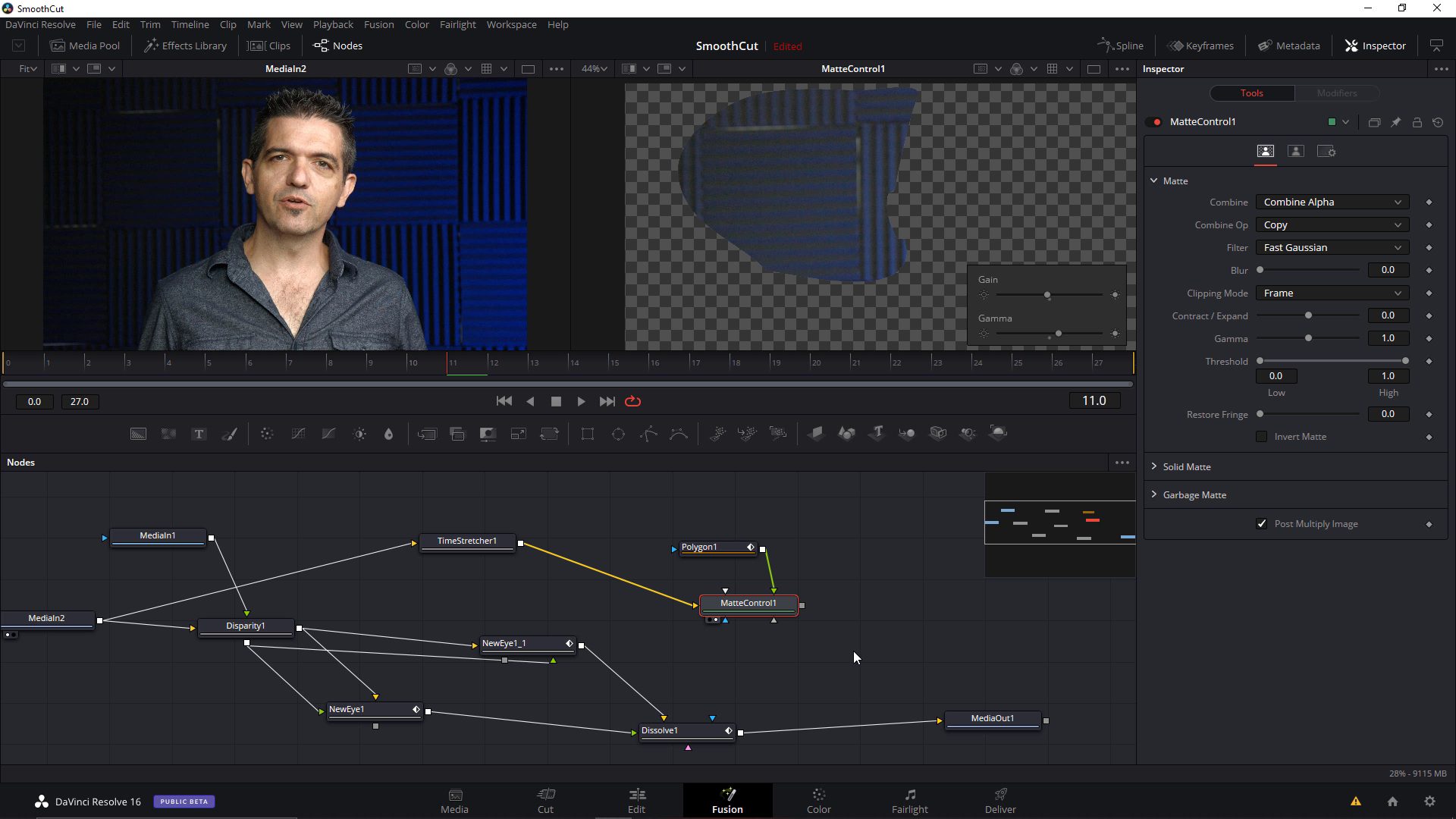

When all else fails, you can move into a compositor to do some deeper surgery. I show a relatively simple recipe for this in the video, hijacking Fusion’s stereoscopic toolset to perform a more advanced fluid morph, then eliminating artifacts via cleanplating. However, even when this technique fails, there’s one more stop: a custom warp.

Using either a spline or grid warp (my preference would always be a spline warper, but a grid warp will work in a pinch), you can match the specific features of the face and body of your on-screen talent, then morph between the two. Why would this work when the morph transition failed? Because as a reasoning human being you’re matching mouth to mouth, corner of eye to corner of eye, chin to chin, and so on. While some of the morphing transition tools use facial recognition to enhance their matches, they still ultimately resort to matching motion vectors, “guessing” which pixels in one frame match the pixels in the other. If the talent makes any radical facial expressions or drastically changes the profile of their face, this will inevitably generate errors in the motion vectors, errors that you, the human master and ruler over your software, can avoid by simple observation.
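The idea behind a hand-matched morph can be sketched in a few lines of Python. This is a generic illustration of feature-based morphing, not the Fusion recipe from the video and not any warper's actual API: the feature names, pixel coordinates, and function names are all hypothetical. For each transition frame, the matched features are blended to an intermediate position; a warper would then pull both clips toward those positions before mixing them.

```python
def morph_schedule(n_frames):
    """Blend amount t for each transition frame, strictly between 0 and 1."""
    return [(i + 1) / (n_frames + 1) for i in range(n_frames)]


def blend_landmarks(points_a, points_b, t):
    """Intermediate feature positions that both clips are warped toward."""
    return {
        name: ((1 - t) * points_a[name][0] + t * points_b[name][0],
               (1 - t) * points_a[name][1] + t * points_b[name][1])
        for name in points_a
    }


# Hypothetical hand-placed features (pixel coordinates) on the last
# A side frame and the first B side frame.
face_a = {"mouth_left": (900.0, 620.0), "eye_left": (880.0, 480.0),
          "chin": (960.0, 720.0)}
face_b = {"mouth_left": (912.0, 626.0), "eye_left": (890.0, 484.0),
          "chin": (974.0, 728.0)}

for t in morph_schedule(8):
    targets = blend_landmarks(face_a, face_b, t)
    # A warper would move A's features and B's features onto `targets`,
    # then mix the two warped images with weight t on the B side.
    print(round(t, 2), targets["chin"])
```

Because you place each pair of points yourself, the correspondence is right by construction, which is exactly what the automatic motion-vector matching cannot guarantee.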

Of course, in this brave new world of machine learning, it’s only a matter of time before the computer does this better than us. In fact, there’s no real obstacle to this, and I’m a little surprised there isn’t a tool on the market already. The only thing standing in the way of such a tool is a detailed data set with derived ground truth image sequences.

Ethics aside?

The very first words of this article were “ethics aside.” And yet I feel the need to discuss the ethical issue here before we wrap. My firm conviction is that this technique should only be used either for fictional narrative (dramatic works) or in situations where the on-camera talent has the opportunity to personally review the edits outside the pressure of deadlines and other distractions. In the latter case, the person in question should also be made fully aware of where the original edits were and what was omitted.

Anecdotally, this hits very close to home for me. Several years ago a large studio called me in to build a system to perform pristine morphs on some 4K footage. The Avid Fluid Morphs that looked fine during the offline edit were falling apart at 4K when being onlined. So I was asked to build a system in Nuke that would achieve a flawless result. I ended up adapting The Foundry’s Ocula tools along with some custom spline warps on tougher shots.

It was only after I had completed the work that I discovered the footage was for a documentary and that the on-screen subject was not necessarily privy to the edits. Someone suggested to me (I have no idea if it was true) that some of the non-verbal footage had been altered so that, for example, the subject appeared to smirk at an interviewer’s questions. Now this kind of low-life editing can be done even without fluid morphs (you can always cut to an interviewee smirking at a question of deep gravity and imply subtext that was never present during an interview), but morphing edits make the process that much more insidious.

Some of you may be wondering how I could have been duped into doing such a thing. Unfortunately, VFX artists are rarely provided with the sound that accompanies the picture (and that was the case here), so unless you can read lips (I can’t) there’s really no way of knowing what you’re working on. I’d like to add, though, that I do believe morph transitions are an invaluable tool in many other situations, where they actually help the presenter communicate their message without putting the audience to sleep with a thousand awkward pauses…

Watch it free

Like all the content on moviola.com, this Impossible Shot is completely free to watch. As usual, we keep the runtime short and try not to waste your time. Click below to watch the video.
