Introduction

Artificial Intelligence has promised so much for so long, and in many ways, it’s been less than perfect. Siri doesn’t quite understand what you’re asking, Google Translate can’t yet seamlessly bridge the gap between languages, and navigation software still doesn’t always offer an accurate route. But it’s getting pretty good. While a variety of AI-based technologies have been improving steadily over the past several years, it’s the recent giant strides in image generation that are potentially groundbreaking for post-production professionals. Here, I’ll take you through the ideas behind the tech, along with specific examples of how modern, smart technology could change your post workflow today, or will tomorrow.

How this AI stuff works

Let’s take a step back and look at some broad brushstrokes. Artificial Intelligence encompasses many areas: natural language processing, neural networks, expert systems, machine vision, and more. Today, most of what gets called AI is Machine Learning, or ML, and the latest chips from Apple and Intel both include modules designed to speed up ML operations.

Back at university, where I studied Information Technology, I majored in Artificial Intelligence and Artificial Life, but the pieces weren’t in place in the 1990s for a true revolution — “game-changing AI” was always a year away. Yes, you could train a neural network, and you could use fuzzy logic for crude tasks, but today’s tech has upped the speed and scale of what’s possible by several orders of magnitude — here’s a technical primer.

Training a machine learning model is essentially showing a computer what you want by example, many times over, by providing it with a data set (inputs) and telling it what it means (outputs). To teach a computer to understand human handwriting, you could show it many pictures of handwritten characters, tell the computer which characters the images represent, and then use that trained neural net to identify new handwritten letters. That’s how the world’s post offices automated the sorting of mail, and the same basic strategy is how we have OCR today, live on our phones in photos and videos we capture.
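To make "showing a computer what you want by example" concrete, here is a minimal sketch in Python. It is deliberately not a neural network: it averages a handful of labelled examples into one prototype per character and picks the closest prototype for new input. The 3x3 "handwriting" bitmaps, the labels, and the function names are all invented for illustration. Real systems use far larger images and far more examples.

```python
# Toy "handwriting" data: 3x3 bitmaps flattened row by row (1 = ink).
# Every glyph and label below is made up for illustration.
TRAINING_SET = [
    ([0, 1, 0,  0, 1, 0,  0, 1, 0], "I"),
    ([0, 1, 0,  0, 1, 0,  0, 1, 1], "I"),
    ([1, 1, 0,  0, 1, 0,  0, 1, 0], "I"),
    ([1, 0, 0,  1, 0, 0,  1, 1, 1], "L"),
    ([1, 0, 0,  1, 0, 0,  1, 1, 0], "L"),
    ([1, 0, 0,  1, 1, 0,  1, 1, 1], "L"),
    ([1, 1, 1,  1, 0, 1,  1, 1, 1], "O"),
    ([0, 1, 1,  1, 0, 1,  1, 1, 1], "O"),
    ([1, 1, 1,  1, 0, 1,  1, 1, 0], "O"),
]


def train(examples):
    """Average the examples for each label into one prototype per character."""
    sums, counts = {}, {}
    for pixels, label in examples:
        total = sums.setdefault(label, [0.0] * len(pixels))
        for i, value in enumerate(pixels):
            total[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {
        label: [value / counts[label] for value in total]
        for label, total in sums.items()
    }


def classify(model, pixels):
    """Return the label whose prototype is closest to the new bitmap."""
    def squared_distance(prototype):
        return sum((p - q) ** 2 for p, q in zip(pixels, prototype))
    return min(model, key=lambda label: squared_distance(model[label]))


model = train(TRAINING_SET)
# A sloppy "I" that never appeared in the training set.
print(classify(model, [0, 1, 0,  0, 1, 0,  0, 0, 0]))
```

The shape is the same as the real thing: inputs plus known answers go in, a model comes out, and the model is then asked about inputs it has never seen.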

AI in your tools today

In truth, AI tech has been around for a while, but it’s only recently taken a more central role, and is doing a better job than traditional approaches. For example, Final Cut Pro’s traditional Noise Removal feature can remove background noise from audio, but that older feature can’t compete with its more modern Voice Isolation sibling, based on machine learning. If a clip is too small, you can use simple upscaling in any NLE, or jump to more advanced AI-based upscaling with products like Video Enhance AI from Topaz Labs. Photoshop has long offered many ways to up-rez an image, but the recently added Neural Filters include a Super Zoom feature, which invents new detail rather than letting things remain fuzzy.

In the land of color correction, FCP’s Color Balance and Match Color features are often unpredictable, while the more modern Colourlab AI grading solution (for all the major NLEs) seems to do a much better job. (While I haven’t reviewed this app yet myself, check out Oliver Peters’ look at it here.)

Those are just a few of the AI-based tools you might be using already, but there are a lot more out there that you’re probably not using. Let’s start with something simple and useful that’s often overlooked.

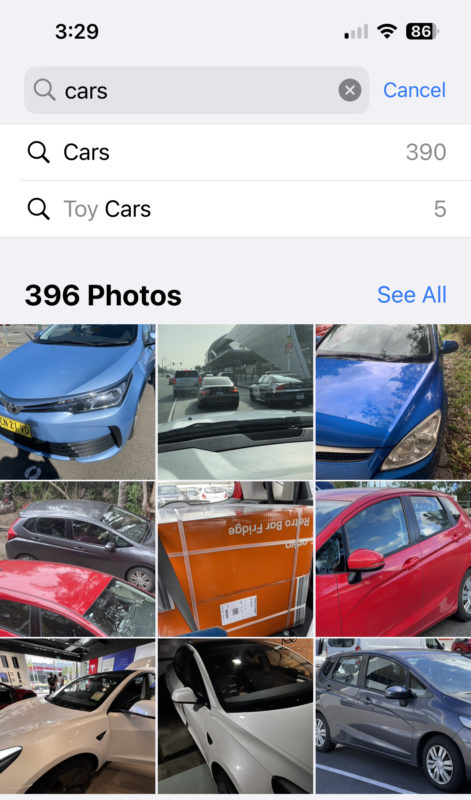

Image recognition

On an iPhone, head to the Photos app, then search for any word, like “chair” or “beach” and it will show you your own photos of those things. This is down to AI-based categorization of your images that occurs entirely on your device, in the background, without violating your privacy. Photos on the Mac can do the same trick, and if you put videos in there, it’ll work on those too.

There’s even a Mac app called FCP VideoTag which can create Final Cut Pro keywords based on what it sees in the videos you feed it, which is a very clever trick indeed. If you’ve got a library of stock videos, tagging them could be a great way to help you find the right clips more easily.
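As a rough idea of how recognised objects could become clip keywords, here is a sketch that assumes some image recognition model has already labelled a few sampled frames. This is not how FCP VideoTag or Photos actually works. The labels, confidence scores, thresholds, and the `clip_keywords` function are all hypothetical.

```python
from collections import defaultdict


def clip_keywords(frame_labels, min_confidence=0.6, min_share=0.5):
    """Keep labels seen confidently in at least `min_share` of sampled frames.

    `frame_labels` is a list with one dict per sampled frame, mapping a
    label to a confidence between 0 and 1 (hypothetical model output).
    """
    hits = defaultdict(int)
    for labels in frame_labels:
        for label, confidence in labels.items():
            if confidence >= min_confidence:
                hits[label] += 1
    needed = min_share * len(frame_labels)
    return sorted(label for label, count in hits.items() if count >= needed)


# Made-up recognition results for four frames sampled from one clip.
sampled = [
    {"beach": 0.90, "dog": 0.70},
    {"beach": 0.80, "dog": 0.30},
    {"beach": 0.95, "chair": 0.65},
    {"beach": 0.50},
]
print(clip_keywords(sampled))
```

Requiring a label to show up in several frames is one simple way to stop a single misread frame from polluting your keyword list.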

Automatic tricks with words

Automatic captioning (in Premiere Pro, iOS, YouTube and Vimeo today) is a neat AI trick and a big time saver. This is only going to get better, and it’s essentially free, today.

To me, the interesting thing is not saving a bit of time creating captions of your finished edit, it’s getting free, accurate transcriptions of every source clip, to make your editing job easier. Paid solutions like Builder and ScriptSync have offered this in many forms, but if AI-derived time-coded transcriptions become free, accurate and quick enough, text-assisted editing will become a much more widely used workflow.
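Here is a small sketch of why time-coded transcripts matter for editing: once every spoken phrase carries a start and end time, finding a line in your rushes becomes a text search. The `Segment` structure, the clip names, the transcript text, and the 25 fps non-drop timecode are assumptions for illustration, not the format of any particular transcription tool.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    clip: str     # source clip name
    start: float  # seconds from the start of the clip
    end: float
    text: str


def to_timecode(seconds, fps=25):
    """Convert seconds to HH:MM:SS:FF, assuming non-drop-frame timecode."""
    total_frames = round(seconds * fps)
    frames = total_frames % fps
    hours, remainder = divmod(total_frames // fps, 3600)
    minutes, secs = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}:{frames:02d}"


def find_phrase(segments, phrase):
    """Return (clip, in, out) for every segment that mentions the phrase."""
    needle = phrase.lower()
    return [
        (seg.clip, to_timecode(seg.start), to_timecode(seg.end))
        for seg in segments
        if needle in seg.text.lower()
    ]


# Made-up transcript segments for two interview clips.
transcript = [
    Segment("A001_C003", 12.0, 15.2, "We started the company in a garage."),
    Segment("A001_C003", 83.48, 90.0, "The first product was a total flop."),
    Segment("A002_C001", 4.0, 9.6, "Nobody believed in the product back then."),
]
for match in find_phrase(transcript, "product"):
    print(match)
```

From there it is a short step to selecting a sentence in a transcript and having the matching range land in your timeline.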

Writers shouldn’t feel deprived: there are enough automatic AI-based writing tools that here’s a list of the 19 best, should you need to produce uninformative filler text that nobody will enjoy reading. Jasper is probably one of the most widely promoted of the recent crop, and while I’m sure its output is typical of much of what passes for content these days, I wouldn’t describe it as creative or surprising. Here are a few samples.

Are screenwriters safe? So far, AI attempts at actually writing scripts have failed — check out this short from 2016 for a laugh — but I’d say that AIs have a fair shot at writing fair-to-middling scripts fairly soon. Words are relatively easy, though. Let’s dive into the technical end of the pool.

Person recognition for instant background removal

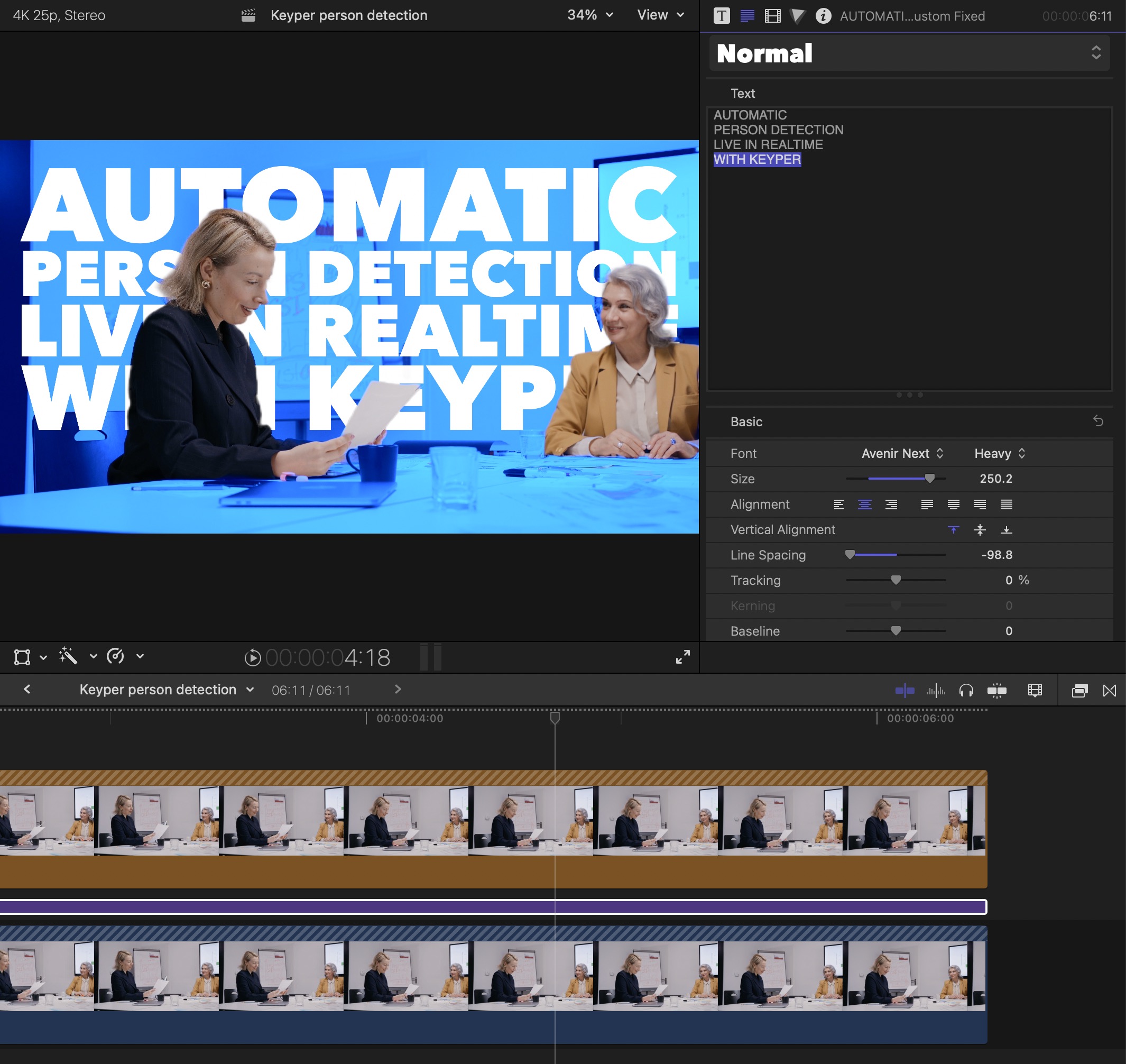

You’ve seen automatic background blur on video calls; not great, but usually not terrible. And you’ve probably seen Portrait Mode on your phone; better than videoconferencing, not perfect, but often good enough for many. Well, wouldn’t it be cool if you could automatically recognise people and remove the background behind them? You can. It’s called Keyper, and it works in Final Cut Pro, Motion, Premiere Pro and After Effects right now.

While it’s not 100% perfect, it’s good enough to let you color-correct a person separately from their background, or to place text partly behind a person. If you can control the shot at least a little, it’s like a virtual green screen you don’t have to set up, and can work wonders.
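Once you have a matte for the person, grading them separately comes down to a standard blend: apply the correction everywhere, then mix the graded and original frames using the matte. The sketch below shows that arithmetic on three pixels. The simple gain "grade", the pixel values, and the function names are illustrative assumptions, and a person-recognition tool would supply the matte in practice.

```python
def grade(pixel, gain):
    """A stand-in colour correction: multiply each channel, clamped to 1.0."""
    return tuple(min(1.0, channel * gain) for channel in pixel)


def composite(original, graded, matte):
    """Blend per pixel: matte 1.0 = fully graded, matte 0.0 = untouched."""
    return [
        tuple(m * g + (1.0 - m) * o for g, o in zip(graded_px, original_px))
        for original_px, graded_px, m in zip(original, graded, matte)
    ]


# Three RGB pixels: background, a soft edge, and the person.
frame = [(0.2, 0.2, 0.2), (0.4, 0.4, 0.4), (0.6, 0.6, 0.6)]
matte = [0.0, 0.5, 1.0]  # hypothetical output of a person-recognition model

brightened = [grade(pixel, 1.5) for pixel in frame]
result = composite(frame, brightened, matte)
print(result)
```

The soft edge value of 0.5 is why an imperfect matte can still look fine: partial values blend the correction in gradually instead of leaving a hard outline.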

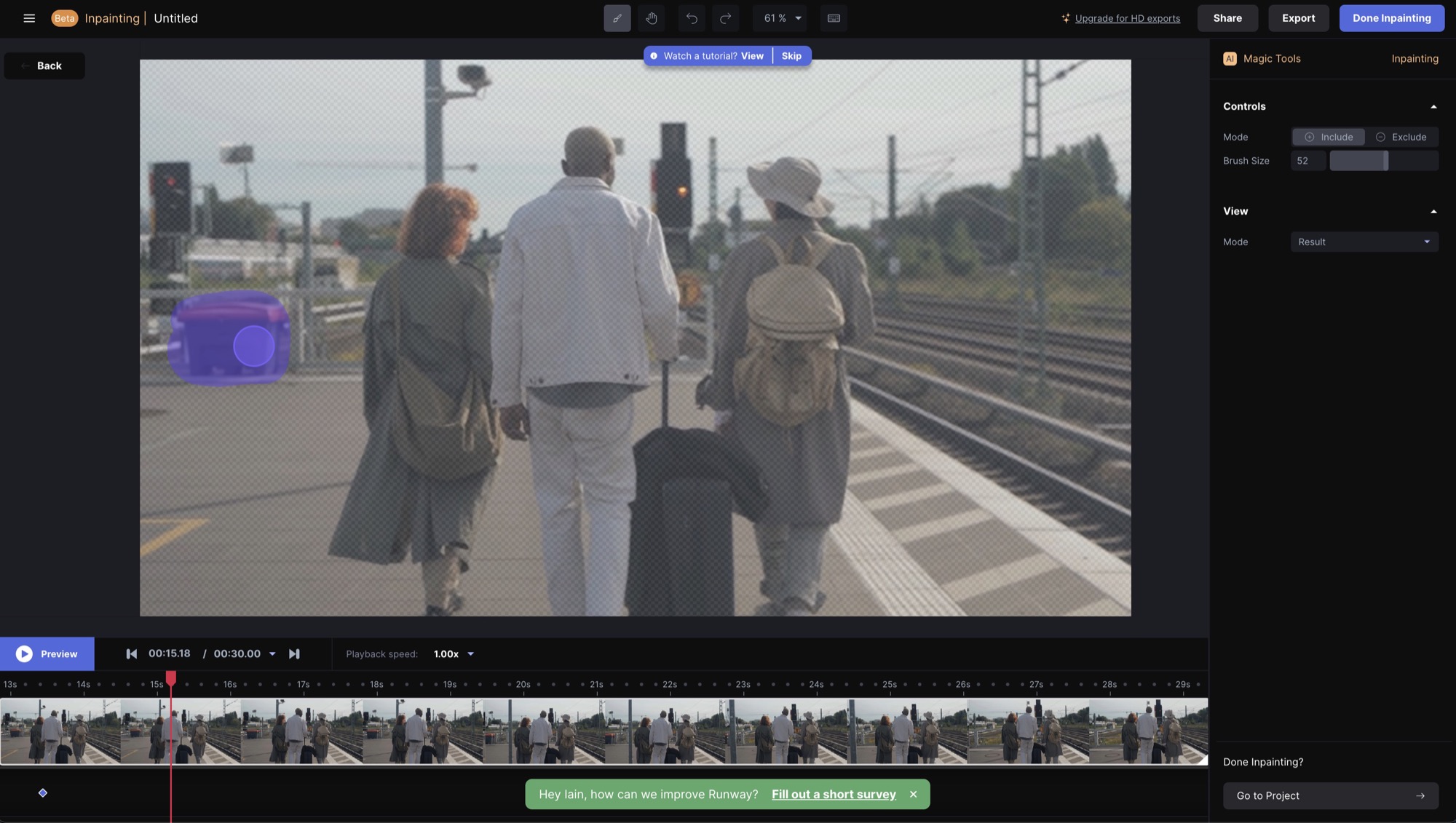

The same trick has also shown up in a web-based video app called Runway, which asks you to simply click on a person and it just gets rid of everything else. That’s not the only trick Runway offers, either — read on.

Filling in backgrounds, in stills and video

Simply cloning one part of an image over another is mechanical, but Photoshop’s Content-Aware Fill is a fair bit smarter. Known more generally as “inpainting”, it’s an impressive technique that’s essential for many VFX tasks, and After Effects incorporated a video-ready version of this tech a few versions back. It’s not new, but ubiquity shouldn’t make it any less impressive. Runway can do that too.

Soon, you should also be able to use ML-based techniques to automatically select an object, a trick which even your iPhone can do now. Another feature to be added is text recognition of your requests, so you’ll just be able to ask to “remove the bin in that shot” and it will.

We all know that life isn’t quite like a marketing video, but I haven’t seen a marketing video that promised goodies like this before. Fingers crossed?

Audio cleanup

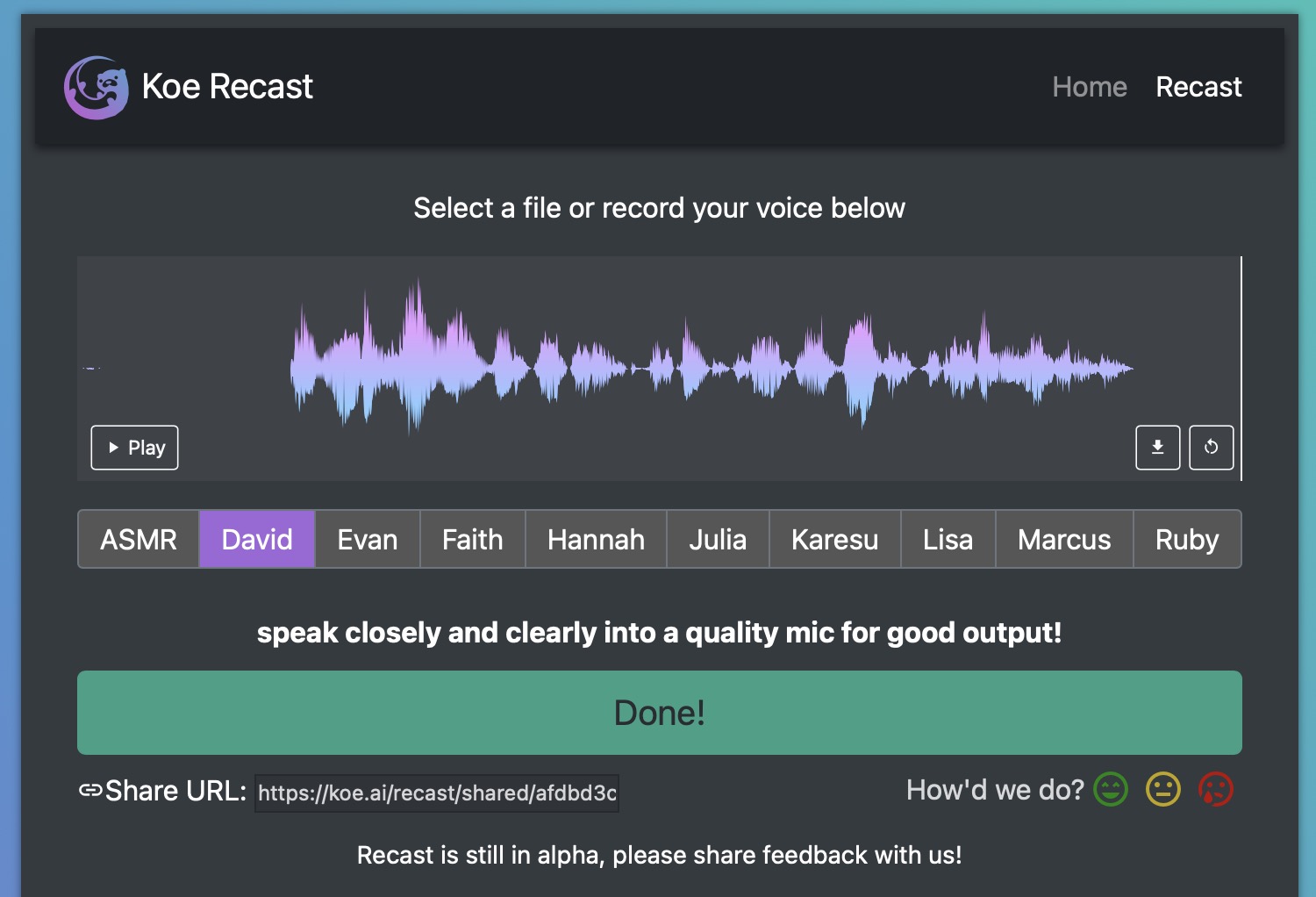

Bad audio is easier to clean up than ever. iZotope RX’s Voice Denoise and the new Voice Isolation feature in Final Cut Pro are both great, thanks to modern ML-trained models of what voices should and shouldn’t sound like. But beyond cleanup, modern AI methods can transform one person’s voice into another’s.

Unsurprisingly, it’s not perfect, but Koe Recast is still crazily good, and you can try it out right now. It’s a new service to let you turn your voice (or a recording) into one of a selection of replacement voices, with a decent amount of emotion, and with far better results than the terrible robotic nonsense used by cheap YouTube ads today. All these models will, of course, keep getting better, and we’ll definitely be hearing more generated voices of both kinds. When there’s more variety in voice styles, I suspect a large chunk of voice work will be machine generated.

But hang on, it gets crazier.

Image generation from a text prompt

It was just a couple of months ago that DALL·E 2 burst onto the scene, promising to create realistic or artistic images from a text prompt, though access was pretty restricted.

Here’s just one example, and here are many more.

Shortly afterwards, Midjourney popped up on a Discord server. A similar service, it made image generation far more accessible, and though it wasn’t quite as realistic, it’s capable of some amazing art.

Then, in late August, Stable Diffusion appeared, as an open source, freely downloadable, offline version of much the same thing. It’s fascinating that a 2GB model is now able to (more or less) create endless new images from any text prompt you care to imagine — a different one every time, with no internet access needed, and totally for free.

These automatic image generation engines have been trained on millions of images from the internet, each identified by its surrounding text or alt tag, and they do their magic through a kind of guided randomness. Generation starts from pure noise. At each of many steps, the trained model estimates which part of the current image is noise and removes a little of it, steered by the text prompt you’ve provided, and as the process repeats, a coherent image eventually emerges.
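To picture the loop, here is a toy sketch of iterative refinement from noise, assuming the common denoising formulation. It cheats heavily. A real model learns to predict noise from its training images, while this `predict_noise` function simply looks up a hand-written six-value "image" for each prompt, so it always lands on the same result. The prompts, target values, step count, and step size are all invented for illustration.

```python
import random

# Hypothetical stand-in for a trained network. Real models learn this from
# captioned images; here each prompt maps to a hand-written 6-value "image".
PROMPT_TARGETS = {
    "sunset": [0.9, 0.5, 0.1, 0.9, 0.4, 0.1],
    "ocean": [0.1, 0.4, 0.9, 0.1, 0.5, 0.9],
}


def predict_noise(image, prompt):
    """Estimate which part of the current image is noise, given the prompt."""
    target = PROMPT_TARGETS[prompt]
    return [pixel - goal for pixel, goal in zip(image, target)]


def generate(prompt, steps=50, seed=0):
    """Start from random noise and remove a little predicted noise per step."""
    rng = random.Random(seed)
    image = [rng.gauss(0.0, 1.0) for _ in PROMPT_TARGETS[prompt]]
    for _ in range(steps):
        noise = predict_noise(image, prompt)
        image = [pixel - 0.1 * n for pixel, n in zip(image, noise)]
    return image


def distance(a, b):
    """Euclidean distance between two toy images."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


picture = generate("sunset")
print([round(value, 2) for value in picture])
```

In a real generator the starting noise shapes the final picture, which is why a different seed gives you a different image for the same prompt. This toy only demonstrates the step-by-step cleanup.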

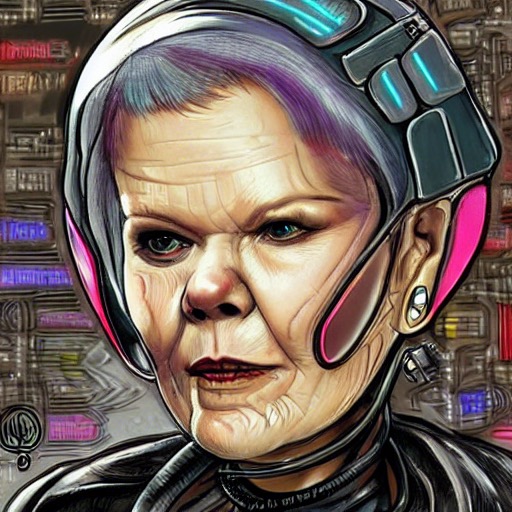

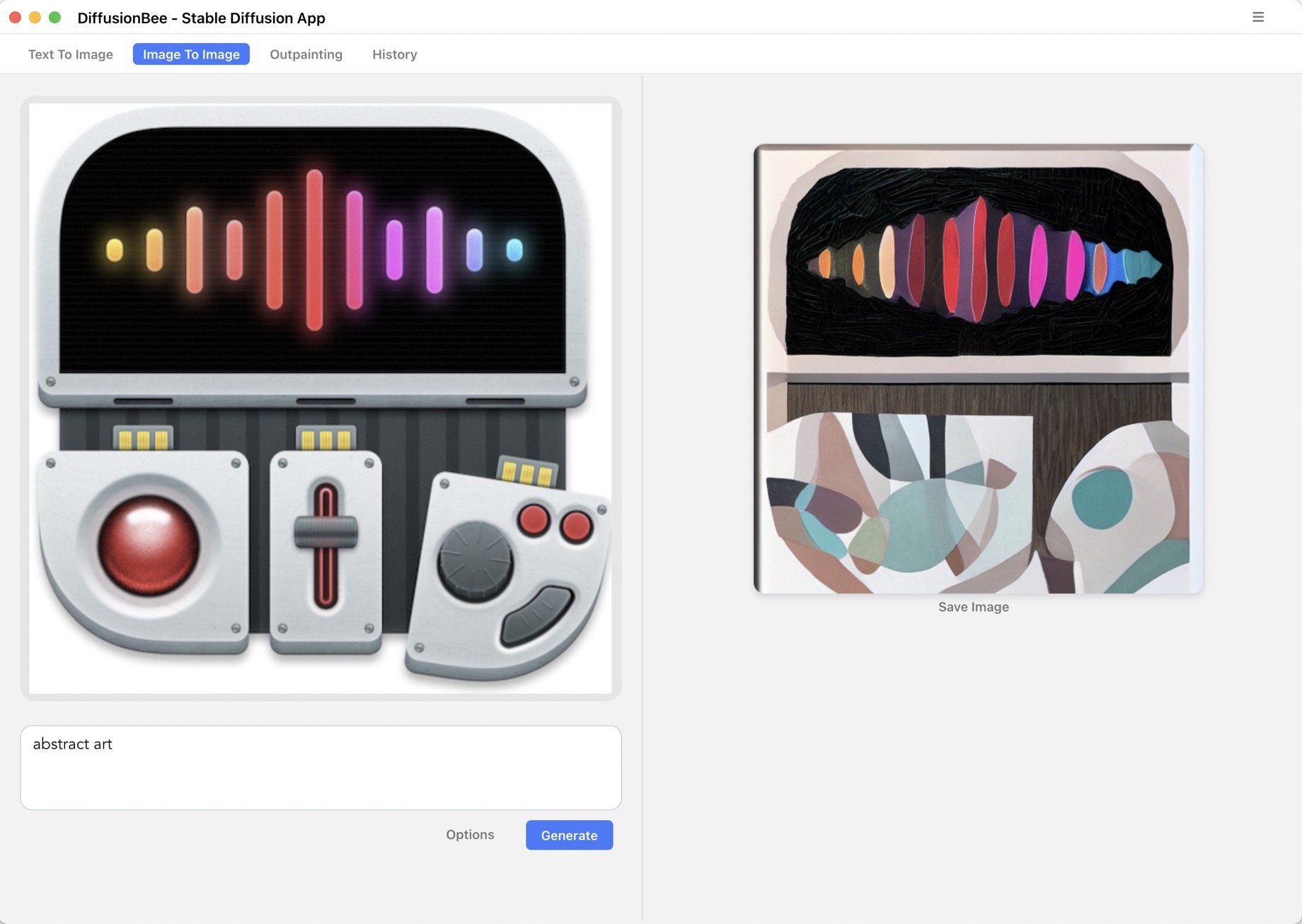

Though I did successfully compile and install a command line version of Stable Diffusion from scratch, I’d recommend grabbing Diffusion Bee if you’re on a modern M1 Mac; it’s entirely pre-compiled and just works. You just type in your phrase and receive an image in under 30 seconds on an M1 Max, or potentially much quicker if you’re on a PC with a fast GPU. Here are a few examples I’ve generated:

While this tech can inspire open-mouthed awe in a way we haven’t seen in a while, you might struggle to see direct applications for video production, besides the generation of backgrounds. So let’s take it further. One trick is the ability to input an image and generate variations of that image.

Another method was used by the VFX wizards at Corridor Digital to train their model with tagged images of their staff, then create images of them to tell a consistent fantasy story.

If you’re determined enough, you can also use these techniques to create an animation, like Paul Trillo did here using DALL·E 2 and Runway’s AI-powered morphing. In fact, Runway promises to integrate this tech directly into their editing solution for a seamless “replace the background of this shot with a Japanese garden” experience.

This is just the start, and when you start combining image generation with other open source projects — for seamless texture generation, AI-based upscalers, and deep-fake-style face mapping — you’ll start to see the potential. There are plenty more ideas in this thread.

Conclusion

While some fear a sentient robot uprising, many professionals fear that AIs are coming for their jobs. It’s nothing personal, and it’s nothing new; progress has always displaced people from employment. When the car arrived, anyone who drove a horse and cart could hopefully see the writing on the wall, and the car did, indeed, take over. The transition wasn’t instant, and it wasn’t universal, but today far fewer people own horses than cars.

Is editing in danger? There are many apps on phones and on the web which promise to automatically edit your video for you, to save you the burden of actually watching your footage and trying to tell a story from it. And sure, if you’re not paying much attention, they’re a decent quick fix. But just as Canva might be fine for an invitation yet doesn’t scale to designing an annual report, these tools are not built for anything that requires a longer attention span.

Though every field is unique, the AI/ML genie isn’t going back in its bottle, and for some specialities like painting, drawing, and concept art, a lot of work is about to dry up. But it’s not all doom and gloom. While an ML system can get a lot done very quickly, humans still bring unique skills and creativity, and an artist who knows how to drive an image generation machine can use it to their advantage rather than bemoaning its existence. Going forward, the smart artist should focus on what an AI-augmented toolkit can do for them.

Across all these fields, the promise of AI is that computers will be able to figure out how to create what we want, without us having to know exactly how to achieve the effect. We want two shots to look like they were shot in the same place at the same time, on the same camera. OK. We want the traffic noise in an audio clip removed. Easy. We want a car to look like it’s covered in fur. Sure, just ask the AI. But this doesn’t remove the need for a skilled post-production artist: your clients will still need your human help to make their visions become reality.

On the brighter side, it looks like a pretty creative time ahead for anyone ready to embrace the new wave. You never know, maybe this will end up being more fun than flying cars?
