This is the third installment of a six-part HDR “survival guide.” Over the course of this series, I hope to impart enough wisdom to help you navigate your first HDR project successfully. Each day I’ll talk about a different aspect of HDR, leaving the highly technical stuff for the end. You can find part 2 here.

Thanks much to Canon USA, who responded to my questions about shooting HDR by sponsoring this series.

SERIES TABLE OF CONTENTS

1. What is HDR?

2. On Set with HDR

3. Monitor Considerations < You are here

4. Artistic Considerations

5. The Technical Side of HDR

6. How the Audience Will See Your Work

There are several crucial considerations when choosing an on-set monitor:

- How does the monitor process color information?

- How does it handle out-of-range highlights?

- Will the monitor be viewed in total darkness or under some form of ambient illumination?

- What happens to the display when large areas of the screen approach maximum intensity?

MONITOR COLOR GAMUT AND PROCESSING

The camera used on the Mole-Richardson shoot fed an unprocessed 10-bit raw signal to the DP-V2410 monitor, which was de-mosaic’d within the monitor, passed through ACES® and mapped to HDR. The color gamut setting was Canon Cinema Gamut > Rec 2020, which scales the camera’s large native color gamut to fit within the Rec 2020 color gamut. That result is then scaled once again to fit within the display’s color gamut, which is approximately that of P3.

There are exact settings for P3 and Rec 709 as well, although Rec 709 is not considered suitable for HDR.

HDR monitors can only, at present, reach P3 saturation levels or slightly beyond, but data should be captured in Rec 2020—or a camera’s native color gamut, if it is larger—for future-proofing. As we saw in Part 2, consumer monitors are generally considered to be HDR-ready if they can reproduce 90% of P3’s gamut, and indeed most programs are currently mastered at P3 saturation levels—but this will likely not be the case for long.
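
To get a rough feel for how these gamuts compare in size, here is a minimal sketch that measures the triangle each one spans on the flat CIE 1931 xy chart. The primary coordinates are the published ones for each standard; the function names are my own, and triangle area on a 2D chart is only a crude stand-in for what a display can actually reproduce.

```python
# CIE 1931 xy chromaticity coordinates of the red, green and blue primaries.
PRIMARIES = {
    "Rec 709": [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)],
    "P3": [(0.680, 0.320), (0.265, 0.690), (0.150, 0.060)],
    "Rec 2020": [(0.708, 0.292), (0.170, 0.797), (0.131, 0.046)],
}


def triangle_area(points):
    """Area of the triangle spanned by three (x, y) points (shoelace formula)."""
    (x1, y1), (x2, y2), (x3, y3) = points
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2.0


def relative_area(name, reference="Rec 2020"):
    """Size of one gamut triangle as a fraction of the reference triangle."""
    return triangle_area(PRIMARIES[name]) / triangle_area(PRIMARIES[reference])


if __name__ == "__main__":
    for name in PRIMARIES:
        print(f"{name}: {relative_area(name):.0%} of the Rec 2020 triangle")
```

By this measure P3 covers a bit over 70% of the Rec 2020 triangle and Rec 709 a bit over half. Manufacturers' coverage figures are measured differently, so treat these numbers as orientation only.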

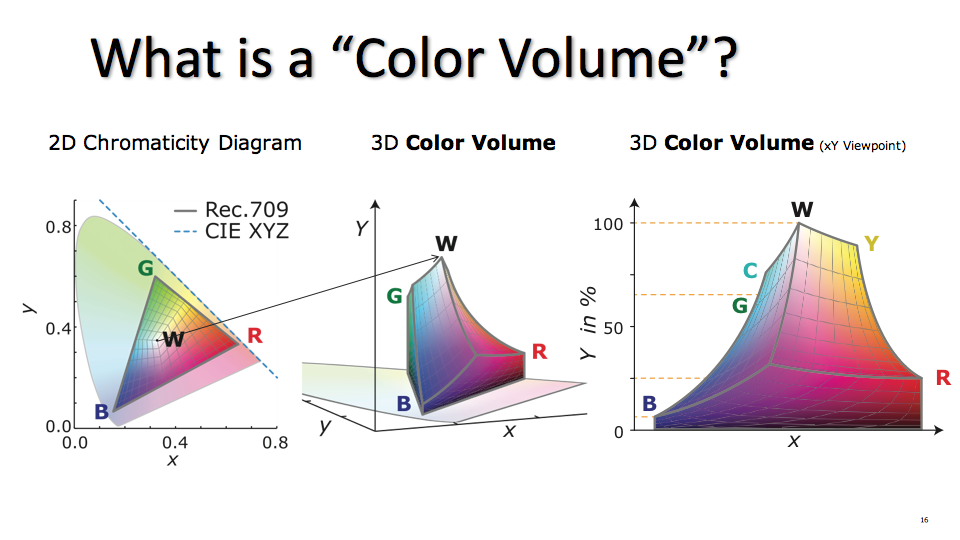

The term “color gamut” brings to mind the traditional scalloped CIE 1931 color chart, but that doesn’t tell the entire story. That chart represents only a thin slice of the full color gamut, cut from the middle of its tonal scale. The full range of a color gamut is a 3D shape that bends and narrows at its extremes, as certain hues can’t be fully saturated at the limits of luminance. Dolby® Laboratories has coined the term “color volume” to better describe this shape.

(above) A comparison between the “flat” CIE representation of SDR’s color gamut, using a slice through the color volume at middle gray, versus two different 3D representations of the same gamut that show how colors saturate and desaturate across the luminance range. (©2016 Dolby® Laboratories.)

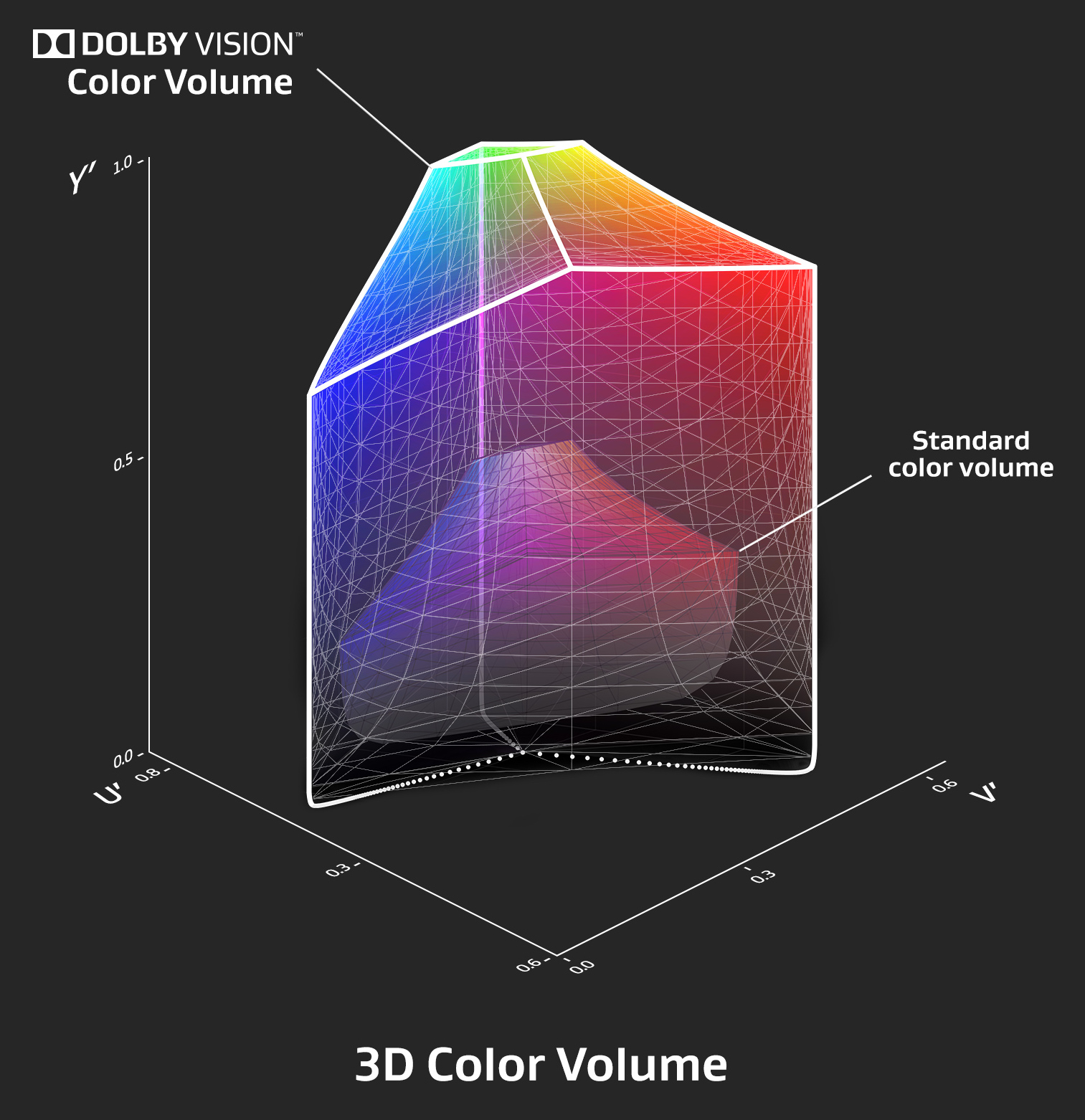

(above) This representation of SDR’s color volume, shown within HDR’s, illustrates Dolby Vision™’s increased overall saturation as well as improved saturation in highlights and shadows. (©2016 Dolby® Laboratories.)

“Color volume” is a wonderful description as it communicates the concept of a three dimensional color space in a more intuitive manner than does the traditional 2D CIE chart. It reminds us that changes in saturation occur across the full luminance range: highlights and lowlights may not be as saturated as mid-tone hues, and some hues may appear more saturated than others at different brightness levels. The traditional CIE chart shows none of this as it represents only a narrow slice of mid-tone hues.

I will, however, use the more commonly accepted term “color gamut” throughout the remainder of this article, as that’s the term used in the various HDR specification papers.

OUT-OF-RANGE HIGHLIGHTS

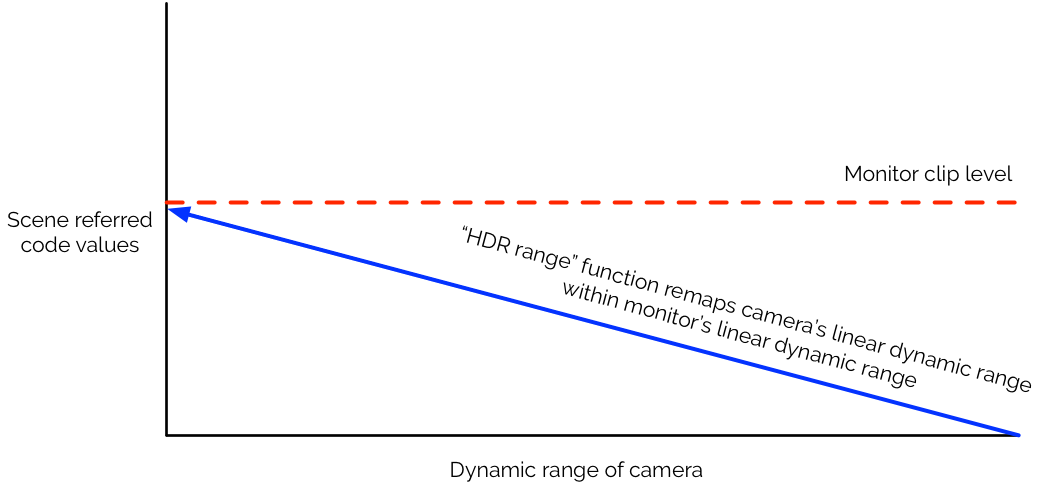

Canon’s HDR range feature, found in all Canon HDR monitors, scales out-of-range highlights to fit within the display limitations of the monitor. Setting HDR range to 4,000 scales a 4,000 nit highlight to fit within the DP-V2410’s 400 nit container. Image contrast is distorted (mid-tones and blacks will darken), but highlight detail, contrast and saturation are easily evaluated.

As HDR range renders the image unusable except for examining highlights, it can be toggled via a function key for intermittent use. Simply program the maximum nit level desired (from 400 to 4,000) and push a button.

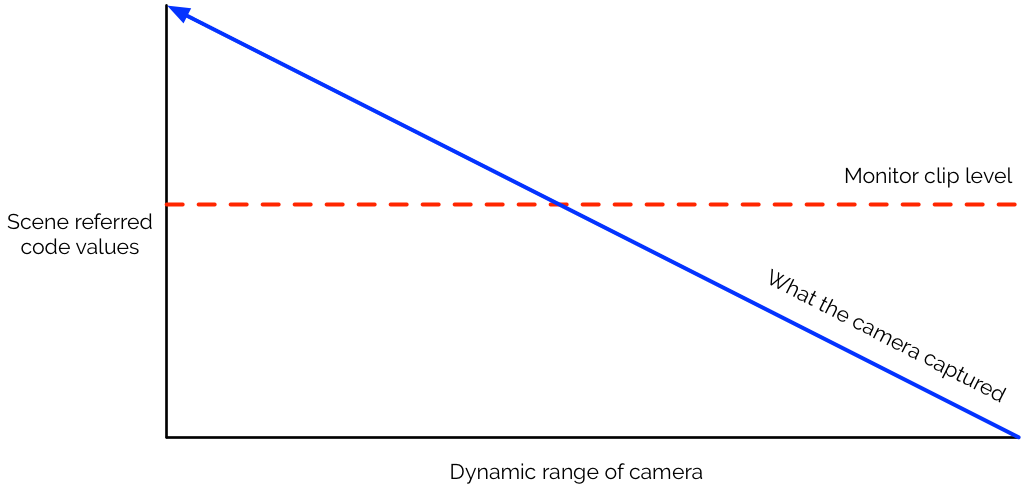

(above) What the camera captures may not always fall within the capability of a monitor to display.

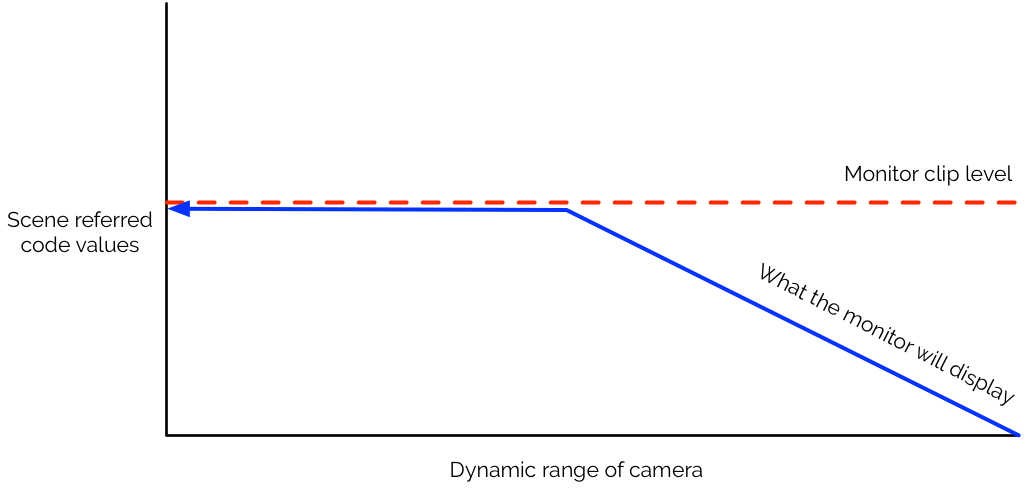

(above) Code values that exceed what the monitor can display will be truncated, resulting in clipped highlights.

(above) Temporary use of the HDR range feature allows the user to remap the camera’s dynamic range to fit within the monitor’s dynamic range. Mid-tones and shadows will darken, but highlight contrast, detail and saturation can be visually assessed.

Given HDR’s rigid encoding scheme, this is currently the only way to view out-of-range highlights short of using a custom LUT or bringing a very large, very expensive and very power hungry 4,000 nit monitor to set. The good news is that this feature is built in and ready to go, so users will never be left without a way to visually check highlights that fall outside the range of their monitor.
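
The difference between clipping and remapping can be sketched in a few lines. This is an illustration only: it assumes a simple linear scale in nits, which is my own simplification and not a description of how Canon's monitors process the signal internally. The function names and sample values are hypothetical.

```python
def clip_to_display(scene_nits, display_peak=400.0):
    """Normal viewing: anything above the display peak is truncated."""
    return [min(v, display_peak) for v in scene_nits]


def hdr_range_preview(scene_nits, range_nits=4000.0, display_peak=400.0):
    """Assumed linear remap so that range_nits lands on display_peak."""
    scale = display_peak / range_nits
    return [min(v * scale, display_peak) for v in scene_nits]


# Hypothetical scene values, from deep shadow to a 4,000 nit highlight.
samples = [10.0, 100.0, 400.0, 1000.0, 4000.0]

clipped = clip_to_display(samples)    # the top three values all become 400
preview = hdr_range_preview(samples)  # every value stays distinct, but darker
```

In the clipped list the 400, 1,000 and 4,000 nit samples are indistinguishable. In the preview they remain separate, at the cost of pushing mid-tones and shadows down by the same factor of ten.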

AMBIENT LIGHT VS. OLED AND LCD

Tests show that OLEDs and LCDs thrive in very different ambient lighting conditions.

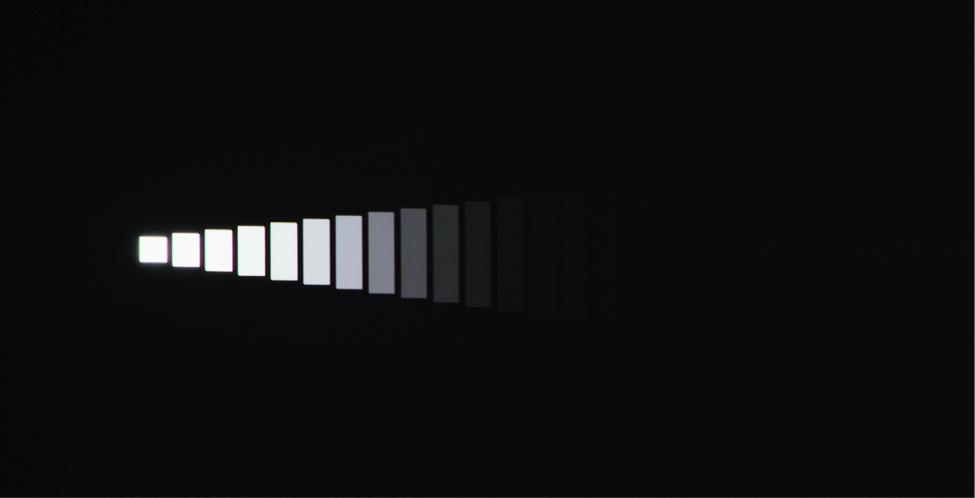

(above) 1,000 nit OLED display in total darkness. This is a DSC Labs XYLA 20 stop dynamic range chart.

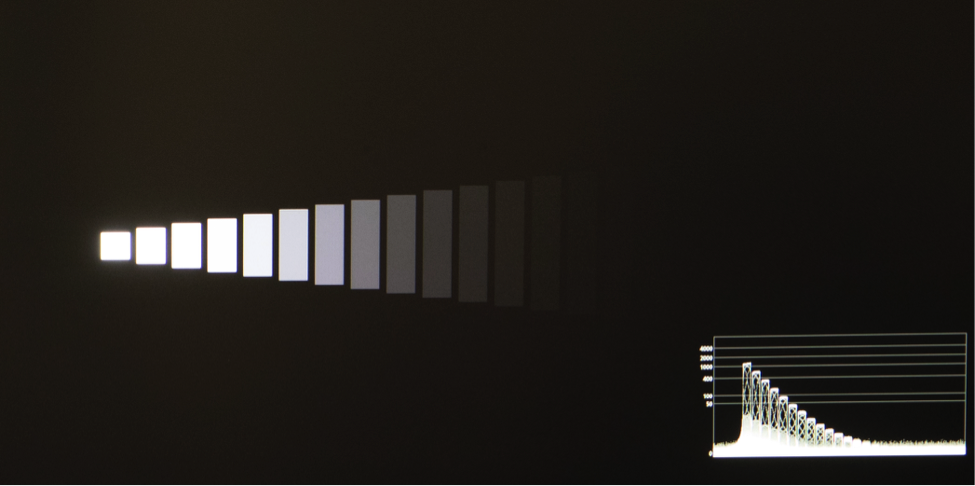

(above) 1,000 nit LCD display.

In perfect darkness the OLED display shows more contrast in the shadows than the LCD display. The steps drop off more quickly thanks to OLED’s deeper black levels. Because of backlight leakage, the LCD’s black levels are slightly elevated, so its screen can never be as dark as the OLED’s.

(above) 1,000 nit OLED in uncontrolled lighting conditions.

(above) 400 nit LCD (Canon DP-V2410) in uncontrolled lighting conditions.

On the other hand, any ambient light striking the OLED monitor results in a visible loss of detail near black, as the shiny black surface readily reflects its surroundings. A shiny surface can appear perfectly black in an environment with no ambient light, but not every environment can be made that dark.

The slightly lifted blacks of the LCD display accurately portray shadow detail in situations where ambient light cannot be controlled.

ABL, MAXFALL AND THE WISDOM OF CONTROLLING HIGHLIGHTS

It takes a lot of power to drive an HDR display, and with power comes heat. OLED displays dissipate heat horizontally, across pixels, so the display will automatically dim large highlight areas in order to protect the monitor. LCD monitors are subject to similar heat issues, but to a lesser degree. Both contain ABL circuits—for “Automatic Brightness Limiting”—that will dim the screen overall to prevent heat from destroying the monitor.

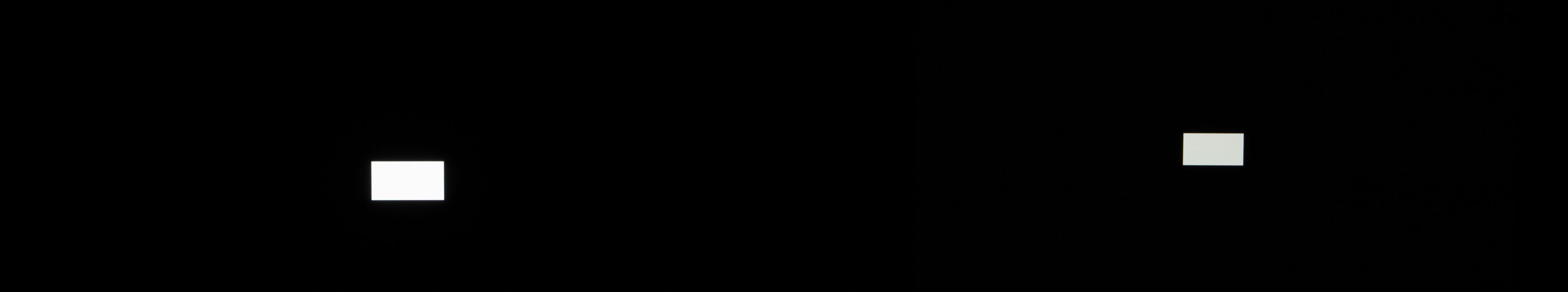

Here a 1,000 nit OLED monitor is compared to the 400 nit Canon DP-V2410 LCD monitor:

Left: OLED (1,000 nit). Right: LCD (Canon DP-V2410 400 nit display). Large areas of white cause the OLED to dim dramatically, while the LCD display, although dimmer initially, remains fairly consistent in brightness.

The white square in the first image is designed to clip on both displays. As the box, which requires maximum power to display, increases in size, both displays reduce brightness overall to control heat dissipation. This is a feature not only of professional displays, but of consumer displays as well. It is wise to take this into account while shooting.

There are two post terms that weigh heavily on how your material will be seen by consumers: MaxCLL and MaxFALL. They are defined in the CTA-861.3 standard, travel alongside the SMPTE ST 2086 mastering display metadata, and apply to HDR10 encoding (covered in part 6, “How the Audience Will See Your Work”).

Maximum Content Light Level (MaxCLL) is a metadata value that records the nit level of the brightest pixel anywhere in the program. If a consumer television can’t produce that level of brightness then it will attempt to compensate in some way.

Maximum Frame Average Light Level (MaxFALL) is a metadata value that records the average brightness of every pixel in the brightest frame of a program. This is another piece of information used by a consumer television to modify a program whose brightest scenes may cause its ABL circuits to activate.
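
A minimal sketch of how these two values could be derived, taking both over the whole program as HDR10's static metadata does. It assumes each frame is already available as rows of per-pixel light levels in nits; real tools work from the encoded RGB signal, so the function names and the tiny two-frame "program" here are purely illustrative.

```python
def frame_average(frame):
    """Mean light level of one frame, given rows of per-pixel nit values."""
    pixels = [v for row in frame for v in row]
    return sum(pixels) / len(pixels)


def max_cll(frames):
    """Brightest single pixel found anywhere in the program."""
    return max(v for frame in frames for row in frame for v in row)


def max_fall(frames):
    """Highest frame-average light level found in the program."""
    return max(frame_average(frame) for frame in frames)


# Hypothetical two-frame program, 2x2 pixels per frame.
program = [
    [[5.0, 5.0], [5.0, 1000.0]],       # dark frame with one small hot highlight
    [[300.0, 300.0], [300.0, 300.0]],  # evenly bright frame
]

cll = max_cll(program)    # 1000.0, set by the small highlight in frame one
fall = max_fall(program)  # 300.0, set by the evenly bright frame two
```

Note that the two values come from different frames: a tiny specular highlight drives MaxCLL, while a large bright area drives MaxFALL.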

As every HDR monitor to date suffers from heat dissipation issues, and very bright pixels generate a lot of heat, every monitor has a MaxFALL value. When the image hits MaxFALL it’ll start to clamp down so the display isn’t damaged by excess heat. That’s what we’re seeing above: the white square is coded to be the brightest white possible, and the monitor is happy to reproduce that intensity… to a point. Once the image’s MaxFALL value exceeds the television’s MaxFALL value, the television will attempt to save itself by reducing overall image brightness. And every television and on-set monitor will have a different MaxFALL limit that is governed by its maximum brightness level and how well its manufacturer thinks it can dissipate heat.

My rule of thumb is that ABL circuits tend to activate when more than 10% of the screen reaches maximum intensity, but larger highlight areas of lesser intensity can also trigger ABL protection.
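
The rule of thumb can be turned into a quick check. This sketch assumes a frame is available as rows of per-pixel nit values and treats anything within 5% of the display's peak as "maximum intensity"; that tolerance, the 10% limit and all names are my own assumptions, not a published ABL specification.

```python
def bright_fraction(frame, peak_nits, threshold=0.95):
    """Fraction of pixels at or above threshold * peak_nits."""
    pixels = [v for row in frame for v in row]
    bright = sum(1 for v in pixels if v >= threshold * peak_nits)
    return bright / len(pixels)


def may_trigger_abl(frame, peak_nits, limit=0.10):
    """True when more than `limit` of the screen sits near peak brightness."""
    return bright_fraction(frame, peak_nits) > limit


def white_square_frame(square, size=10, peak=1000.0, base=50.0):
    """Test pattern: a peak-white square in the corner of a dim frame."""
    return [
        [peak if r < square and c < square else base for c in range(size)]
        for r in range(size)
    ]


small = white_square_frame(2)  # 4 of 100 pixels at peak
large = white_square_frame(4)  # 16 of 100 pixels at peak

print(may_trigger_abl(small, 1000.0))  # False
print(may_trigger_abl(large, 1000.0))  # True
```

A check like this only flags the simplest case. It will not catch larger areas of lesser intensity, which can also trigger ABL.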

It’s important to keep this in mind when shooting HDR. Large, bright highlight areas may cause ABL dimming to occur, and if these are important for artistic effect then it may be important to increase ambient light levels to compensate for this. Otherwise, exposing those areas to be less intense will retain detail in the rest of the scene.

It should rarely be necessary to drive large screen areas to maximum intensity. The brightest highlights should generally be the smallest, as they tend to stand out better against a wide variety of backgrounds.

Remember, any neutral tone that is two stops brighter than middle gray is going to appear white. Beyond that, you’re painting with intensity and creating an artistic statement that physically impacts viewers while battling the limitations of display technology.
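
The arithmetic behind that statement is simple, since each stop doubles the light. The sketch below assumes the conventional 18% reflectance for middle gray; the helper names are hypothetical.

```python
import math


def stops_to_factor(stops):
    """Linear light multiplier for a change of `stops` (one stop doubles)."""
    return 2.0 ** stops


def factor_to_stops(factor):
    """Number of stops represented by a linear light multiplier."""
    return math.log2(factor)


MIDDLE_GRAY = 0.18  # assumed conventional reflectance of middle gray

# Two stops over middle gray is four times the light: 72% reflectance.
two_stops_over = MIDDLE_GRAY * stops_to_factor(2)
```

At 72% reflectance a neutral surface already reads as white, which leaves everything above it for highlights.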

Care should be taken to prevent large bright backgrounds, such as windows, from reaching the maximum intensity of the average consumer monitor. If they are a small part of your wide shot, plan ahead so they don’t become a large part of a closeup. Failing to plan for this may cause your colorist to make some compromises across both shots. (Some level of brightness mismatch is okay as we often see this happen optically: out-of-focus specular highlights drop by about one stop in brightness as they diffuse across a dark background, and of course we cheat closeup lighting all the time.)

Watching for MaxFALL excursions is only one of the many reasons it helps to have an HDR monitor on location. HDR is prone to motion judder at fast panning speeds due to HDR’s increased contrast, especially at frame rates as slow as 24fps (120fps has tested well for broadcasting 4K sports). Movement at slow frame rates also reduces resolution in finely detailed images due to motion blur: this can be seen in 4K sports programming shot at 24, 25 or 30fps, where richly textured grass dissolves into a blur as soon as the camera moves. HDR monitors are especially useful for critically evaluating and/or eliminating lens flares, which are brighter, more saturated, and potentially more distracting in HDR than in SDR. Veiling glare can also eliminate the HDR effect in dark shadows, and should generally be avoided.

NOT FOR 4K ONLY

Rec 2100, which defines modern HDR, does not specify resolution. It is entirely possible that we could see 1920×1080 HDR televisions come to market. 4K televisions have become so cheap, though, that it’s imperative that we monitor on set in 4K when shooting HDR. The difference in resolution, in conjunction with enhanced dynamic range and perceived constant contrast, does not allow for mistakes: everything in the frame will be visible in 4K.

The Canon DP-V2410 4K monitor is positioned well as a low-cost and physically manageable on-set monitor, as brighter monitors tend to have a larger footprint and require more power. (The next generation monitor, the DP-V2420, qualifies as a Dolby Vision mastering monitor and complies with the ITU-R BT.2100-0 HDR standard, which specifies a peak luminance of 1,000 nits and a minimum luminance of 0.005 nits. It’s basically the post version of the V2410, although it could be used on location as well.)

As part of my research I asked whether it was possible to use a consumer HDR television as a cheap on-set reference monitor. I learned that this is not currently possible as consumer televisions receive HDR signals over HDMI, which carries Dolby® or HDR10 metadata—created in a color grade—that is crucial to shaping the HDR image to fit the television’s capabilities. Cameras don’t output this data, and without it consumer televisions will default to SDR.

There is a possibility that tools will be developed in the future to overcome this problem.

THINGS TO REMEMBER

- The color gamut of a professional monitor will likely approximate P3. In spite of this, always shoot to the largest color gamut available for future proofing.

- Color gamuts are three dimensional. Colors will saturate differently across varying luminance levels.

- Out-of-range highlights can be assessed by toggling Canon’s HDR range.

- OLED monitors work well in perfect darkness. LCD monitors work well in low ambient light.

- Large bright areas that push a monitor beyond its Maximum Frame Average Light Level (MaxFALL) will cause it to dim in order to avoid heat damage. This limit varies from monitor to monitor. It can be avoided by limiting maximum brightness to small highlights, or by raising fill light levels to compensate for possible monitor dimming. The 10% rule (ABL activates when 10% of the screen reaches maximum intensity) is a good general guideline, but not an absolute rule.

- HDR is unforgiving. Always monitor in 4K in a color gamut not less than P3.

The author wishes to thank the following for their assistance in the creation of this article.

Canon USA

David Hoon Doko

Larry Thorpe

Disclosure: I was paid by Canon USA to research and write this article.
