The use of AI for upscaling is an emerging and competitive field and Kimball Thurston, Robin Melhuish and Patrick Cooper, who together formed the company Zeoshi, believe they might just have the edge – and I don’t think they’re wrong.

Their background is impressive: they spent many years at renowned film restoration company Lowry Digital, which was recognised with a technical Academy Award around the same time its founder, John Lowry, passed away.

There they were involved in restoring hundreds of millions of frames from some of the biggest films in history, from Star Wars to Disney to Bond, as well as working with NASA on the Apollo moon landing footage. They also worked closely with James Cameron, someone who loves to push the limits of technology. They had two high-end film scanners, a film recorder and over 600 Power Mac G5s running some incredibly complicated code in parallel. Staff were a challenge to train (the manual was 200 pages long!), and it is safe to say that they pushed traditional algorithms as far as they could go.

AI and neural nets

AI would seem the natural next step for this team, with neural nets and machine learning already beating older algorithms and getting better all the time. Their ambition is to use AI for a number of applications and Zeoshi Uprez is just the start. Other applications might include HDR, which would meet another industry need. But as they point out, it’s upscaling that is the compelling business case right now, with over half of US households owning a 4K TV, yet only about 7% of total streaming content available in 4K. This number might seem surprising, until you remember the vast libraries of older HD and even SD content that some of the streamers have available.

One simplified model

There are different approaches to building AI models for upscaling. The popular end-user software Topaz Video Enhance AI, which I reviewed, offers 24 models to choose from, with multiple parameters within each model. The Zeoshi team have taken a different approach: build one model and make it parameter-free. That makes it ready to run at the click of a button on any amount of content thrown at it, whether animation or live action, and crucially, without the user needing to understand anything about it.

Building something this complex while making it so easy to use is easier said than done. It is true that there is plenty of readily available research into AI upscaling in the scientific community and some incredible results are being seen. If you’re interested in following that in a fairly accessible way, I recommend the YouTube channel Two Minute Papers from Dr. Károly Zsolnai-Fehér. One recent video, for example, shows off startling image re-creation from the presumably fairly clever Google Brain Team, working with images as small as 64×64 and getting them to 1024×1024 (that’s 16x upscaling in each dimension!).
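To put that 16x figure in perspective, here is a minimal sketch of the arithmetic. The function name `upscale_factors` is my own for illustration and has nothing to do with the Google research code; it simply compares the per-side factor with the factor in total pixel count.

```python
def upscale_factors(src, dst):
    """Return (linear factor per side, pixel-count factor) for a resize.

    src and dst are (width, height) tuples in pixels.
    """
    src_w, src_h = src
    dst_w, dst_h = dst
    linear = dst_w / src_w
    pixels = (dst_w * dst_h) / (src_w * src_h)
    return linear, pixels


linear, pixels = upscale_factors((64, 64), (1024, 1024))
print(f"{linear:g}x per side, {pixels:g}x the pixels")
# prints "16x per side, 256x the pixels"
```

In other words, 16x per side means the output holds 256 times as many pixels as the input, so the vast majority of what you see has to be inferred by the model.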

However, a lot of what can be achieved under controlled conditions does not translate into real-world usage, and a scientist does not necessarily have the eye to know what high-end film and video should look like. Also, a lot of the research is for single images, and film has additional complexity in, for example, movement-dependent motion blur.

With their specific background, the Zeoshi team are bridging the gap between the cutting edge science and the aesthetics of the film industry. There’s also the not insignificant matter of having worked with millions of the best frames Hollywood has ever produced when deciding how to train your model to be aesthetically pleasing and consistent with original creative intent.

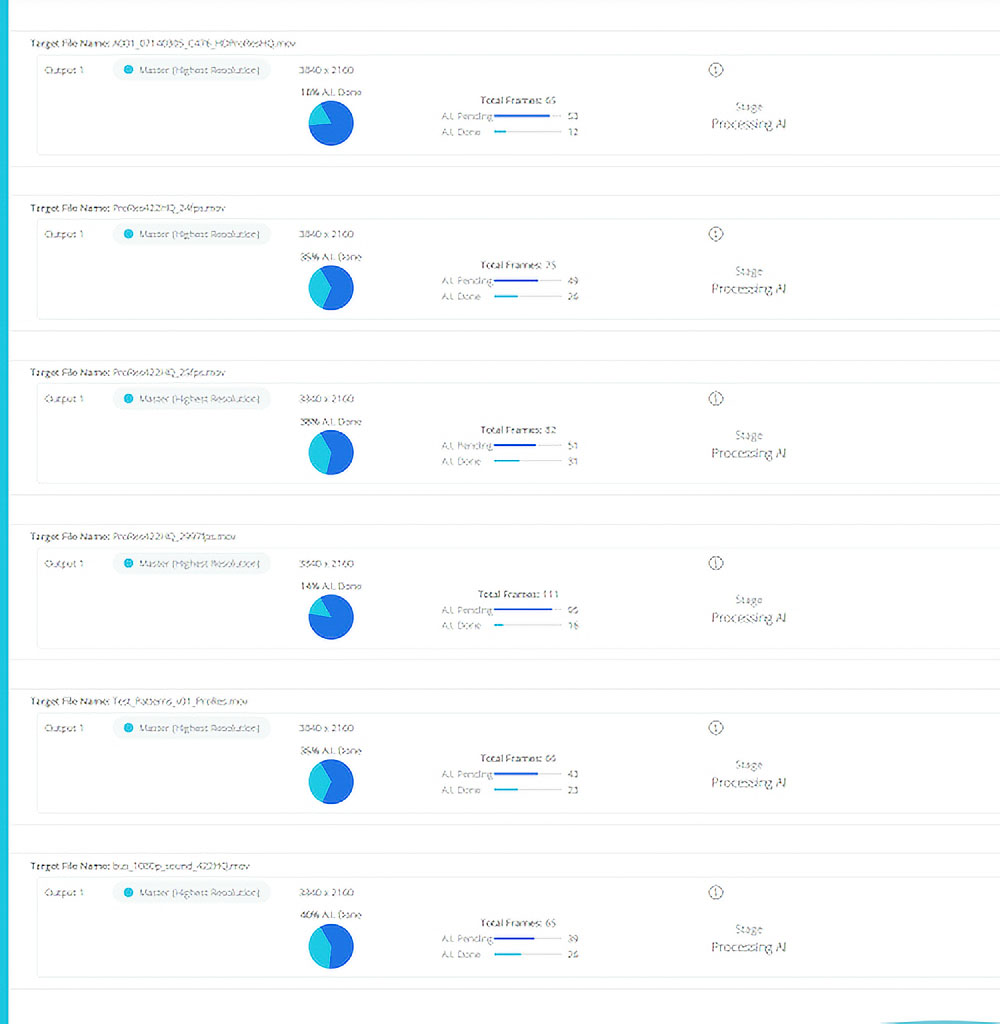

Cloud compute

There are also different approaches when it comes to actually crunching the data of complicated machine learning models. My personal computer can render a few shots using Topaz, but what if I have a lot more content? Zeoshi decided to build their model in the cloud – specifically AWS – where you can basically have as many graphics cards and CPUs as you need running in parallel to do the heavy lifting. This means it can be spun up for as much content as you have, as well as providing industry standard security.
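As a rough illustration of why parallel cloud compute scales so well for this kind of job, here is a minimal sketch of dividing a film's frames between workers. This is not Zeoshi's actual architecture, which I have not seen; `split_frames` and the worker count are hypothetical, and a real video model may need overlapping frames at chunk boundaries for temporal consistency.

```python
def split_frames(total_frames, workers):
    """Split frames 0..total_frames-1 into contiguous (start, end) chunks.

    `end` is exclusive. Chunks differ in size by at most one frame, and
    no empty chunks are returned if there are more workers than frames.
    """
    base, extra = divmod(total_frames, workers)
    chunks = []
    start = 0
    for i in range(workers):
        size = base + (1 if i < extra else 0)
        if size == 0:
            break
        chunks.append((start, start + size))
        start += size
    return chunks


# A 90-minute film at 24 fps is 90 * 60 * 24 = 129,600 frames.
film_frames = 90 * 60 * 24
chunks = split_frames(film_frames, workers=100)  # 100 workers is an assumed figure
print(len(chunks), chunks[0], chunks[-1])
# prints "100 (0, 1296) (128304, 129600)"
```

With 100 workers, each one handles 1,296 frames rather than one machine grinding through all 129,600, which is the basic appeal of spinning up more hardware as the content grows.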

A quick test

Having previously tested a number of different upscaling solutions, I ran the same test through Zeoshi. It passed with flying colours, beating rivals such as Topaz, Pixop and DaVinci Resolve and producing an image barely distinguishable from the original.

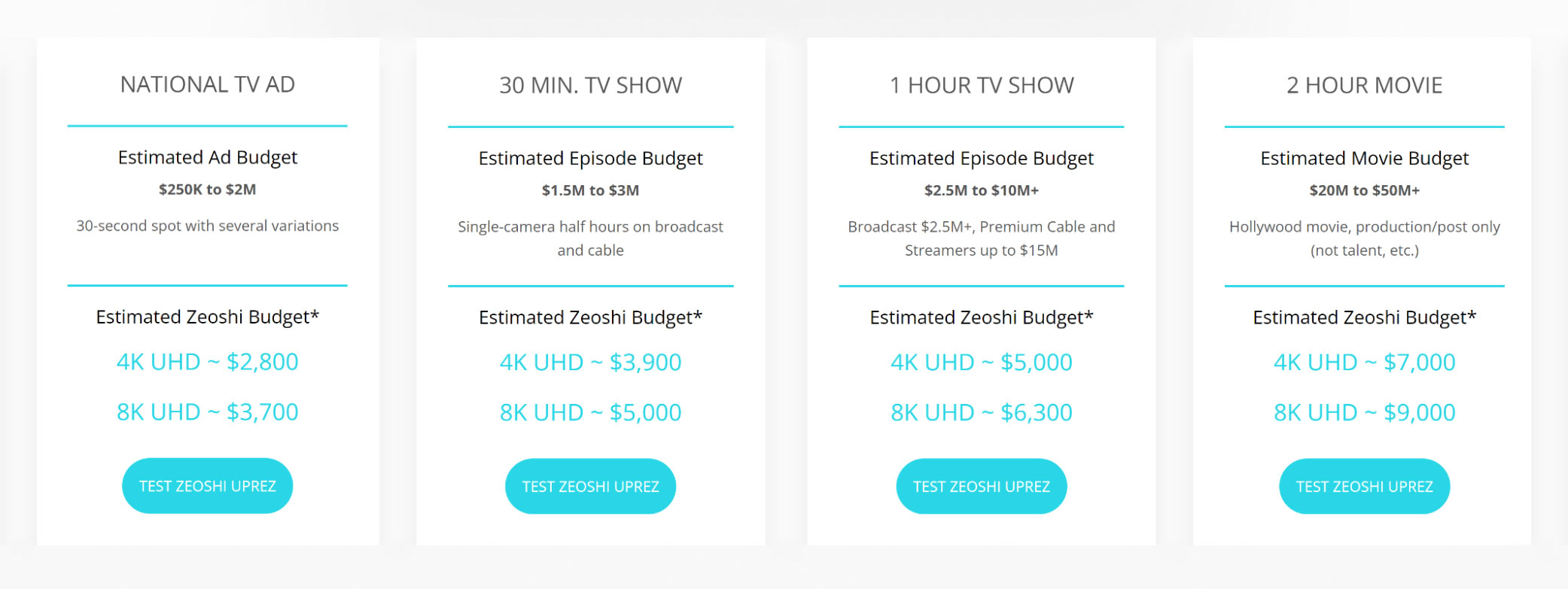

Pricing

Pricing is targeted at heavy hitters – production companies, studios and streamers wanting to uprez an entire commercial or film or even their entire back catalogue. As things stand, all the big streamers have hundreds of hours of HD content. As consumers increasingly expect 4K (and will expect 8K soon enough), you can easily imagine some of the big players making the call to start uprezzing their material.

I did see a little “Re-mastered in 4K” badge on a film on Apple TV+ the other day. It’s surprising to me that we haven’t already seen a lot more of that across the streaming services. I would have thought they’d see the value in it as a way to distinguish themselves from their competitors, especially given how good the upscaling tech already is.

A recent example of AI upscaling at work is Peter Jackson’s “Get Back” documentary on The Beatles, restoring the original 16mm film (if you’re interested here is a before and after). To my eye it was a bit too strong at times, but it was impressive nonetheless. More than that, its modern feel makes you feel much more like you’re there with them.

Rethinking resolution

Zeoshi have a vision that goes beyond upscaling old HD masters. They believe that machine learning is so powerful, it could affect production itself. The argument goes like this: it costs money to shoot at a higher resolution. Cameras cost more, storage requirements are larger, faster hard drives and more powerful computers are needed to deal with the footage, from editing through to VFX and grade. Transfers take longer. Everything is more difficult and more costly.

Now imagine you have a production that needs to deliver in 4K. You shoot in HD, edit in HD, do all post and VFX in HD and right at the end, upscale it to 4K at a quality that to the human eye looks like it had been shot in 4K all along. The kicker is this: the money you save can go into things that improve the image, such as better crew, better lighting, better lenses and better locations.

If doubling resolution from HD to 4K feels like a stretch, consider the Arri Alexa Mini, which shoots at a maximum resolution of 3.4K. Many DoPs swear by the Arri sensor and would prefer to shoot on a Mini if the budget didn’t stretch to the Mini LF. In this case it would be a much smaller jump to 4K and even more likely to pass unseen.
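To see how much smaller that jump is, here is a minimal sketch comparing the two cases by frame width. I am assuming "4K" means a 3840-pixel-wide UHD deliverable and that a 3.4K frame is roughly 3424 pixels wide; both figures are my assumptions for illustration, and `linear_scale` is a made-up helper name.

```python
UHD_WIDTH = 3840  # assumed 4K (UHD) delivery width in pixels

# Source widths in pixels; the 3.4K width is an assumption for illustration.
sources = {
    "HD": 1920,
    "3.4K": 3424,
}


def linear_scale(src_width, dst_width=UHD_WIDTH):
    """Per-side upscale factor needed to reach the delivery width."""
    return dst_width / src_width


for name, width in sources.items():
    scale = linear_scale(width)
    print(f"{name}: {scale:.2f}x per side, {scale * scale:.2f}x the pixels")
# prints:
# HD: 2.00x per side, 4.00x the pixels
# 3.4K: 1.12x per side, 1.26x the pixels
```

So where HD to 4K asks the model to produce four times the pixels, 3.4K to 4K asks for only about a quarter more, which is why it should be far easier to get away with.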

It’s a very interesting idea and one you could imagine many would reject initially, simply because they would not trust the idea that you can upscale without issue. But the team at Zeoshi is here to say it can be done and it would only take a few early adopters to pave the way for this to be something we don’t bat an eyelid at.
