In an age of dizzying technological churn, it’s hard to know which emerging fringe tech is worth paying attention to. Not so with virtual production: anyone who’s experienced it can instantly see where things are headed. So what exactly is it, and when will you, the indie filmmaker, be able to get your hands on it?

Bringing the virtual into the physical

Most people, if they are aware of virtual production at all, think of it as some kind of previsualization system, or picture Peter Jackson on a greenscreen set looking at CG trolls through a VR headset. All of this is true, and it is part of the history of virtual production techniques that has brought us to today, but the virtual production of tomorrow will reach much further into the physical realm.

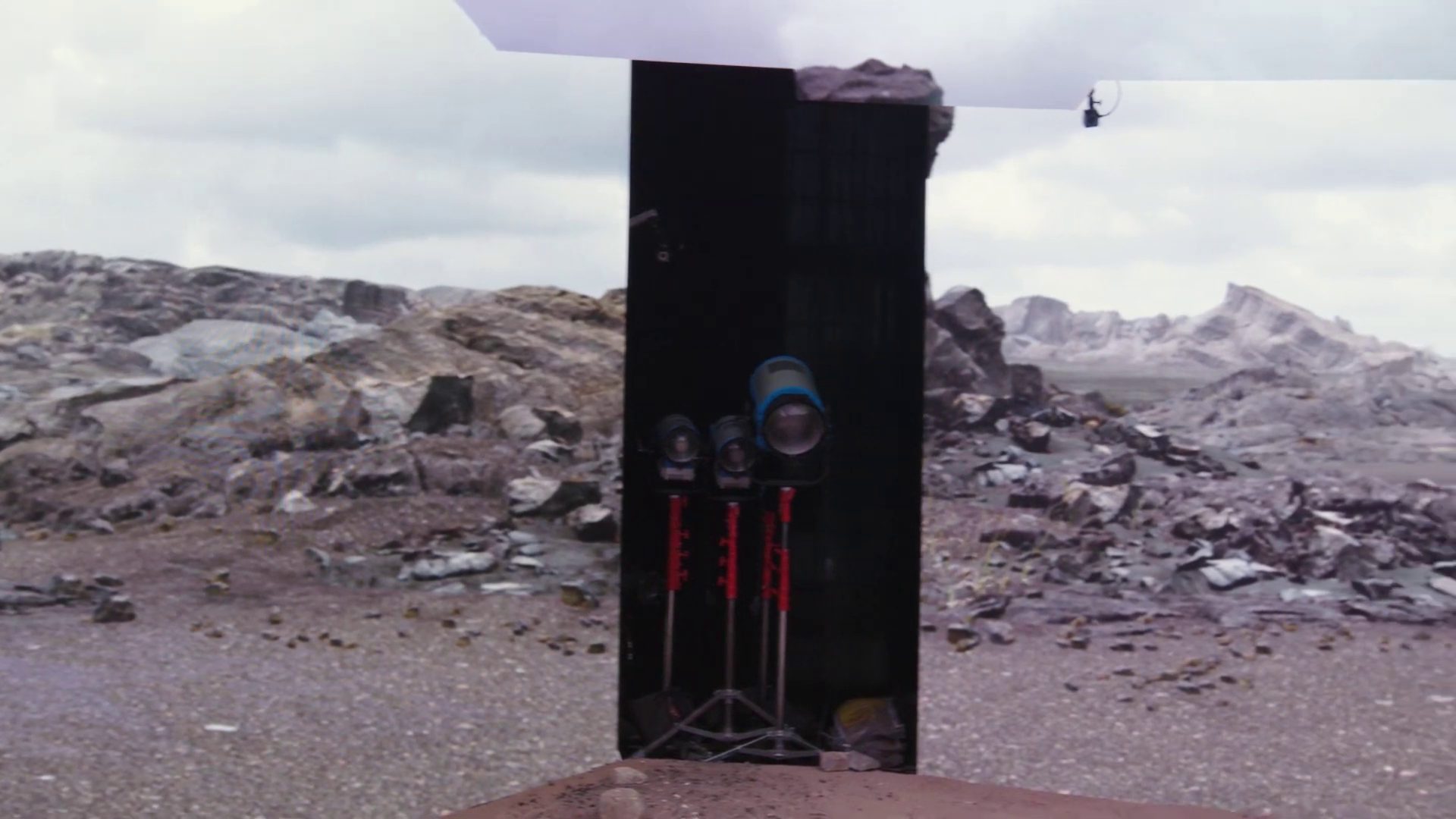

Lux Machina, Profile Studios, Magnopus, Quixel, and ARRI, in collaboration with Epic Games, have been showing a prototype of a virtual production set based on real-time projection of background environments via massive LED panels. At its most basic level, this is an updated version of the old rear-projection systems used for shooting in-car scenes, where moving footage of the background was placed behind the actors. This is, however, a gross oversimplification. The system is superior to its historical predecessor in every way.

The benefit of using the LED light panels is that they actually provide a physical lighting source for the live action actors and props.

Essentially, we’re dealing with the physical equivalent of what game developers call a “skybox,” a cube containing the HDR lighting environment intended to illuminate the game set. Full-scale LED panels project the background image from stage left, stage right, the main rear projection, and the ceiling. Presumably foreground lighting could be provided by either more traditional stage lighting sources, or perhaps a matrix of LED PAR cans that can simulate the portion of the HDR coming from the front of the scene. (An alternative option is a curved background screen configuration.)

What’s even more significant is the ability for the background to update its perspective relative to the camera position. The camera rig is tracked and its ground-truth position is fed into Unreal Engine, which updates the resulting projections to the LED panels. The specific region of the background directly within the camera’s frustum is rendered at a higher resolution, which looks a little strange on set, but obviously makes sense from a processing efficiency standpoint (the entire setup requires three separate workstations running Unreal Engine: one for the two side stage panels, one for the background panel, and the third for the ceiling).
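To see why the tracked camera position matters, it helps to look at the geometry. A flat wall only shows correct perspective if the image on it is rendered through an off-axis (asymmetric) frustum whose corners line up with the wall as seen from the camera. The Python sketch below illustrates that general technique; it is not taken from Epic's setup, and the `Wall` type, the `off_axis_frustum` function, the coordinate convention (wall in the z = 0 plane, camera at positive z), and all the numbers are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Wall:
    """Hypothetical flat LED wall lying in the z = 0 plane (units: metres)."""
    x_min: float
    x_max: float
    y_min: float
    y_max: float


def off_axis_frustum(wall, eye, near=0.1):
    """Return (left, right, bottom, top) frustum extents at the near plane.

    `eye` is the tracked camera position (x, y, z), with z being the
    distance from the wall. The wall corners are projected onto the near
    plane by similar triangles, which skews the frustum as the camera moves.
    """
    ex, ey, ez = eye
    if ez <= 0:
        raise ValueError("camera must be in front of the wall (z > 0)")
    scale = near / ez
    left = (wall.x_min - ex) * scale
    right = (wall.x_max - ex) * scale
    bottom = (wall.y_min - ey) * scale
    top = (wall.y_max - ey) * scale
    return left, right, bottom, top


# Illustrative numbers only: a 10 m x 4 m wall, camera 5 m back.
wall = Wall(x_min=-5.0, x_max=5.0, y_min=0.0, y_max=4.0)
centred = off_axis_frustum(wall, eye=(0.0, 2.0, 5.0), near=1.0)
dollied = off_axis_frustum(wall, eye=(2.0, 2.0, 5.0), near=1.0)
```

With the camera centred the frustum is symmetric; after a two-metre move to the right it becomes lopsided, and that skew is what produces the parallax shift on the wall.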

The perspective shift means that backdrops feel much more natural during sweeping jib and crane moves. The real-time nature of the projection also means there’s the potential to have secondary movement like a breeze blowing through trees, or last-minute directorial changes, like literally moving a mountain to improve the framing of the shot.

Lighting can also be changed in an instant. Switching from sunset to sunrise to midday is as simple as dialing up a different sky. Changing the direction the sun is coming from is as simple as rotating the sky image. Try doing that in the real world.
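Rotating the sky really can be that simple. If the sky is stored as an equirectangular (latitude/longitude) image, each column corresponds to a compass direction, so turning the sky about the vertical axis is just a wrap-around shift of the columns. The sketch below assumes that storage format and uses a tiny list-of-lists "image"; the function name and the direction convention are illustrative, not from any particular engine.

```python
def rotate_sky(sky_rows, degrees):
    """Rotate an equirectangular sky image about the vertical axis.

    `sky_rows` is a list of rows, each row a list of pixel values whose
    columns span 360 degrees of azimuth. Positive angles shift the image
    to the right (an assumed convention); pixels wrap around the seam.
    """
    width = len(sky_rows[0])
    shift = round(degrees / 360.0 * width) % width
    if shift == 0:
        return [list(row) for row in sky_rows]
    return [list(row[-shift:]) + list(row[:-shift]) for row in sky_rows]


# One-row toy sky, eight columns wide; the 9 marks the sun.
sky = [[0, 0, 9, 0, 0, 0, 0, 0]]
quarter_turn = rotate_sky(sky, 90)  # sun moves two columns to the right
```

A real HDR sky has thousands of columns and floating-point pixels, but the operation is the same.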

The beginning of the end for exotic travel?

One of the most exciting aspects of this virtual production stage is the potential to take a script with globetrotting locations and bring the budget from the nine-figure realm down to an indie level. Imagine being able to shoot in Paris, New York, the Everglades, and the deserts of the Middle East from the same soundstage, all in the course of a three-week production schedule. We’re not there yet, but it seems likely that over the next three to seven years the GPU compute power, the sophistication of the software, and the display tech will get us there. Crowd simulation software could even provide digital extras for the mid-ground and far-ground.

And then there are the exotic sci-fi and fantasy scenes. Such sets are extremely complex to build, and greenscreen composites are often unsatisfying. The CG often feels a little too ethereal. With the CG set illuminating actors and foreground props on the virtual production stage, there’s an immediate improvement in the way the real and computer-generated scene elements mesh.

No doubt Tom Cruise will still be filmed hanging from real skyscrapers in Dubai, but for indie features and episodics hungry for a “bigger” look, the virtual production soundstage will almost certainly kill the exotic production budget. Film crew members will need to find themselves a cosy travel magazine show to work on if they want any jet-setting perks.

Limitations

I’ve been careful to talk about this as the future; there are a couple of limitations that hold it back from being 2019’s solution to location shooting. Part of this is simply the nascent state of the tech; everything is still very much in the prototype stage. This is not to say that it can’t be used for production today, just that there are certain compromises that would need to be accepted.

I was extremely impressed with Epic’s David Morin, who is spearheading the virtual production initiatives at Epic, and his cautious approach to promoting the venture. He’s clearly mindful of the way overhype has caused the bubble to burst too early on many VR and AR technologies (along with the entire stereoscopic industry) and is thus being careful not to reach for hyperbole when talking about the current state of the art.

To me, the biggest present limitation seems to be the display technology. Don’t get me wrong: the LED panels look great. They simply lack the dynamic range to reproduce the 14-16 stops a modern cinema camera can capture in the real world. The dynamic range is probably adequate for most television and OTT content, but with the industry pushing hard into HDR standards, a reduced contrast range will have limited appeal.
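For a sense of scale, a stop is a doubling of light, so dynamic range in stops is simply the base-2 logarithm of the contrast ratio between the brightest and darkest levels. The sketch below does that arithmetic. The function names are made up for the example, and the panel figures in it are placeholders rather than measurements of any real product.

```python
import math


def stops(peak_nits, black_nits):
    """Dynamic range in stops for a given peak and black luminance."""
    return math.log2(peak_nits / black_nits)


def contrast_for_stops(n_stops):
    """Contrast ratio (peak / black) needed to cover `n_stops` stops."""
    return 2.0 ** n_stops


# Placeholder numbers, NOT a real panel spec: 1024 nits peak, 1 nit black.
hypothetical_panel = stops(1024.0, 1.0)   # 10.0 stops
needed_for_camera = contrast_for_stops(14)  # 16384.0, i.e. about 16,000:1
```

Covering 14 stops takes a contrast ratio of roughly 16,000:1, and 16 stops takes about 65,000:1, which shows how quickly the requirement grows with each extra stop.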

A possible compromise is the system’s ability to instantly convert the background to a greenscreen. This allows directors to visualize the scenery on-set, while still performing the final composite as a post process with floating point precision. Of course, at that point you lose some of the benefit of the interactive lighting provided by the panels. The scene can still be lit from above and the sides, but much of the background illumination will obviously be replaced with greenscreen illumination. Still, a significant improvement on a traditional greenscreen.

(I can’t help feeling that by pulsing the refresh rate of the LED panels the engineers could extract some kind of segmentation map, which would allow a higher dynamic range background to be composited in post, while still using the LED panels to illuminate on set…)

The solution to this problem depends mainly on future advances in display hardware. Another limitation, more intrinsic to the system, is the directionality of the light sources. This is an issue that also affects HDR lighting in CG scenes. The panels can simulate the general direction from which a light source is coming, but they can’t angle that light to illuminate only a specific object or set of objects in the scene. You couldn’t, for example, simulate a light raking across one actor’s face without it also affecting another actor at the same time.

This is the kind of granular control DPs and gaffers expect on a shoot. That’s not to say traditional lighting hardware can’t augment the virtual production lighting, of course. Additionally, the virtual production stage lends itself to outdoor scenes. Exteriors favor the sort of ambient lighting the virtual production system excels at, so directional lighting control may not be as important for these shots as it would be for interiors. This isn’t so different from the way many outdoor scenes are shot now, with bounce cards and reflectors being used to control lighting rather than high-powered practical lighting.

Baking for speed

Obviously, an essential component in the adoption of virtual production is the realism of the computer-generated scenery. There are three main ways to produce realistic background scenes in CG: baking, Unreal Engine’s dynamic lighting system, and real-time raytracing.

In baking, lighting and shadow information is rendered at offline speeds, then applied as a texture map to the scene geometry. Think of it like shrink-wrapping artwork onto a car, only in this case the artwork you’re shrink-wrapping includes shadows and shading detail. And instead of wrapping a car, you’re potentially shrink-wrapping an entire landscape.

The benefit of this method is that you can take as long as you like to render the imagery, because the render doesn’t need to happen in real-time. The real-time renderer then has very little heavy lifting to do, since most of the lighting is already calculated and it’s simply wallpapering the scene geometry with the pre-rendered images.

The downside of this method is that it locks down your set. As soon as you move a background set piece, the lighting and shadows need to be recalculated. It’s also unable to account for specular highlights and reflections. These change with the camera’s POV at any given time, and so need to be calculated in real-time rather than baked into the scene geometry’s textures.
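The split between what can and can't be baked falls straight out of the shading math. In the simplified sketch below, which uses the textbook Lambert and Blinn-Phong models rather than anything specific to Unreal Engine, the diffuse term depends only on the surface normal and the light direction, so it can be computed once and stored in a texture. The specular term also takes the direction to the camera, so it changes whenever the camera moves. All function names are illustrative.

```python
import math


def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0.0:
        raise ValueError("cannot normalize a zero-length vector")
    return tuple(c / length for c in v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def lambert_diffuse(normal, to_light):
    """View-independent term: no camera input, so it is safe to bake."""
    return max(0.0, dot(normalize(normal), normalize(to_light)))


def blinn_phong_specular(normal, to_light, to_camera, shininess=32.0):
    """View-dependent highlight: must be recomputed as the camera moves."""
    l = normalize(to_light)
    c = normalize(to_camera)
    half = normalize(tuple(li + ci for li, ci in zip(l, c)))
    return max(0.0, dot(normalize(normal), half)) ** shininess


# A surface facing straight up, lit from directly above.
normal, to_light = (0.0, 0.0, 1.0), (0.0, 0.0, 1.0)
baked = lambert_diffuse(normal, to_light)
head_on = blinn_phong_specular(normal, to_light, to_camera=(0.0, 0.0, 1.0))
off_axis = blinn_phong_specular(normal, to_light, to_camera=(1.0, 0.0, 1.0))
```

The `baked` value is the same wherever the camera sits, while the highlight is at full strength head-on and falls away sharply once the camera swings to the side.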

Baking, then, can work for situations where the background set design is locked down and you’re not dealing with highly reflective objects, like metallics.

The next alternative—Unreal Engine’s Dynamic Lighting system—uses the traditional “cheats” animators have been using for years to simulate the results of bounced light without actually tracing individual rays. Using shadow maps and techniques like Screen Space Ambient Occlusion (SSAO), real-time changes to lighting can look quite realistic, again depending on the subject matter. The more subtle the shading and scattering properties of background scene surfaces, the harder the resulting real-time render is to sell to an audience.

What we really need is real-time raytracing.

RTX in its infancy

Raytracing is, as the name suggests, the process of tracing virtual rays of light as they bounce around the scene. Because of the way microfaceted surfaces scatter light rays, it requires an enormous amount of calculation. Last year Nvidia announced a new generation of graphics cards with silicon dedicated to real-time raytracing, dubbed RTX.
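Each individual bounce is a small geometry problem: find where a ray hits a surface, then work out which way it leaves. The toy sketch below does this for a single ray and a single sphere with a perfect mirror bounce. It is only meant to show the kind of calculation involved; the function names are illustrative and have nothing to do with the RTX hardware or its APIs, and a real renderer repeats this millions of times per frame with far more complex surfaces.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def intersect_sphere(origin, direction, center, radius):
    """Distance along the ray to the nearest hit on a sphere, or None.

    Solves the quadratic |origin + t * direction - center|^2 = radius^2.
    """
    oc = tuple(o - c for o, c in zip(origin, center))
    a = dot(direction, direction)
    b = 2.0 * dot(oc, direction)
    c = dot(oc, oc) - radius * radius
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return None  # the ray misses the sphere entirely
    t = (-b - math.sqrt(disc)) / (2.0 * a)
    return t if t > 0.0 else None


def reflect(direction, normal):
    """Mirror a direction about a unit surface normal."""
    d = dot(direction, normal)
    return tuple(di - 2.0 * d * ni for di, ni in zip(direction, normal))


# A ray fired down the z axis at a unit sphere sitting at the origin.
hit_distance = intersect_sphere((0.0, 0.0, -5.0), (0.0, 0.0, 1.0),
                                (0.0, 0.0, 0.0), 1.0)
bounced = reflect((0.0, 0.0, 1.0), (0.0, 0.0, -1.0))
```

Here the ray travels four units before striking the sphere and bounces straight back the way it came.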

Right now, artists and developers are still coming to terms with how to best use the new hardware. I have no doubt that we’ll see some very clever ways of squeezing more realism out of the existing cards, even as we anticipate enhancements in future generations of the hardware.

All that to say, with today’s tech there are certain virtual environments that lend themselves to in-camera real-time production, while others would not pass the same realism test. As RTX evolves, we’ll see just about any environment become viable as a virtual production set.

Too expensive for the independent filmmaker? Perhaps not.

Before you tune out and decide that this is all out of your price range, consider that a day rental for a virtual production studio may not end up being significantly more than a traditional soundstage. Obviously the market will set the price, but there’s no reason to assume that the cost of renting a virtual production stage in the near future will be stratospheric.

Even the expense of creating the environment to project can be quite reasonable. Companies like Quixel allow access to a massive library of scanned objects and backgrounds for just a few hundred dollars a year. Putting together a set can be as simple as designing a custom video game level.

And if you don’t want to create your own set? Stick on a VR headset and do some virtual scouting of predesigned environments, then simply pick the one you want to license for your shoot.

Even more affordable “in-viewfinder” solutions will soon be available for everyday use. These systems are more akin to the early virtual production systems used by Peter Jackson and James Cameron, but they will allow filmmakers to see a representation of the final composited shot through a monitor or viewfinder as they shoot.

Virtual production in your hands today, thanks to Epic’s free Virtual Camera Plugin

Is there any way to get your hands on virtual camera technology today? For free? Well, if you’re interested in virtual production for previsualization, the answer is yes. Last year Epic released a Virtual Camera Plugin for their Unreal Engine editor. You can download both the editor and plugin for free from their website.

With the plugin and an iPad (and a computer running Unreal Engine) you can physically dolly and rotate a virtual camera to experiment with framings. This is a great way to previs upcoming shoots.

Check out moviola.com’s free series on Previs using Unreal to see how you could quickly build an entire replica of your shooting location, and then use the Virtual Camera Plugin to block out your shoot.

For more information on the Virtual Camera Plugin, check out this guide by Epic.

Virtual production is the future

Virtual sets have already become a major part of filmmaking. And not just for your Game of Thrones-style fantasies; plenty of primetime dramas rely on CG backdrops to enlarge environments far beyond what their budgets would permit them to shoot in camera.

Bringing the virtual elements onto the live action set is the next logical step. Ultimately, it’s poised to democratize the use of computer-generated environments the way digital cameras and affordable NLEs have democratized the rest of the filmmaking process. If nothing else, this is definitely a space to watch.
