How does TLCI stack up against the other measures of color accuracy? I’ve argued that its testing methodologies might be suspect, but I’ve never actually looked at data to see how it stacks up against the other common measures.

Recently, though, I realized the data was right in front of me.

Note: This article has been updated.

I’ve written a couple of articles on TLCI. Here’s the first. I’ll link to the second a bit farther down, where it is more relevant. You don’t need to read them to understand this article; I provide them only as background info.

The TLCI Color Rendering Index: Does It Really Work? Color Me Skeptical

THE SETUP

A while back, I was turned on to a database of light color index values at Indie Cine Academy. This website is pure gold. My understanding is that this project started when someone walked around NAB with an AsenseTek Lighting Passport Spectrometer, measured as many LED lights as they could, and then published the results.

This is the most comprehensive database of lighting data I’ve seen anywhere, and it’s hugely valuable—if you have some idea of what the numbers mean. That’s not terribly hard to figure out if you have access to a few of the fixtures on the list and you can build a visual baseline of what you like versus what you don’t, but numbers alone don’t tell the full story. We’d like them to, as we are occasionally forced into making lighting purchases and rental decisions on the basis of numerical data alone, but they don’t.

My question: do any of the common numerical measures of color fidelity give us more accurate information than others?

Indie Cine Academy’s public database provided all the necessary data. As I can be a bit “driven” when looking for an answer to a problem, I found myself copying all the daylight LED numerical data from the database and putting it into an Excel spreadsheet. (Why only daylight? Because, after putting the data from 140+ lights into a spreadsheet, I couldn’t be bothered with the tungsten data. I have only so much time!) I then proceeded to create a series of graphs comparing the different measures to see if any of them were more granular than the others, and whether there were any outliers or if all the measurement systems tracked each other closely. You’re going to see those graphs shortly. First, though, you should understand how these measuring systems work.
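For anyone who would rather script this kind of comparison than build it in Excel, here is a minimal sketch of one way to check how closely two indices track each other: a Pearson correlation per pair of columns. This is not how the graphs in this article were made. The column values are made-up placeholders, not numbers from the database, and the function names are hypothetical.

```python
from math import sqrt

# Placeholder scores for five imaginary lights (NOT values from the database).
scores = {
    "CRI(ra)": [96.0, 93.0, 88.0, 82.0, 75.0],
    "CQS":     [94.0, 91.0, 86.0, 81.0, 73.0],
    "TLCI":    [98.0, 95.0, 87.0, 70.0, 52.0],
}

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

def pairwise_correlations(table):
    """Return {(name_a, name_b): r} for every pair of columns."""
    names = list(table)
    return {
        (a, b): pearson(table[a], table[b])
        for i, a in enumerate(names)
        for b in names[i + 1:]
    }

if __name__ == "__main__":
    for (a, b), r in pairwise_correlations(scores).items():
        print(f"{a} vs {b}: r = {r:.3f}")
```

A value near 1 means two indices rise and fall together. It says nothing about whether they agree on the absolute score, which is why the graphs are still worth looking at.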

The Indie Cine Academy link above is to a page that explains all the AsenseTek readouts available. I didn’t find TM-30-15 Rg to be a very good predictor of the quality of a light, although it does indicate where a light is over- or undersaturated in a way that communicates that information quickly and intuitively. Instead I focused on CRI(ra), CRI(re), CQS, TM-30-15 Rf and TLCI.

CRI

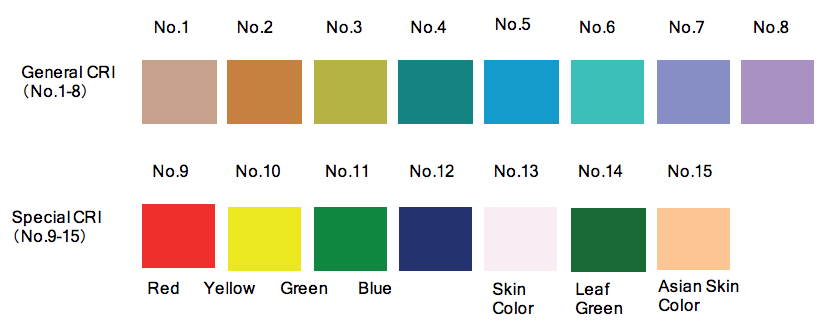

CRI is the oldest of these measures. The classic CRI measurement, CRI(ra), compares how eight color patches appear under a test light source with how they appear under a standard light source. This system works very well for measuring color response for broad spectrum light sources (for example, daylight and tungsten light, or a mixture of the two), but it was never designed to measure LEDs. It is regularly used by marketing departments who want to assign a high rating to their LED fixtures in order to sell more of them, but the patches are too desaturated to measure LEDs properly.

This is because a low-saturation colored surface makes for a very large target. A pure red, for example, will reflect a narrow range of wavelengths, whereas a desaturated red will reflect a broader spectral range that incorporates wavelengths attributed to other colors.

A saturated color target is a small one, and a desaturated color target is a large one.

LED color spikes are very narrow. Revealing where spectral spikes and gaps occur takes a lot of small targets spread across the entire spectrum. CRI(ra) targets are very large ones, and as such they are not very good at revealing the nuances of an LED light.
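The small-target versus large-target idea can be illustrated with a toy model. The sketch below is an assumption-laden simplification: it treats a patch as a bell-shaped reflectance curve and a flawed light as a flat spectrum with one narrow gap. Real patches and real LED spectra are far messier, and all the names and numbers here are hypothetical.

```python
from math import exp

WAVELENGTHS = range(400, 701)  # visible band in 1 nm steps

def gaussian_patch(center_nm, width_nm):
    """Toy reflectance curve: a bell shape centered on one wavelength."""
    return [exp(-0.5 * ((w - center_nm) / width_nm) ** 2) for w in WAVELENGTHS]

def notched_source(center_nm, half_width_nm, floor=0.2):
    """Flat spectrum with output reduced to `floor` inside a narrow gap."""
    return [floor if abs(w - center_nm) <= half_width_nm else 1.0
            for w in WAVELENGTHS]

def reflected(source, patch):
    """Total light returned by a patch under a source."""
    return sum(s * p for s, p in zip(source, patch))

def relative_loss(patch, test_source, reference_source):
    """Fraction of reflected light lost under the test source."""
    return 1.0 - reflected(test_source, patch) / reflected(reference_source, patch)

reference = [1.0] * len(WAVELENGTHS)                      # idealized flat source
test = notched_source(center_nm=630, half_width_nm=10)    # narrow gap in the red

saturated = gaussian_patch(center_nm=630, width_nm=10)    # small target
desaturated = gaussian_patch(center_nm=630, width_nm=60)  # large target

loss_saturated = relative_loss(saturated, test, reference)
loss_desaturated = relative_loss(desaturated, test, reference)
```

In this toy model the narrow patch loses a much larger share of its reflected light than the broad one, because the broad patch still collects plenty of light from the wavelengths on either side of the gap.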

A variation, CRI(re), extends the set to 15 color patches, including several highly saturated hues, to improve accuracy. The shocking thing is that CRI(re) includes flesh tone… which means that the most common CRI measurement, CRI(ra), doesn’t!

CRI(re) includes a red patch, R9 (CRI targets are named “R” followed by a number), that helps indicate whether an LED light emits enough red to reproduce healthy-looking flesh tones, along with a couple of targets that resemble flesh tone hues. Once again, though, most of the targets are very broad, and LED spikes and valleys can be very narrow.
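To see why a weak R9 can hide behind a healthy headline number, here is a small sketch. It relies on two things: the general index is the average of the special indices R1 through R8, and, as an assumption about how the meter reports it, CRI(re) is the average of R1 through R15. The R values are placeholders for an imaginary LED, not measurements.

```python
def average_index(special_indices, last_patch):
    """Mean of the special indices R1..R<last_patch> (1-based, inclusive)."""
    selected = special_indices[:last_patch]
    return sum(selected) / len(selected)

# Placeholder R1..R15 values for an imaginary LED (NOT measured data).
# R9, the saturated red, is deliberately weak.
r_values = [95, 96, 94, 95, 94, 93, 96, 93,   # R1-R8: low-saturation patches
            40, 85, 92, 78, 95, 97, 92]       # R9-R15: extended patches

cri_ra = average_index(r_values, 8)    # general index over R1-R8
cri_re = average_index(r_values, 15)   # assumed: extended average over R1-R15
```

With these placeholder numbers CRI(ra) comes out at 94.5 while the 15-patch average is 89.0. Even the extended average only drops a few points for an R9 of 40, which is one reason to look at R9 on its own.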

CQS

CQS is a newer system that attempts to overcome the weaknesses of CRI. In addition to working in a different color space and using different criteria for determining the final score, it uses a series of 15 highly saturated patches instead of CRI(re)’s 15-patch mix of saturated and desaturated colors. In theory this should provide more granular information when attempting to detect an LED light’s spectral peaks and valleys, but the reality is that LED gaps and spikes are so narrow that even CQS’s narrow targets may be too large.

TLCI

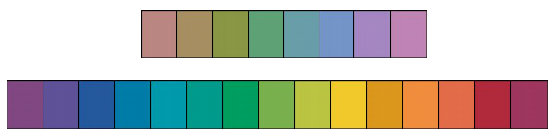

TLCI is the newcomer. Its goals are sound: profile a number of different cameras, build a color model that represents their characteristics, and use that model to calculate how any given light will appear across a wide range of cameras without having to view each light through every camera.

It’s a good idea, but several things gave me pause. One of them was the use of a “Macbeth” chart (X-Rite ColorChecker) as the color reference in building the virtual camera data. While the chart is fairly well understood scientifically, it was meant as a color reference for printing transparency film in magazines. As such, it isn’t necessarily the best chart to examine the spectral output of light sources on video (I might have used a Rec 709 video chart instead), and the chart itself is printed with a matte finish, which tends to broaden the size of the targets by desaturating them. Still, I didn’t have a lot of reason to doubt that it would work. In fact, with 18 color targets, it was possibly more granular than the other measures.

My concern was with the kinds of cameras profiled. One paper notes that nine prism cameras were profiled but neglects to mention single sensor cameras, and another paper mentions that one single sensor camera was profiled but doesn’t say which one. (In fact, none of the cameras were named, apparently due to manufacturer requests.)

I got into a bit of an online argument with one of the developers of TLCI over whether prism cameras saw color the same as single sensor cameras. He said they did; I said they didn’t. I later showed my work in one of my TLCI articles.

TLCI and Camera Color: What a Difference a Prism Block Makes

His insistence that both types of cameras saw the world in the same way concerned me greatly. This is clearly not the case. How could an index designed primarily around one particular type of camera color technology work reliably for a completely different kind of camera? I’m still not sure how much of a difference there might be in TLCI scores between prism and single sensor cameras. It might be more accurate for one than the other. The inclusion of any camera data, though, gives it quite a lead over the other systems.

THE RESULTS

While questions about discrepancies between camera types can’t be answered with this data, I can immediately see how well TLCI tracks with the commonly used indicators of color. First, here’s the data set that I used, if you wish to download it for your own experiments.

Indie Cine Academy Daylight LED Results Spreadsheet

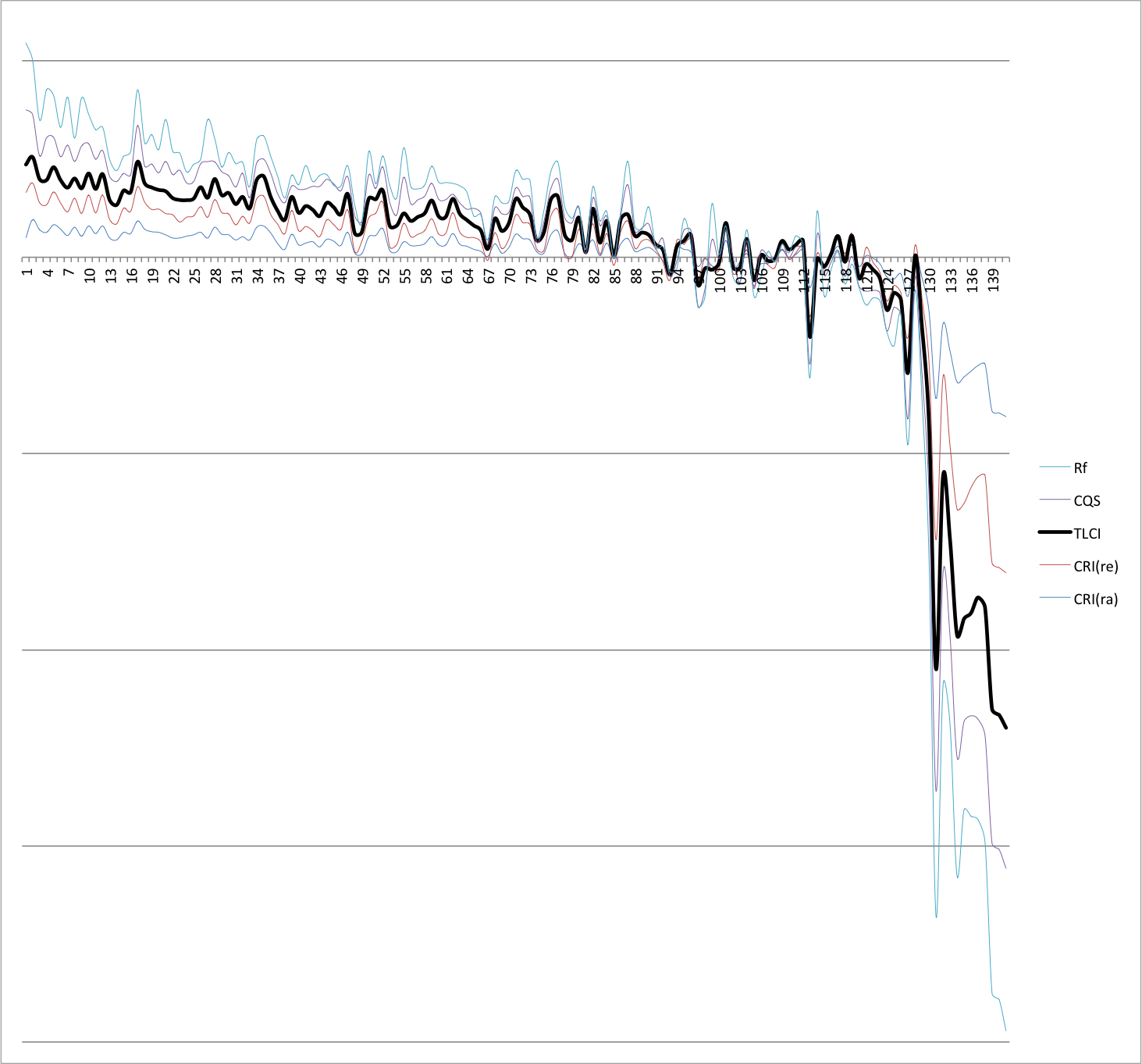

For each light there are multiple measured values, all derived from a single AsenseTek Lighting Passport reading. I focused on CRI(ra), CRI(re), CQS, TM-30-15 Rf, and TLCI.

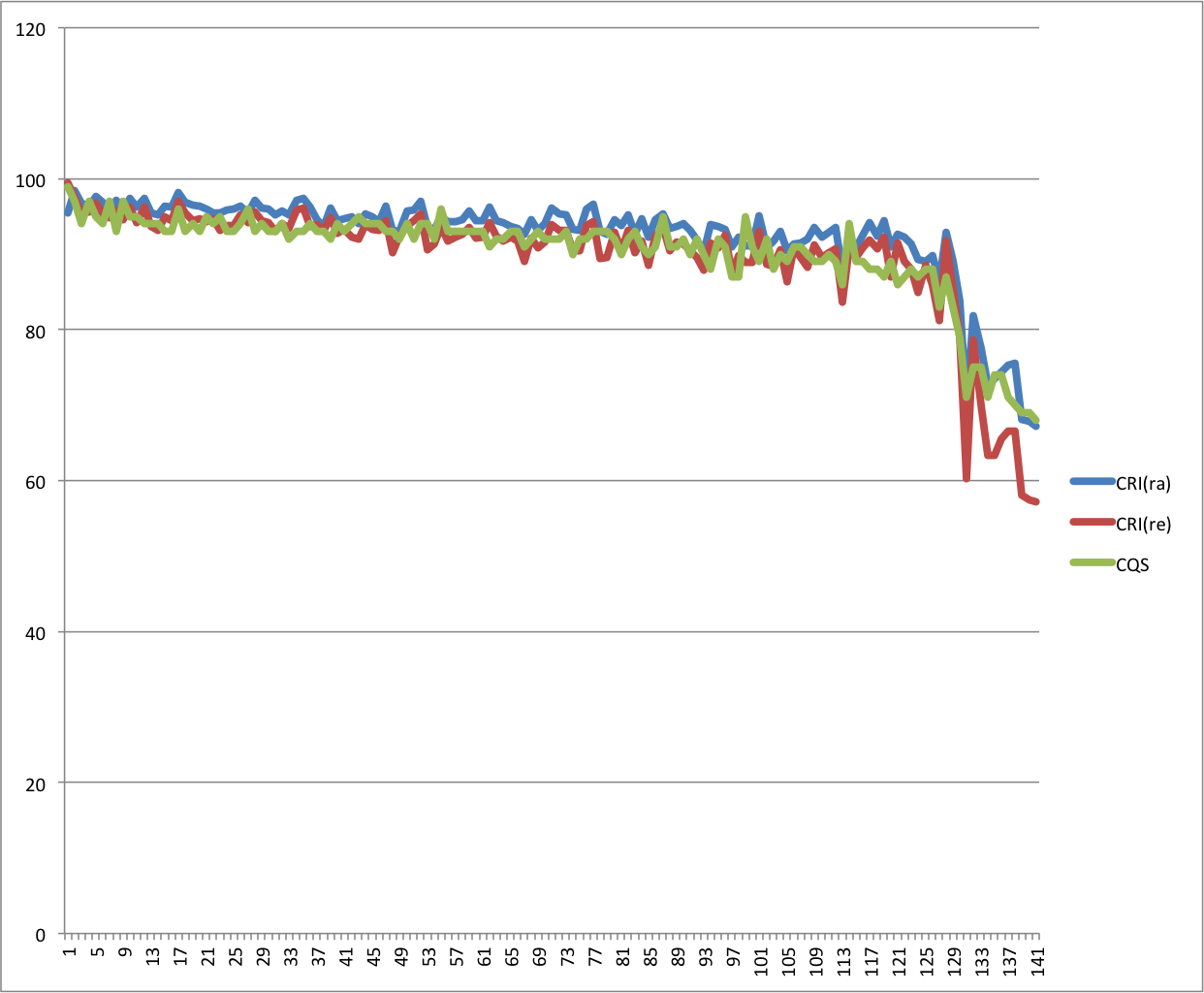

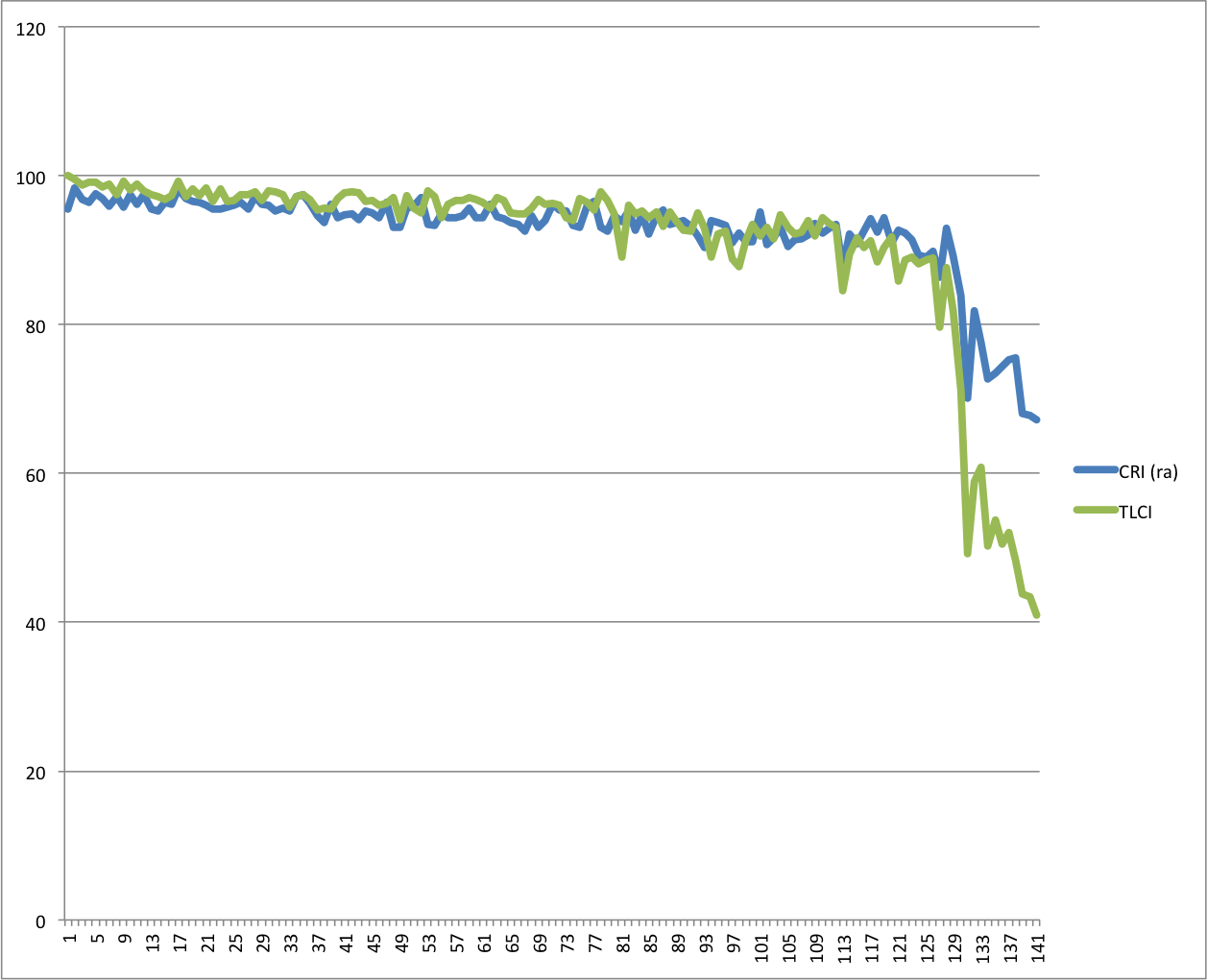

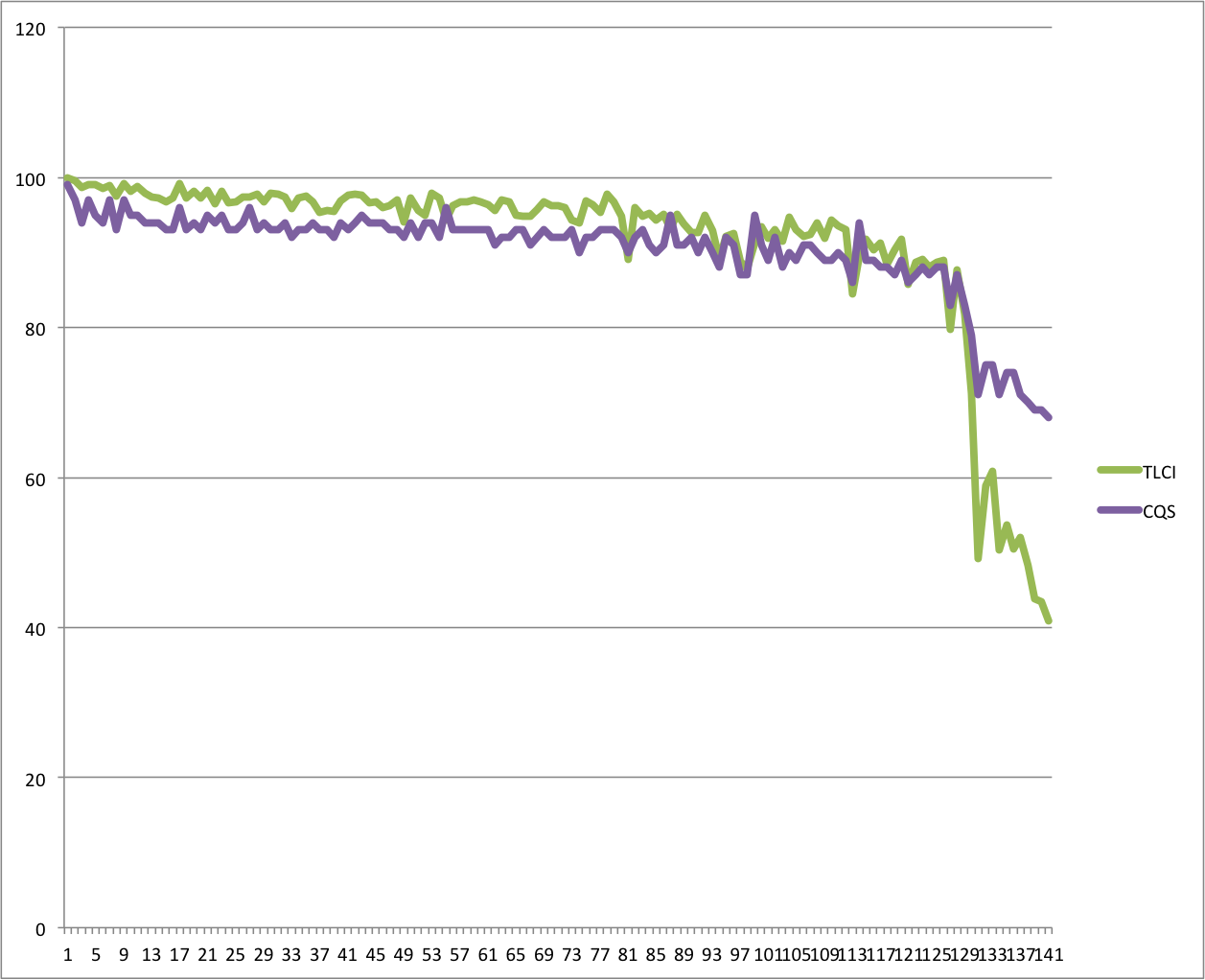

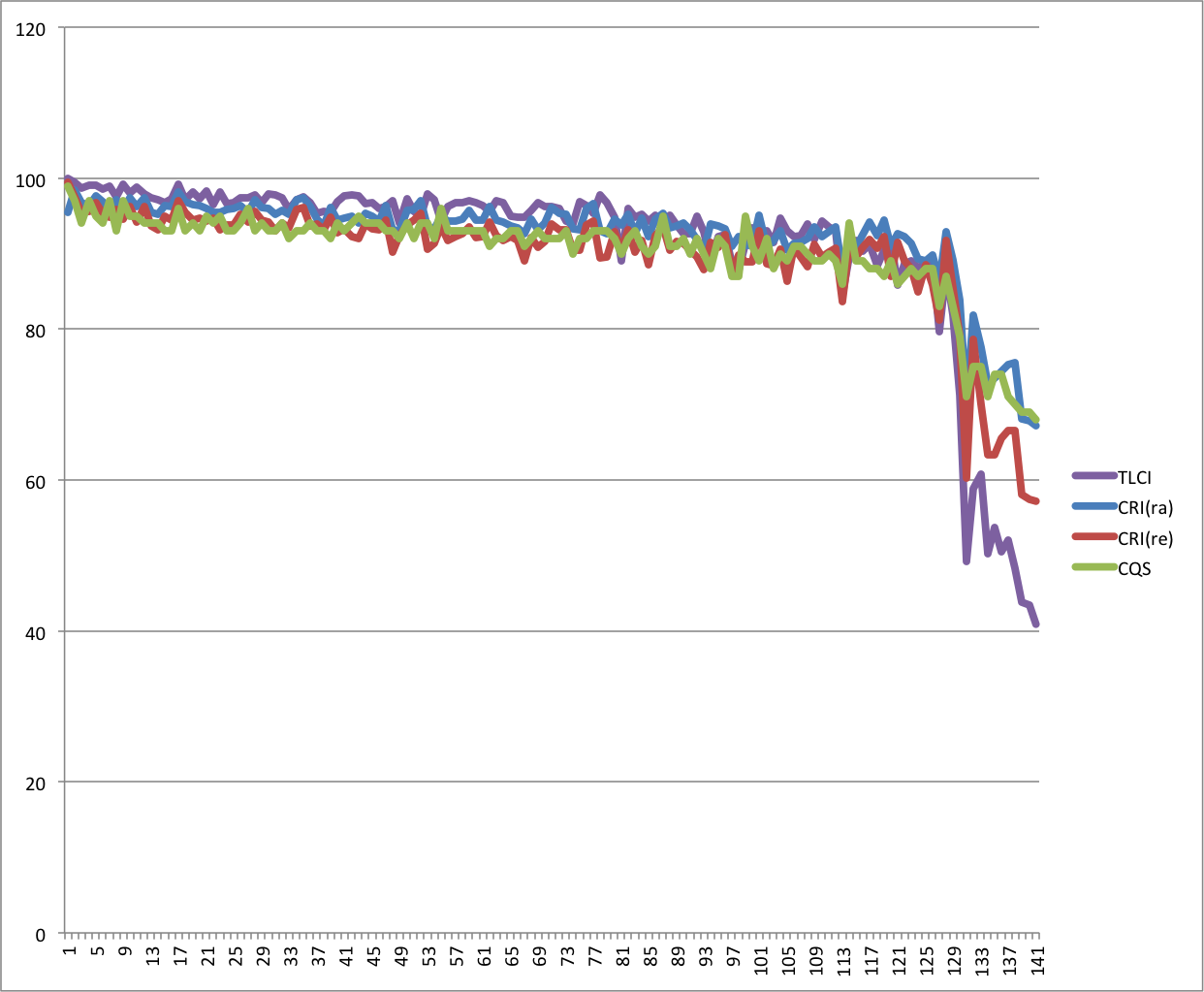

The first thing I did was to look at how well the main color measuring systems stacked up against each other.

The first thing I see is that CRI(ra), in blue, with its 8 low saturation samples, tends to give lights mostly higher scores. CQS, in green, with its 15 high saturation samples, tends to give lights mostly lower scores. This makes sense, as CQS targets are narrower and are more likely to reveal disparities in light sources.

CRI(re), with its fifteen samples of mixed saturations, tends to fall mostly in the middle of the other two, although there are occasions where it scores lights lower than any of the others.

CRI(ra) and CQS seem to track each other at the low end, which is interesting as CQS should be a much more granular measure. All three are consistent down to the point where one should never use a light anyway, which is below 80, although lights tend to look fairly marginal by that point already. (I have a hard time using a light that falls below 90-95 on the CRI scale.)

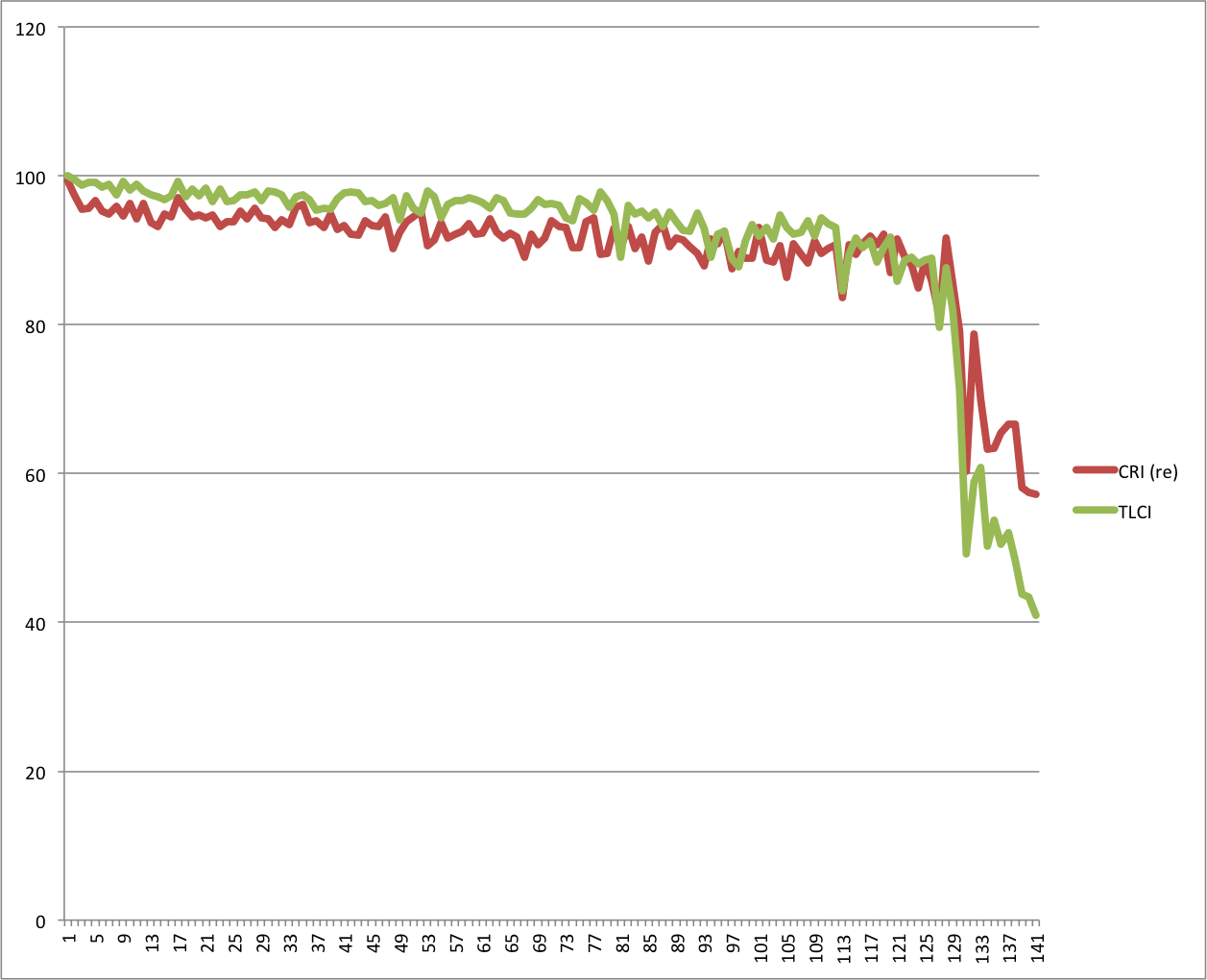

TLCI tends to score fixtures more highly than CRI(re) does when they do a good job, but punishes them with a much lower score when they fail beyond a certain point. The crossover point seems to be around 80: anything above that gets a higher score, and anything below that gets a much lower score. It’s almost as if an abrupt contrast curve was added to the data.

Otherwise, while TLCI and CRI(re) scores are similar at the high end, there are some discrepancies. TLCI may be doing what it says, and providing a more accurate measure of how a camera might see color.

The more coarse CRI(ra) scores lights within a few points of TLCI at the high end but is much less responsive to light quality. It diverges greatly at the low end. The patterns are similar at the bottom of the scale, which implies that they are seeing roughly the same thing, but it’s odd that TLCI’s math results in such a sharp drop.

Maybe this is how cameras see LED lights: as progressively worse until they hit a certain point, beyond which they all look absolutely terrible—with nothing in between.

Once again, CQS doesn’t dip as sharply as TLCI across the 80 boundary.

With few exceptions, TLCI rates better lights generally higher on the scale than the other measurements do, but rates poor lights much worse than the others on the lower end.

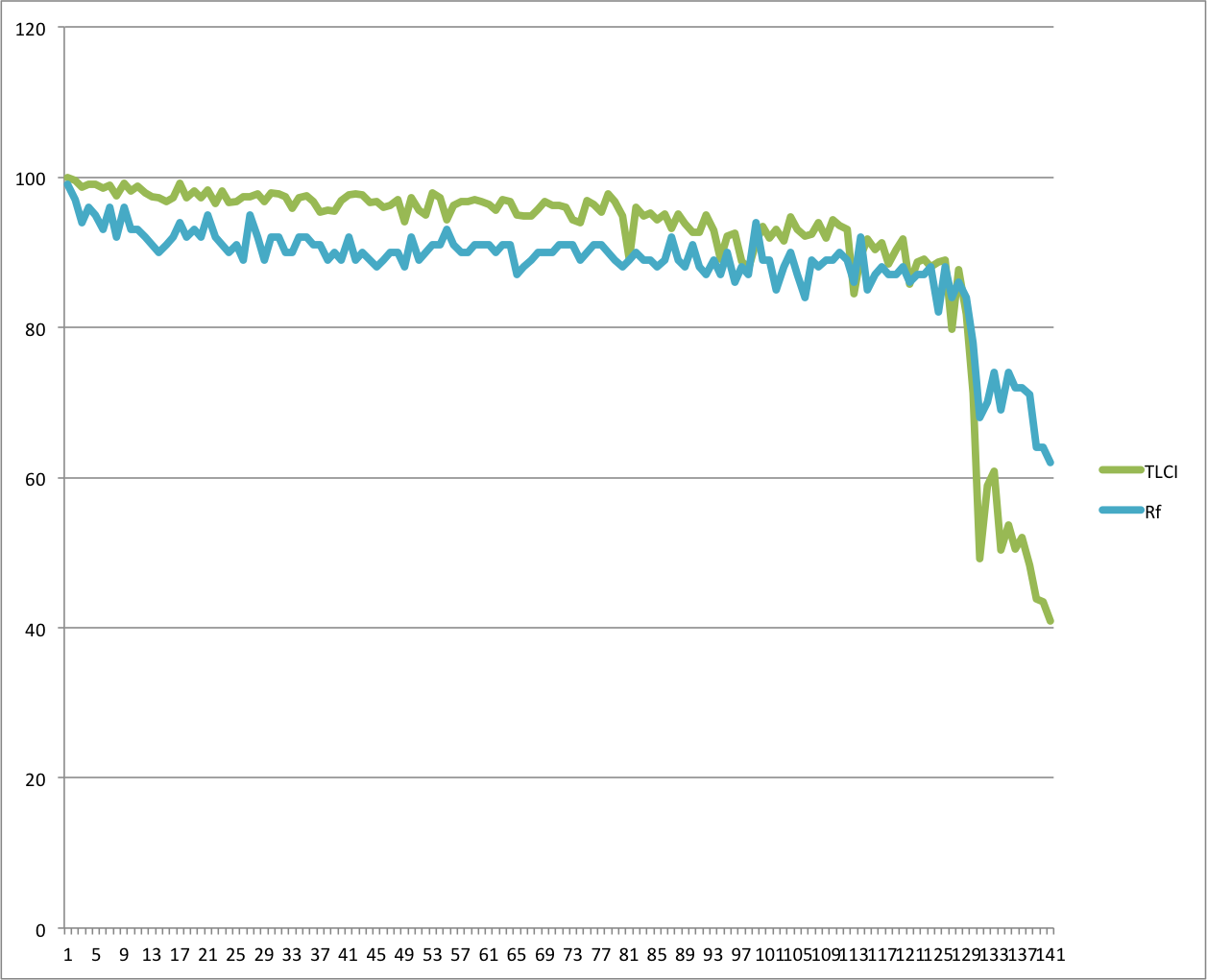

One clue came from TM-30-15 Rf.

Rf, which is a measure of overall color fidelity, shows the same rough pattern that TLCI does. TLCI, once again, shows good lights with higher scores than Rf and bad lights with vastly lower scores once they cross the 80 threshold. TLCI and CRI(re) seem particularly sensitive to reduced color fidelity beyond a certain point, while CRI(ra) and CQS see the same dip but don’t score the lights as severely.

LATE UPDATE

After publishing this article, it occurred to me there must be a better way to see whether TLCI tracks the other measures or deviates significantly. After a bit of research, I learned how to normalize the data and present it in a stacked chart:

The normalized chart shows TLCI mostly tracks the other systems, but has dramatically different opinions regarding a couple of the lights. The horizontal axis represents spreadsheet lines, and each line is a light: I see noticeable differences between lights 18 through 26 and 90, where TLCI reacts less strongly to differences; and lights 99 and 114, where it completely disagrees with the other measures. Otherwise, it tracks fairly well.
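For readers who want to try something similar in code, here is a minimal sketch of one possible normalization. It is an assumption, not the method behind the chart: each index is rescaled to a z-score (zero mean, unit standard deviation), and a light is flagged when TLCI’s normalized value sits far from the average of the other normalized measures. The scores are placeholders, and the threshold of one standard deviation is arbitrary.

```python
from statistics import mean, pstdev

def z_scores(values):
    """Rescale a column to zero mean and unit (population) standard deviation."""
    mu = mean(values)
    sigma = pstdev(values)
    return [(v - mu) / sigma for v in values]

def tlci_disagreements(table, threshold=1.0):
    """Indices of lights whose normalized TLCI differs from the average of the
    other normalized measures by more than `threshold` standard deviations."""
    normalized = {name: z_scores(col) for name, col in table.items()}
    others = [name for name in normalized if name != "TLCI"]
    flagged = []
    for i, tlci_z in enumerate(normalized["TLCI"]):
        consensus = mean(normalized[name][i] for name in others)
        if abs(tlci_z - consensus) > threshold:
            flagged.append(i)
    return flagged

# Placeholder scores for six imaginary lights (NOT values from the database).
table = {
    "CRI(ra)": [96, 94, 90, 85, 80, 92],
    "CRI(re)": [94, 92, 87, 82, 76, 90],
    "CQS":     [95, 92, 88, 84, 78, 91],
    "TLCI":    [98, 96, 91, 84, 75, 70],   # last light: TLCI disagrees
}

flagged_lights = tlci_disagreements(table)
```

With this placeholder table only the last light (index 5) is flagged: the other three measures rate it above their own averages while TLCI rates it well below its own. Normalizing first matters because TLCI spreads its scores over a wider range than the others, so comparing raw numbers would exaggerate every difference at the low end.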

SUMMARY

It’s still unclear to me whether TLCI is visually a better indicator of how cameras see color than any of these other measurements. It doesn’t always agree with the other curves, which is good; but the other curves don’t always agree with each other either, so it’s hard to know whether any is truly more accurate by simply looking at data. What does seem to be true is that TLCI tracks the other measures in a similar fashion, and occasionally makes a bold statement about a light that the other systems contradict. There are certainly variations in degree, where another system might score a light significantly better or significantly worse, but TLCI still rises and falls at most of the same points that the others do.

It’s certainly true that TLCI may have a bit of an edge under certain circumstances. I think Indie Cine Academy did exactly the right thing by presenting all the numbers, so we can compare, contrast, and make educated guesses rather than relying on any one number.

Given that TLCI was designed around how cameras respond to light, I find myself tempted to rely on it over the others. There’s certainly no reason not to rely on it, as it yields roughly the same results as the traditional measures, and it may yield better results in certain conditions (as seen in the normalized graph above).

I hope that you’ll never have the occasion to use a light that scores lower than 80 on any scale. For the others, it will be a matter of calibrating your eye by finding a light that you like on the index, finding another that you don’t, designating an arbitrary number on the scale of your choice that falls between them, and using that as your “don’t go below” reference.
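That calibration step can be written down as a small sketch. The midpoint used here is just one arbitrary choice, since any value between the two lights would do, and the fixture names and scores are hypothetical.

```python
def dont_go_below(liked_score, disliked_score):
    """Pick a cutoff between a light you like and one you don't.

    The midpoint is just one arbitrary choice; any value in between works.
    """
    if liked_score <= disliked_score:
        raise ValueError("the light you like should score higher on this index")
    return (liked_score + disliked_score) / 2

def shortlist(candidates, cutoff):
    """Keep only the fixtures whose score meets the cutoff."""
    return [name for name, score in candidates.items() if score >= cutoff]

# Hypothetical fixtures and scores, all on the same index.
cutoff = dont_go_below(liked_score=96, disliked_score=88)
candidates = {"Fixture A": 97, "Fixture B": 93, "Fixture C": 90}
keepers = shortlist(candidates, cutoff)
```

The cutoff only means something on the index it was calibrated on. A 92 on CRI(ra) and a 92 on TLCI are not the same light.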

This is, however, no substitute for actually looking at the light. A single number doesn’t tell us much about all the variables involved, such as saturation, fidelity, spectral gaps and spikes, etc. Keep that in mind while reading marketing materials: if a light doesn’t rate a full 100, there’s something slightly off about it. What’s missing may be something you can live with… or it might not.

Many thanks to Indie Cine Academy for their amazing public service in the creation of their LED lighting database.

Disclosure: I have worked as a paid consultant to DSC Labs, maker of color charts for video.
