It’s been a few years, now, since the film and television industry collectively came to the quiet realisation that stereo 3D was not going to “kill,” in the pugilistic metaphor of the day, conventional cinematic production. That’s possibly because the stereo 3D of the 2010s faithfully continued to exhibit several of the biggest problems stereo 3D had in the mid twentieth century, and as such it didn’t work all that well as either a vehicle for artistic expression or a means of making money.

Proudly standing as a literal cornerstone of Dell’s exhibit at the NAB Show this year, however, is an example of something that’s a lot more 3D than the 3D we’ve seen previously: an actual way of making properly three-dimensional recordings of things. Several approaches to volumetric capture are on display, perhaps most prominently IO Industries’ Volucam, but our subject today is not the capture; it’s the post production. Arcturus is showing Holosuite, software which facilitates editing and manipulation of volumetric capture data.

Volumetric capture?

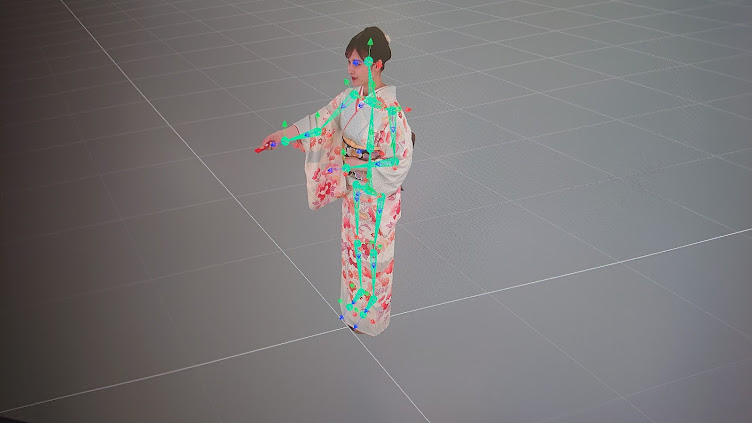

Volumetric capture is sometimes also called volumetric video, which seems like a bit of a misnomer. What Holosuite works with is not so much a series of pictures of a scene as in conventional cinematography; it’s a series of textured 3D meshes representing the subject (often a person) over time. It is, in essence, an animated 3D scan, and as with more conventional motion capture, the problem is not so much capturing the data as handling it, and particularly editing it.
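To make that distinction concrete, here is a minimal sketch of how a volumetric clip might be held in memory: a list of frames, each carrying its own vertices, triangles and texture coordinates. The class and field names are hypothetical and don’t reflect Holosuite’s or any capture format’s actual layout.

```python
from dataclasses import dataclass, field

Vec3 = tuple[float, float, float]

@dataclass
class MeshFrame:
    """One frame of a volumetric clip: a textured triangle mesh (hypothetical layout)."""
    time_s: float                           # timestamp of the frame, in seconds
    vertices: list[Vec3]                    # vertex positions
    triangles: list[tuple[int, int, int]]   # each triangle indexes into `vertices`
    uvs: list[tuple[float, float]]          # per-vertex texture coordinates
    texture_path: str                       # this frame's texture image (hypothetical)

@dataclass
class VolumetricClip:
    fps: float
    frames: list[MeshFrame] = field(default_factory=list)

    def topology_is_consistent(self) -> bool:
        """True only if every frame has the same vertex count and triangle list as
        the first: a necessary (not sufficient) condition for vertex i to mean the
        same point on the subject in every frame."""
        if not self.frames:
            return True
        first = self.frames[0]
        return all(
            len(f.vertices) == len(first.vertices) and f.triangles == first.triangles
            for f in self.frames[1:]
        )

# Two toy frames: a single triangle, then a quad built from two triangles.
tri = MeshFrame(0.0, [(0, 0, 0), (1, 0, 0), (0, 1, 0)], [(0, 1, 2)],
                [(0, 0), (1, 0), (0, 1)], "frame0.png")
quad = MeshFrame(1 / 30, [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0)],
                 [(0, 1, 2), (0, 2, 3)], [(0, 0), (1, 0), (1, 1), (0, 1)], "frame1.png")
print(VolumetricClip(30.0, [tri, tri]).topology_is_consistent())   # True
print(VolumetricClip(30.0, [tri, quad]).topology_is_consistent())  # False
```

A hand-built character mesh would pass this check on every frame; raw volumetric captures often won’t, and that is the root of the editability problem.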

Volumetric capture is already useful in visual effects work, as the 3D model can convincingly represent a live person and yet be viewed from different angles, relit (depending on how the capture was lit), and placed into any virtual environment almost effortlessly. It doesn’t risk the clunky animation of hand-animated CGI, nor the potential modelling issues. It’s also tremendously helpful for video game development, where human characters might be represented by volumetric capture of real actors in real costumes.

There’s a very domain-specific problem in video games where the capability of computers to render detailed scenes has begun to significantly outstrip the ability of developers to do the design work on sufficiently detailed environments for the machines to render. As such, the option to scan things in real time – especially people, and especially animated people – is hugely significant. What’s more, given the success of Unreal Engine in virtual production for film and TV, the relevance of volumetric capture to both fields is clear.

Hit it with a hammer

The problem is editability. When a modeller builds a human form manually in a 3D program, a single mesh is built, representing the shape of the subject, and pushed around with various tools to simulate human movement. The triangular polygons that once formed part of, say, a forearm, will always form part of a forearm.
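One widely used way to push that single mesh around is linear blend skinning: every vertex is moved by a weighted blend of its bones’ transforms, and the triangle list is never touched. The minimal 2D sketch below is a hypothetical illustration (the two-bone arm, the weights and all function names are made up), not any particular package’s API.

```python
import math
from typing import Callable

Point = tuple[float, float]
Bone = Callable[[Point], Point]

def bone_transform(angle_rad: float, pivot: Point) -> Bone:
    """A 2D 'bone': rotates a point by angle_rad about the bone's pivot (joint)."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    px, py = pivot
    def apply(p: Point) -> Point:
        x, y = p[0] - px, p[1] - py
        return (px + c * x - s * y, py + s * x + c * y)
    return apply

def skin(vertices: list[Point], weights: list[list[float]],
         bones: list[Bone]) -> list[Point]:
    """Linear blend skinning: each vertex becomes the weighted sum of where each
    bone would put it. Each vertex's weights should sum to 1."""
    out: list[Point] = []
    for v, w in zip(vertices, weights):
        moved = [b(v) for b in bones]
        out.append((sum(wi * m[0] for wi, m in zip(w, moved)),
                    sum(wi * m[1] for wi, m in zip(w, moved))))
    return out

# Hypothetical arm: shoulder at (0, 0), elbow at (1, 0), wrist at (2, 0).
upper_arm = bone_transform(0.0, (0.0, 0.0))          # stays put
forearm = bone_transform(math.pi / 2, (1.0, 0.0))    # bends 90 degrees at the elbow
verts = [(0.5, 0.0), (1.0, 0.0), (2.0, 0.0)]
weights = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]
posed = skin(verts, weights, [upper_arm, forearm])   # wrist ends up near (1, 1)
```

Only vertex positions change; whatever triangle list the mesh carries is reused as-is, which is why a polygon on the forearm stays on the forearm in every pose.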

Volumetric capture data isn’t nearly so tidy, and consecutive animation frames may have meshes that have nothing to do with one another beyond describing different positions of the same shape. Make changes to a single mesh in an animated sequence, and all we’ve done is change one frame; the result is a mess. Similar concerns arose when motion capture was new and equally hard to edit, although the scale of the problem is entirely different. Motion capture might use a dozen reflective balls on a suit; a 3D mesh might have thousands of triangles.
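To see why a one-frame edit doesn’t simply carry over, consider one naive way of propagating it: match each moved vertex to its nearest vertex in the next frame and apply the same offset there. This is a swapped-in illustration, not how Holosuite works, and it breaks down quickly as poses diverge; the function names are hypothetical.

```python
import math

Vec3 = tuple[float, float, float]

def nearest_index(p: Vec3, candidates: list[Vec3]) -> int:
    """Index of the candidate vertex closest to p (brute force, O(n))."""
    return min(range(len(candidates)), key=lambda i: math.dist(p, candidates[i]))

def transfer_edit(src_before: list[Vec3], src_after: list[Vec3],
                  target: list[Vec3]) -> list[Vec3]:
    """Copy per-vertex displacements made to one frame (src_before -> src_after)
    onto another frame with unrelated vertex ordering, by nearest-neighbour
    matching. If two moved vertices match the same target vertex, their
    offsets add up."""
    result = list(target)
    for before, after in zip(src_before, src_after):
        offset = tuple(a - b for a, b in zip(after, before))
        if any(offset):  # only propagate vertices that were actually moved
            j = nearest_index(before, target)
            result[j] = tuple(t + o for t, o in zip(result[j], offset))
    return result

# Frame A: vertex 1 is lifted by 0.5. Frame B stores similar points in a different order.
frame_a = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
frame_a_edited = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.5)]
frame_b = [(1.02, 0.0, 0.0), (0.0, 0.0, 0.0), (0.5, 0.5, 0.0)]
print(transfer_edit(frame_a, frame_a_edited, frame_b))
```

Here the match is easy because frame B barely differs from frame A; with thousands of triangles and a subject that has moved, nearest-neighbour guesses start landing on the wrong part of the body.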

Arcturus’ tools can, among many other things, perform processing to minimise the number of triangles used to represent a given shape, impose a bone system on a volumetrically captured human and generally work with it in a much more conventional manner. There are limits to what it can do; the bigger the change between the scanned data and the target, the trickier things get, but it’s a lot, lot, lot easier than manipulating the same data using more conventional tools.
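For a feel of what triangle reduction involves, here is the simplest textbook approach, vertex clustering. It is not Arcturus’ method, just a swapped-in sketch: vertices are bucketed into a grid of cubes, each occupied cube is replaced by the average of its vertices, and triangles that collapse are dropped. The function name and cell size are hypothetical.

```python
import math

Vec3 = tuple[float, float, float]
Tri = tuple[int, int, int]

def cluster_decimate(vertices: list[Vec3], triangles: list[Tri],
                     cell_size: float) -> tuple[list[Vec3], list[Tri]]:
    """Vertex-clustering decimation: bucket vertices into a uniform grid of
    cubes, replace each occupied cube's vertices with their average, and drop
    triangles that collapse or duplicate an earlier one."""
    cell_to_new: dict[tuple[int, ...], int] = {}
    sums: list[list[float]] = []   # running coordinate sums per new vertex
    counts: list[int] = []         # how many old vertices merged into each
    remap: list[int] = []          # old vertex index -> new vertex index
    for v in vertices:
        cell = tuple(math.floor(c / cell_size) for c in v)
        if cell not in cell_to_new:
            cell_to_new[cell] = len(sums)
            sums.append([0.0, 0.0, 0.0])
            counts.append(0)
        k = cell_to_new[cell]
        for axis in range(3):
            sums[k][axis] += v[axis]
        counts[k] += 1
        remap.append(k)
    new_vertices = [tuple(s / n for s in acc) for acc, n in zip(sums, counts)]

    new_triangles: list[Tri] = []
    seen: set[frozenset[int]] = set()
    for a, b, c in triangles:
        tri = (remap[a], remap[b], remap[c])
        if len(set(tri)) < 3:
            continue  # two or more corners fell in the same cell: degenerate
        key = frozenset(tri)
        if key in seen:
            continue  # an identical triangle was already kept
        seen.add(key)
        new_triangles.append(tri)
    return new_vertices, new_triangles

# A flat 3x3 grid of vertices (8 triangles) reduced with a coarse grid.
verts = [(x * 0.5, y * 0.5, 0.0) for y in range(3) for x in range(3)]
tris = []
for y in range(2):
    for x in range(2):
        v00, v10 = y * 3 + x, y * 3 + x + 1
        v01, v11 = (y + 1) * 3 + x, (y + 1) * 3 + x + 1
        tris += [(v00, v10, v11), (v00, v11, v01)]
small_verts, small_tris = cluster_decimate(verts, tris, cell_size=0.6)
print(len(small_verts), len(small_tris))  # 4 2
```

Clustering is crude and can mangle fine detail; dedicated tools generally use more shape-aware methods, such as edge collapse guided by an error metric.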

Through the Looking Glass

Arcturus exhibited using displays from Looking Glass Factory which are certainly among the best currently available. Looking Glass is not the only stereoscopic display at the show, as Sony showed its Spatial Reality Display, although the two aren’t that comparable – Sony’s display relies on face tracking to establish a single observer’s point of view and thus can cover a wider range of viewing positions, while Looking Glass is a true lightfield display which works for any number of viewers at once and doesn’t rely on tracking the observer’s position.

Looking Glass probably deserves just as much attention as Arcturus, especially given the relatively high quality of the image. The display has to be able to emit a huge number of slightly different views of the same object simultaneously, and even though the type pictured reportedly uses an 8K panel there’s clearly a limit to how many pixels it has and how accurately it can describe all those images at any one time. The result is, yes, some visible quantisation (if that’s the word) of certain image features, but it’s class-leading stuff.
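The pixel budget is easy to rough out. A minimal sketch, assuming a 7680×4320 (8K UHD) panel whose pixels are shared evenly among a hypothetical 45 views with no other overhead; the real view count and optics of the display pictured aren’t stated here, so treat the numbers as illustrative.

```python
def pixels_per_view(panel_w: int, panel_h: int, n_views: int) -> float:
    """Average pixels per view if every panel pixel serves exactly one view."""
    return panel_w * panel_h / n_views

PANEL_W, PANEL_H = 7680, 4320   # 8K UHD panel (assumed)
N_VIEWS = 45                    # hypothetical view count

per_view = pixels_per_view(PANEL_W, PANEL_H, N_VIEWS)
full_hd = 1920 * 1080
print(f"{per_view:,.0f} pixels per view, {per_view / full_hd:.0%} of a 1080p frame")
# 737,280 pixels per view, 36% of a 1080p frame
```

On those assumptions, each view gets a little over a third of a 1080p frame’s worth of pixels, which goes some way to explaining the visible quantisation.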

Looking Glass’s displays are finding use in several areas, notably molecular biology, where complex atomic structures can be visualised better than ever before. Whether volumetric displays showing volumetrically-captured content will ever become a form of entertainment in its own right remains to be seen – that sounds like a video game more than a movie – but the application of this sort of tech to a wide range of the media arts is hard to overlook.
