Are you inadvertently clipping your exports from Resolve? I wrote an article about this a while ago, Solutions to Resolve 4: Full data and video levels in DaVinci Resolve – don’t clip your proxies and transcodes. It turns out that this is not the end of the story.

Jean-Pierre Demuynck, an engineer who helped develop the ITU-R BT specifications, drew my attention to a further problem I hadn’t been aware of. That’s what I’ll be sharing with you here. (If you’re not familiar with using full and video levels in Resolve, please first read the Solutions to Resolve 4 article mentioned above.)

Here’s the problem:

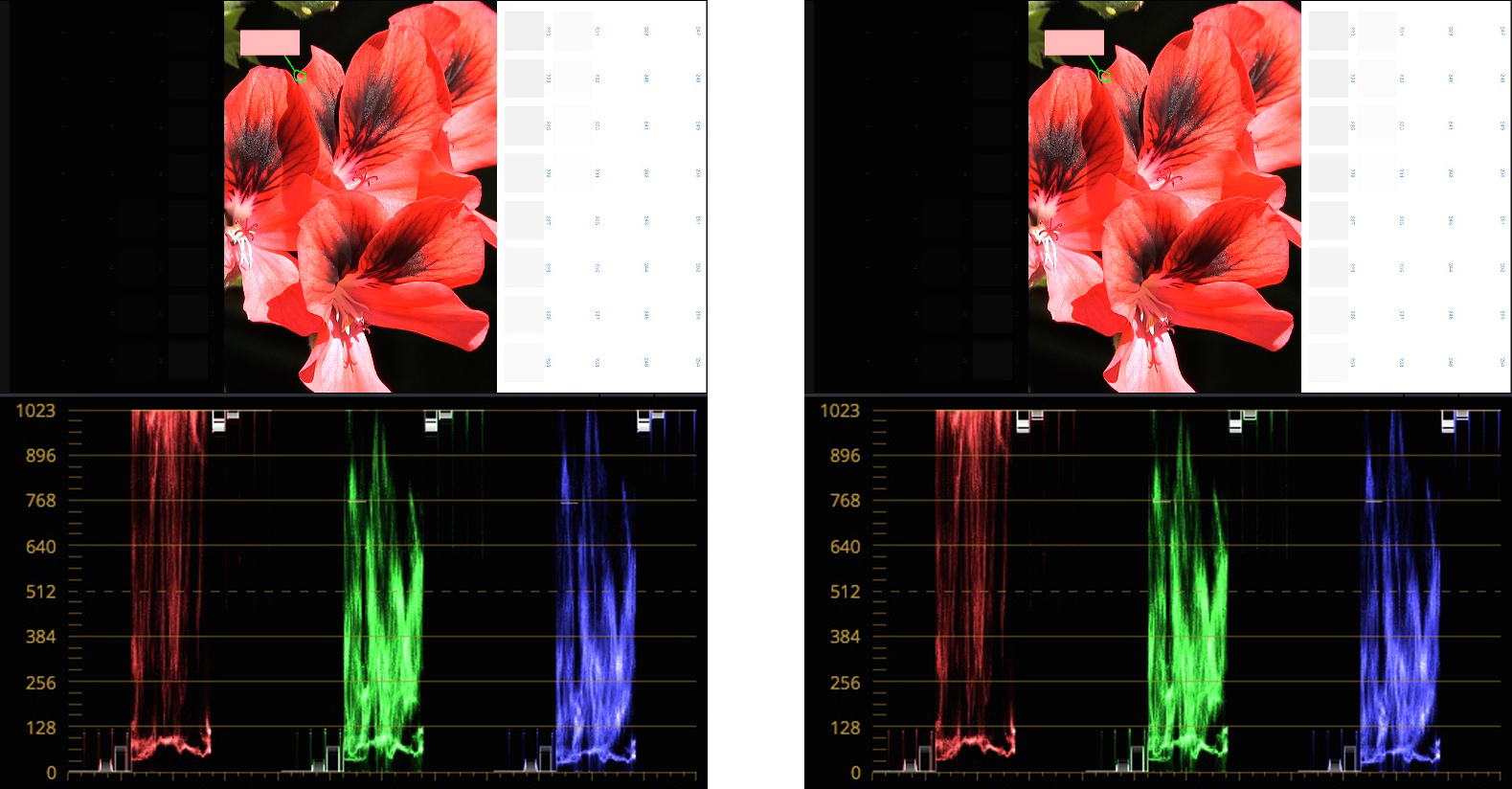

The flower on the left is the original image, imported into Resolve. On the right, the image has been exported from Resolve, then re-imported to see what it looks like on the scopes.

Identical? Well, not quite.

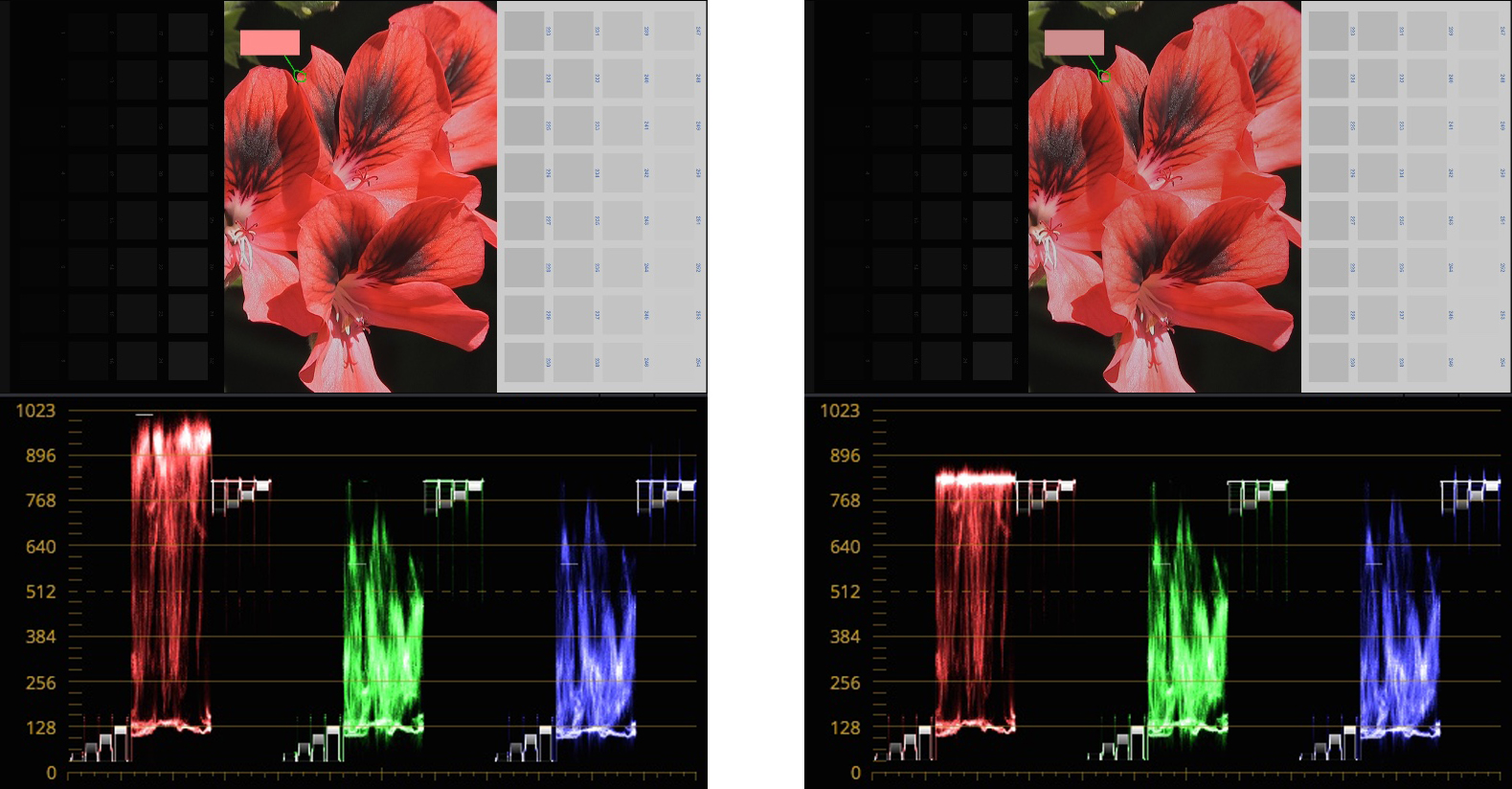

Here they are again with the gain reduced and the blacks lifted. The same correction has been applied to both images.

In the export, the red channel has been severely clipped.

You might think at first (if you’ve read Solutions to Resolve 4…) that the solution would be to activate the option “Retain sub-black and super-white data” in the advanced settings of the Delivery page. But no, this was activated.

You can see it was activated by looking at the black and white patches on each side of the flower. The sub-blacks (black patches with values from 0 to 15) and super-whites (white patches with values 236 to 255) weren’t clipped on export and are clearly present in the graded image.

It turns out that the “Retain sub-black and super-white data” option works for luminance, but doesn’t stop the colour channels from clipping.

Is there a solution for this? Unfortunately no, not really. You could choose one of the half-float codecs for delivery (DPX or EXR). They won’t clip, but they aren’t practical for standard exports. All the usual post-production codecs like ProRes and DNx will be clipped.

Before I get into the reasons why this is happening, it’s worth taking a minute to look at the implications. How serious a problem is it for editing and post-production?

If you’re color grading, it’s not a problem at all. It’s the colorist’s job to manage clipping, so anything clipped on delivery is the colorist’s fault, not Resolve’s. It’s not a problem either if your rushes are RGB. This only affects YUV media.

However, since almost all the media we work with from day to day is YUV, and we don’t always grade it before exporting, the clipping remains a serious problem. If you use Resolve to transcode your rushes, it may not retain all the original signal. The same is true if you export an ungraded Master of your edit – something that is required practice for many broadcasters and production houses.

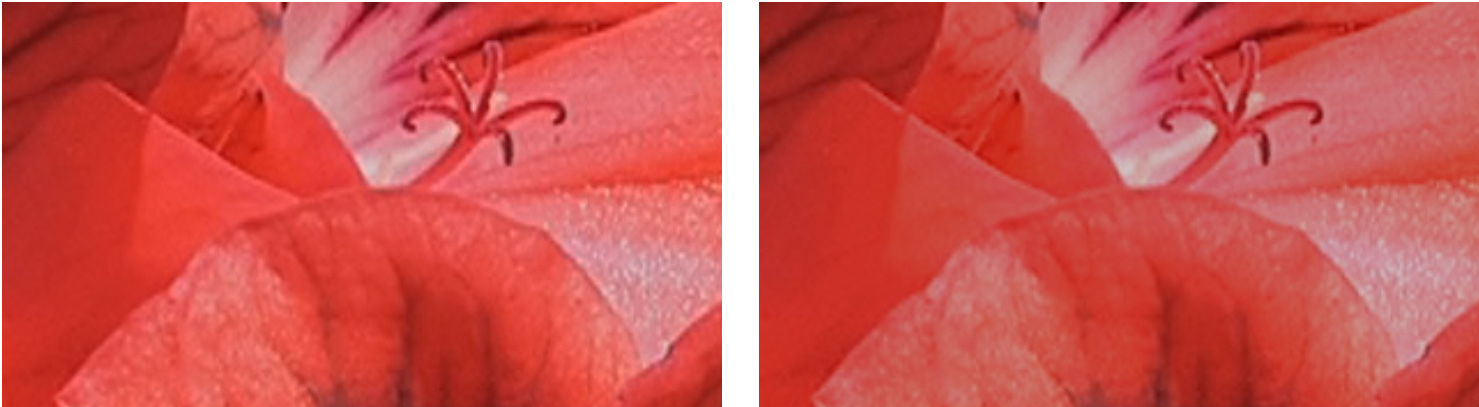

You won’t see the problem when you export: the copy will appear identical, as demonstrated at the beginning of this article. It’s when you decide to use the export later for grading that the clipping will show up. Here’s a close-up of the graded images shown above.

You don’t have to be an expert colorist to see that the export has lost the original clip’s deep saturation and fine color details. Grading won’t get them back.

So what exactly is happening here? When I first discovered the problem, it was a real enigma. How could the red channel possibly be so much higher than maximum luminance in the first place? There are no values above 255 in an 8-bit file…

It turns out that this is true and not true, at least where YUV is concerned. YUV can, in fact, pack a higher range of colors into an 8-bit file than RGB. It can do this because of the way the conversions between RGB and YUV are calculated.

Here are the equations. (Note: these equations convert from RGB to Full YUV levels, not to Video YUV levels. That would require another step, which I’ve left out because it makes no difference to the issue we’re discussing.)

To convert from RGB to YUV:

Y = 0.2126R + 0.7152G + 0.0722B

U = 0.551213(B-Y) + 128

V = 0.649499(R-Y) + 128

To convert from YUV to RGB:

R = Y + 1.539651(V-128)

G = Y - 0.4576759(V-128) - 0.18314607(U-128)

B = Y + 1.8142115(U-128)
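As a minimal sketch, the two sets of equations above can be transcribed directly into Python. The function names `rgb_to_yuv` and `yuv_to_rgb` are my own labels for illustration, and the coefficients are simply copied from the equations, so they carry the same rounding. The round trip at the end checks that an ordinary in-range colour survives the conversion both ways.

```python
# Coefficients copied from the equations above (full-range YUV, 8-bit scale).

def rgb_to_yuv(r, g, b):
    """Convert RGB to full-range YUV. Returns unrounded floats."""
    y = 0.2126 * r + 0.7152 * g + 0.0722 * b
    u = 0.551213 * (b - y) + 128
    v = 0.649499 * (r - y) + 128
    return y, u, v


def yuv_to_rgb(y, u, v):
    """Convert full-range YUV back to RGB. Returns unrounded floats."""
    r = y + 1.539651 * (v - 128)
    g = y - 0.4576759 * (v - 128) - 0.18314607 * (u - 128)
    b = y + 1.8142115 * (u - 128)
    return r, g, b


# Round trip on an ordinary colour that fits comfortably in 8-bit RGB.
original = (200, 120, 40)
restored = yuv_to_rgb(*rgb_to_yuv(*original))
print([round(c) for c in restored])  # [200, 120, 40]
```

Nothing is clamped in either function, which is the point: the maths itself has no idea where 0 and 255 are.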

You don’t need to understand these equations, but you can see they’re pretty simple, no more than basic school maths. The important point here is that they have very surprising consequences.

Take, for example, a YUV signal where Y=234, U=148 and V=195. That’s a perfectly legitimate 8-bit signal because the values are between 0 and 255. Convert that to RGB and you get R=337, G=200 and B=270, which you can’t encode in 8 bits because two of the values go beyond 255.
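If you want to check the arithmetic yourself, here is a small sketch that runs this pixel through the YUV to RGB equations and lists the channels that overshoot 255. The helper name is hypothetical and the coefficients are the rounded ones quoted above; with them, the result comes out at roughly R=337, G=200 and B=270.

```python
def yuv_to_rgb(y, u, v):
    """Full-range YUV to RGB, using the coefficients quoted in the article."""
    r = y + 1.539651 * (v - 128)
    g = y - 0.4576759 * (v - 128) - 0.18314607 * (u - 128)
    b = y + 1.8142115 * (u - 128)
    return r, g, b


rgb = yuv_to_rgb(234, 148, 195)
rounded = [round(c) for c in rgb]

# Names of the channels that no longer fit in an 8-bit RGB value.
over = [name for name, c in zip("RGB", rgb) if c > 255]

print(rounded)  # [337, 200, 270]
print(over)     # ['R', 'B']
```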

The equations allow YUV to record a higher dynamic color range than RGB in an 8-bit file. (The same is true for 10 bits, 12 bits, etc. I refer to 8 bits in this article just for simplicity; the principles are the same for the others.)

Camera manufacturers take advantage of this to squeeze the most they can out of their sensors[1]. They oversample the RGB signals coming from the sensor to cover the extra range above 255 before this is converted to YUV. For example, if you use 9 bits instead of 8 bits to sample the RGB, the extra bit would cover anything from 256 to 511.

So in the example above, the signals from the sensor would be R=337, G=200 and B=270, subsequently converted to a standard 8-bit YUV file with the values Y=234, U=148 and V=195.
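The camera side of this can be sketched the same way. This is only an illustration of the arithmetic, not a description of what any particular camera does internally: it takes 9-bit RGB values of R=337, G=200 and B=270 (what the inverse equations return for this pixel), applies the RGB to YUV equations, and rounds to whole code values.

```python
def rgb_to_yuv(r, g, b):
    """RGB to full-range YUV, using the coefficients quoted in the article."""
    y = 0.2126 * r + 0.7152 * g + 0.0722 * b
    u = 0.551213 * (b - y) + 128
    v = 0.649499 * (r - y) + 128
    return y, u, v


# Oversampled sensor values: two channels need a 9th bit (256 to 511).
sensor_rgb = (337, 200, 270)

# Round to whole code values, as an 8-bit file would store them.
yuv = tuple(round(c) for c in rgb_to_yuv(*sensor_rgb))
fits_8bit = all(0 <= c <= 255 for c in yuv)

print(yuv)        # (234, 148, 195)
print(fits_8bit)  # True
```

RGB values that need 9 bits land on YUV values that fit in 8.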

Moving on down the production workflow, what happens when this file is imported into DaVinci Resolve? Resolve works in RGB (YRGB color science) so you’d expect the signal to clip when it’s converted from YUV.

It doesn’t, because Resolve uses 32-bit float for its working space. Float systems differ from the usual 8, 10, 16 or 32-bit integer sampling in that they have no practical ceiling or floor. Once you get to the top or bottom of the standard signal range, you can keep going. That’s why Resolve doesn’t clip the signal when you’re grading, no matter how far you push it. That’s also why EXR and DPX float codecs don’t clip on export.

So your YUV rushes are fine while you’re working in Resolve, you always have the full, original signal. You can actually see this with the color picker.

(This doesn’t always work, by the way. In some versions of Resolve 16, the picker is clamped at 255 maximum, whatever the real values are. If you have the Studio version and an external monitor, you should be able to get this to work correctly in Project Settings > Master Settings > Video Monitoring by activating the “Retain sub-black and super-white data” option. In the Color Management settings, Broadcast Safe should be left at its default, i.e. IRE -20 to 120, with “Make Broadcast safe” turned off.)

The problem with clipping in Resolve only happens on export. It looks very much as if the software converts its 32-bit float YRGB working space to standard RGB (which would clip the color channels) before it converts to YUV for codecs like ProRes and DNx. Instead of going straight from 32-bit float YRGB to 8, 10 or 12-bit YUV, there’s an sRGB bottleneck in the middle.
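To make the suspected bottleneck concrete, here is a sketch that compares the two export paths for the example pixel. It is a model of the hypothesis described above, not Resolve’s actual code: the `clamp8` helper and the variable names are my own, and the only assumption is that the RGB channels get limited to 0-255 before the conversion back to YUV.

```python
def rgb_to_yuv(r, g, b):
    """RGB to full-range YUV, using the coefficients quoted in the article."""
    y = 0.2126 * r + 0.7152 * g + 0.0722 * b
    u = 0.551213 * (b - y) + 128
    v = 0.649499 * (r - y) + 128
    return y, u, v


def yuv_to_rgb(y, u, v):
    """Full-range YUV to RGB, using the coefficients quoted in the article."""
    r = y + 1.539651 * (v - 128)
    g = y - 0.4576759 * (v - 128) - 0.18314607 * (u - 128)
    b = y + 1.8142115 * (u - 128)
    return r, g, b


def clamp8(c):
    """Hypothetical bottleneck: limit a channel to the 8-bit RGB range."""
    return min(255.0, max(0.0, c))


source = (234, 148, 195)

# Import: float RGB working space, nothing is clipped.
working = yuv_to_rgb(*source)

# Path 1: straight from float RGB back to YUV.
direct = tuple(round(c) for c in rgb_to_yuv(*working))

# Path 2: clamp the RGB channels first, then convert to YUV.
clipped = tuple(round(c) for c in rgb_to_yuv(*(clamp8(c) for c in working)))

print(direct)   # (234, 148, 195)
print(clipped)  # (215, 150, 154)
```

The direct path returns the source values untouched. The clamped path changes all three, luminance included, which is consistent with a loss that grading can’t undo.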

Why this should be so, I have no idea. There are other NLEs that don’t clip the color channels, so this is not inevitable and there should be ways to solve it. Now that Resolve has become a major player in editing as well as color grading, it’s an important issue to fix. Maybe in version 17?

One final note for colorists: This problem also affects the video feed to an external monitor. The colors will clip in the same way as a YUV export, whatever the range you’re working with (16-235 or 16-255). You won’t be able to see the bright, saturated colors you would if you connected the camera directly to the monitor, and there will be a hue shift.
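The hue shift can be illustrated with the same example pixel. This sketch uses the HSV hue from Python’s standard `colorsys` module as a rough proxy for perceived hue, which is my own simplification, and it starts from the float RGB values the pixel decodes to (about 337.16, 199.67, 270.28). It says nothing about what a given monitor or video card actually does with the signal.

```python
import colorsys

# The example pixel (Y=234, U=148, V=195) decoded to float RGB, 8-bit scale.
unclipped = (337.16, 199.67, 270.28)

# The same pixel with each channel limited to the 8-bit RGB range.
clipped = tuple(min(255.0, max(0.0, c)) for c in unclipped)


def hue_degrees(rgb):
    """HSV hue in degrees for an RGB triple on a 0-255 scale."""
    h, _s, _v = colorsys.rgb_to_hsv(*(c / 255.0 for c in rgb))
    return h * 360.0


hue_before = hue_degrees(unclipped)
hue_after = hue_degrees(clipped)

print(round(hue_before))  # 329
print(round(hue_after))   # 300
```

Clipping pulls red and blue down to the same ceiling, so a pinkish red drifts towards magenta as well as losing saturation.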

Note: Most of the facts and figures mentioned here were provided by Jean-Pierre Demuynck, including the sample file used for the illustrations. This is as much his article as it is mine!

If you’d like to experiment yourself, you can download the video file used in this article here. The image was assembled with no grading.

[1] Sony and Panasonic cameras use an extended xvYCC color space where the channels can go over 350.

Other articles in the Solutions to Resolve series:

- Solutions to Resolve Part 1: Precise sharpening and noise reduction
- Solutions to Resolve Part 2: Accurate shot match in ColorTrace
- Solutions to Resolve Part 3: Unexpected clipping when grading
- Solutions to Resolve Part 4: Don’t clip your proxies and transcodes!
- Solutions to Resolve 5: Taming Color Management – Part 1
- Solutions to Resolve 5: Taming Color Management – Part 2
