Last weekend I attended the two-day Createasphere 3D Production Workshop Utilizing Panasonic AG-3DA1 Cameras in Burbank, and got a good overview of the issues in shooting stereo 3D (S3D) content, as well as some immensely instructive hands-on experience with the AG-3DA1 S3D camcorder.

I went as a 3D newbie; I had no previous experience shooting S3D, and wanted to learn the basics. I was concerned that the focus on the 3DA1 camcorder would detract from the generality of the course, but my fears were unfounded: general S3D theory was discussed in detail, and the comparative simplicity of the 3DA1 (fixed interaxial [IA, a.k.a. interocular, or IO]; single-camera operational convenience) allowed plenty of opportunity to get into 3D compositional trouble without getting bogged down in the mechanical hassles of aligning a more traditional S3D rig.

An AG-3DA1 feeds a 3D plasma screen over HDMI in the main classroom.

The class consisted of about thirty students, fairly evenly balanced between 3D newbies such as myself, early adopters of the 3DA1 looking for training, and Panasonic dealers and rental house folks.

Day 1 started off with an overview of S3D theory and practice, followed by an introduction to the AG-3DA1. We then had a hands-on workshop in the late afternoon. Day 2 commenced with another hands-on workshop, continued with a review of editing and finishing options for 3D material, and concluded with a final Q&A session.

I attended as a guest of instructor Bob Kertesz (whose email sig says, “DIT and Video Controller extraordinaire. High quality images for more than three decades – whether you’ve wanted them or not.©”); there were several seats left over in the 40-person limit for the course, so I wasn’t displacing a paid customer. Mr. Kertesz tag-teamed with Dave Gregory, S.O.C. (whose card says, “Your very own gray-haired LIGHTING CAMERAMAN and EDITOR”), an optical effects specialist whose filmography dates back to the original “Star Trek – The Motion Picture” (the first—and so far the only—big-name Hollywood picture I worked on, grin) and “Star Wars – Episode IV”. These two gentlemen talked through the general theory and practical issues of shooting stereo 3D for the first half of the first day.

S3D Theory

I can’t recount the entirety of what was discussed (for one thing, I’m still absorbing it all myself), but I will list some of the things I made notes on. Please excuse me for not reprising basic S3D theory here!

• Without absolute pixel-by-pixel synchronization between the two views (genlock, at a minimum), motion in the scene shows up as positional disparity (since there’s a time difference between the L and R frames). If it’s horizontal motion / disparity, it reads as false stereo depth; if it’s vertical, it just hurts the eyes. That’s one reason “two Canon 5Ds nailed to a 2×4” won’t work as a stereo rig.

• When you go to a 3D theater, the best seats are at the back, on the centerline. The worst are in the front row and/or off to the sides.

• BSkyB (European satcaster) says “too much coming out of the box is intrusive in people’s homes.” Only 2% (of screen width) disparity is allowed.

• 10% of people don’t see stereo 3D.

• Converging the eyes (to see stuff close) is easy, up to a point. Divergence (seeing stuff past the convergence point; going walleyed) is OK up to about 1 degree; beyond that and “eyes pull out of sockets”—very disturbing, if not downright painful or impossible for most folks. Staying within that 1 degree divergence is generally the limiting factor in S3D depth budgeting, and it depends on screen size and how near that screen the viewer is!

Bob Kertesz demonstrates disparity using crossed sticks representing the L & R viewing axes.
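To see why that 1 degree divergence limit depends on screen size and viewing distance, here is a rough back-of-envelope sketch of my own (not something shown in class). It assumes a 65 mm eye separation, treats the 1 degree as the total angle between the two lines of sight, and uses made-up viewing setups; all function names are mine.

```python
import math

EYE_SEPARATION_M = 0.065  # assumed adult interocular distance (65 mm)


def screen_width_m(diagonal_in, aspect=(16, 9)):
    """Width in meters of a screen given its diagonal in inches."""
    w, h = aspect
    return diagonal_in * 0.0254 * w / math.hypot(w, h)


def max_positive_parallax_m(viewing_distance_m, divergence_deg=1.0,
                            eye_separation_m=EYE_SEPARATION_M):
    """Widest on-screen L/R separation for far objects before the eyes
    diverge by more than divergence_deg (total angle, an assumption)."""
    half_angle = math.radians(divergence_deg) / 2.0
    return eye_separation_m + 2.0 * viewing_distance_m * math.tan(half_angle)


def parallax_fraction(width_m, viewing_distance_m, divergence_deg=1.0):
    """The same limit expressed as a fraction of screen width."""
    return max_positive_parallax_m(viewing_distance_m, divergence_deg) / width_m


if __name__ == "__main__":
    # Hypothetical viewing setups: (label, screen width in m, viewer distance in m)
    setups = [
        ("42-inch TV at 2.5 m", screen_width_m(42), 2.5),
        ("60 ft wide cinema screen at 15 m", 60 * 0.3048, 15.0),
    ]
    for label, width, distance in setups:
        frac = parallax_fraction(width, distance)
        print(f"{label}: about {frac * 100:.1f}% of screen width")
```

With these assumptions the living-room screen tolerates a much larger background disparity, as a percentage of picture width, than the cinema screen does, which is the point the instructors were making.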

• “Depth budget” – the near/far limits of comfortably viewable depth in an S3D image, defined by the disparity of near and far elements. Depth budget is controlled by IA, the convergence point, and the size at which the final image is viewed. Smaller screens allow a deeper depth than larger screens.

• “The one thing that can’t be fixed [relatively easily and inexpensively] in post is depth volume.” If you shoot with too wide an IA, it can be fixed by interpolating between the L and R images; if you shoot too narrow, you have to dimensionalize (e.g., recreate one “eye”) from scratch.

• Vertical disparity is horrifically disturbing, since you don’t see that in real life and your eyes don’t work that way (other bad disparities: size, rotation, distortion, color, exposure; that’s why matching lenses and matching camera settings is so critical).

• Humans normally use binocular depth perception only out to 130 meters.

• “The rule of 1/30”: IA should be no more than about 3% of the distance to the nearest object in the scene. Some say 1/50 (2%); or even 1/100 (1%) for large screen presentations (e.g., 3% for showing on a 42″ screen is OK, but 1% is more appropriate for 60 ft. cinema screens). You can violate this rule for short, quick shots, but not for long takes. Soft focus allows more violations, but has its own issues.

• Corollary: close-ups are “danger, Will Robinson!” S3D prefers medium shots and MCUs over ECUs.

• Horizontal edge violations (where one eye sees something the other doesn’t, because the edge of the image crops it off) are terrible; fix in post with a “floating window” to crop the excess info, but do it with half-second wipes in the affected channel, not hard cuts.

• In S3D, convergence is the substitute for shallow depth of field in 2D.

• Beamsplitter (over/under) rigs have more issues than side-by-side rigs (or all-on-one cameras like the 3DA1) due to the semitransparent mirror: differing reflections and polarization effects. These and other “bandits” (elements appearing to one eye, but not to the other) are especially prevalent with water waves, rain, etc. Organic beamsplitters avoid a lot of these problems, but they’re $1000 each, and they suffer from fungus growth in wet environments, as they found out on “Pirates of the Caribbean 3D”!

• Setting up a two-camera rig is time-consuming. Even a quick one like the Kernercam rig (which is built for 2/3″ box cameras and matched Fujinon zooms) takes about 10 minutes to build and another minute for a “homing” procedure to calibrate the servos to the cameras and lenses—and that’s a very fast one. Calibration needs to be re-done on every lens change, so it’s preferable from a time and money standpoint to stick with calibrated zooms instead of swapping between primes from shot to shot.

The Kernercam 3D beamsplitter rig, with custom-fit cabling preinstalled.

• As far as the learning process is concerned, there are four levels of 3D competence:

Unconscious 3D Incompetence – you don’t know that you’re doing it wrong.

Conscious 3D Incompetence – you know that you’re doing it wrong, but you don’t know how not to do it wrong.

Conscious 3D Competence – you know how to do it right, but you have to think about it to avoid making horrid mistakes.

Unconscious 3D Competence – you have internalized the rules, and you just shoot good 3D naturally.
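The “rule of 1/30” from the notes above is simple enough to turn into a pocket calculator. This is only my own sketch of the arithmetic; the function names are made up, and the 65 mm figure is just an example value (roughly human eye spacing).

```python
# Ratios from the notes: 1/30 for small screens, 1/50 or 1/100 for big ones.
RULES = {"1/30": 30, "1/50": 50, "1/100": 100}


def max_interaxial_mm(nearest_object_m, ratio=30):
    """Largest interaxial (mm) the rule allows for a given nearest object."""
    return nearest_object_m * 1000.0 / ratio


def min_nearest_distance_m(interaxial_mm, ratio=30):
    """Closest the nearest object should be (m) for a fixed interaxial."""
    return interaxial_mm * ratio / 1000.0


if __name__ == "__main__":
    ia_mm = 65.0  # example value, roughly human interocular spacing
    for name, ratio in RULES.items():
        d = min_nearest_distance_m(ia_mm, ratio)
        print(f"rule of {name}: keep the nearest object at least {d:.2f} m away")
```

For a 65 mm interaxial the 1/30 rule works out to a nearest-object distance of just under 2 meters, and the stricter ratios push that out to several meters, which is why close-ups get risky so quickly.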

The AG-3DA1

After lunch, the ubiquitous Jan Crittenden, Panasonic’s product manager for the 3DA1 (among other things), took us through Panasonic’s integrated S3D camcorder.

Jan Crittenden demonstrates the 3DA1 as Dave Gregory looks on.

• The AG-3DA1 is “so much like a standard, 2D camcorder that your initial 3D footage will stink!” You’ll shoot 3D as if it were 2D, because the camera makes it so easy, and boy! Will you ever be sorry! (I can speak from my experience in the following 24 hours that she was saying nothing more than the absolute truth, grin.)

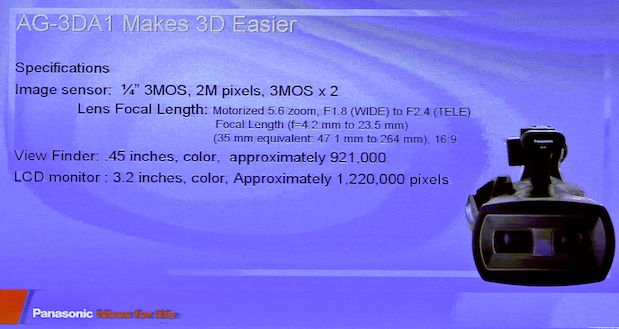

• The zoom is 5.6:1. It started out as 10:1, but as it was optimized for 3D production the zoom range shrank.

Basic tech details for the 3DA1, courtesy Panasonic.

• The 3DA1 was nine months from concept to production, half the time of a typical camera.

• The camera is switchable between 50 Hz and 59.94 Hz standards. It will shoot interlaced, but don’t do it! Instead, shoot 25P or 30P, deliver as 1080i (that way there won’t be any temporal disparity between the channels regardless of presentation technology). Of course, the 3DA1 shoots 24P as well.

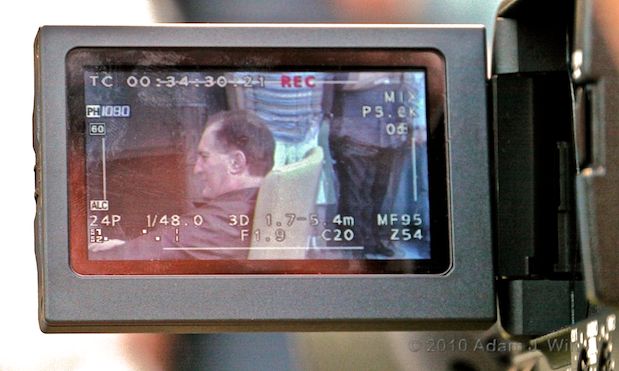

• Convergence is controlled with a side wheel, shared with iris/gain. Settings from C00 (nearest convergence, about 2 meters / 6 feet) to C99 (shooting parallel). The EVF and LCD can show left eye (normal), right eye, or “mix” mode with both eyes overlaid; the camera is converged where the images overlay without disparity.

• The 3DA1 has a fixed IA of 65mm (roughly the same as a grown human’s IO), and its most appropriate shooting range is from 3-30 meters, or 10-100 feet.

• It has wired remote start/stop, focus, iris, and zoom, like other Panasonic handycams (think Bebob and Varizoom remotes), and adds remote convergence control. HD-SDI carries start/stop signals for synchronized offboard recording.

• Camera has HDMI 1.4 output (needed for full-res, two-channel S3D) and dual HD-SDI outputs. HDMI and HD-SDI are mutually exclusive; need to switch HD-SDI off in the menus to get HDMI output.

• “ProRes LT has more than enough headroom for AVCHD transcoding.” (I’m not sure I agree; I see differences between LT and normal when I transcode AVCHD; even differences versus HQ, though those differences are visually insignificant. But I’m splitting hairs; LT is probably fine for most needs.)

• The camera always records PH mode: 24 Mbit/sec overall bitrate (long-GOP AVCHD, with 8-bit 4:2:0 sampling). Class 4 or faster SDHC cards; 180 minutes on a 32 GB card. Sandisk or Panasonic cards recommended, “otherwise, you’re on your own.” Audio is only recorded on the left-channel card, so the left card will fill up faster than the right card: don’t freak out, this is normal.

• The 3DA1 was designed to take you from 2D’s “Guitar Hero Easy” (control focus, iris, and zoom) to 3D’s “Guitar Hero Medium” (control focus, iris, zoom, and convergence). You don’t have to fuss with the rest of the 3D setup as it’s controlled by the camera; the left and right channels are factory-tracked and factory-matched.

• The camera has a “3D Guide” function, showing you your approximate depth budget for your current zoom and convergence settings. It has two presets: when “3D” is shown in white, it’s assuming a 77″ screen; when it’s green, it’s working based on a 200″ screen.

• The 3DA1 uses shift lenses for convergence, not toe-in of the lens axes, so there’s no problem with keystoning.

• Iris ring also controls gain, but according to Bob Kertesz, noise in 3D isn’t as apparent as in 2D, so it’s not a big deal. (I find this to be the case; looking at the camera’s output on the 25″ LCD and 50+” plasma without 3D glasses, the gained-up images are pretty noisy, but once I put on the glasses, I simply don’t notice it. Interesting…).

• It’s $21,000 instead of the $4,000 you’d assume (in essence, the guts of the 3DA1 consist of two $2,000 AG-HMC40s side-by-side) because the zoom lenses are hand-matched, and each camera is manually calibrated with servo look-up tables to ensure that the two lenses track each other precisely.

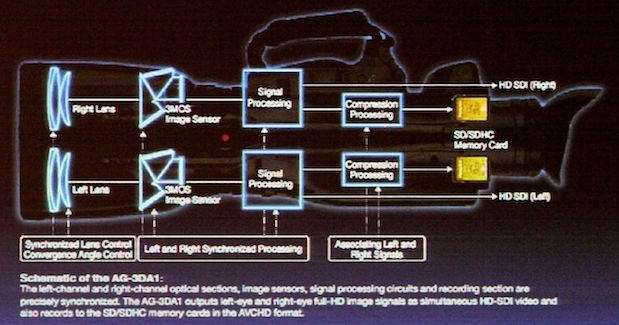

A Panasonic slide showing the 3DA1’s basic schematic.
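As a sanity check on the card-capacity figure quoted above (180 minutes on a 32 GB card in PH mode), here is the arithmetic as a tiny sketch. It is my own estimate, assuming decimal gigabytes, a constant 24 Mbit/sec stream per card, and no file-system overhead; the function name is hypothetical.

```python
def record_minutes(card_gb, bitrate_mbit_s=24.0):
    """Approximate recording time in minutes for one card.

    Assumes decimal gigabytes, a constant bitrate, and no file-system
    overhead, so treat the result as a ballpark figure only.
    """
    card_bits = card_gb * 1e9 * 8
    seconds = card_bits / (bitrate_mbit_s * 1e6)
    return seconds / 60.0


if __name__ == "__main__":
    for size_gb in (8, 16, 32):
        print(f"{size_gb} GB card: roughly {record_minutes(size_gb):.0f} minutes")
```

The 32 GB case comes out a little under three hours, which agrees with the figure Panasonic quotes once you allow for rounding.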

Hands-on, Day 1

We broke up into four groups of seven or eight people each; two groups went with Dave Gregory and two went with Bob Kertesz. Each group was given an AG-3DA1. Theoretically, one group with each instructor was supposed to shoot a dramatic scene in 3D, while the other shot a “making of” docco, also in 3D. Practically speaking, both groups strove to get interesting and informative shots, regardless of assignment.

A couple of folks from the “drama” group were “volunteered” to be actors, so the drama group would have something to shoot (grin). These unfortunates were usually dealers or rental-house folks; it was felt, not unreasonably, that they would have ready access to 3DA1s after the workshop, so their hands-on time in class was less critical. It must be noted that the actors acquiesced to their fates with dignity and good humor.

I was in the docco group with Mr. Gregory (practically speaking, this was the time I shot stills; all action pix were shot that afternoon, before and between the times I got my hands on a 3DA1 or I was helping to wrangle cables or drag monitors around).

Since the 3DA1 sensibly limits near convergence to 2 meters (lest the background divergence go so wide as to instantly fracture a viewer’s skull), a large stage was needed, and we dragged a desk, desk accessories, and two chairs outside to a patio so the cameras would have enough room to work. The two actors went through the motions of a job interview, while the drama crew tried a variety of shots.

Setting up the “drama” cam on the patio.

The docco crew shot the drama crew and also branched out into experimentation: a telephoto shot of someone walking towards and away from the camera, odd angles, shooting through foliage, and so on.

The “docco” crew composes a shot.

The drama camera was tethered via dual HD-SDI lines to a large 3D plasma in the room next to the patio, so folks could watch the camera’s output live. The docco camera’s monitor had only a short HDMI cable, so it went untethered, and the docco crew used the camera’s mix mode alone to monitor convergence.

Several folks used the 3DA1 handheld, just because they could.

Handheld, eye-level.

Handheld, using the LCD.

Try that with a traditional, two-camera stereo rig!

When I got my hands on the camera, I tried a variety of things I was told I shouldn’t: shakycam (not that I like shakycam, mind you; I just wanted to see how much worse it looked in 3D), extremely close foreground elements, tight closeups, and edge violations.

After a feverish period of shooting, everyone went back into the room to watch playback.

Everything we were told to avoid looked really bad in playback. Shakycam was unwatchable—even more so than normal. Edge violations by things in front of the screen plane were execrable. Tight closeups were disturbing. Converging too close, in an attempt to keep close action “inside the box” (behind the screen plane) caused too much disparity in the background, making it impossible to fuse visually. Cutting between shots with widely-varying convergence distances caused headaches.

Y’know? All that stuff about depth budgets and choosing your convergence point carefully? All those rules? They aren’t fooling! I can’t speak for the other folks, but I went away from this exercise with a newfound respect for stereographers and the constraints under which they work.


Hands-on, Day 2

We returned the next morning for a second hands-on session. This time, I was in Mr. Kertesz’s “drama” group—and now I knew enough to be dangerous (the day before, I knew so little as to be downright lethal; being merely dangerous was an improvement). Furthermore, I had watched a 3DA1 test tape that Mr. Gregory had shot at Vasquez Rocks (familiar to anyone who has seen just about any television show or movie shot in southern California from 1931 onwards) in which he narrated his convergence settings, 3D Guide readout, and expected outcome, so I had some cunning plans…

Mr. Kertesz’s room was big enough to accommodate the crews inside, so we had an “office set” up against bright windows for backlighting.

We commandeered a luggage cart to use as a dolly, and tried some slow, sweeping moves, both with a fixed convergence point, and pulling convergence during the shot (if there had been room on the cart, we could have used a focus puller as well). We tried convergence pulls between two actors as the camera panned. We tried the usual “comin’ atcha” trick of having someone FAR AWAY take an extended C-stand and point it at the camera, so that the end of the C-stand was REALLY CLOSE. We shot ECUs and contrasted them with MCUs. We shot from floor level and from ceiling level, to develop the 3D space in different ways. We tried setting convergence on the nearest point in the scene (the shoulder of the actor nearest the camera), and on a midpoint (so the near actor was “in front” of the screen while the far actor was “behind” it). We shot ECUs with both crisp and defocused backgrounds, intentionally creating excessive background disparity, so we could see how defocusing affected our perception of the depth budget violation. When I was operating, I narrated what I was doing as I did it, so I (and anyone else unfortunate enough to be in viewing distance during playback) would have some clue as to why the image was doing what it did when it did it.

As before, we all gathered ’round the big screen for playback. As before, the playback was both sobering and informative.

Convergence set on the near corner of the yellow chair. Image photographed off of the live feed on a Panasonic BT-3DL2550 LCD.

(One side note: we all got to experience the “Super Bowl problem” [1]. Each room had about six or eight sets of 3D glasses, and each room had twice that number of people wanting to use them. There just weren’t enough glasses to go around, so there was a fair amount of backing up and replaying certain shots accompanied by a frenzied redistribution of eyewear—something not as easily done with a live Super Bowl broadcast.)

Having the hands-on work broken into two sessions on different days was hugely helpful. The day before, I was “unconsciously 3D incompetent”, but afterwards I had time to reflect on what I had seen and done. This day, I was “consciously 3D incompetent”, and by the end of the exercise, I was able to guess with fair certainty what would work, what would fail to work, and what would send the hapless viewer scrabbling for barf bag and aspirin bottle. Conscious 3D competence? Let’s just say that I could walk onto a 3D shoot tomorrow if I had to, and not screw up too embarrassingly in the first five minutes… which is not something I could say with any justification at all before attending this workshop.

I know, I know: a lot of verbal handwaving. Where are the pictures? The simple truth of the matter is that you really have to be there to Get It: it’s one thing to sit in a classroom and learn what the rules are; it’s something entirely different to wave a stereo 3D camera around, twiddle its knobs, and see what comes out of its video spigots.

Operating the AG-3DA1

The cameras we worked with appeared to be final-stage prototypes and/or first production-run samples. Once in a while, a couple of the cameras refused to respond to menu selections and had to be power-cycled by pulling their batteries, but aside from that they seemed to be fully functional.

The 3DA1 is light in the hands and quite usable, if slightly front-heavy. It works just like an HVX200, HPX170, or HMC40, except with a few more controls. Both zoom and focus operate smoothly and both L and R lenses move in perfect lock-step even during fast (2 second end-to-end) zooms, something that definitely wasn’t the case with the NAB prototypes.

The EVF’s image is a bit on the small side. The EVF is hinged along its top edge, so putting any pressure on the eyecup tends to rotate it down to its horizontal position; annoying if you’re trying to operate with the EVF pointed up.

The LCD’s image suffered a bit in direct sunlight, but was otherwise quite usable.

Both the EVF and the LCD are sharp enough for focusing.

The iris wheel doubles as the convergence control; you flip a side switch to toggle between modes (Jan Crittenden suggests that you set convergence, then flip it to iris; it’s more obvious when you screw up exposure during a take than when you inadvertently bump convergence). There’s a MIX mode button to toggle mix mode on the LCD and EVF, and a programmable function button defaults to 3D Guide: push once for a small-screen “depth budget”, push again for large screen.

The 3D Guide turned out to be very useful, and accurately predictive of the usable depth in a scene. I can imagine going on a shoot with the 3D Guide alone as my depth-budget calculator; with any other 3D system I’d require a separate app on my smartphone/laptop/tablet/abacus before I’d take the job (now that I know enough to be dangerous, that is; prior to this workshop I’d have been none the wiser about the need for such a calculator).

The 3D Guide readout says “3D 1.7-5.4m”. Mix mode shows convergence set to the near corner of the chair.

By the same token, MIX mode provides a direct visual indication of convergence point, near divergence, and far divergence (Dave Gregory added tape strips to the margins of his camera’s LCD, showing tick marks at 5% intervals and size references for 1%, 2.5%, 5%, and 10% divergence, a very useful thing indeed).

Dave Gregory’s disparity guides on his 3DA1’s LCD.
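If you want to make your own disparity guides, the percentages convert to pixels (or to millimeters of tape on a monitor) with trivial arithmetic. This is my own sketch, not Mr. Gregory's method; it assumes a 1920-pixel-wide frame, and the 70 mm display width in the example is a made-up number, not a measurement of the 3DA1's LCD.

```python
def disparity_px(percent_of_width, frame_width_px=1920):
    """Disparity in pixels for a given percentage of frame width."""
    return frame_width_px * percent_of_width / 100.0


def disparity_mm(percent_of_width, display_width_mm):
    """Same disparity as a physical length on a display of known width."""
    return display_width_mm * percent_of_width / 100.0


if __name__ == "__main__":
    hypothetical_lcd_width_mm = 70.0  # assumption, for illustration only
    for pct in (1, 2, 2.5, 5, 10):
        px = disparity_px(pct)
        mm = disparity_mm(pct, hypothetical_lcd_width_mm)
        print(f"{pct:>4}% = {px:6.1f} px at 1920 wide, {mm:4.2f} mm on the LCD")
```

On a small on-camera display the 1% mark is well under a millimeter wide with these numbers, which is one reason a bigger monitor helps when you are judging convergence by eye.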

I wound up operating in MIX mode the entire time; it wasn’t distracting, and it allowed me to manage my convergence point and monitor my divergence at all times. At times I wished both the LCD and EVF images were a bit larger, so I could discriminate more easily and exactly where my convergence point was, but this was a minor annoyance and not a crippling deficiency.

In short, operating the 3DA1 really is just like operating a standard 2D handheld camera, only with a few more easy-to-use controls thrown in. There’s no onerous setup and alignment, and the camera is as light and portable as any other small-format HD handheld camera.

“Real stereographers” accustomed to complex rigs might consider this cheating—the camera perfectly and invisibly handles all those niggling details of vertical alignment, lens matching and tracking, channel sync, and color and exposure matching that keep most 3D techs very busy—but I found the camera simply “stayed out of the way” and let me concentrate on making pictures.

True, it didn’t let me vary IA, and that limits the usable distance ranges the camera is suited for, but keep things in perspective: an AG-3DA1 rents for $700-$800/day and can be carried and set up by one person without breaking into a sweat, whereas even a compact rig like the Kernercam goes for $5000/day (cameras and Fujinon zooms included) and really needs two people to lug it around.

3D Post

After lunch, Michael Kammes, Senior Applications Editor at Key Code Media, spoke about post options for 3D… for this week. It’s a rapidly-evolving field and things are always changing. Also, he spoke as Michael Kammes, not as a representative of Key Code Media; he warned us that what he said was personal opinion. With those caveats, here’s a very rough summary of his key points:

• Don’t span clips across cards! Makes post very error-prone. Also, use a consistent clip-labeling scheme, such as project/day/unit/camera/eye.

• Always verify frame sync, shutter sync, record start/stop sync on-set, or you’ll be in a world of hurt when you get to post.

• No native editing in 3D… yet. Neither FCP nor AVID handles 3D without plugins.

• Storage: plan for three times what you’re used to: Left eye plus right eye plus the multiplexed output for editing.

• Pre-transcode to your editing format (e.g., while wrangling). Why? Pay now or pay later; where is the stress? Hurry up and wait on set, or hurry up and panic when the deadline is upon you and you need to render your timeline.

• Avid: slightly ahead in 3D. Avid Media Access understands native formats and Quicktime, although it may not play in real time. Get 3D into Avid using Avid’s free MetaFuze program (PC only, s-l-o-w). MetaFuze will create 1920×1080 over/under, side-by-side, or interlaced 3D depending on your monitor’s needs (since this is a single 1920×1080 stream with two multiplexed views in it, it’s an offline format; you’ll need to reconform your full-res sources afterwards). MetaFuze handles LUTs, image flip, etc., and accepts AVCHD media. Media Composer 5 maxes out at 1920×1080 (thus, no 2K resolution, so no ability to fix convergence using HIT, or Horizontal Image Translation, without blowing up and taking a resolution hit).

• FCP: Cineform Neo3D plugin, app, and codec. “Remaster” wraps/transcodes source files into Cineform as .mov or .avi. Active Metadata for real-time eye picking, one-light color-correction; can tweak live with Tangent Wave control panel. “Firstlight” for combining the two views and changing the grade. Works at up to 2K per eye. Neo3D is $3000 and offers realtime output using AJA Kona cards. Alternative: Dashwood’s Stereo3D Toolbox. 3D Toolbox is format neutral (works with AVCHD natively, though it’s a bit slow), has advanced geometry. $1500, no video I/O.

(Bob Kertesz notes that on-set 3D LUTs work in post 10-15% of the time, regardless of camera and toolset, even with a perfectly calibrated Cine-Tal.)

• Monitors: “3D Ready” means it works, “3D Capable” means it can be upgraded for 3D.

• 3D Adapters for feeding monitors: Cine-Tal Davio, 3ality, AJA Hi5-3D.

• Creative considerations: editing in depth, slower cutting and lingering longer, smaller frame in the picture with everything contained in action safe to avoid edge violations.

• Color: Warmer colors appear closer, cooler colors farther away (light green and blue are normally colors of a natural background).

• 3D dailies are still very difficult to deliver; think of encoding farms using Telestream Episode.

• Options for the 3D conform step: Avid DS. Iridas SpeedGrade (common on set, $60k DI system; a bit uncommon and not very stable, limited support). DigitalVision FilmMaster; great, but $100,000 to $200,000, not much US support. Quantel Pablo, $350k, very fast, very proprietary. FilmLight’s BaseLight, GPU scalable, $400k to $750k. Autodesk Lustre, getting old, $100k. DaVinci Resolve, $1k to $80k, plays well with FCP XML. Assimilate Scratch, very RED centric, best RED support.

• Broadcast delivery: typically Sony HDCAM SR masters, with separate left- and right-eye full-res streams; thus need to output to SRW-5800, SRW-5800/2 VTRs. Final answer: what does the network’s red book say?
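The advice above about a consistent project/day/unit/camera/eye labeling scheme lends itself to a little scripting. Here is a minimal sketch of one way to do it; the label layout, the added clip number, and all names are my own assumptions, not anything Mr. Kammes prescribed.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ClipId:
    """One eye of one clip, labeled project/day/unit/camera/eye.

    The trailing clip number is an addition for illustration.
    """
    project: str
    day: int
    unit: str
    camera: str
    eye: str  # "L" or "R"
    clip: int

    def __post_init__(self):
        if self.eye not in ("L", "R"):
            raise ValueError(f"eye must be 'L' or 'R', got {self.eye!r}")

    def label(self):
        """Render the identifier as a single underscore-separated string."""
        return (f"{self.project}_D{self.day:02d}_{self.unit}_"
                f"{self.camera}_{self.eye}_C{self.clip:03d}")

    def partner(self):
        """The matching clip for the other eye."""
        return replace(self, eye="R" if self.eye == "L" else "L")


def unpaired(clips):
    """Return clips whose other-eye partner is missing, sorted by label."""
    ids = set(clips)
    missing = [c for c in ids if c.partner() not in ids]
    return sorted(missing, key=lambda c: c.label())


if __name__ == "__main__":
    left = ClipId("DEMO", 1, "A", "CAM1", "L", 7)
    print(left.label())
    print([c.label() for c in unpaired([left])])
```

A check like `unpaired()` run while wrangling cards is a cheap way to catch a missing eye on set, rather than discovering it in post.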

Overall

I returned from the weekend with my head jammed full of stuff I didn’t even know I should be worried about. I learned a lot more than I expected to, and having two separate hands-on sessions with a working 3D camcorder drove home those lessons far more effectively than any number of hours of book-learning and/or watching existing 3D films.

Is the class worth the $795 that Createasphere is asking? If you haven’t shot S3D before and you have a need to get up to speed with the theory and techniques of the medium, I’d say so. Consider that the 3DA1 alone rents for $700-$800/day, and you’ll also need a 3D display to see the results of your experiments. For the price of a single day’s rental for the camcorder and display, you get a two-day workshop with experienced instructors, including two hands-on sessions with your peers (everyone learns more from watching everyone’s mistakes, grin), and have enough left over to handle some of the travel expenses and hotel if the class isn’t nearby.

And yes: I attended the workshop as a guest, paying only my travel and hotel expenses. But had I known beforehand how much I would learn, I would have spent the money up front; as it was, I simply didn’t know how much I didn’t know, and thus I didn’t know how valuable the training would turn out to be.

Createasphere is doing one more 3D Production Workshop this year: Washington DC, 3-4 December, with both Mr. Kertesz and Mr. Gregory instructing. More workshops are planned for next year, but the schedule, locations, and instructors haven’t been settled yet.

If this is the sort of thing that interests you, it’s worth checking out.

[1] For readers outside of the USA, the “Super Bowl” is a televised annual religious festival lasting several hours. Lavishly-produced hymns of praise for consumer goods and services are interspersed with short periods of heavily stylized violence, called “football” (no relation to the game of the same name played elsewhere in the world). At halftime, a passion play is performed; sometimes a sacrificial “virgin” is ritually defrocked, whereupon the FCC, playing the role of the Deity, metes out divine punishment in the form of staggering fines.

Celebrants traditionally gather in “Super Bowl parties” to worship communally. Parishioners often belong to differing sects (Panasonic, Sony, etc.) and have different large-screen altars; due to doctrinal disagreements in IR protocols their 3D glasses may fail to operate with the altar in the Party House (and some worshippers are insufficiently pious to own 3D glasses in the first place), hence the “Super Bowl problem” in which these unfortunates are denied the full immersive experience, placing their immortal souls in incalculable peril.

FTC Disclaimer: I attended the 3D Workshop as a guest of instructor Bob Kertesz, which saved me the $795 admission fee, though I spent $572.15 on travel, hotel, and food. When Mr. Kertesz offered me the seat in the workshop, I told him I would be attending specifically to learn things, not to write an article on it. He said that was fine; there was no quid-pro-quo, and the only precondition was “no heckling.”

Had Mr. Kertesz not offered me the seat, I might not have attended; I was worried about the 3DA1-specific focus of the workshop and concerned that I wouldn’t learn enough about Stereo 3D in general to make the expenditure of time and money worthwhile. I had written both Mr. Kertesz and previous 3D Workshop instructor Geoff Boyle to get more details before signing up, and expressed my concerns, which was when Mr. Kertesz made his offer. The free admission made my predicted cost $500 instead of $1300; this made it worth attending, even on the off-chance that I might not learn as much about 3D in general as I wanted.

I know Mr. Kertesz from the cinematography mailing list and from various other industry events, but aside from that our paths have not crossed; neither of us has been in a position to hire the other for a gig, nor have we ever worked on the same show.

I have not attended other Createasphere events before, and Createasphere didn’t offer me any considerations other than the free seat. When I told the organizer, Marty Meyer, that I was attending as a student and not a reporter, she said that was fine.

Obviously the free seat influenced me to attend the workshop. I do not think it influenced me to report on the workshop in a biased way—but you should make up your own mind about that.
