When producing large-scale video projections, it’s important to establish some type of preview to gauge how the content will look when projected. There are a variety of techniques available to do this.

While building projections can be incredibly impressive, and they’re usually created and displayed at sizes well above TV and even cinema, the actual process of creating the animation is fairly conventional. The critical step is the creation of a template – which can be as simple as a flattened photograph, a 3D model based on building plans or a CAD model, or even a 3D laser scan of the building. But however the template is created, the animation can be produced using everyday software such as After Effects, Maya, Cinema 4D and so on. No special software unique to projection mapping is required.

If you’re interested in the evolution of building projections, check out this compilation.

If you’re interested in the technical aspects of building projections, check out my earlier video article here.

If you’re interested in an in-depth case study of how I used After Effects on a projection for the Sydney Opera House, check out this article.

Previewing large scale video projections

The real difference between working on a project for broadcast and working on a building projection is understanding what the animation you see on your monitor will look like when it’s projected onto a surface that may be 100,000 times larger.

If that sounds like an exaggeration, then let’s look at some figures.

As an example, my desktop monitor screen measures 60 cm x 40 cm, which is 0.24 m².

The Alfa Bank projection was on a building with a surface of 25,500 m².

That’s 106,250 times larger than my monitor.

It’s also 364,285 times larger than my laptop, which is typical of the type of computer a colleague or client might be watching previews on.

It’s no exaggeration to say that a building projection may end up being 100,000 times larger than it appears on your desktop monitor – or, in this example, 364,285 times larger than it appears on a laptop.
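The arithmetic behind those figures can be checked with a few lines of Python. This is only a sketch of the calculation: the function name is my own, and because the laptop's screen size isn't given above, the last step simply works backwards from the quoted ratio to the screen area it implies.

```python
def times_larger(surface_m2, screen_width_cm, screen_height_cm):
    """Return how many times larger a projection surface is than a screen."""
    screen_cm2 = screen_width_cm * screen_height_cm  # screen area in cm²
    surface_cm2 = surface_m2 * 10_000                # 1 m² = 10,000 cm²
    return surface_cm2 / screen_cm2


building_m2 = 25_500  # Alfa Bank projection surface, from the figures above

print(times_larger(building_m2, 60, 40))  # 106250.0

# Working backwards from the laptop ratio gives the implied screen area in m².
implied_laptop_m2 = building_m2 / 364_285
print(round(implied_laptop_m2, 3))  # 0.07
```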

Working at a desk with a monitor a few feet in front of your face is very different to how the public will see the end result, when they’re standing on the ground in front of a building that might be 50 metres high. So previewing, or previsualisation, is a fundamental part of projection mapping. When working on a projection mapping project some sort of set-up is created to help us preview the work – and while the animations can be created using conventional software and techniques, previewing them can involve more specialized methods.

When the projection surface is flat, and when the basic template is just a flattened photograph, then what you see on the monitor is a pretty good indication of what you get on location, but you still need a bit of imagination to remember that the public are standing on the ground, looking up. Even though the Sydney Opera House is a complex curved shape, a basic flat 2D render still gives an accurate impression of the projected image.

The flat animation file (top) compared to the actual projection (bottom). Even though the Sydney Opera House is a complex curved shape, the flat animation gives a good indication of what the actual projection will look like.

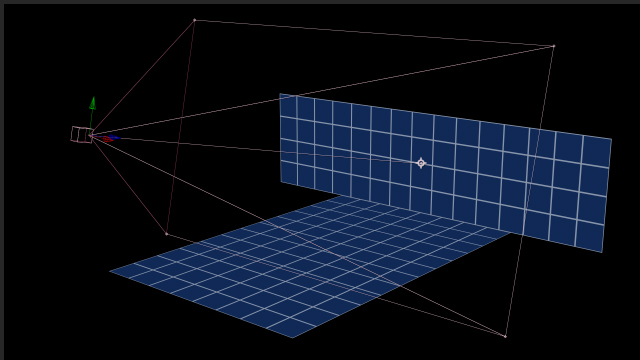

When working on stage shows where there are projections on the floor and the back wall, a basic setup can be created in After Effects to check that these elements align with each other. This can be as simple as arranging the pre-rendered wall and floor layers at 90 degrees in 3D space, which is quick to set up and to render. A variety of cameras can be added to preview the content from different perspectives.

This After Effects composition uses two 3D planes and a camera to preview how a wall and a floor appear together. While simple, it’s extremely effective.

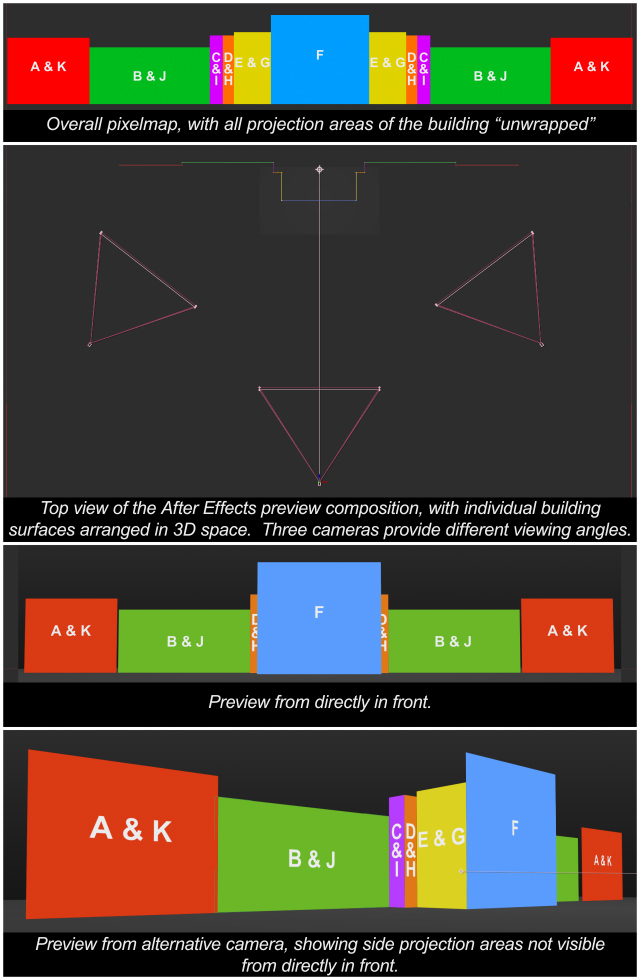

This preview is for a more complex building, but the basic approach is the same. 3D layers are arranged in After Effects and multiple cameras are set up to show different angles.

The next step is to add a few more 2D solids to represent the audience, and any seating or elevated wings. Again, this is pretty simple to do in After Effects and provides valuable feedback on how the wall and floor elements are working together.

This preview still has the same basic setup – just 3D solids in After Effects – but additional detail has been added, such as the audience and the cropping of the stage wings.

If there is content that will be obscured or masked out, seeing it in-situ can dramatically change the sense of pace and timing – as you’re no longer influenced by elements which won’t actually be visible.

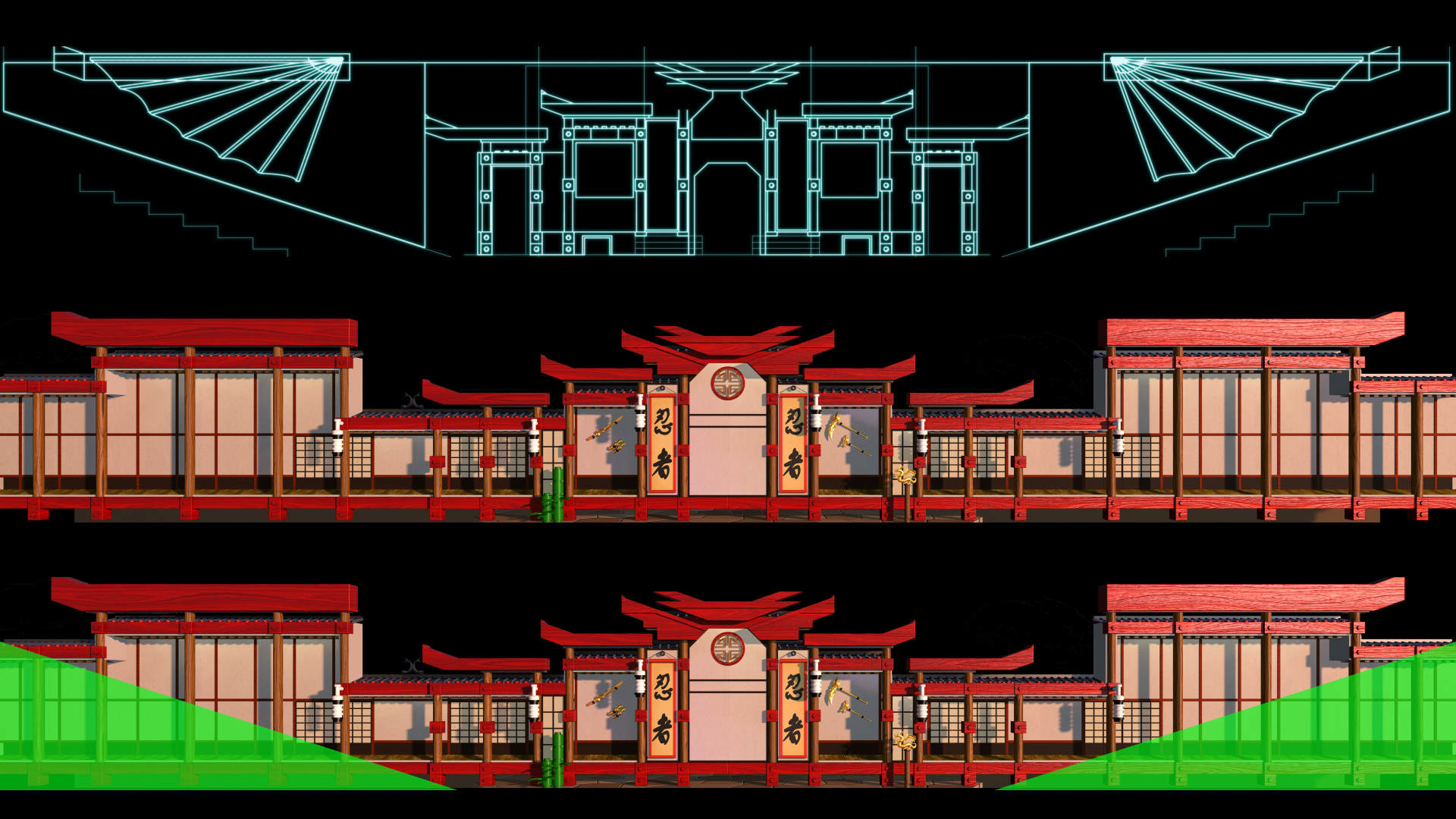

These three images are from a recent project for Legoland. The top image is from the original CAD plans for the stage. The middle image is the raw 3D render of the set, modelled in Maya. The bottom image highlights the areas of the 3D render which are not visible – the shape of the stage and the auditorium obscures this section. When animating elements and adjusting the timing for objects to enter and exit the stage, knowing what is visible and what is not is essential.

However, some buildings are simply so complex in shape that a simple flat 2D preview isn’t accurate enough. The Hong Kong Cultural Centre posed a much bigger challenge, because it’s a very odd-looking building. You can see from an aerial shot that the sides of the building are much higher than the centre, and the whole thing curves around like a croissant.

The Hong Kong Cultural Centre has a very distinctive shape. It is very difficult to judge how a flat image on your monitor will appear when projected onto the curved faces of the building.

When we began work on the Hong Kong Cultural Centre the relationship between the flattened animation template and the shape of the actual building wasn’t intuitive, even for seasoned animators, and so we needed a more sophisticated method to preview our work.

To do this, we used an After Effects plugin called RE:Map, made by Re:Vision FX, to warp our flat renders onto a 3D model of the curved building.

The first step was to create a basic 3D model of the building to use as a background plate. This was saved as a single jpg image.

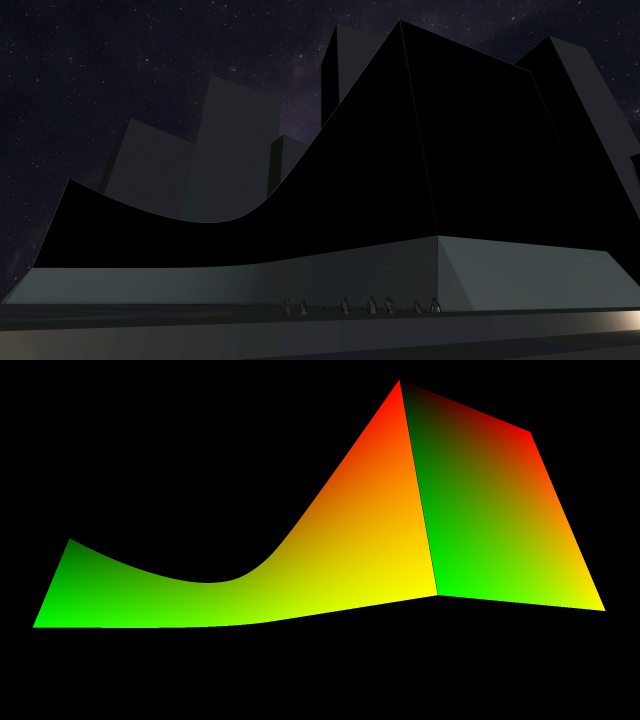

The top image shows a simple background plate modelled in a 3D application. The shape of the building is also rendered as a UV map (below). The red and green channels of the image represent the building’s geometry.

The building was also rendered from the 3D program as a UV map. A UV map represents the geometry of 3D objects using the red and green channels of an image. In After Effects, the RE:Map plugin can interpret UV maps, and warp the flat animation onto the shape of the 3D building – but much faster than actually doing it inside a 3D program.
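To make the idea of a UV map concrete, here is a minimal Python sketch of the general lookup a UV remap performs. It is not how RE:Map is implemented, which I can't speak to. It assumes the UV pass has already been read into rows of (u, v) pairs in the 0 to 1 range (red for u, green for v), that v = 0 is the top row of the source frame (renderers differ on this), and it uses the crudest possible nearest-neighbour sampling.

```python
def uv_remap(source, uv_map):
    """Warp a flat frame through a UV map using nearest-neighbour lookup.

    source: rows of pixel values, i.e. the flat animation frame.
    uv_map: rows of (u, v) pairs in 0..1 taken from the red and green
            channels of the UV render; None marks pixels that are not
            part of the building.
    """
    src_h, src_w = len(source), len(source[0])
    output = []
    for uv_row in uv_map:
        out_row = []
        for uv in uv_row:
            if uv is None:
                out_row.append(None)  # background: nothing is projected here
                continue
            u, v = uv
            # Convert the 0..1 coordinates to a pixel position in the source,
            # clamped so slightly out-of-range values stay inside the frame.
            x = min(src_w - 1, max(0, round(u * (src_w - 1))))
            y = min(src_h - 1, max(0, round(v * (src_h - 1))))
            out_row.append(source[y][x])
        output.append(out_row)
    return output


# Tiny 2 x 2 "frame" and a UV map that rearranges it.
frame = [["a", "b"],
         ["c", "d"]]
uv = [[(1.0, 0.0), (0.0, 1.0)],
      [None,       (1.0, 1.0)]]

print(uv_remap(frame, uv))  # [['b', 'c'], [None, 'd']]
```

Because each output pixel is just a lookup into the flat frame, the warp is cheap, which is why this kind of preview is so much quicker than re-rendering in a 3D program.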

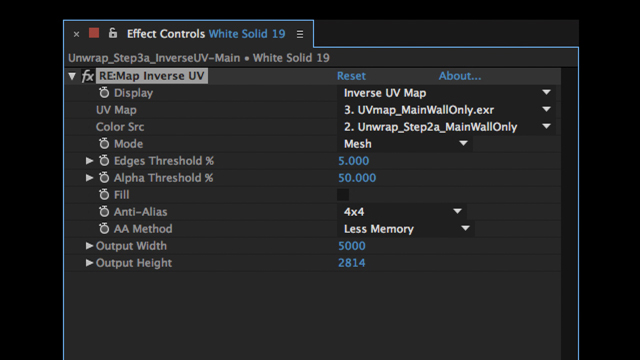

The RE:Map plugin, by RE:Vision FX, enables After Effects to work with UV maps.

This meant that we could create content in a flat 2D world and wrap it onto the curved shape of the building quickly and easily. This gave us a great indication of how the content would work with the building and was also a valuable tool for client presentations.

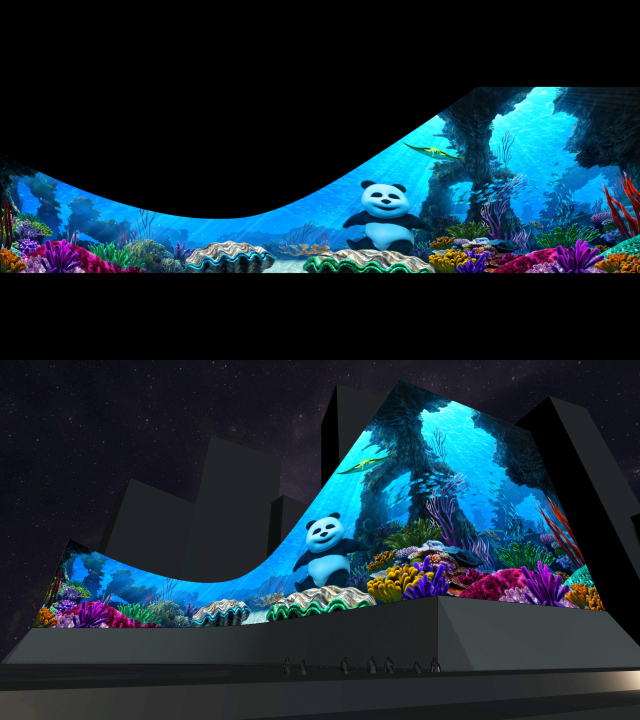

The flattened animation for this underwater scene, for example, can be previewed quickly and easily to check the position and alignment of the various characters with the sides and corner of the building.

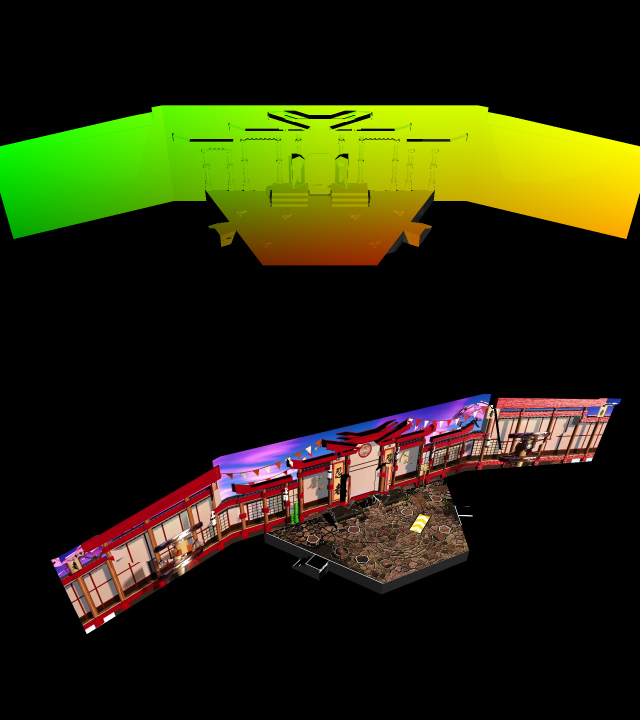

The source animation file (top) as it appears in After Effects. By using RE:Map, we can preview the animation on the curved building surface (bottom).

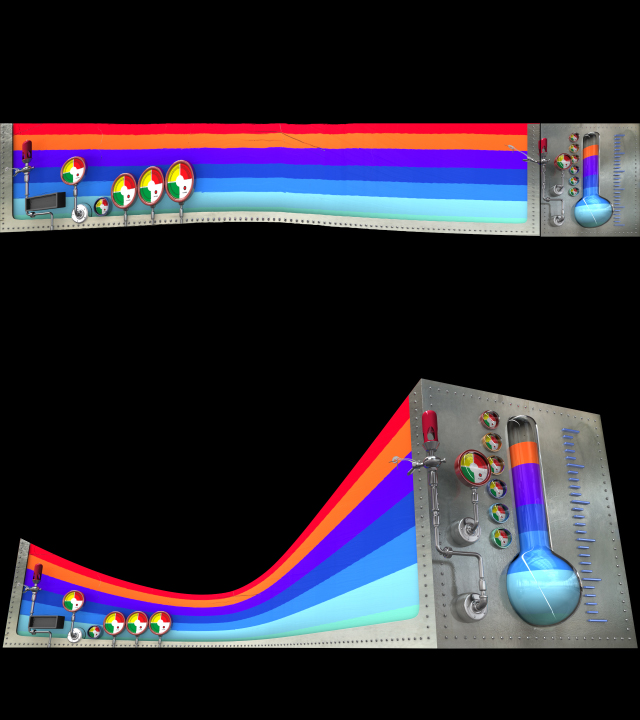

The RE:Map plugin also gave us the ability to combine our 2D and 3D worlds, giving us greater flexibility in compositing by utilizing the different strengths of 2D and 3D programs. 3D animations that had been created using Maya, for example, could be unwrapped using RE:Map into a flattened 2D layer inside After Effects. Text and other graphical elements are much easier to create and design inside After Effects than they are in Maya, and so any motion graphics content could be made using After Effects but still combined with 3D renders. Once the scene had been finished in After Effects, the final composite could be re-warped back to the original 3D shape.

While it’s difficult to explain exactly what is happening here – it’s one of those problems you don’t realise exists until you run into it – these images represent the combination of 3D elements modelled in Maya with 2D elements created in After Effects. The RE:Map plugin was used to “unwrap” the 3D renders into a flattened form where they were composited with 2D graphics in After Effects. The final composition was then wrapped back into the 3D state – again using the RE:Map plugin.

More recently, we used the same approach – UV maps and RE:Map – to check the alignment of projected sets for the Legoland stage show mentioned earlier.

The Legoland stage mentioned earlier was also previewed using UV maps and the RE:Map plugin. This allowed us to check the alignment of the animations with physical set elements such as stairs, columns, alcoves, and other real objects.

The RE:Map approach to previewing renders is very valuable, and we continue to use it on various projection projects. However, when the building is as large as the Hong Kong Cultural Centre, it can still be a bit misleading.

One of the projections we did for the Hong Kong Cultural Centre featured an animated lion dance. The storyboards included lions at several different sizes, including a close-up that filled the entire building surface. I don’t have the original storyboards to show, so you’ll have to trust me that the extreme close-up looked awesome both as a 2D frame, and as a 3D preview rendered using the UV map method demonstrated above.

However, when the crew arrived on site and began doing some projection tests, the sheer scale of the building and its unique bendy shape meant that the content wasn’t working as well as it appeared in our previews. The building is so large – and so curvy – that it’s very difficult to see the entire image from one spot, and so when the extremely large lion head was projected, it wasn’t actually clear what it was. The experience of the public on the ground was very different to what it looked like on our monitors.

So despite how good the original storyboards looked, and how well the concept worked in our preview, after the technical rehearsal there was a mad rush to replace the extreme close-ups with groups of smaller characters.

To avoid this type of problem in the future, the next step was to preview the show from as many different positions as possible.

The latest projection on the Hong Kong Cultural Centre, produced by Artists in Motion, used the same real-time 3D engine found in many games. The flattened animations that we were delivering could be mapped onto the building as we moved around in real-time – just like playing a computer game.

At the top of this frame is the flattened animation file; underneath is the real-time preview being wrapped onto the building. The character can run around the building, look in different directions, and we can see how the content looks from many different angles. This project was completed by Artists in Motion in Sydney for the Hong Kong Cultural Centre.

No matter how advanced the preview may be, however, nothing beats actually testing the images on the building itself.

Testing testing testing…

While previewing as we work is very valuable – and it’s normally essential to provide ongoing previews to the client – nothing beats actual testing on the building surface itself. For large scale events, such as the International Fleet Review staged in Sydney Harbour, several technical tests were held over the months leading up to the show.

It’s well after midnight in the middle of winter… perfect time to do some technical tests.

As I mentioned in an earlier article, the content for the International Fleet Review was being simultaneously projected onto 5 different building surfaces. When the project was initially pitched the intention was to make a single, large source video primarily designed for the main side of the Sydney Opera House, and then we would crop and scale this master for the other surfaces.

However, once we began technical tests it became apparent that all the buildings were far too different in size, shape, aspect ratio and so on to share the same source video, and so we had to create custom layouts of the video for each building.

The video was made up of 7 scenes, and when spread across the 5 buildings this meant we were creating and delivering 35 separate animations.

The different resolutions of each projection site were also critical to the way each composition was formatted. The main Opera House – the west side – was a high resolution projection, while the eastern side – the side that had never been projected onto before – was only about ¼ the resolution and also darker. On a more precise note, the west side of the Opera House had a pixelmap resolution of about 6K, while the eastern side was standard 1920 x 1080 HD.

The top image shows the west side of the Opera House, the side that most people normally see. The bottom image shows a test projection onto the eastern side, which hadn’t been done before. While the content for both sides was essentially the same, the text had to be individually formatted for each side.
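A rough way to see why text suffers on the lower-resolution side is to ask how many pixels a piece of text gets if it occupies the same proportion of each pixelmap. The sketch below is only an illustration: it assumes "about 6K" means roughly 6,000 pixels across, the 60-pixel text height is a made-up example, and it ignores brightness, throw distance and surface texture, which matter just as much on site.

```python
def equivalent_text_height(height_px, source_width_px, target_width_px):
    """Scale a text height so it occupies the same proportion of the frame."""
    return height_px * target_width_px / source_width_px


WEST_WIDTH = 6000  # assumption: "about 6K" taken as 6,000 pixels across
EAST_WIDTH = 1920  # standard HD width, as stated above

# Hypothetical caption that is 60 pixels tall on the west-side pixelmap.
print(equivalent_text_height(60, WEST_WIDTH, EAST_WIDTH))  # 19.2
```

Text that looks comfortable at 60 pixels tall is left with fewer than 20 pixels at the same relative size, which is one reason a straight crop-and-scale of the master wasn't going to work.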

We worked out pretty quickly that if the public turned up to the event and couldn’t read anything we projected, then we’d look pretty silly, so the tests that we conducted were crucial to determine how much text, and at what size, could be projected onto the eastern side while still remaining legible. We used to do these tests late at night – some of these photos are tagged at about 2am, and I always wondered what the few people around at those times made of the sight.

For large text, such as the opening title, the overall composition is basically the same. Other tests on the eastern side showed the limited resolution made smaller text illegible, and so for the eastern side of the Opera House we had to make key text elements bigger, and remove others altogether. While the overall result looked generally the same at all locations, the reality was that we were juggling multiple different timelines in After Effects and many elements were individually animated for each different building.

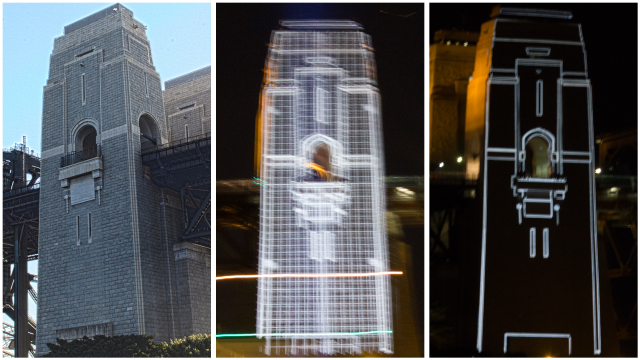

The pixel map for the Harbour Bridge pylons was created from a photograph I took, using the same un-pin process I demonstrate in my earlier article on projections. The pylons responded well to projection, so all the content was very bright and crisp. However, their unique shape – tall and thin – meant that once again all of the content had to be specifically redesigned for their features.

Left – the original photo I took to create the pixelmap. Right – testing the alignment of the pixelmap with the architectural features of the pylons. Middle – even blurry handheld iPhone photos could be useful to gauge clarity and resolution.

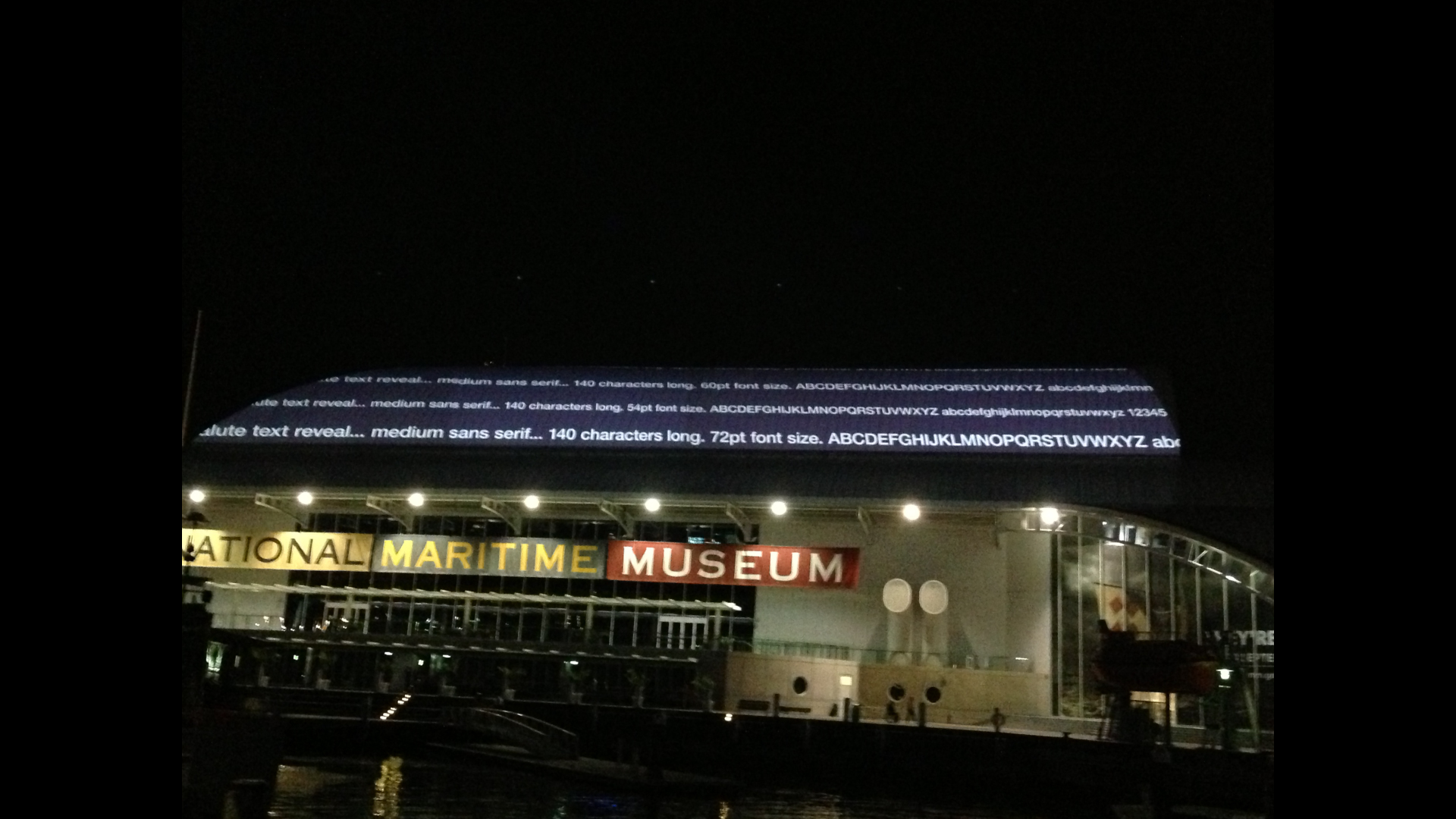

Projecting onto the maritime museum was especially difficult, as the curvature of the roof required a number of pre-processing steps in order to prepare the animations for playback. Because of the curvature of the roof and the warping required to deliver the final output, the basic animation template for the maritime museum wasn’t as intuitive to work with, and it took a few nights of tests to understand how the template we had corresponded to the actual building.

While I created many test patterns to help ascertain the unique characteristics of the Maritime Museum roof, again one of the more critical things to determine was the legibility of text. For our first night of tests, I created a long list of text at various font sizes, and also tested the difference between sans-serif and serif text. I got quite a shock when the largest line of text I had created was still a blurry mess, so I had to go back and create another test pattern using much larger font sizes. If it hadn’t been for this real-world test, I would’ve continued to work with text that was far too small to read – and the end result would have been very embarrassing for everyone involved.

The first test on the roof of the Maritime Museum showed that even the largest text I had considered using was still too small to be legible. If I hadn’t checked this early on, the results would have been very embarrassing!

A follow-up test with larger text proved to be much more successful.
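A text test chart like this is quick to generate. The charts for this project were built by hand, so the following is just one possible sketch in Python: it writes an SVG with a serif and a sans-serif line at each size. The font sizes, sample string and frame width are placeholders, not the values used on the project.

```python
from xml.sax.saxutils import escape


def text_test_chart(sizes, sample="THE QUICK BROWN FOX 0123456789", width=1920):
    """Build an SVG test chart: one sans-serif and one serif line per font size."""
    lines = []
    y = 0
    for size in sizes:
        for family in ("sans-serif", "serif"):
            y += int(size * 1.4)  # line spacing proportional to the font size
            label = escape(f"{size}px {family}: {sample}")
            lines.append(
                f'<text x="20" y="{y}" font-size="{size}" '
                f'font-family="{family}" fill="white">{label}</text>'
            )
    height = y + 40  # leave a margin below the last line
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{width}" height="{height}">'
        '<rect width="100%" height="100%" fill="black"/>'
        + "".join(lines)
        + "</svg>"
    )


# Hypothetical sizes; doubling each step covers a wide range in few lines.
chart = text_test_chart([24, 48, 96, 192])
```

Saving `chart` to a `.svg` file gives an image that can be imported or rasterised at the pixelmap resolution. Labelling each line with its own size means a photo taken on site tells you straight away which sizes survived.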

In a similar manner, I also made a number of different grids, to work out how thin lines could be before they blurred into invisibility, and also how small the grid spacing could be before the grid itself turned into just a colour wash.

Similar tests established how thin lines could be before they became invisible, and how closely spaced grid lines could be before they appeared to be just a solid colour.
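The grid tests can be sketched the same way. This Python example generates a grid with a chosen line thickness and spacing and serialises it as a plain-text PBM image. The function names and the PBM format are my own choices for the illustration, and the `coverage` helper is just a rough way to show how a grid approaches a solid fill as the spacing shrinks.

```python
def grid_pattern(width, height, spacing, thickness):
    """Return rows of 0/1 pixels, with 1 wherever a grid line falls."""
    return [
        [1 if (x % spacing) < thickness or (y % spacing) < thickness else 0
         for x in range(width)]
        for y in range(height)
    ]


def coverage(rows):
    """Fraction of pixels covered by lines; 1.0 means a solid fill."""
    total = sum(len(row) for row in rows)
    return sum(sum(row) for row in rows) / total


def to_pbm(rows):
    """Serialise the pattern as a plain-text PBM (P1) image, where 1 is black."""
    header = f"P1\n{len(rows[0])} {len(rows)}\n"
    body = "\n".join(" ".join(str(p) for p in row) for row in rows)
    return header + body + "\n"


print(grid_pattern(4, 4, 2, 1))
# [[1, 1, 1, 1], [1, 0, 1, 0], [1, 1, 1, 1], [1, 0, 1, 0]]
print(coverage(grid_pattern(4, 4, 2, 1)))  # 0.75
```

Rendering a series of these with different `thickness` and `spacing` values, and photographing each one on the building, gives a simple record of where lines disappear and where the grid closes up.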

The International Fleet Review was the largest event ever staged in Australia, and so it was important to get the details right – such as making sure everyone could actually read the words on the buildings! Although we hadn’t initially planned on creating customized versions of each video for each surface, it was clear that it was something we had to do, so we simply got on with it and did it.

While I’d rather be in bed at 2am in the middle of winter, technical tests on the actual buildings were essential to guarantee the success of the final show. No matter what technique we use to preview our animations, whether simply watching the flattened animation on our laptop, or using more advanced techniques such as UV mapping or real-time 3D engines, nothing is more valuable than seeing the footage projected onto the building itself.
