Our discussion today is with Ben Insler. Ben was the assistant editor on David Fincher’s feature film, Mank, which was edited in Adobe Premiere. David Fincher’s production company has its own post house – Number 13, which I have had the privilege of visiting in the past – and it is really on the cutting edge of post, with Premiere at its core for several years.

Ben has been an assistant editor on Mindhunter – which is also a Fincher production, edited at Number 13 – and has worked as an assistant with Oscar-winning editor Kirk Baxter at Kirk’s commercial editing house, Exile. Ben’s other work includes editing numerous TV series and specials.

I’ve already interviewed Kirk about Mank – and that discussion of the craft will be coming in a few weeks – but Kirk turned me over to Ben for a discussion of the workflows and technology of editing Mank, including their mid-stream move to having the entire editing team cutting from home when COVID hit California in mid-March.

Also, if you are interested in reading previous interviews with Kirk about Gone Girl, check out this link. Or to read his interview with Tyler Nelson about editing Mindhunter, check out this link.

This interview is available as a podcast.

(This interview was transcribed with SpeedScriber. Thanks to Martin Baker at Digital Heaven)

HULLFISH: Let’s talk about what the workflow is like, what the schedule is like, and how you determined how Premiere was going to work for this project.

INSLER: Premiere has been the foundation for the last several projects here at Number 13.

HULLFISH: Just to fill folks in — David Fincher has his own personal post house for his projects called Number 13… I visited you guys a few years ago at the start of Mindhunter. It’s a nice facility.

INSLER: Thank you. I had worked with Kirk for a while, but I started at Number 13 with Kirk and David and that whole team in the middle of Mindhunter season 2. They’ve been using Premiere for a little while. An established pipeline was in place when I got there. It wasn’t like we were building a whole pipeline from scratch.

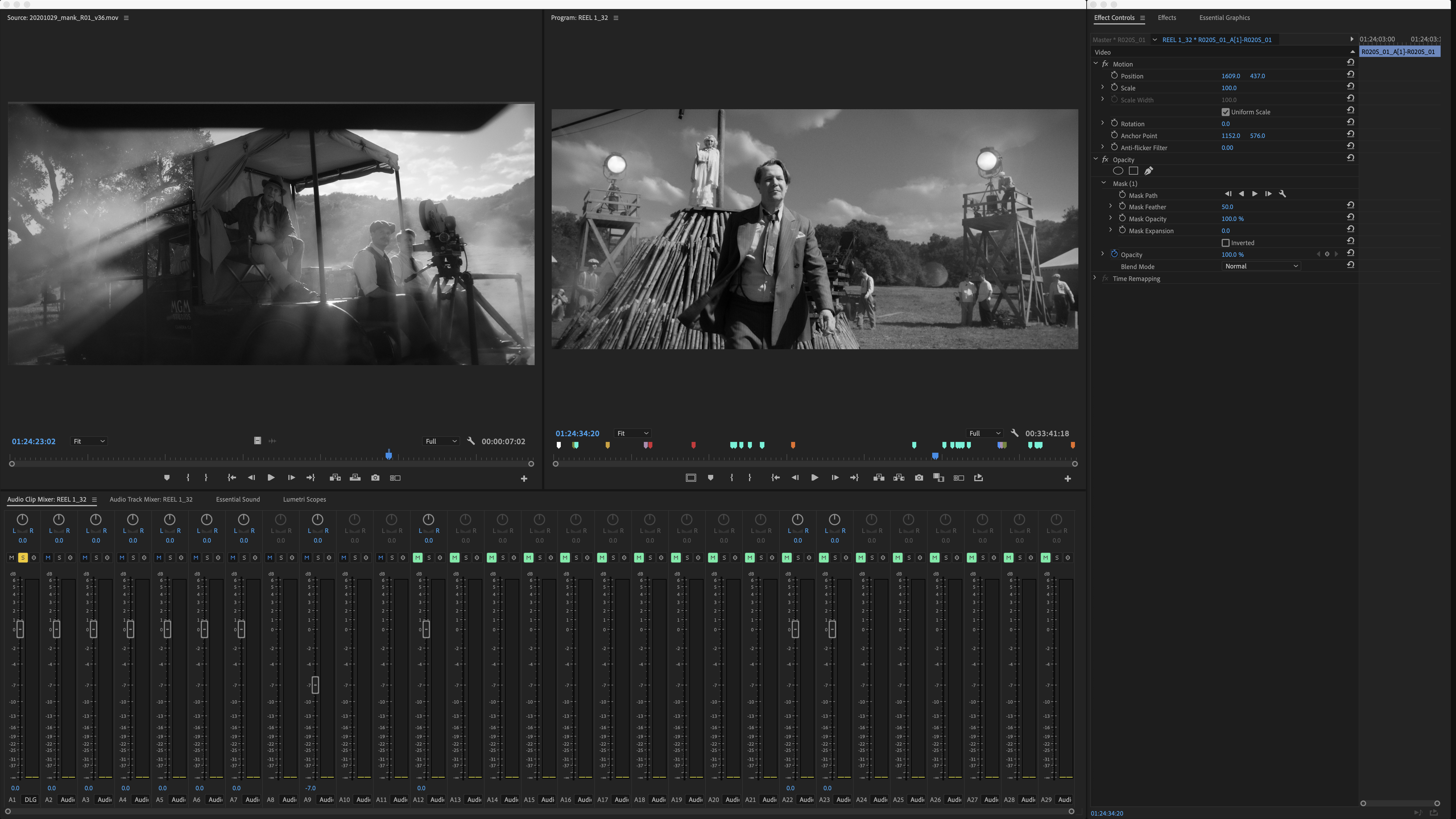

One of the things that’s really powerful about Premiere and the way that we use it is that we shoot everything with stabilization in mind and we shoot everything with visual effects in mind, as opposed to knowing that it can happen but treating it as two sort-of separate stages where we get the edit done and then the visual effects happen.

So in editorial we actually work very fluidly between the two. In the offline stages we will be doing offline comps and offline visual effects (all of the assistants will, and Kirk will be doing it in the timeline himself as well), having this sort of symphonic balance between Premiere and After Effects.

So there is the freedom to do that work in the timeline in Premiere, bouncing back and forth very fluidly to After Effects without having to do a lot of rebuilding. It kind of does all of the setup for you. And we have some customized scripts that we have written, like JavaScript in After Effects, to make that a little bit more streamlined so that we’re not creating the same nulls and sets of templated working structures over and over and over again.

We just press a button and it does it. Actually, we have two ways of doing it depending on who’s doing it. I have one preference. Our other Assistant Editor, Jennifer Chung has another preference. So we built two separate functions, but we have all that in place so that we can work very fluidly.

We’ve extended some of that on Mank. We didn’t have that automation in After Effects before Mank. But that’s one of the reasons why Premiere is used.

HULLFISH: So at Number 13, there is a color grading suite. And, as you mentioned, some really powerful custom scripts — especially for ingest right? Ingest and all the organization and adding of metadata is custom scripted. Can you talk to me a little bit about that?

INSLER: Absolutely. To go back to what you said about in-house color grading, we do have a number of edit suites. During Mindhunter we expanded out to three dedicated editors’ editing suites operating in parallel, while there were a number of assistant editors also operating in parallel, all in the same building and all tapped into the same centralized high-speed storage.

And then, I believe it was on Mindhunter season two, we renovated the color grading suite. So that is now a fully-fledged DI theater. Mank was entirely graded in that suite and Mindhunter was entirely graded there, too. We all have an opportunity to actually see everything on a broadcast SDR monitor, or an HD monitor, or up on a 20-foot screen. It’s pretty amazing. Just walk downstairs if you want to see how something is going to look.

We do have a variety of additional scripted tools. One of the big ones that we’ve been using for a while, created by Billy Peake and Tyler Nelson when they were assistant editors at Number 13, is this platform called Dispatch, which is an extensively scripted version of Filemaker (https://www.youtube.com/watch?v=nIn7LULP95Y&feature=emb_title).

A lot of editorial teams and post-production teams use Filemaker as their database for tracking dailies — as a codebook for tracking visual effects. Those guys really took it to the next level to actually be able to use that database as an automation platform for ingesting dailies and for doing visual effects turnovers.

So we process all of our dailies for Mank in Fotokem’s Nextlab. (https://postperspective.com/fotokems-nextlab-next-step-gone-girl-beyond) Once that’s all rendered for us, it also has the ability to export a metadata file, which we then ingest into our Dispatch Filemaker database. Using that data, Dispatch can create XMLs that can be imported into Premiere. Through a little bit of side-work with Adobe, simply importing that XML has Premiere create all of the multi-cams, already set up for us and strung out in the order that we want in a timeline, so that we can get right into breaking down the dailies and getting them over to Kirk.
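As a rough illustration of the grouping step Ben describes, here is a minimal Python sketch that reads a dailies metadata export, groups clips that share a scene and take into multicam groups, and writes them out in string-out order. Everything specific in it is an assumption made for illustration: the column names (`clip_name`, `scene`, `take`, `camera`), the numeric scene numbers, and the XML element names are hypothetical. It is not the Dispatch logic, and the output is not the XML schema that Premiere imports.

```python
import csv
import io
import xml.etree.ElementTree as ET
from collections import defaultdict

# Hypothetical metadata export; a real export will use different columns.
SAMPLE_CSV = """clip_name,scene,take,camera
A001_C003,12,1,A
B001_C003,12,1,B
A001_C004,12,2,A
B001_C004,12,2,B
A001_C001,11,1,A
"""

def group_multicams(csv_text):
    """Group clips sharing a scene and take, ordered by scene then take."""
    groups = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Assumes purely numeric scene and take values.
        groups[(int(row["scene"]), int(row["take"]))].append(row)
    ordered = []
    for key in sorted(groups):
        # Within a group, order the angles by camera letter.
        clips = sorted(groups[key], key=lambda r: r["camera"])
        ordered.append((key, clips))
    return ordered

def build_stringout_xml(ordered_groups):
    """Serialize the groups to a generic, made-up XML layout."""
    root = ET.Element("stringout")
    for (scene, take), clips in ordered_groups:
        mc = ET.SubElement(root, "multicam", scene=str(scene), take=str(take))
        for clip in clips:
            ET.SubElement(mc, "angle", camera=clip["camera"], clip=clip["clip_name"])
    return ET.tostring(root, encoding="unicode")

ordered = group_multicams(SAMPLE_CSV)
xml_text = build_stringout_xml(ordered)
print(xml_text)
```

The point of the sketch is only that once scene, take, and camera live in structured metadata, syncing and stringing out become a sort-and-group problem rather than manual work.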

HULLFISH: For you, that means as an assistant, you can spend more time doing things that are more productive or at least more creative or more interesting, right?

INSLER: That is all true and we can also not feel like we are in as much of a rush to get all of the technical bookkeeping done and also the really important creative work done. Even though some of that creative work on our end is still bookkeeping.

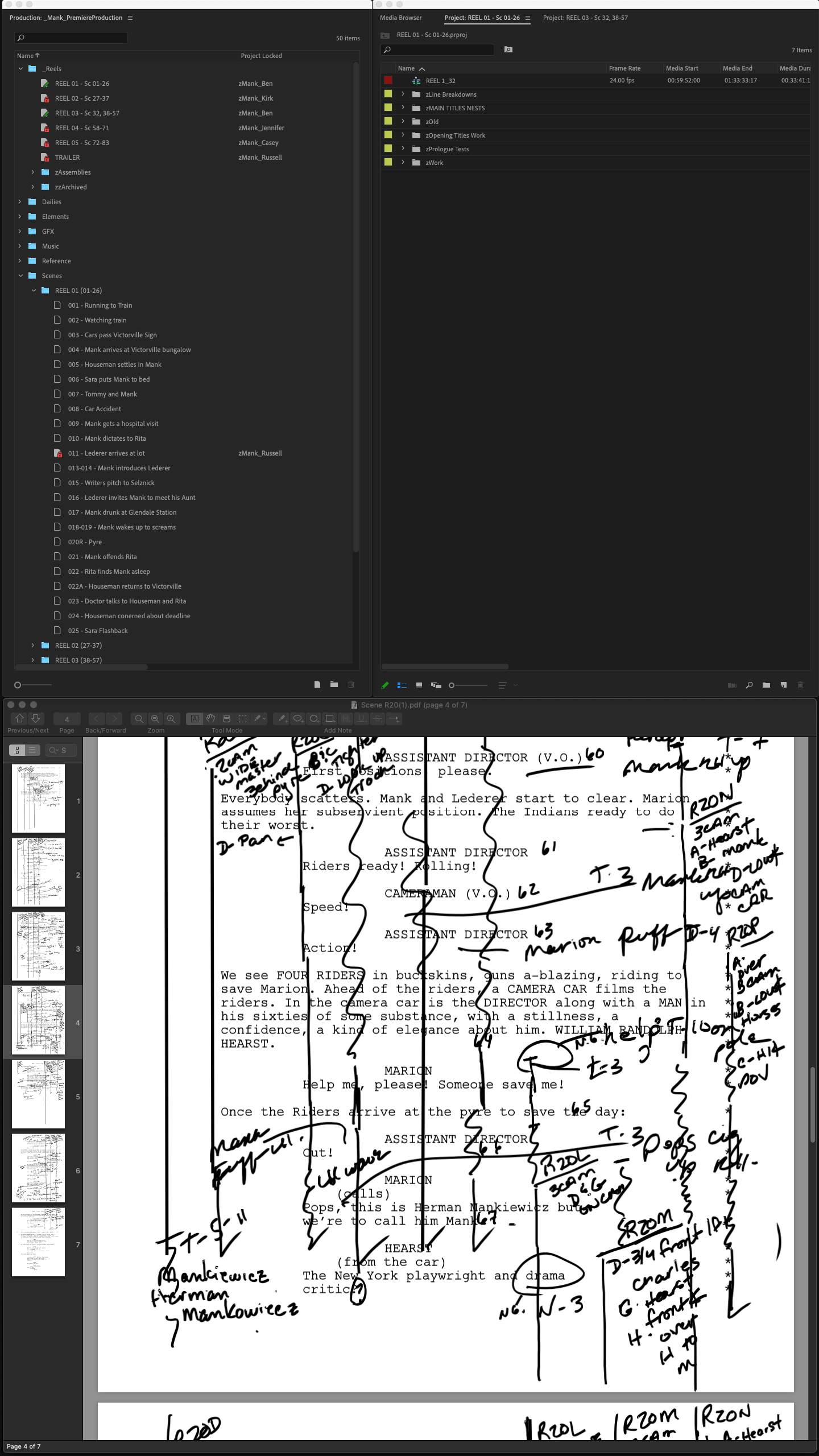

When we’re going through the process of preparing dailies, and we’re trying to get all of the script supervisor’s notes transposed from the page onto the clips — so that as Kirk is reviewing, he can actually see those notes in real-time in Premiere — we put all of that data onto the clips themselves.

Dispatch actually has some methodology for being able to take some of that data from digitally recorded script notes and put them directly onto the clips in that XML ingest process, but we still go through and make sure that it’s all accurate. We make sure that everything is transposed correctly.

Maybe there was a little note scribbled on a script that didn’t actually make it into an editor’s log or a facing page. So we’ll track all that stuff. Then, by getting all of the syncing and multi-clipping string-out steps out of the way, it gives us more time to make sure that’s right because we work at a very fast pace.

If we shoot on a Monday, those dailies are going to Kirk on Tuesday morning. We have at least one person that’s starting on those at 8 a.m., so that when Kirk comes in they are ready for him. So those tools are really important.

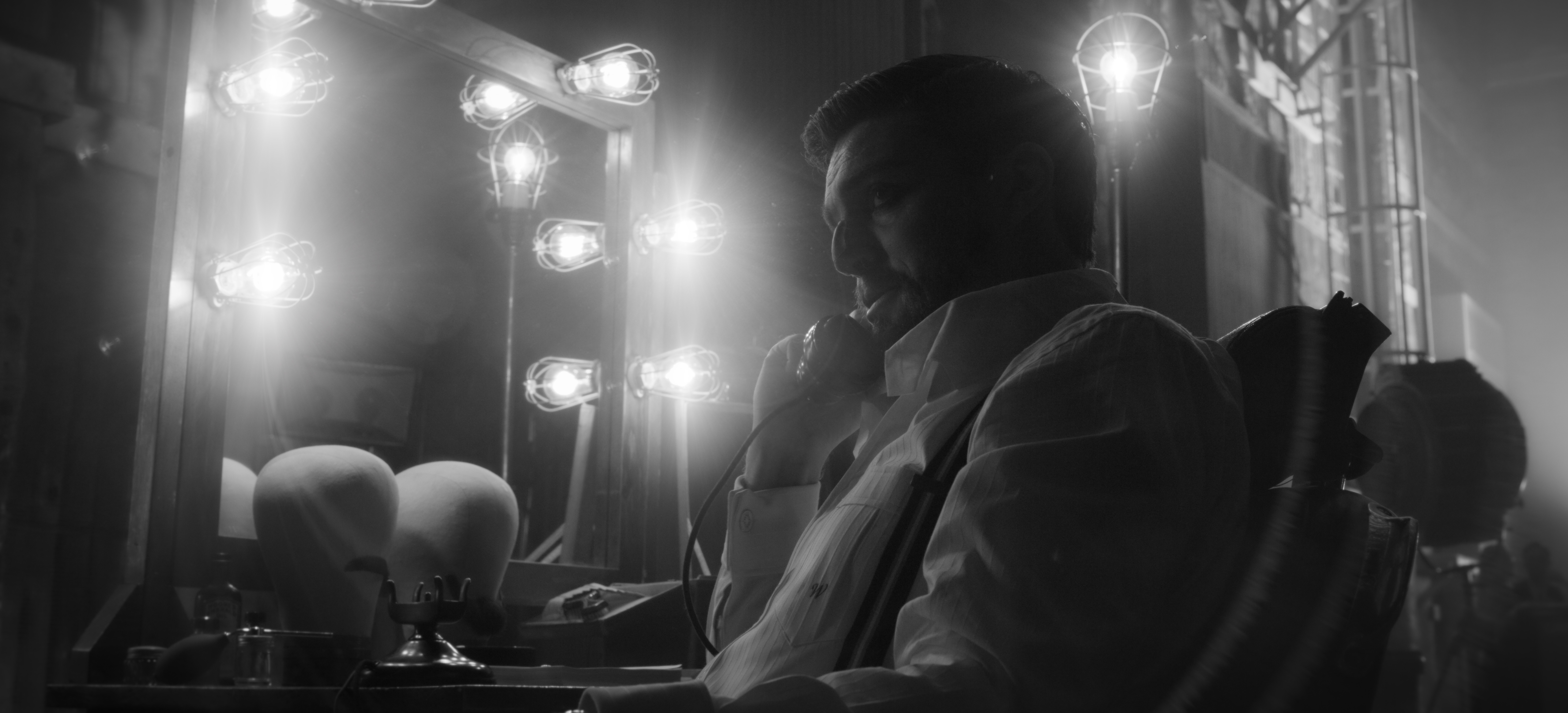

David Fincher’s MANK is a scathing social critique of 1930s Hollywood through the eyes of alcoholic screenwriter Herman J. Mankiewicz (Gary Oldman) as he races to finish the screenplay of Citizen Kane for Orson Welles. Gary Oldman as Herman Mankiewicz and Sean Persaud as Tommy. Cr. Gisele Schmidt/NETFLIX

HULLFISH: Does Dispatch also include data from camera reports or audio reports?

INSLER: It doesn’t incorporate the audio and the camera reports. That’s actually done manually as the dailies are being processed by the dailies tech. Things like starred takes and all that information do come through. It’s just more of a process that it gets put in manually as the dailies are being processed and then when we get that metadata file from the NextLab, it’s all embedded in there.

HULLFISH: Is the NextLab set-up embedded at Number 13 or is that an outside process?

INSLER: It’s embedded at Number 13 while we’re in production but it is not a mainstay. It comes in when we’re in production and then, once shooting is complete and we have no need for it any more, they leave.

Though we sometimes do this process that we call rebaking. We’re shooting everything in a RAW format and then, in order to turn it into editable dailies, we bake certain things down, like the exposure, the ISO, the color texture, even some look details.

Every once in a while you’d have something happening where there are clouds passing by, so the exposure is changing slightly. Then, as that gets edited, the part that gets used has become hot in that take because the clouds are gone, even though overall the exposure of the shot from when it started rolling is actually correct.

So we will actually go back and reprocess that shot through NextLab so that it more accurately represents the correct balance in the edit, based on how the shots are being used. So we will hang onto the NextLab for a little bit after production stops, just in case that last week of shooting needs some rebakes. The NextLab system is pretty powerful, so generating those dailies takes minutes as opposed to what would be hours.

HULLFISH: And for someone who hasn’t worked with NextLab, it’s basically like a cart with a bunch of gear? (https://www.fotokem.com/#/production)

INSLER: We actually get it set up as an office. But yes, it can be. I don’t know how much it can be a cart the same way that you have a DIT cart or sound cart because there is a lot of interplay between multiple systems.

I believe that our system had one centralized server that we actually tapped into our fiber network, and we gave them a dedicated fiber network that was down in our machine room. Then there were two other computers up in an office for the DIT to use. One of those was used for ingest, organization, and metadata processing, while the other was used for the actual transcoding. He’s got a video monitor in there too, so just in one room, there are two computers and five or six screens. It’s a little bigger than a cart.

HULLFISH: For those who have been in a cave and haven’t seen even the trailer for Mank, it’s in black and white. Was it shot in color?

INSLER: The decision was made to shoot it entirely in black and white. From the start, it was shot on a RED monochrome sensor. I remember having a conversation with some of the other assistants when some paparazzi caught a day on location with Gary Oldman outside of a hotel and it was the first time we actually saw the color of the suit that he was wearing!

We really sort of dove in and lived in that world of black and white which is actually quite fun. You get past the initial shift of, “We’re not working in color,” and it was actually very quick to make that shift.

If you’ve seen any of the trailers there’s a variety of processing that’s being added. It’s actually different from the trailers. There’s a variety of processing to give the footage an older-age feeling. It’s not black and white with a crisp, perfect, digital modernity to it.

We started that way back in the dailies process. One of the things that David wanted to do was give the footage a black bloom that would have been representative of one of the older film stocks, where, as the film was processed, the blacks sort of leach out into the more exposed areas.

The darker areas of the image kind of get this little black halo to them the same way that if you’re shooting a really, really bright thing, that bloom leaches a little bit over the darker areas. It’s the same kind of idea in a way.

We figured out a way to do that with Fotokem in our NextLab process. So we had a representative look, though very untweaked at that point, all the way through the edit of the film.

HULLFISH: That’s awesome. There’s a good Sapphire plug-in called Glow Darks that does something really similar. (https://borisfx.com/effects/sapphire-glowdarks/).

INSLER: It’s a very cool look. One of the things that we did learn right from the beginning is that we built a few different types of bloom depending on what LUT was being used for what day. Were we shooting day or night? Or was it day-for-night?

What we found out was that, depending on the overall balance of the image and how light or dark the image was based on its exposure, sometimes the bloom that we had set was way too strong or actually not really seen at all. So we may have tweaked a little bit of that in the offline process when the dailies were being processed, but when that effect went down to Eric in the color grading suite, it was tweaked just as much as any other grading step would be, to make sure that it worked with every shot.

HULLFISH: We’ve talked a lot about the automated processes and the use of metadata. What is the purpose of the metadata that you’re spending so much time and effort to make sure is baked into these clips? What is it getting used for?

INSLER: There are two sides to that. There’s the metadata of the clips, and there’s the metadata of the process of the day. The metadata of the process of the day helps us to get everything tracked through the dailies ingest process and most of that is either translating data from script notes, from camera reports, and sound reports, like you were saying.

Then there’s the metadata that’s recorded as the shoot is happening. All of that is being recorded and tracked so that we have perfect control over the image through the entirety of the pipeline because we’re shooting REDCODE RAW.

So when we do something like rebaking a shot, we will track in our database that that shot has been re-exposed or the ISO has been changed from 800 to 600 or 400. Then when those frames are actually turned over to Eric in the color grading suite, that’s where he will start his exposure balance for the image.

So he knows that David has made those notes and we’ve already made those adjustments so that he’s not starting from scratch and having to rebuild or reinvent the wheel. That to me is really why all of that metadata is tracked — so that we can work with a flexible, fluid image. And at the same time, not treat this as more traditional pipelines would where every single stage kind of does their best work, but kind of starts from scratch.

HULLFISH: Were you guys working through COVID on this? Did any of this have to happen remotely?

INSLER: We actually went remote for COVID a few days before California itself decided to go remote. We started seeing it developing and all of us got — rightfully so — cautious of it. We started talking about it well beforehand. We have a little bit of an advantage that my mother is actually an infectious disease specialist in New York, so I was hitting her up for info.

One day Kirk, (producer) Ceán Chaffin, and I sat down in Kirk’s editing room and I had already talked with Peter Mavromates, our post-supervisor, about trying to figure out how we would do it — getting media to everybody and did we have storage, did we need storage. How do we coordinate all this?

We just made the call. We need to do this. I believe California closed all businesses and started the stay-at-home order on a Wednesday, and we actually had everybody working from home on the Monday before that. We’re pretty lucky at Number 13. As long as I’ve worked with David (and I worked in commercials at Kirk’s commercial editing company, Exile, before working for David at Number 13), it’s always been a digital, very versatile process working with him.

He’s very fluid and he does not need to be sitting in a room in order to review an edit and have a laser focus on what comments still need to be made. So I think we had a little bit of an edge going into that. Some of those foundations were already set up previously on Mindhunter when they were shooting in Pittsburgh.

There were times where Kirk would travel to Pittsburgh and work with David for a week while the shoot was still happening. So even before I got to Number 13 they had built some protocols to be able to handle that.

Then once COVID hit and we made the decision to go home, I realized that it needed to be much more robust. We set Kirk up and we set all of the assistant editors up (that would be me, Jennifer, Russell, and Casey), so five people in all, to work from home.

We gave everybody a copy of the media in H264.

HULLFISH: Local?

INSLER: All local. H264 is a lot smaller so it means that instead of the dailies being 60 terabytes, it’s 18 terabytes. There’s a lot of other stuff to consider. There are visual effects that are constantly being delivered. There’s music that’s being composed by Trent and Atticus that’s coming to us continuously.

And it’s like the After Effects handoffs — I’m going to be generating After Effects files and renders and Russell Anderson is gonna be generating After Effects files and Jennifer’s gonna be generating After Effects files. How are we going to keep all that in sync?

So developing this multi-faceted cross-sync capability was the big complexity of it all. Thankfully on Mindhunter, we had shifted around our infrastructure a little bit so that we had a VPN in place already and that actually turned out to be an incredible help as well because rather than keeping everybody isolated on their individual home local machines we used the Number 13 offices and the ability to VPN as the central main hub. We would call it the mothership and everybody would sync up to that and then it would feedback out to everybody else.

We used two platforms to do that. We used Resilio Sync. Resilio Sync is really good at syncing small files very fast. It is NOT great at keeping track of very large files, like changing video media. And it is not good at detecting changes made by multiple users across a server. For example, if you and I are on two separate computers that are accessing the same server and you make a change to a Premiere project file, my Resilio Sync will not see that. Even though we’re both on the same server, if we’re then syncing with someone in a remote location, that change that you made may not get synced for a while.

We decided to use Resilio Sync for the Premiere projects and the Premiere “production” because of its ability to keep those things in sync in real-time. That works especially well for things like locked files, where a project locks very quickly if Kirk opens it. Otherwise Kirk opens up reel one, then I open up reel one, and it turns out that we are competing with each other. One of us is going to delete the other person’s work.

So using that actually allowed us to use a Premiere production remotely with multiple different people working in multiple different locations and feel like we’re all working on a centralized server together.
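To make the locking idea concrete, here is a minimal Python sketch of a lock file that lets only one person hold a project at a time. It is an illustration of the general technique only. It does not reflect how Premiere productions implement their locks, and the `.lock` suffix, the function names, and the file name `reel_01.prproj` are all hypothetical.

```python
import os
import tempfile

def try_lock(project_path, user):
    """Atomically create '<project>.lock'; return True if this user got it."""
    lock_path = project_path + ".lock"
    try:
        # O_EXCL makes creation fail if the lock file already exists.
        fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    except FileExistsError:
        return False
    with os.fdopen(fd, "w") as f:
        f.write(user)
    return True

def lock_owner(project_path):
    """Return the name stored in the lock file, or None if unlocked."""
    try:
        with open(project_path + ".lock") as f:
            return f.read()
    except FileNotFoundError:
        return None

def release(project_path, user):
    """Remove the lock, but only if this user is the one holding it."""
    if lock_owner(project_path) == user:
        os.remove(project_path + ".lock")

workdir = tempfile.mkdtemp()
reel_one = os.path.join(workdir, "reel_01.prproj")
kirk_got_it = try_lock(reel_one, "kirk")  # first opener takes the lock
ben_got_it = try_lock(reel_one, "ben")    # second opener is refused
print(kirk_got_it, ben_got_it, lock_owner(reel_one))
```

The exclusive create is only atomic on a single filesystem. Across several synced machines there is a window before the lock file arrives everywhere, which is why a tool that moves small files quickly matters for this use.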

Then we use another piece of software called ChronoSync, and that is what we use to synchronize basically all of the media and everything that is not project-file-related. It requires a little bit of tweaking and a little bit of network setup, and those guys are great. I’ve emailed with them a number of times to sort of get into some of their hidden setting files and figure out how to make things work.

It’s really a backup platform so we kind of hacked certain things that didn’t seem like they would work, but we got them to work. We used it to synchronize all our media and basically, that was set up on every single person’s remote computer. Every 15 minutes it does a sweep of quickly changing media or media that changes often.

For example visual effects deliveries. Casey Curtis — our other assistant editor who mostly handles VFX — he might receive a visual effects delivery at noon. He might receive another one at one. Another one at two. We obviously can’t sync that once a day because he will receive those deliveries and cut them into reel one. Then if Kirk opens reel one to do some work, all of the work that Casey has done will be present in the project file, but if the media hasn’t synced, it will all show up as off-line. So we want things to get synced reasonably quickly.

So for media like that, we put everyone’s system on a 15-minute sync cycle. If there was nothing to sync, it would take around two minutes to connect to the remote servers and check if anything needed to be synced. If we needed to sync three hundred gigs of stuff, that would take a little while, depending on your connection.
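For a sense of scale on "a little while," here is a back-of-the-envelope Python sketch of how long a 300 GB pull takes at different home download speeds. The speeds are hypothetical examples, not figures from the team, and the calculation ignores protocol overhead and the fixed connection time Ben mentions.

```python
def transfer_hours(size_gb, download_mbps):
    """Hours to download size_gb (decimal gigabytes) at download_mbps (megabits/s)."""
    megabits = size_gb * 1000 * 8  # 1 GB = 1000 MB, 1 byte = 8 bits
    return megabits / download_mbps / 3600

# Hypothetical home connection speeds.
for mbps in (100, 300, 1000):
    print(f"{mbps} Mbps: {transfer_hours(300, mbps):.1f} h")
```

Under these assumptions the same delivery ranges from well under an hour on a gigabit line to most of a working day on a 100 Mbps line, which is why the download side was the limiting factor.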

HULLFISH: And that’s all happening in the background?

INSLER: It’s all happening in the background. In fact, it was very fluid and very easy to be working even if Casey had cut in a bunch of new VFX and it was still in the process of syncing to Kirk’s machine. I could text Kirk and say, “Hey, Casey just cut in a bunch of stuff. Work reel three for a while. I’ll let you know when the sync is done.” Then he could just keep working in reel three. It’s not like it occupied his hard drive or all of his Internet bandwidth.

We luckily have a very fast connection at Number 13 to serve the data up, so it really was just limited by everyone’s download speed. So we were able to shuffle things around very quickly. And for the most part, except on the assisting side when we were doing some QC and syncing large renders, we never really ran into hurdles where we said, “No. We can’t work on that yet.”

The other thing that we set up ChronoSync to do was for media that wasn’t really changing, like dailies, where we might need to do a rebake, for example. If a shot was rebaked, it doesn’t really need to be transferred immediately because the edit can still happen. It’s just going to look better tomorrow when the rebake is received.

So for things like that, which are less critical and time-sensitive, we would set the computers on a daily cycle and they would sync all that additional media at 3:00 a.m. I think that cycle, if nothing needed to be synced, took about eight minutes.

Then the last thing that ChronoSync would do is monitor system events on everyone’s local machine for all of the things that we assistants would be generating ourselves. So if I did a split-screen comp and needed to go into After Effects to do it, ChronoSync would detect that I had saved a new file in my dynamic links folder and rendered that file to a QuickTime elsewhere on the drive, and it would then push them up to the Number 13 servers. Then, when everyone else did their 15-minute sweep, it would detect those new files and pull them down. So that’s how we got all of the assistants working in concert together.

One of the things that we found was that remoting in was actually very fast and fluid. I preferred to work on my home machine, while another assistant preferred to always remote in and do comps there. We also found that the office computers are super fast, so it was a lot easier and more beneficial for us to push things off to the office when we were doing things like exports for posting.
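The three sync tiers described above (a 15-minute sweep, a 3:00 a.m. daily sync, and event-driven pushes) can be sketched as a small scheduling rule in Python. This is only an illustration of the policy, not ChronoSync configuration. The folder names, the tier labels, and the choice to default unknown folders to the fast tier are all assumptions.

```python
from datetime import datetime, timedelta

FAST_INTERVAL = timedelta(minutes=15)  # sweep for quickly changing media
DAILY_HOUR = 3                         # 3:00 a.m. sync for stable media

# Hypothetical top-level folder names mapped to a sync tier.
TIER_BY_FOLDER = {
    "vfx_deliveries": "fast",
    "dailies": "daily",
    "dynamic_links": "on_event",
    "renders": "on_event",
}

def tier_for(path):
    """Pick a tier from the first folder in the path (default: fast)."""
    top = path.strip("/").split("/")[0]
    return TIER_BY_FOLDER.get(top, "fast")

def is_due(tier, now, last_run):
    """Decide whether a timer-based tier should run again."""
    if tier == "fast":
        return now - last_run >= FAST_INTERVAL
    if tier == "daily":
        # Find the most recent 3:00 a.m. slot at or before 'now'.
        latest_slot = now.replace(hour=DAILY_HOUR, minute=0, second=0, microsecond=0)
        if latest_slot > now:
            latest_slot -= timedelta(days=1)
        return last_run < latest_slot
    # on_event tiers are pushed when a file changes, not on a timer.
    return False

print(tier_for("dailies/day_12/A001_C003.mov"))
print(is_due("fast", datetime(2020, 4, 1, 12, 15), datetime(2020, 4, 1, 12, 0)))
```

Splitting media by how often it changes keeps the frequent sweep small, so the fast tier stays fast even when large dailies rebakes are waiting for the overnight run.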

At the same time, we got to keep working. So we would remote in, set up a reel to export, export using the office computer while we kept working on something else on our home computer, then just jump in 10 minutes later, and post it up to PIX.

Very often, the assistants were actually using kind of a computer and a half.

HULLFISH: I just talked to a group of NYU editing students this morning and they were asking me “what do I think the future is going to hold?” I would be interested in hearing your opinion on this. My opinion is that COVID has kind of sped up the need or the desire or the ability to remote. And while there are a ton of reasons to want to be in the same place — especially for a director and an editor — the ability to go remote is pretty compelling.

INSLER: Yes it is. I agree with you. Before I say anything else, I have to say that COVID is terrible. It’s not necessarily worth it to discover these benefits by having COVID.

HULLFISH: Says the son of an infectious disease specialist!

INSLER: (laughs) Having said that, I think that one of the things that we’ve gotten to learn, from what COVID forced us into, is that we actually have the tools where this is working. When it came to setting us up, we’re lucky that we had a little bit of a foundation in place so that it wasn’t just like starting from scratch.

We’ve always been a facility that was deep in tech and advanced in looking at these solutions. But really it was a few late nights, but we got set up in three or four days’ time. We didn’t hit any major hiccups.

Working from home and the situation with COVID showed us that it’s not that we’re CLOSE, it’s that we’re THERE.

The two things that we really lost by working remotely were during our QC screenings. When we get to a specific stage, the movie gets rendered with sound mix and color grade. It may not be finished but we’re at a certain stage of completion and we all get together in one room and we all watch it together and we all make notes on it and then afterward — for one reel that is maybe 20 minutes long, this could be a two-hour process.

We watch through the whole thing. We all make notes and we all go back through the whole movie — person by person — and go to all of our notes, which is actually very fun to see, “Oh wow! You saw that! That’s crazy! I missed that.”

We did do that in a remote fashion with what are called PIX screening sessions. Using the PIX platform, we were actually able to synchronize everybody’s playhead in PIX together and then all get on a Zoom call at the same time so we can all have our conversations and at the same time — for the most part — watch in sync.

But we didn’t have the ability to have the true interaction that we would have at the office. The other thing that we lost is that at Number 13, all of the editorial assistants work within an eight-foot radius of each other.

On Mank, Russell Anderson and I are in one room and then in an adjacent room — with a pocket door that’s always open, so it’s kind of just an extension of the office — was Jennifer Chung. When we’re working in that environment it’s really easy to not only hand things back and forth but also detect when there might be a speed bump.

We’re all so used to working together and thinking out loud and talking out loud that something happens — like someone says, “Oh Crap!” — and we’re right there to hear what happened. We can ask what went wrong.

If Russell says, “Oh Crap!” it doesn’t get in the way of me working, but at the same time I can then have a conversation and we discover things a little bit earlier or find solutions a little bit more quickly. That, to me, is the thing that’s lost.

HULLFISH: I interviewed John Axelrod and his assistant editor and he said the assistant was in the next room and he would listen to the director and the editor talk and they’d say something, and the next thing he knew the assistant would say, “That comp’s done.” And they didn’t even ask for it. That’s the kind of thing that you can’t do if you’re remote. You don’t hear those conversations.

INSLER: Right. It’s not that the work doesn’t get done in the same way. It’s just that when you have really tight teams those are the things that everyone’s listening for. We start to understand a language that David uses, that Kirk uses, that Peter uses.

The other thing is that we can start to anticipate those things. As we see people in different scenarios reacting to different things, and as a conversation in the room turns into one thing or another, we can get a jump on it.

You realize that something is going to happen. Even something as simple as back on Mindhunter we used to do these extensive postings of the whole show, so that David Fincher — over a long weekend — could review the entire show in one sitting. You’re talking about nine plus hours of content.

I might be able to figure out that in a screening of an episode that that might be necessary and get a jump on that whereas right now that type of thing really only gets communicated when an email or a call comes in because we’re not there to feel that out.

But all that aside, this proves that we can do it — and I agree with you — I think that it would be great if there is actually a middle ground or a balance that’s discovered where there is a way for both sides to be incorporated so that people do have the freedom to work from home and be remote when it’s helpful to them and then jump out into the office when it’s helpful.

Also, I think that the ability for actual collaboration across distance has been very much proven by COVID. In fact, I just saw an article last night about how some directors have been directing commercials, where the shoot is happening in Hong Kong or someplace like that, while they’re watching the video feed from the cameras in L.A. because of COVID and literally directing across the world in real-time.

No one ever would have suggested that that was possible in terms of latency, or secure, if COVID hadn’t pushed us there. Now it works and it is secure. Of course, there are always flaws in security and that still needs to be worked out, but I think it’s awesome. I really do.

HULLFISH: Let’s talk a little bit more about the creative side of your job with Kirk and you and the other assistants. What are you doing with him to collaborate creatively once the kind of technical work is done? How much is he depending on you, saying, “Hey, take a look at the sequence,” or actually, “Cut a scene for me”?

INSLER: It varies on the project and it varies what the needs are. Kirk is a very team-involved person. He has no problem with someone hanging out in the edit bay and just talking through a scene.

He has offered things for me to assemble before which is always such an honor and privilege to be able to do and also so much fun.

One of the things that Kirk is really phenomenal at though — which is sort of an impossible skill to develop, but he just has it — is having an intuitive understanding of what David has telegraphed to him by the process of shooting — what should be used and what the intent is for the scene.

All of us at Number 13, we have this great understanding that we’re all there to help David make this movie. We’re all there trying to get out of his head and onto the screen what he was envisioning. It’s not about, “How do I edit the scene?” It’s about how do I help pull that apart to figure out how David wanted the scene built.

Kirk just has that phenomenal way of seeing, “Oh! That’s what David meant this to be for” and “That’s why this looks like this because it’s actually not used for that it’s used over here.” “That shot is clearly expiring here and we should be over there” and “These angles look so similar. Maybe angle A to angle B is five degrees off. They look like the same shot, but the reason is for that look, looking over to this person.”

He just has such a surgical precision of “This is where the starting point is.” Even just getting an opportunity to dive into that for a minute is really fun, and it’s also really humbling to see Kirk fly through a scene that took three or four days to shoot and get it assembled in a day and a half, watching the dailies and everything! It puts you in awe.

There’s a lot of collaboration on the side of figuring out how to deal with the details of things. There are a lot of times where Kirk can’t truly get a scene advanced to the point where it’s ready to present to David because they’ve gone through a series of selects in the dailies and they all need a comp done or they all need stabilization.

Those actually sound like very technical things but they’re not. When we do stabilization on shots we are always trying to honor the work of the cinematographer and the camera operator. We’re not trying to reshoot the shot. We’re not trying to put our creativity of framing and whatnot on top of it.

But at the same time, all of that comes into play because if the shot needs to be stabilized and we are shifting the frame around a little bit we need to bring our education and our creativity to it to sort of layer on top of the work that was already done and honor it but make sure that it still looks good. Otherwise, we’re not adding anything to it. We’re not stabilizing it if we don’t do anything to it. So that actually is a very creative process to figure out how does this honor the original camera work, but enhance it?

The same thing goes for things like split-screen comps: actually figuring out how the editor has roughed it together because this is the action, the speed, the pace that Kirk is trying to build in this shot. Now how do we sell it? And there is a lot of pride and creativity that goes into making it work.

HULLFISH: So could you explain that? When you’re talking about the pace of a split-screen you’re saying that there’s a split-screen that’s probably between two people and you’re trying to speed up the dialog or how one person interacts with the other by tightening it and then hiding the fact that there’s an edit in there?

INSLER: Yeah we do that in a variety of ways and forms. The simple example would be if a conversation was shot as a “shot-reverse” over-the-shoulder and — in order for the actors to not be stepping on each other’s lines… Let’s say they were constantly cutting each other off but they might not actually be cutting each other off in the shoot so that all the lines are recorded clean.

To tighten up that pacing, and actually make it seem like an aggressive argument where the characters WERE cutting each other off, the dialogue would then get tightened. Traditionally the way that that works is that the over-the-shoulder character’s mouth and actions are somewhat hidden by the over-the-shoulder, but you might have lip flap, or you might not actually get the dialog aligned, and it’s just sort of a byproduct of the process that we hope the audience just ignores or doesn’t see. We don’t do that. We will actually splice it together so that that next line is being said by the foreground character and it lines up correctly.

Then we do a lot of that for manipulating time. We might also do that for continuity. So if in one take a character on the left side of the frame goes to pick up the phone because the phone is ringing but we actually want that phone to be ringing 3 seconds later because of the take that was used on the character of the next shot, we will actually figure out a way to splice the frame and take the characters picking up the phone and roll their part of the take earlier so that they are actually not picking up the phone when they performed it, but when it needs to happen in the movie.

Then we also do a trick sometimes with shifting time itself so that — let’s say — the same example of two characters arguing, but it’s a two-shot rather than “shot-reverse” over-the-shoulder. We may find places where we can actually split the screen down the middle and speed up the character that is about to interrupt.

HULLFISH: Mank is very quick-witted and so he’s always “stepping on people” he’s always right on the next conversation.

INSLER: Yes. I’ll make something up that is not in the movie, but let’s just say that we were having an argument between Mank and William Randolph Hearst. They’re in a two-shot and because of what I was describing earlier with them not stepping on each other’s lines, Hearst is supposed to cut off Mank, but it’s a little bit delayed. We may find a place where Charles Dance isn’t actually moving very much in the frame and retime him so that where it looks like he’s still, it’s actually sped up. So there isn’t a hard cut there. There isn’t a jump, but that gets sped up without any visual effect and it allows his later line to now be read earlier and that ends up being a split-screen that allows us to re-pace the take and adjust the performance to exactly the way that Kirk and David want that conversation to flow. And we do that so OFTEN.
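As a rough illustration of the retime arithmetic behind that kind of split-screen: if one side of the frame has a stretch where the actor is nearly still, playing that stretch faster pulls everything after it earlier by the number of frames saved. The frame counts and the function name below are made up for the example and are not taken from the Number 13 pipeline.

```python
def retime_speed_percent(still_frames: int, frames_to_gain: int) -> float:
    """Speed (in percent) to apply to a near-still stretch so that the
    action following it starts `frames_to_gain` frames earlier."""
    if frames_to_gain < 0 or frames_to_gain >= still_frames:
        raise ValueError("frames_to_gain must be >= 0 and less than still_frames")
    # The stretch has to play back in fewer frames than it was shot in.
    new_duration = still_frames - frames_to_gain
    return 100.0 * still_frames / new_duration


# Hypothetical numbers: a 48-frame still stretch, and the interrupting
# line should land 12 frames sooner.
speed = retime_speed_percent(48, 12)
print(f"{speed:.1f}%")
```

The longer the still stretch, the gentler the speed change needed to gain the same number of frames, which is why a moment where the actor barely moves is the natural place to hide it.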

HULLFISH: Can you describe a scene in the movie that you remember being a split-screen?

INSLER: I would not be surprised if there were hundreds.

HULLFISH: Hundreds!

I’ve heard from many editors that a good editor knows what the intent is of the director by just looking at the footage. You said Kirk’s very good at that. Can you explain exactly what you might see in a shot or what Kirk might have explained to you: “I know that I need to start this scene on this shot because of this” or “I know I need to be on this shot at this time. David is telling me this through the shot itself.”

INSLER: Sure. There is one in particular that I remember where I was in the room when Kirk was going through some of the dailies and he looked at an angle and he said, “This angle doesn’t seem to match the line-of-action of the rest of the scene. I’m not really sure what that’s used for yet.”

If I remember correctly, Kirk just said, “Let’s keep going. We’ll see what else you got. We’ll come back to this one.” But he just let it keep playing and then all of a sudden it just clicked with him. There was a little camera move or a turn of Gary’s head or something and he could tell that that camera angle was just for that moment.

Someone less seasoned, less skilled, less fluent in David’s language might have said, “I have this other angle. Why not cut to it?” But Kirk knew from the moment he saw it, that it was not something David wants to use. Right away he knew: “This is not in David’s film language. There’s a red flag here and I need to solve this puzzle.” Then immediately when it became David’s language, he knew right away — “THAT’S what he wants to use here.”

HULLFISH: So interesting. Was there anything with structure or story itself, the way the film played, that you got a chance to speak into? You talked about these screenings that you guys do where you write down notes. Talk to me a little bit about some of the things that are discovered during those note-taking sessions.

INSLER: Usually, in those note-taking sessions it’s much more of a technical QC pass.

We do have creative screenings. Usually, that is a variety of people in the room taking notes and keeping tabs on things. Usually, those are for Kirk and David. But Kirk is very collaborative and team-oriented. He will — after the screening — say, “Let’s talk about it. What did you guys think?” He’s not making it an exclusive process. He’ll also ask questions like, “Did this resonate?” Or “Was there anything that felt weird?” Or “Were there any pacing issues?”

The QC screenings that I mentioned earlier tend to be more technical. Everything from, “There are VFX we should do there.” to “Is this something that we should reframe so that the symmetry of the shot is perfect?”

I’m sure that it’s no surprise that David is a very, very precise detail-oriented director. And so part of our job is to make sure that we have his back on that. He doesn’t really miss anything but we’ve all come to learn that same language of the details that he expects and is pushing for. We all really take pride in trying to shepherd that. Everybody that’s on the team, David expects that you’re doing your best and that we’re all speaking the same language and I think we are really proud to be doing our best.

So that’s what we do in those screenings — we look out for all of these little details and make sure that it’s perfect.

HULLFISH: I think of him as a very visual director. Talk to me about audio. Fincher’s sense of audio and also what you do with sound design. Sound design is a typical thing for an editor to pass to an assistant. Is that something that also happens at Number 13?

INSLER: Sound design is actually a big one on the creative side that gets pushed over to the assistants. Kirk will do a lot of the sound editing himself — especially dialogue editing and building the scene. Then, a lot of the sound design does come to us simply because a lot of sound design is hunting and finding that perfect sound of a teakettle or that perfect sound of a phone ring.

In Mank, for example, we’re talking about a period piece and there are a variety of phones used. There’s a pedestal phone that you’re used to seeing in the Andy Griffith Show. And there are actually rotary phones and handset phones. Doing the research so that in off-line we can make the pedestal phone sound like a pedestal phone. What do those bells sound like? What does that handset phone sound like? So that it actually feels right.

That’s some of the detail that we do and sound that comes back to us because that would really get in the way of Kirk’s ability to go from scene to scene and keep the movie building. But then we will also do sound design for things like ambiance and sound beds.

We would do extensive sound design for whether or not it was daytime or nighttime or whether or not the windows were open or closed. Do you hear the coyotes or don’t you hear the coyotes? What does the wind sound like outside? Is the door open? Is the screen door swinging and creaking? Is the fan moving or not moving? We would take all that into account.

Then we would obviously send it up to Skywalker Sound and they would do this hundredfold. Then they’ll send us back soundbeds once they have something really intricately built. But we’ll do that in off-line right from the start. That actually can be a lot of fun.

The other thing with sound that we changed on Mank which we weren’t doing previously is that we set up the Premiere project so that we always had access to all of the production tracks live in the edit, so at any point if we needed to go to someone’s individual mic, it was right there available to us all the time.

HULLFISH: But it probably wasn’t in Kirk’s timeline?

INSLER: It wasn’t in Kirk’s timeline. The way that we used to do it was that the production mixdown went to the dailies and then if we needed to go to an iso mic, we had to go back to the production audio and find it and cut it in. This way, anyone could matchback and have the ISO mic in there in literally seconds.

HULLFISH: Is that something you built specifically with your internal coding abilities or is it something internal to Premiere that allowed you to do that?

INSLER: It was two things. Premiere allows you to do it, but we figured out a workflow to tell Premiere: All the audio is there but you’re not going to cut it into the timeline, which is a little manipulation on telling Premiere how many audio channels to USE as opposed to how many audio channels there ARE. So you can still match-back and see them, but it doesn’t think they’re there when it cuts them in.

We also changed the way that we process our dailies because usually dailies are processed with just the production mixdown, and so it started from the NextLab stage of actually having the dailies operator render out all channels. It made our dailies larger but when we needed to go back to ISOs it made that workflow much faster.

The other thing that we did sound-wise, which I think you’ll really love to hear, is that, similar to what we did to age the image, we were doing that with sound too. So that the sound had a feeling of old 1930s and 1940s Hollywood and the way that audio was recorded back then, as opposed to the perfect audio now.

So we worked with Skywalker Sound before the dailies ever started being shot to build a filter set to go into the Premiere sub-mixes so that — even though we were working with perfectly clean audio that could be mixed precisely by Skywalker — in Premiere all of the dialogue audio was filtering through sub-mixes.

We had two different ones, depending on the type of sound we wanted, so that it was getting degraded live so that when the edit was being played back for Kirk, for David, and for us, we were actually hearing it with this old-school sound.

HULLFISH: Oh that’s awesome. So it gave it an analog sound and also probably kind of noise or hiss or tape quality and degraded in the frequencies that that old tape couldn’t handle?

INSLER: We had a degradation process for the audio itself to sort of crunch it down and make it sound old and then we had some audio beds that were also playing underneath that to give it sort of that film flutter and warble and the sound of the audio playing back and scratching and hissing — that destruction of it because it was an old analog process.

HULLFISH: You were talking about audio design and finding the perfect sound. How much of that is going to a sound library and how much of it is your own in-house Foley and ADR? Do you just take your Zoom recorder and get a door slam?

INSLER: What we’re trying to do is get it to sound correct enough in the off-line so that it feels fluid, it feels environmentally appropriate and it’s not getting in the way of the scene being interpreted.

HULLFISH: Felt properly.

INSLER: Right. That works in both ways. Is the sound design hindering? Is what we are doing getting in the way? Or is it not there, so the absence of it is hindering the ability to take in the whole scene?

I remember one scene in Mindhunter working on the background sound of a train passing and that being a very detailed process where we tried to find the right train and give it the right tone as it was passing by. Kirk and I listened to it a number of times and did a number of passes deciding how it should sound when we’re inside of the car versus the camera being outside of the car and how loud should it be, and exactly what the tone should be. We did that in off-line before it even got to Skywalker and then they obviously did much more to it.

But for the most part, it’s library sound effects just because we have a variety of options from all of the temp sounds that we have access to and we don’t necessarily have every single thing to record. For example some of the period cars from Mank. We may not have that appropriate Ford or Chevrolet or whatever at the time was being driven but we may find a car in the sound library that is from that period that is at least possible whereas at Number 13, we have no cars from the 1930s to actually go and record.

At the same time, the very same Zoom recorder that I’m using to record my side of this interview, we use to record things when we need to. There’s a scene in Mank where you can hear Mank’s sons playing and breaking things in the background. For a good portion of the edit that was Russell Anderson and me. We had originally put in the sound of kids playing and David made notes about what that should actually be.

It was such a precise note that we couldn’t figure out how to build it using temp sound effects library sounds. So we went out to the parking lot and pretended that we were wrestling for a frisbee. It took multiple attempts of recording and cutting it to find the right intensity and speed. It was never intended to be a final sound, but that was one that needed to be recorded, so we did.

HULLFISH: You mentioned Premiere “productions.” For those that are not clear, or haven’t been paying attention — at least for the last about twelve months of Premiere — what is a “production” and how does it change things for you and for your ability to organize a large project and collaborate with others in Premiere?

INSLER: Traditionally — if you want to go back two to three years — the way Premiere worked is that everything was encapsulated in a single project file. So you could break up a movie into smaller Premiere project files that you’re working on. Like, back in the Final Cut days, having a sound effects project, or a music project that was separate from their edits and you would just bring in the music that you needed from the music project.

That let multiple editors access the project and it also let things be encapsulated in separate buckets as opposed to everyone requiring one giant project. Then Adobe really started pushing what it called shared projects. The problem that Adobe Premiere “productions” and shared projects solved was that the old method meant that any time you moved files between smaller sub-projects, it duplicated the media, and also it was harder to track.

What Premiere “productions” does is it takes that approach and it encapsulates it into this one single folder item called a Premiere production. What that means is that you’re now no longer essentially at the finder level managing all of your different shared media-linked projects, but still treating them as individual project files that you are managing yourself.

You now point Premiere to the production folder and it opens up a production window that looks just like any other Premiere window that you would be used to for navigating, but that production window only holds folders, which you could think of as bins for different projects, and the project files themselves.

The great thing about that is that it also tracks the lock files within that production so you can see who has what open and it will prevent you from opening a project that’s locked and actually being able to overwrite it, but still let you access the contents of that locked file and it manages the entire contents of the folder structure and directory for you. So if you want to move things around, rename things, it’s all possible in there. It gives you a very sort of streamlined view of a multi-user environment.
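A toy model of that locking rule, purely for illustration. The class and method names are invented here, and this says nothing about how Premiere implements locks internally. It only mirrors the behaviour described above: one writer per project, read-only access for everyone else.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Production:
    """Toy stand-in for a production: maps project name -> user holding the lock."""
    locks: Dict[str, str] = field(default_factory=dict)

    def open_project(self, project: str, user: str) -> str:
        """Return 'read-write' if the user obtains (or already holds) the lock,
        otherwise 'read-only'."""
        holder = self.locks.setdefault(project, user)
        return "read-write" if holder == user else "read-only"

    def close_project(self, project: str, user: str) -> None:
        """Release the lock, but only if this user is the one holding it."""
        if self.locks.get(project) == user:
            del self.locks[project]


prod = Production()
print(prod.open_project("Scene 08", "editor"))     # first opener gets write access
print(prod.open_project("Scene 08", "assistant"))  # locked by someone else: read-only
prod.close_project("Scene 08", "editor")
print(prod.open_project("Scene 08", "assistant"))  # lock released: now writable
```

The point of the sketch is the asymmetry: a locked project can still be browsed and matched back into, it just cannot be overwritten by anyone but the lock holder.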

There is a really substantial difference to having everything organized in Premiere — working in Premiere, staying in one ecosystem — and just continuously, fluidly getting the information of who’s in what project, what do I have open, what don’t I have open and where is everything organized in Premiere for me that lets us work a lot more fluidly and a lot more smoothly.

HULLFISH: Mostly because the projects within the production are smaller.

INSLER: Yeah. That’s a great point because of the cross project linking when I bring that piece of music over into the project, it doesn’t increase the size of the project because that new item is there.

On Mank, every single reel in the film has its own project, but then every single scene in the film has its own project. There’s a music project from Trent and Atticus. There is a working folder for every one of the assistants where we have tons of working projects within them, rather than having one giant working project that just gets more and more and more bloated.

There is a sound effects project. For each assistant, there is a dynamic links project where the dynamic links get rendered. Even though they get cut into the sequence that they’re in in real time when we do a render-and-replace, we bring those renders over to this sidelining project for all the dynamic links so that they’re not cluttering up and bloating the real project file. So everything does open very very quickly.

HULLFISH: Back in the old days the other problem was switching between big projects — like reels — would take a long time, so if you were reviewing a project with the director and got to the last scene in reel 1, it would take quite a while to close the current reel and open the next reel.

INSLER: Every single piece of media that was associated with building an entire reel or entire episode, all of that scene material, stays in the scene projects, in their individual scene projects, and it’s only the reference to them that gets put into the reel project and into the sequence.

The cool thing about it is that matchback does work. This is one of the things that the production is tracking — matchback does work back to the source, so if you want to matchback to that clip from scene 3 and go back and look at the select string out or the dailies, you can match back. Premiere will automatically open up that project for the scene and load up the appropriate clip in the browser window. And so it works exactly the way that you would expect in a project but it opens up lightning fast because it’s only scene eight, and if you need 9 the same thing happens with scene 9.

HULLFISH: Ben, thank you so much for talking to us about this project. Congratulations on working on a really fine piece of work.

INSLER: Thank you. My pleasure.

To read more interviews in the Art of the Cut series, check out THIS LINK and follow me on Twitter @stevehullfish or on imdb.

The first 50 interviews in the series provided the material for the book, “Art of the Cut: Conversations with Film and TV Editors.” This is a unique book that breaks down interviews with many of the world’s best editors and organizes it into a virtual roundtable discussion centering on the topics editors care about. It is a powerful tool for experienced and aspiring editors alike. Cinemontage and CinemaEditor magazine both gave it rave reviews. No other book provides the breadth of opinion and experience. Combined, the editors featured in the book have edited for over 1,000 years on many of the most iconic, critically acclaimed, and biggest box office hits in the history of cinema.
