We’re used to cloud services, but video editing remains, for most of us, a stubbornly offline anomaly. If Google Docs, Office 365 and iCloud can make cloud-based shared documents a reality today, why do most of us still edit videos entirely locally, and when’s that likely to change? Of course, some companies have already tried to make cloud-based video editing an option for those who want it, but we’re a long way from it being the norm. Before we look at Sequence, an online editing platform with some genuinely exciting ideas, let’s consider some of the reasons why video editing is still an outlier.

Media is big and pipes are still too small

Starting with the most obvious problem, video files are getting larger, not smaller, and internet speeds vary widely. In Silicon Valley and many other parts of the world, it’s easy to get upload speeds of hundreds of Mbps, but to quote William Gibson:

“The future is already here – it’s just not evenly distributed.”

Here in Australia, after years with a top download speed around 100Mbps, I can now (yay) hit over 900Mbps down if I connect via ethernet, but my uploads are still stuck at 45Mbps. I can edit someone else’s footage in the cloud, but sending my own files into that cloud will take a while, and latency can be a big factor. There are of course many people with lower internet speeds than me, too. With less than perfect speeds, if you have to upload all your source files before you can start working on them, that can be an instant, massive, deal-killing problem for quick turnaround jobs.
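To put rough numbers on that, here is a back-of-the-envelope sketch in Python. The 45Mbps figure is the upload speed mentioned above; the 100GB shoot size and the 300Mbps comparison speed are assumptions chosen purely for illustration, and the result is a best case that ignores protocol overhead, congestion and latency.

```python
def upload_hours(size_gb: float, upload_mbps: float) -> float:
    """Best-case hours to upload size_gb gigabytes at upload_mbps megabits per second."""
    bits = size_gb * 8 * 1000**3                  # decimal gigabytes to bits
    seconds = bits / (upload_mbps * 1000**2)      # megabits per second to bits per second
    return seconds / 3600

# 100 GB is an assumed shoot size, used only for illustration.
for mbps in (45, 300):
    print(f"{mbps} Mbps: {upload_hours(100, mbps):.1f} hours")
# 45 Mbps: 4.9 hours
# 300 Mbps: 0.7 hours
```

Even in this idealized case, a modest shoot ties up most of a working day's morning on a 45Mbps uplink before a single cut can be made.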

We only have to look back to the launch of Lightroom CC to see how badly this can fail in the professional photo space. A new version of Lightroom promised to move all your photos to the cloud, enabling you to work on any device (like an iPad) with automatic sync. But professional photographers immediately rebelled, with reason, and stuck with Lightroom Classic, which I still use. If you’re on-site at a conference and need to turn around processed photos quickly, it would be a terrible idea to place unreliable conference internet at the heart of your workflow. Also, forcing the use of one, online, monolithic library with limited space for all jobs is not a choice everyone’s comfortable with.

Of course, not every professional has the same needs, and if you prefer a less intense, mobile-first, socially-focused workflow, the newer, non-classic Lightroom CC may well suit your needs. Older professional users with a collection of drives full of images are unlikely to shift their workflows online, but we’ll all retire eventually. The world moves on, new requirements arise, and if the stars align, new workflows have a chance. Here’s how the stars are aligning.

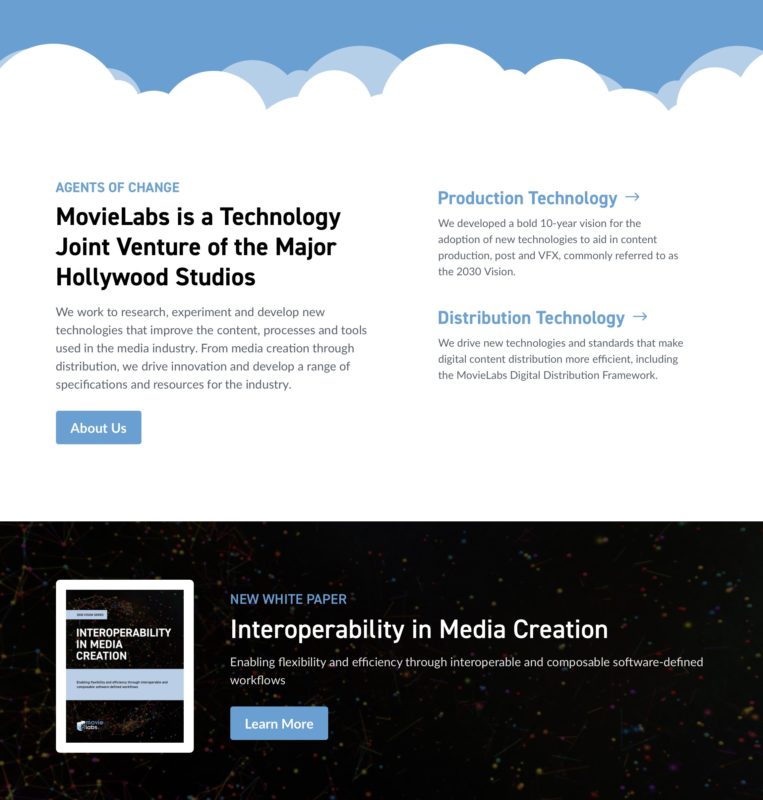

Studios are planning to move everything online

MovieLabs is a joint venture of the big Hollywood studios (Disney, Paramount, Sony, Universal, WB) which has a grand plan to move all post-production activity to the cloud by 2030. This is a serious plan in which all the details are being considered, and if it actually becomes official policy, it could really change how movies are made. Instead of different teams wrangling data locally, converting between formats and juggling transfers, all video and audio files would live in the cloud as soon as they’re recorded, alongside scripts and all other related assets.

To understand the grand plan that MovieLabs is suggesting, here’s a quick primer. The aim is to put everything online with secure access controls, and to enable real-time iterative workflows through common APIs. While their white papers are detailed, not everything is fleshed out just yet. However, since there’s a big focus on interoperability, and it’s historically been difficult to share between different NLEs, an interchangeable timeline format would make a lot of sense in this new cloud future.

One way that might happen is with OpenTimelineIO (OTIO), an open source project that’s trying to make edited timelines more interoperable. Here’s one example of how Netflix experimented with using OTIO to surface production-sourced scene descriptions to viewers. Fingers crossed we see more of this, because reducing NLE lock-in will encourage innovation on all sides.

Who else wants to use the cloud?

Solo shooter/editors probably aren’t interested, but anyone working for remote clients could be keen. Right now, editing remotely requires organizational and media management rigor that’s not always there, and I’m sure many production houses would like to be able to more easily employ editors from cheaper parts of the world. (Note: this is of course not a big selling point for existing editors.)

While a collaborative in-person process is ideal, and likely to remain for larger productions, it’s not the only way to edit. For more production-oriented jobs, where editors are empowered to make their own decisions, cloud-based editing could have few downsides. And if the system takes care of media wrangling, great.

Where does Sequence fit into this?

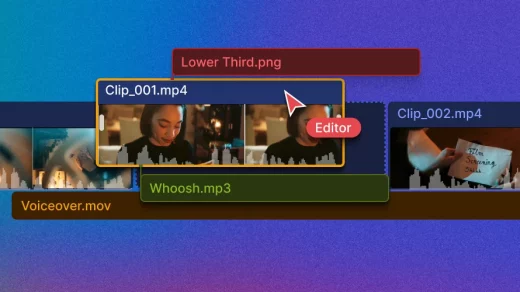

Sequence is an NLE that exists entirely in the cloud, with all video assets stored online too. Rather than using virtual desktops to drive a regular NLE on a remote PC like Bebop does, Sequence is a web app with streamed video and a cut-down, simplified UI.

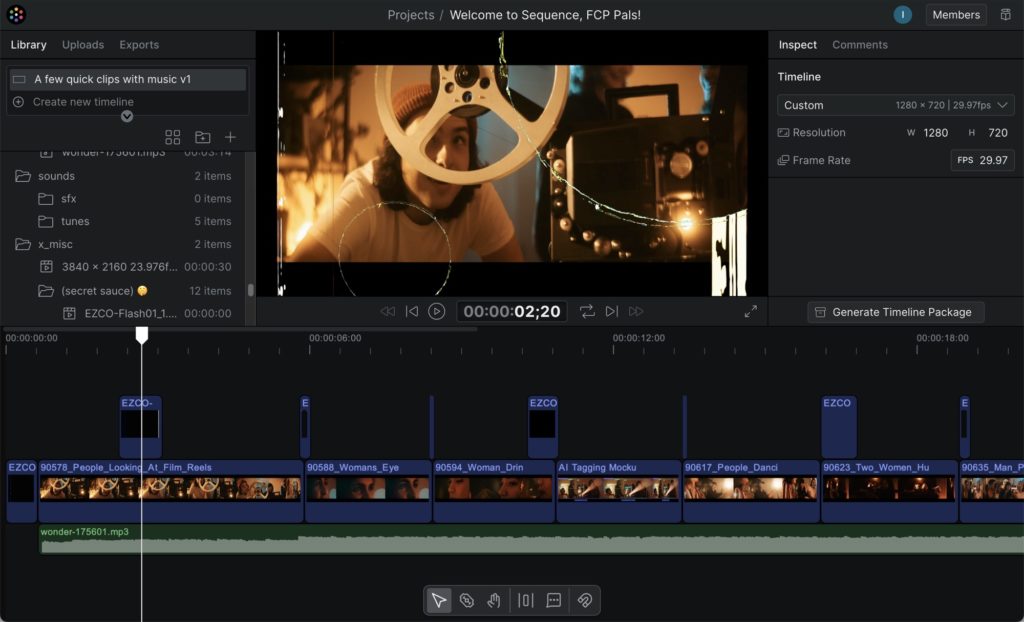

Everything’s in alpha, so it could all change, but right now it bears more than a passing resemblance to Final Cut Pro. Indeed, the “multiplayer” feature, which lets many editors work on the same timeline at the same time, allows clips to move around much like they do in FCP’s Magnetic Timeline. Edits always ripple, connections keep cutaways and sound effects in sync with their parent clips, and I don’t think there’s a better way to allow multiple editors to play together.

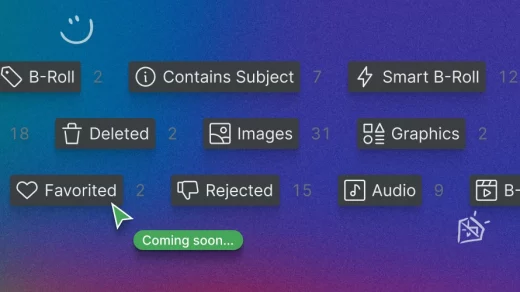

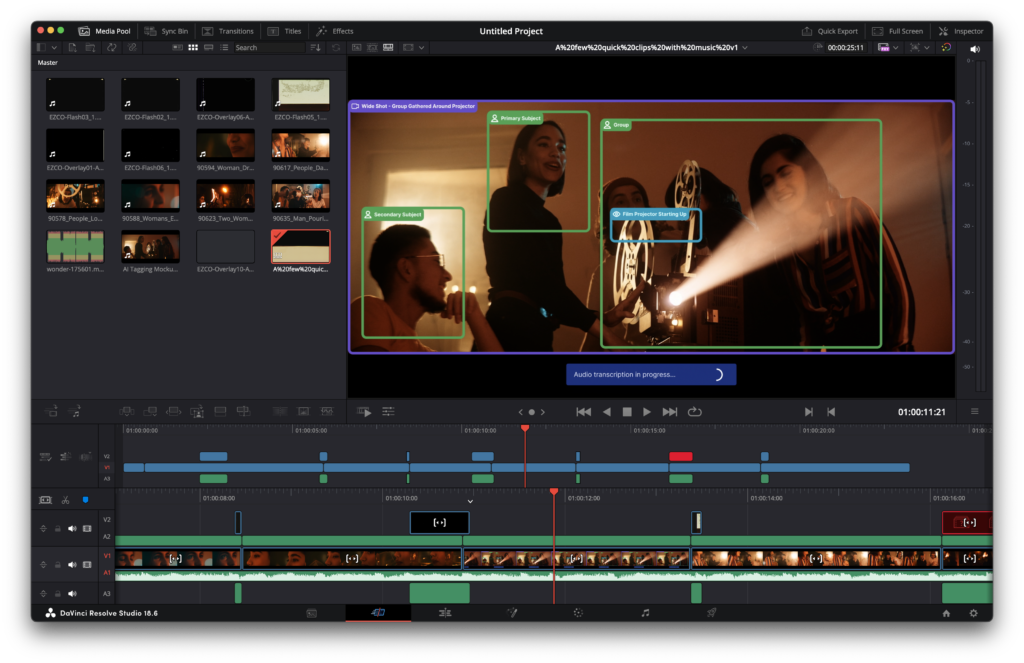

On the technical side, H.264 and HEVC are hardware accelerated, your favorite intermediate formats (DNX, ProRes, BRAW) are coming soon, and you can leave comments anywhere. In the future, Smart Asset Organization promises to analyze and then tag all your clips automatically, and this is one of the big things that none of the major NLEs can do quite yet.

Tagging and analysis is also, not coincidentally, one of the main features that Strada, a new cloud marketplace for video workflow tools, aims to offer with their “Tanalyze” feature, and it’s similar to what OZU is offering with their AI-based analysis. On the desktop, only FCP’s keyword system currently offers a great UI for organizing footage with metadata tags like this, but applying these tags is still a manual process. The cloud systems are all over it (good stuff) and maybe they’ll all be interoperable?

What’s it like to edit in Sequence?

Basic editing feels good, and with ripple-trim and ripple-delete by default, it’s a lot like driving FCP. You can drag and drop, you can blade, you can trim, and there are plenty of undos. One standout feature is the commenting tool, which allows you to leave notes at a point in the timeline, or attached to a specific clip that might move around. The UI is simple enough that non-editors will get the hang of it quickly, but without advanced trimming tools (roll, slip, replace) editors can’t treat it like a full-featured NLE just yet. After all, this is still a work-in-progress alpha, so I’m not going to deduct points for lag or iffy connections, especially from my warm home on the other side of the planet.

However, since you can download an entire timeline in OTIO format (aha!) there’s the potential for not just remote commenting and review, but some actual editing that integrates with today’s pro workflows. There’s nothing stopping me uploading an edited sequence and a few alternate shots for review, then actually editing live (trimming, adding b-roll, and responding to feedback) with a client or director watching.

When we’re done, with just a few steps, I can download every clip in the timeline and an OTIO file that the current version of DaVinci Resolve can successfully import. It’s great to have an eye on the future, but most revolutions in post production have happened one step at a time, and this is a potentially useful tool for me today.
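Because a native .otio file is plain JSON, a downloaded timeline can be inspected with very little code. The sketch below is an illustration under stated assumptions, not how Sequence or Resolve handle the file: it uses only Python’s standard library, walks the JSON for objects whose `OTIO_SCHEMA` value starts with `Clip.`, and lists each clip’s name and duration. The `list_clips` helper and the sample timeline are hypothetical, and for real work the official `opentimelineio` Python package is the better tool.

```python
import json

def list_clips(node):
    """Recursively collect (name, duration_in_seconds) for every Clip in OTIO JSON."""
    clips = []
    if isinstance(node, dict):
        if str(node.get("OTIO_SCHEMA", "")).startswith("Clip."):
            duration = (node.get("source_range") or {}).get("duration") or {}
            rate = duration.get("rate")
            # Duration is stored as a frame count ("value") at a frame rate ("rate").
            seconds = duration.get("value", 0) / rate if rate else None
            clips.append((node.get("name"), seconds))
        for value in node.values():
            clips.extend(list_clips(value))
    elif isinstance(node, list):
        for item in node:
            clips.extend(list_clips(item))
    return clips

# Hypothetical, heavily trimmed timeline; a real export carries many more fields.
sample = json.loads("""
{
  "OTIO_SCHEMA": "Timeline.1",
  "name": "review_cut",
  "tracks": {
    "OTIO_SCHEMA": "Stack.1",
    "children": [{
      "OTIO_SCHEMA": "Track.1",
      "kind": "Video",
      "children": [
        {"OTIO_SCHEMA": "Clip.2", "name": "interview_a",
         "source_range": {"OTIO_SCHEMA": "TimeRange.1",
           "start_time": {"OTIO_SCHEMA": "RationalTime.1", "rate": 24, "value": 0},
           "duration": {"OTIO_SCHEMA": "RationalTime.1", "rate": 24, "value": 120}}},
        {"OTIO_SCHEMA": "Clip.2", "name": "broll_city",
         "source_range": {"OTIO_SCHEMA": "TimeRange.1",
           "start_time": {"OTIO_SCHEMA": "RationalTime.1", "rate": 24, "value": 0},
           "duration": {"OTIO_SCHEMA": "RationalTime.1", "rate": 24, "value": 48}}}
      ]
    }]
  }
}
""")

for name, seconds in list_clips(sample):
    print(f"{name}: {seconds:.1f}s")
```

To try this on a real download, replace the inline sample with `json.load()` on the exported file. A quick listing like this is a handy sanity check that every clip made it into the timeline before handing it to another NLE.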

While I don’t know that I’ll ever be entirely satisfied with a cloud-based NLE (I want all the bells and whistles), I think Sequence can grow to be a very usable option for many. If collaboration is an important part of your workflow, cloud solutions have an instant advantage, and they can grow their feature set more quickly than desktop apps can, too.

On that front, features due soon are: color correction LUTs and creative LUTs, simple titles, importing from URLs, and advances in commenting and versioning. When analysis goes live, I plan to throw a truly huge number of home video files at it to help me find out what I’ve been filming for the past 15 years or so. My hope is that eventually, all editors will have a way to instantly find every frame where a particular person appears, or find close-ups of a type of object, or find all the shots where people are happy, or mark the best takes automatically. Some of that stuff at least should be in Sequence soon.

Conclusion

Today, Sequence is a solid start for a full-featured cloud NLE, and since many more of us might soon be using a cloud-based system one way or another, I’m glad there’s one with a clean UI that’s actually pleasant to use. Editing has been too siloed for too long, and the sooner we can all use whichever NLE we choose, wherever we want, the better. Sign up for the beta now, and you’ll hopefully get to peek at the future soon.
