The Sony F23 sees hues of colors I didn’t think a digital camera could see. Why?

A while back I attended a demonstration of the Sony F23, courtesy of Videofax of San Francisco. All in attendance were dazzled by the subtleties of color that the camera saw. Jeff Cree of BandPro gave a presentation during which he said the reason for this was the improved bandpass filters used on the camera’s prism block.

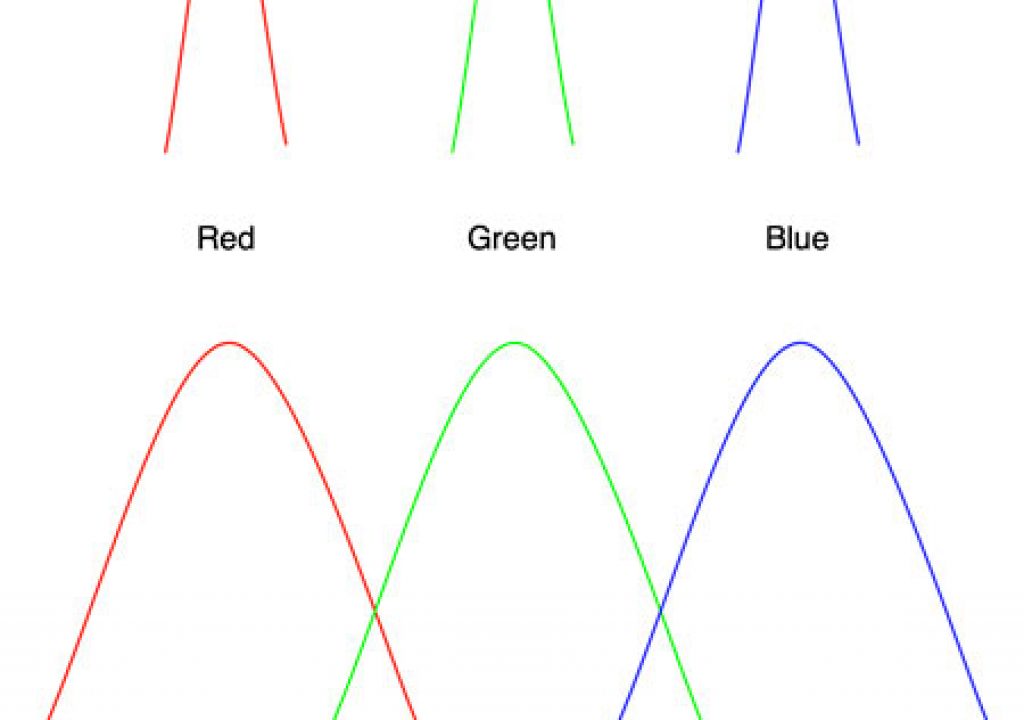

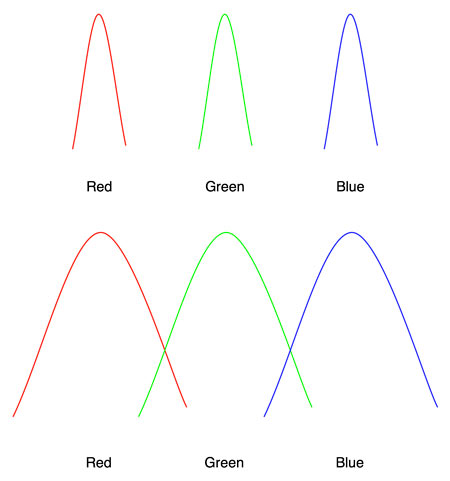

The diagram is a simplified version of his explanation. The top graph represents a typical three-chip prism camera; the bottom represents the F23.

The prism block in a typical three-chip camera uses filters to separate red, green and blue light and route each color to its own chip. In the past those filters passed only a narrow band of each color, which left many video and three-chip HD cameras unable to accurately reproduce secondary colors (such as orange/yellow, magenta and purple). The F23’s broad-bandwidth filters allow the red, green and blue signals to overlap a bit, capturing enough information to improve the reproduction of colors that fall between the three primaries.
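To make the overlap idea concrete, here is a rough Python sketch. It is only a toy model: the Gaussian filter shapes, the center wavelengths in `CENTERS`, and the two widths are assumptions picked for illustration, not measurements of the F23 or of any real prism block. It compares what each chip would record for single-wavelength light that falls between the green and red primaries.

```python
import math

# Hypothetical filter centers in nanometers (illustrative, not measured data).
CENTERS = {"red": 610.0, "green": 540.0, "blue": 460.0}

# Hypothetical filter widths (Gaussian sigma, in nanometers).
NARROW_WIDTH = 12.0  # stand-in for an older, narrow-band prism filter
BROAD_WIDTH = 35.0   # stand-in for a broad, overlapping filter


def filter_response(wavelength_nm, center_nm, width_nm):
    """Gaussian stand-in for a bandpass filter's transmission (0 to 1)."""
    return math.exp(-0.5 * ((wavelength_nm - center_nm) / width_nm) ** 2)


def channel_signals(wavelength_nm, width_nm):
    """Signal each chip would record for single-wavelength light."""
    return {
        name: filter_response(wavelength_nm, center, width_nm)
        for name, center in CENTERS.items()
    }


if __name__ == "__main__":
    # Wavelengths between the assumed green and red centers (yellow/orange).
    for wavelength in (570.0, 575.0, 580.0):
        narrow = channel_signals(wavelength, NARROW_WIDTH)
        broad = channel_signals(wavelength, BROAD_WIDTH)
        print(f"{wavelength:.0f} nm")
        print("  narrow:", {k: round(v, 3) for k, v in narrow.items()})
        print("  broad: ", {k: round(v, 3) for k, v in broad.items()})
```

With these made-up numbers, the narrow filters barely register the in-between wavelengths on any chip, while the broad filters record a solid signal on both the red and green chips, so the balance between the two can carry the hue.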

A friend, Tim Blackmore, attended NAB this year and ran into a highly esteemed video engineer. Among other things, he asked this person why it had taken so long for someone to invent a broad-bandwidth three-chip prism. “Oh, we could have done it 20 years ago,” was the answer. “We just didn’t need to because until now we’ve been working with NTSC, and no one would have noticed the difference. With digital cinema it’s a completely different story.”

I’m counting the days until NTSC disappears.
