“Do Androids Dream of Making Movies” was part of the Future of Cinema conference track at NAB. Annie Chang, VP of Creative Technologies at Universal Pictures, led a conversation with Yves Bergquist of USC’s Entertainment Technology Center and Corto, an AI startup; and David Kulczar of IBM Watson Media.

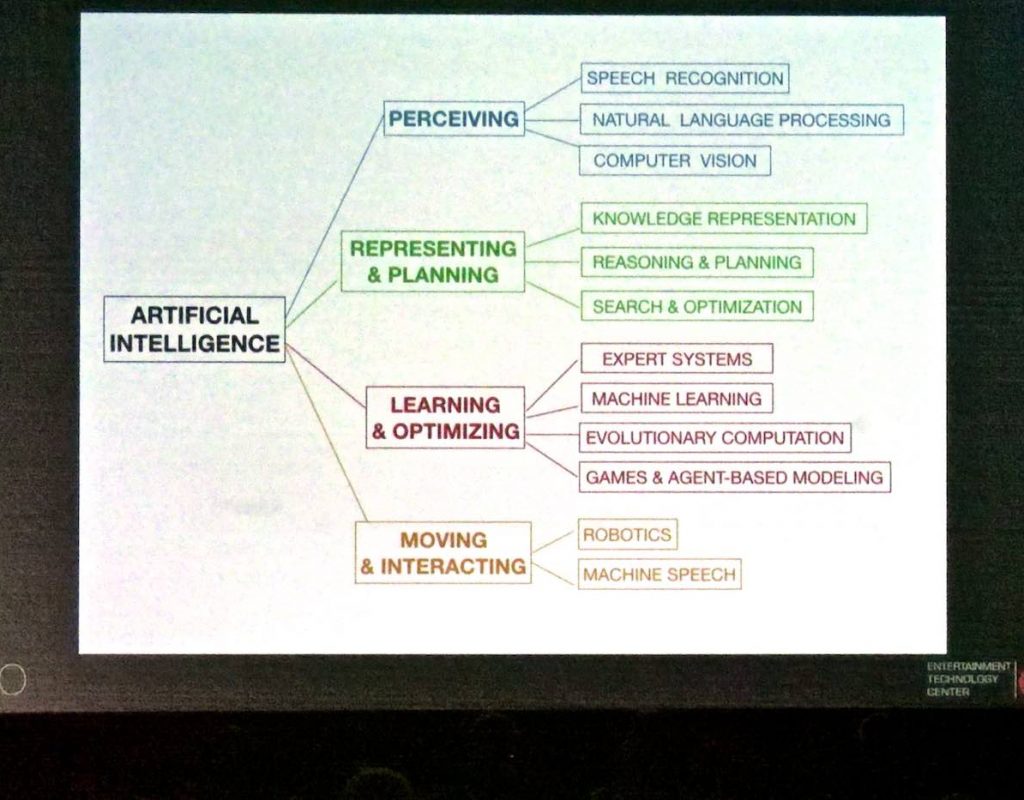

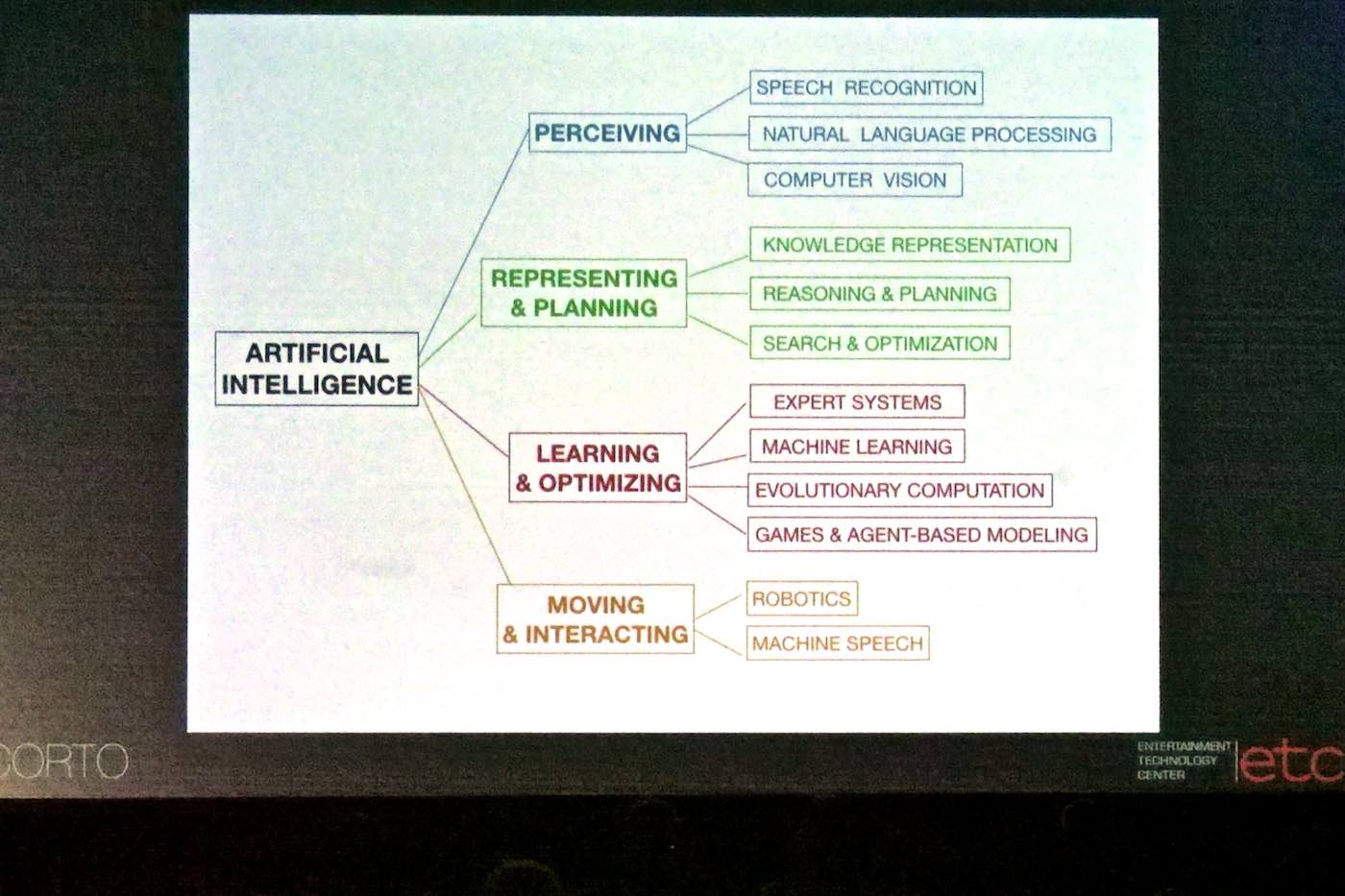

Yves: Some background: What is AI? The best definition I’ve found is the design of “optimal behavior of agents in known or unknown computable environments”. It consists of perceiving, representing, learning, and acting.

AI can lead us to “Borg orgs”, going from a siloed, hierarchical organization to an amorphous collection of actors.

David: Some recent things Watson has done: We had a presence at the Grammys, where it was used to find things like that perfect Beyoncé picture to display at the appropriate time. At the Masters golf tournament, it gave you your own console to pick your favorite golfers and automatically showed their highlights. We’re using things like crowd response to fine-tune selections and find the most relevant highlights.

Annie: Why would IBM be interested?

David: What are the key challenges corporations face, and how can we solve them? Take all this AI / ML experience into more productized offerings.

A digital “look book”

Annie: Yves, what is ETC’s Snowflake?

Yves: Snowflake is a digital look book. It allows filmmakers to make more creative decisions. You upload a selection of images with a look; we use image recognition to find films in our database with similar looks and colors and show them to you. You can also upload a script. We parse it, find the emotional arcs, then find films with similar emotional arcs and show how they worked visually. From script to screen, one central place to do your look book research. It’s the first time a tool has mapped how characters’ emotions are matched visually.
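To make the "similar looks and colors" idea concrete, here is a minimal sketch of one common way to compare color palettes: reduce each image to a coarse color histogram and rank candidates by cosine similarity. This is an illustration only, not Snowflake's actual method, which the panel did not describe. The function names, the 4-bins-per-channel setting, and the film titles are all made up for the example, and images are assumed to be plain lists of (R, G, B) tuples.

```python
from collections import Counter
from math import sqrt


def color_histogram(pixels, bins_per_channel=4):
    """Count pixels per coarse RGB bucket and normalize counts to fractions."""
    step = 256 // bins_per_channel
    counts = Counter((r // step, g // step, b // step) for r, g, b in pixels)
    total = sum(counts.values())
    return {bucket: n / total for bucket, n in counts.items()}


def cosine_similarity(h1, h2):
    """Cosine similarity between two sparse histograms (0.0 if either is empty)."""
    dot = sum(v * h2.get(k, 0.0) for k, v in h1.items())
    norm1 = sqrt(sum(v * v for v in h1.values()))
    norm2 = sqrt(sum(v * v for v in h2.values()))
    return dot / (norm1 * norm2) if norm1 and norm2 else 0.0


def rank_similar_looks(query_pixels, film_frames):
    """Return (title, score) pairs, most similar palette first."""
    query = color_histogram(query_pixels)
    scores = {
        title: cosine_similarity(query, color_histogram(pixels))
        for title, pixels in film_frames.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical data: one representative frame per (made-up) film.
film_frames = {
    "Teal thriller": [(10, 120, 130)] * 90 + [(240, 150, 40)] * 10,
    "Warm drama": [(230, 160, 60)] * 80 + [(90, 50, 30)] * 20,
}
reference_still = [(20, 110, 140)] * 85 + [(250, 140, 30)] * 15

for title, score in rank_similar_looks(reference_still, film_frames):
    print(f"{title}: {score:.2f}")
```

A production system would presumably use learned image features rather than raw color counts; the histogram version is just the simplest thing that captures "similar colors".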

Corto is a product using an AI / general intelligence engine to go deep into semantic questions about why audiences react to certain content. Working with scripts and performance data to say “this sort of arc resonates with this sort of audience”. One example: for a TV network, we combine all sorts of data to match scene-level attributes with how they resonate and how that drives performance.

Corto is also used for evaluating risk models. The way we define risk prevents a lot of really great products from ever coming to light. It’s poor risk definition. We’re trying to really give risk managers a lot of data to better understand risk, so more stories can get told that wouldn’t get told otherwise. This is the only industry that sells novelty; that’s hard to reconcile with normal risk models. A misunderstanding of what audiences want. Learning to extract better risk models, understand what will work.

We have a mathematical definition of everything that’s interesting, based on neuroscience: It’s a similar ratio of two categories of attributes: things that are familiar vs. things that are new. This ratio works for everything (media, relationships, anything), based on how the brain compresses data. A golden ratio of traditional vs. novel.

Annie: How do you pick projects to work on?

David: Opportunities come in from various sources. We listen to clients, see where their problems are. More of a scale challenge: sort through all the clips of the Masters, for example. It’s how do we synthesize all of this data, too much for people to handle. Lots of market research; we have a robust research team; what’s fun to work on and how can we solve problems.

Yves: It’s simple: Do you have money? Are you friends with Putin? [Nervous laughter from audience. It was a joke. I think.] Designing solutions around problems, from inside the industry. Not grafting external solutions onto industry problems. The ability to really understand media.

What’s next

Annie: Testing humans, seeing how they react. What’s coming up for AI storytelling?

Yves: Ability to parse scripts, parse content; how does that line up with audience reaction, with social media conversations? We know people like the product, we just don’t know why. What is the meaning of, say, a green color in this context, this piece of music in this context, etc. Build up the database. So we can say, “this combination of attributes really works”.

David: We try to look holistically at humans and response patterns. Knowing your content, knowing your preferences, social media content. So our recommendation engine can make much better recommendations. Is it rainy, do you have a presentation to give tomorrow, so you need to watch “Rocky” to get amped up? Or do you need something to calm you down?

Annie: Talk about “audience of one”.

David: “Audience of one” is where we’re headed. In 5–10 years, we’ll have custom-created content tailored to each viewer. Search all the news, sports highlights across multiple streaming services, to give you that custom viewer experience.

Annie: Are machines going to take over the creative process? Will we all lose our jobs?

Yves: Not in the short term. Two reasons: it’s extremely hard, and you need the human element or the result isn’t marketable. Sure, a lot of low-level content, automated to the max. Higher-level content less automated. Machines will help creators push themselves beyond what they might otherwise do. Honestly, I think we’re on the cusp of an explosion of creativity.

David: Right now, AI is a tool like any other. Need to understand it; it can help you scale, fast and efficient. Skynet is a long way away.

Yves: Jobs will disappear.

Annie: New opportunities?

Yves: Reorientation of tasks to relationship business, away from more automatable stuff. In terms of creation, we have a taxonomy of stories; it’s not infinitely complex. So we’ll see machine-derived stories in a few years.

David: Yes, machine stories, but they may not resonate as much. We auto-created a trailer. It’s pretty good, but it’s not a narrative story. Still massive editorial approval required for anything serious.

Yves: There’s a right way and a wrong way. The wrong way: what engineers and statisticians say to do, based on statistics. The right way is to focus on cognition, to measure the right things. What are the problems you’re trying to solve? To innovate creatively, to better fund creators (that’s a year and a half away). Let’s say you want to shoot a bar scene. Our tool can surface all the bar scenes that ever existed, tell you what worked and what didn’t, so you can avoid mistakes. There’s not enough innovation. Models to allow creators to push past their comfort zones: to say “yes, this will work”. The sky is the limit; I’m very optimistic.

Annie: The downsides?

Yves: Using data to do the same old stuff. Audiences are experts. Demand is flat (there are only 24 hours in a day), but supply is exploding. Huge challenge to create new stuff, not simply repeat what worked before. It’s a testimony to talented creators that it’s worked so well for so long. Hyper-competitive. Thousands of channels of media. You need to understand your own product, its semantics: why is this working? Now we have the tools to do that.

Question: What about computer vision?

Yves: Many computer vision challenges have been solved.

David: Detect smiles, fist bumps (in Masters tournament clips), detecting that the ball came in at X mph, sort all that material in real time. Automating visual QC (quality control of finished programs) is something everyone’s interested in.

Yves: Action recognition: are they kissing? fighting? etc. Use neural networks to examine every single action film. Output a “meaning code”, like a timecode, everything important happening in a scene (the color, the action, etc.). Which aspects are most important in a given context? How does white balance affect a scene? What is the impact of color?
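One way to picture a “meaning code” is as a time-indexed annotation track that sits alongside the timecode. The sketch below is purely illustrative: the panel did not describe the real data format, so the `MeaningCode` fields, the `find_action` helper, and the sample values are all assumptions. Timecodes are formatted as non-drop-frame HH:MM:SS:FF at an assumed 24 fps.

```python
from dataclasses import dataclass, field


@dataclass
class MeaningCode:
    """One annotated span of a film (hypothetical schema)."""
    start_seconds: float
    end_seconds: float
    action: str              # e.g. "fighting", "kissing"
    dominant_color: str      # e.g. "green"
    extra: dict = field(default_factory=dict)  # any other attributes


def to_timecode(seconds, fps=24):
    """Format a time in seconds as non-drop-frame HH:MM:SS:FF."""
    total_frames = round(seconds * fps)
    frames = total_frames % fps
    total_seconds = total_frames // fps
    hours, remainder = divmod(total_seconds, 3600)
    minutes, secs = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}:{frames:02d}"


def find_action(track, action):
    """Return (start, end) timecodes of every span tagged with the given action."""
    return [
        (to_timecode(m.start_seconds), to_timecode(m.end_seconds))
        for m in track
        if m.action == action
    ]


# Made-up annotations for a made-up film.
track = [
    MeaningCode(12.0, 47.5, "talking", "amber"),
    MeaningCode(47.5, 95.0, "fighting", "green", {"music": "percussive"}),
    MeaningCode(3661.5, 3670.0, "kissing", "blue"),
]

print(find_action(track, "fighting"))
```

With annotations in this shape, questions like “which colors accompany fight scenes?” become simple filters over the track.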

Question: If we get to the point where “everything is interesting”, can it burn out the consumer? What about human learning?

Yves: The market will tell us if burn-out happens. As for human learning, Google’s Go-playing AI is a good example. Its plays surprised experienced Go masters, and they learned from it. AI is a revolution in how we know things, and it will really help us learn things.

Any mistakes in transcription or paraphrasing are mine alone. Photos are shot off the projection screen, and the images remain the property of their owners.

Disclosure: I’m attending NAB on a press pass, but covering all my own costs.
