Mark Schubin’s unique bifocal 3D glasses.

The Hollywood Post Alliance Tech Retreat is a gathering of postproduction, broadcast, TV, and cinema folks in Palm Springs every February. This year, 3D is a big topic, and Tuesday’s Super Session was “3D in the Home”, chaired by Jerry Pierce & Peter Fannon.

Apologies in advance; my writeup consists mostly of notes taken during the session, with some minor editing after the fact.

A collection of 3D glasses in the lobby (plus: X-Ray Spex!).

Keynote speaker Wayne Miller, Action 3D Productions, who has been working in 3D for five years (episodics, commercials, shorts, features, and music videos, e.g. the 3D “We are the World” Haiti charity video starting to get airplay), started by saying, “it’s not a discussion of if or when: it’s here”. He went on: “I believe 3D is moving faster than HD in the marketplace.” About 18-24 months away from being in the mainstream, but there will be content readily available in 6 months. He shoots with both parallel rigs (for long shots, about 30-40 feet away or more) and beamsplitter rigs, recording to HDCAM-SR in dual-stream 4:2:2 mode. Each rig has a cam op, a focus puller, and a convergence operator (either at the camera or in the truck) talking to a stereographer watching an anaglyph overlay monitor and calling out convergence instructions. He does DI with Lustre and Smoke, color-correcting and tweaking convergence shot-by-shot.

A parallel rig using Varicams with matched Fujinon zooms.

The cost of 3D vs 2D? “It’s more!”, but only 15%-20% more for single-camera, maybe 30% for two-camera shoots. Not a showstopper.

In the home, Miller thinks that 3D Blu-ray discs will lead the charge, even though ESPN, Discovery, and others will have 3D channels. Also, the web may be a major driver for 3D in the home.

“Any content is right for 3D.” It’s not about the gimmick, it’s about the story.

Dr. Martin S. Banks, head of the Visual Space Perception Lab at UC Berkeley, discussed “User Issues in 3D TV and Cinema”. He focused on two items: vergence-accommodation conflicts and the effect of off-angle viewing on 3D perception (this talk was the high point of the afternoon for many attendees I spoke to).

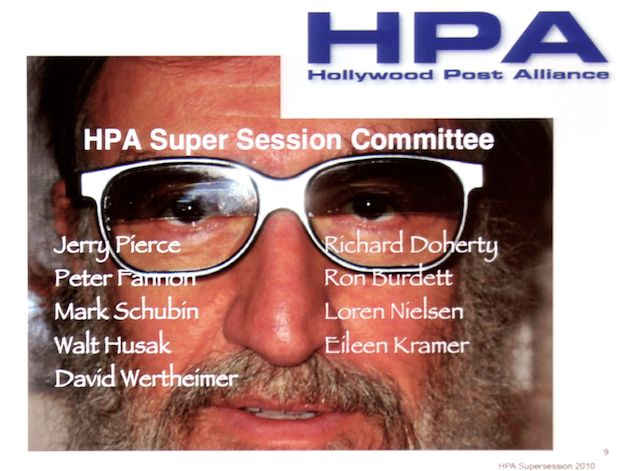

It’s often asserted that vergence-accommodation conflicts are a major factor in 3D viewing fatigue (vergence is the aiming of the eyes at a common point and accommodation is focusing; in real life, these two are always locked together, but in 3D cinema the focus point is always the image on the screen, while vergence varies with the convergence in the image). However, there hasn’t been much research; Dr. Banks set out to test it. He devised a multiplanar 3D display using beamsplitters and a large TFT LCD screen; think of it as a stereo, multi-plane animation stand, in which he and his team could test folks for 45 minutes with images where focus and convergence were in sync, or images where the focal point and the convergence were purposefully thrown out of sync.

Vergence & Accommodation explained: When they’re in sync within the green zone, you can see a stereo image; when they’re in the yellow zone, you’re comfortable doing so. Distances shown in diopters: the inverse of the distance in meters.

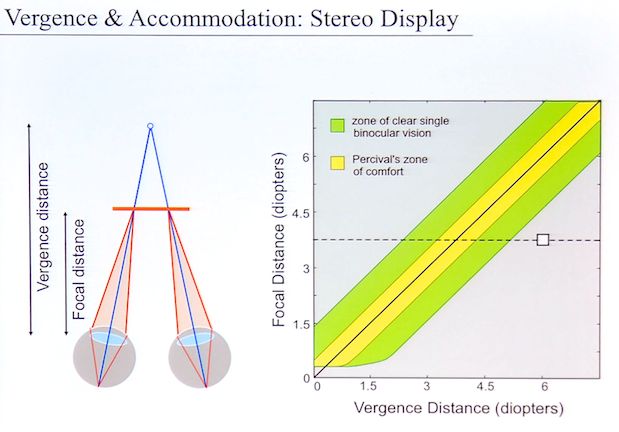

With some careful testing, Dr. Banks and crew found that vergence-accommodation conflicts did indeed cause strain and discomfort, and that the theoretical results were closely matched by experiment:

Experimental results, shown in both diopters and in meters.

Observe the right-hand chart: conflicts are more acceptable as the screen moves farther away. The digital cinema audience (gray band) can tolerate a fair amount; viewers at home, with smaller, closer screens, may not do so well.
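To see why distance matters, here is a minimal sketch of the arithmetic, not Dr. Banks’s model. It assumes the conflict can be expressed as the difference, in diopters, between where the eyes converge and where they focus (the screen). The function names and the example distances are my own illustrative choices, not figures from the talk.

```python
def diopters(distance_m: float) -> float:
    """Convert a distance in meters to diopters (the inverse of meters)."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return 1.0 / distance_m


def va_conflict(screen_m: float, vergence_m: float) -> float:
    """Vergence-accommodation conflict in diopters.

    The eyes focus on the screen but converge on the apparent
    position of the object, so the conflict is the gap between the two.
    """
    return abs(diopters(vergence_m) - diopters(screen_m))


# Hypothetical cases: an object that appears halfway between viewer and screen.
cinema = va_conflict(screen_m=10.0, vergence_m=5.0)  # theater-like distance
home = va_conflict(screen_m=2.0, vergence_m=1.0)     # living-room distance

print(f"cinema conflict: {cinema:.2f} D")
print(f"home conflict:   {home:.2f} D")
```

The same relative depth effect (object at half the screen distance) produces a conflict five times larger on the close screen, which is consistent with the point that home viewers may have less headroom than cinema audiences.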

What about perceptual distortions from viewing the image obliquely instead of dead-on? People rarely look at 2D images head-on; thus it appears they can mentally compensate. The researchers displayed a synthetic scene with a sphere in it on a CRT, and asked people if the object was too tall to be a sphere, or too short, while varying the angle at which the screen was viewed. People saw the image either through a pinhole, so they couldn’t see the CRT’s surround, or with full binocular viewing of the screen.

It appears that when people can see the framing of the image, they automatically compensate for aspect ratio and other errors of oblique viewing. When the surround was hidden, they couldn’t.

But what happens in 3D? Can theater viewers make the same corrections?

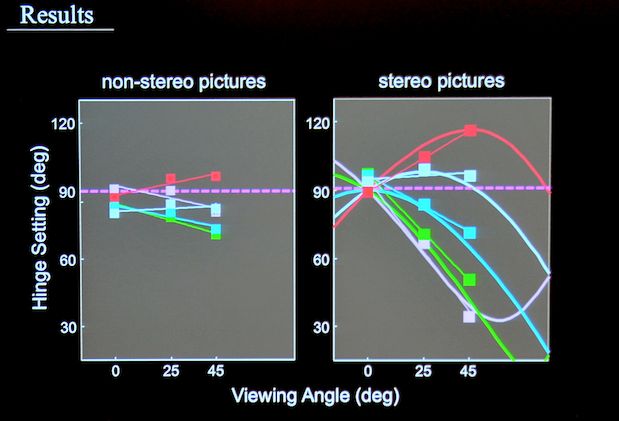

The effect of off-angle viewing on 2D and 3D image perception (smooth curves are theoretical; lines with boxes are experimental results).

Not so much. Thus acceptable seating angles for 3D appear to be rather a bit narrower than for 2D.

Loren Nielsen, president of Entertainment Technology Consultants, asked “Where Will 3D Content Come From?” CES had a lot of 3D tech; but what’s the content? She posited that there are categories of films that are “must be 3D” films: Animation, Horror, Tent-pole (e.g., Avatar), Fantasy, and Specialty (“may be 3D” as opposed to “must be 3D”). Foreign distributors are willing to pay up to 30% more for an indie horror film in 3D. Sports, of course: not just premium sports, but even (and especially) up-close and personal things like Pro Wrestling, Gladiators, and UFC (no, really. The mind boggles). Also computer & console games; concerts and music videos. And what about a 3D UI for searching for content, either on the TV or on the PC? The final question: “Will it stay” or is it just a fad? If 3D creates premium revenue streams, it’ll stick around (and yes, it will cost more, because people will pay for it).

Phil Eisler, GM for NVIDIA’s 3D Vision shutter glasses, talked about 3D in the PC market. It was difficult to transition from CRT to LCD due to lower refresh rates (problems with ghosting). NVIDIA’s shutter glasses had to be designed for high contrast and quick actuation to deal with ghosting. The system is a sequential left-right display system, but with added “dark frame” insertion on the glasses (like a closed shutter). 400+ games converted for 3D (since they are already DirectX 3D models, it’s relatively easy, though some things like crosshairs, HUDs, and deferred rendering can cause problems); NVIDIA has 3D playback software for the Fujifilm REAL 3D W1 point-and-shoot camera, mobile 3D (3 notebooks already have 3D screens, and more are coming), projectors, even three 3D LCDs side-by-side for an immersive surround monitor. There are over 5000 3D YouTube videos; NVIDIA is working with Adobe to embed 3D in Flash for use with shutter glasses; YouTube is promoting left-right pairing (a.k.a. side-by-side, anamorphically squashed frames) for 3D content. http://www.NVIDIA.com/get3D
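For readers unfamiliar with side-by-side packing, here is a minimal sketch of the idea: each eye’s frame is squashed to half width, and the two halves are placed next to each other in a single frame of the original size. This is an illustration only, not YouTube’s or NVIDIA’s actual pipeline; the function names are hypothetical, frames are plain lists of pixel rows, and the squash is a crude average of adjacent pixel pairs where a real encoder would use a proper resampling filter.

```python
def squash_half_width(frame):
    """Halve horizontal resolution by averaging adjacent pixel pairs.

    `frame` is a list of rows; each row is a list of numeric pixel values
    with an even number of entries.
    """
    return [
        [(row[x] + row[x + 1]) / 2 for x in range(0, len(row) - 1, 2)]
        for row in frame
    ]


def pack_side_by_side(left, right):
    """Pack left-eye and right-eye frames into one frame of the same size."""
    if len(left) != len(right) or any(
        len(l_row) != len(r_row) for l_row, r_row in zip(left, right)
    ):
        raise ValueError("left and right frames must have identical dimensions")
    half_left = squash_half_width(left)
    half_right = squash_half_width(right)
    # Left eye occupies the left half of each row, right eye the right half.
    return [l_row + r_row for l_row, r_row in zip(half_left, half_right)]


# Tiny hypothetical 2-row, 4-pixel-wide frames.
left_eye = [[0, 2, 4, 6], [10, 10, 20, 20]]
right_eye = [[1, 1, 3, 3], [8, 8, 8, 8]]
packed = pack_side_by_side(left_eye, right_eye)
print(packed)
```

The packed frame has the same dimensions as either input, which is why the format can travel through a 2D delivery chain; the cost is half the horizontal resolution per eye.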

Walt Husak, Senior Manager of Electronic Media at Dolby Labs: “Standards, and How 3D will Get Into the Home.” An overview of standards organizations’ history (standardization started with screw threads for British railways), processes, and the standards bodies relevant to 3D (there are a LOT). Paths into the home: optical media, cable, satellite, OTA broadcast, Internet; each has its own (often different) standards. Relevant for 3D: MPEG MVP (MPEG-2 Multi-View Profile, ISO/IEC 13818-2, or H.262), using spatial as well as temporal prediction for compression; MPEG-C (ISO/IEC 23002-3), which carries depth as an auxiliary signal (a depth signal sent as an image, like a depth map for 2D+ autostereo displays); and now MVC (Multiview Video Coding), ISO/IEC 14496-10 & H.264, which adds stereo to AVC, and interlace to MVP for broadcast. 3DV, combining MVC and MPEG-C, is being explored.

CEA is working on standardizing the IR signaling for 3D shutter glasses, as well as HDMI signaling (looking at adding a subset of HDMI 1.4 to HDMI 1.3 to allow some form of display on current sets), and the 708 committee is looking at 3D captioning.

SMPTE of course defines standards for D-Cinema and is working on 3D home standards. In the broadcast space, ATSC, SCTE, and DVB are looking at standards (though ATSC’s 3D OTA process is on hold for lack of a viable business model; they’re focusing on mobile 3D DTV instead).

Eisuke Tsuyuzaki, Panasonic Japan, discussed the end-to-end 3D process. “For $50 Billion, what is the next big thing that will make us all money? 3D!” Infrastructure is there; content is there; equipment is there. 3D TVs may sell between 2 million this year and 1/3 of all TVs by 2013. Twice the uptake rate of HD. The cost delta for 3D is about $700 for a ~55 inch screen. “If you’re making a new film or a new broadcast, why not make it in 3D, so its value will increase?” Panasonic has a full line of 3D displays (even a field monitor coming out), and a one-piece 3D camcorder (see below). Panasonic is also very interested in studying the biomechanics of 3D: ghosting/crosstalk, alignment, child vs. adult vision, different interocular spacing for different populations, etc. “3D is about jobs”; the whole 3D chain affects cinematography, sound (more 7.1 recording/production), post… everything except craft services [though I’d say the convergence puller, stereographer, and the extra grips needed to wrangle the big beamsplitter rigs all need to be fed, so craft services benefits, too]. “Products available in the spring… depending on your definition of spring!” After Blu-ray packaged media, what’s the next frontier? 3D broadcasting; Panasonic is working with DIRECTV. “Prices will probably increase a little bit, but because consumers want 3D and will pay for it.” When the novelty wears off, advertising and education will remain beneficiaries, since 3D makes a greater impression than 2D.

DIRECTV announced three 3D channels starting in June; Hanno Basse, VP Broadcast Systems Engineering at DIRECTV, discussed them. A 24/7 1080p channel: theatrical films, docs, concerts, etc., sponsored by Panasonic; a live-events channel, like the baseball All-Star game (in cooperation with Fox); and a VOD channel. 3D will use a frame-compatible side-by-side format (it needs to work with the 10 million STBs already in the field, and side-by-side is a required format in HDMI 1.4, so it’ll be widely compatible). Already tested 3D in 1080i, 1080p and 720p. Works with existing STBs, but you’ll need a 3D-capable HDTV. An STB firmware download will handle graphics and HDMI messaging for 3D control. DIRECTV has successfully multiplexed L&R streams in real time for live events, but prefers to have content delivered with L&R streams already multiplexed, to prevent any possibility of sync slippage (which “takes all the fun out of 3D”). Closed Captioning is still an issue, what with scene-dependent depth-cuing; Mr. Basse said that DIRECTV would love to hear from someone in the HPA audience how best to deal with this! Almost all of the 3D content DIRECTV has seen so far is 1080p, but sports is mostly 1080i, so interlace support is critical.

To read this slide, put on your 3D glasses…

Pete Putnam of ROAM Consulting discussed 3D in the home: according to one survey, 50% of consumers want 3D in the home; 80% have seen 3D; they are willing to pay for glasses (but not 2x for two glasses); and they want to see streamed content more than Blu-ray. 3D challenges: LCD motion blur and off-axis viewing; plasma phosphor lag; DLP (projector) color breakup; need for active shutter glasses (problematic under fluoros, daylight), and HDMI 1.4 (not on existing sets, so there’s a legacy problem). Under the image of a 3D broadcast of a baseball game, with left & right images superimposed, Mr. Putnam put the question, “Can you strike out twice on one pitch?”

He saw a lot of 3D at CES, in the form of products shipping in Japan, or products shipping worldwide later this year, or tech not embodied in any announced products. All 3D LCDs he saw use LED backlights, and refresh rates of 240 Hz or 480 Hz.

Glasses: passive glasses can be as cheap as $0.65 or as pricey as $90, but active glasses are $50 and up. How do you recycle passive glasses, or do you just throw ’em away? Active glasses: what happens when someone sits on them? How do you keep ’em clean & sterilized?

3D cameras: Fujifilm’s REAL 3D W1 at $1100 (“for both”, i.e., both the left and right imagers, grin), a DXG underwater 3D camcorder (!) for which very little info was available, and the $21,000 Panasonic stereo camcorder (two seats away from me, Panasonic’s Jan Crittenden Livingston whispered, “It’s not a consumer camcorder!”).

Pete Putnam describes what he saw at CES.

David Wood of the EBU (European Broadcasting Union) asked, “Are You Guys NUTS?” Does 3D ever turn from “wow” to “ho-hum”? There have been repeated cycles of 3D; they didn’t succeed. Is there just a tiny chance that the public got tired of it? Is it the glasses? How does 3D change viewer behavior? Calming or stressing? Better or less retention of content? Emotional content? Is it a “plus” or a “minus” compared to HD? The EBU found that HDTV was a sure-fire winner; a 2008/2009 study in France showed lower stress, more program retention, more accurate program retention, etc., but there is no such study yet for 3D.

What about the business success factor? Does the tech improve user experience? What’s the cost and availability? Content choice? Ease of adoption? Complements? Lack of substitutes? Does everybody win? We don’t have these answers yet.

Who should make 3D TV standards? Is it do-able? Do standards need to be worldwide, or can they be regional? Should the market decide? Should the standards match those for Blu-ray and games, or will this lead to more fragmentation (since they aren’t from the broadcast world)? Who’s looking out for the user here?

Is there still eye irritation after nit-picky L/R alignment? Evidence is relatively scarce; is Dr. Banks’s lab enough? Mostly we have anecdotal evidence. Should there be a warning on 3D content? Should we have more coordinated studies?

Do we “get” 3D production yet? Good stereo is 2-15 meters away, close enough that performers say the “camera is up your nose”. Long shots either look flat (normal interaxial) or like miniatures (widened interaxial).

Where does 3D TV sit as a media form? Is it a fairground show, or an art form? Too much depth & dimension, it’s a gimmick. Too little, and why bother?

“3D will never die, it keeps coming back like a politician’s promise.” Every 25 years it comes back, for a while… if it ever will succeed, the time is now; the quality is so good. But is that enough? We owe it to ourselves to research the economics and behavioral issues.

At the end of it all, following a brief audience Q&A, the moderators polled the audience: will 50% of the home audience have 3D in the home in 3 years? Only a few attendees agreed. How about 10%? Most thought so. How many HPA attendees expect to have 3D at home within 3 years? Most hands went up.

Next: some of the stuff in the demo room…

Demo Room Demos

One of the treats of the Tech Retreat is the Demo Room: stuff from the labs of various companies, not necessarily yet (or ever) ready to be turned into products. Here’s some of what I saw…

ARRI Alexa digital cine camera.

The Alexa was here, and making pictures. The EVF wasn’t working yet and many functions hadn’t been implemented, but light went in the front and HD-SDI came out the back.

How about that see-through gearhead the Alexa was sitting on?

I don’t think the see-through gearhead is something you’ll find in the ARRI catalog.

I put the Alexa on my shoulder; it is contoured to rest on the shoulder and sits quite comfortably. The camera itself weighs about 12 pounds.

Dialing in a sensitivity: nominal rating is ISO 800.

Setup controls are on the right side of the camera, where an AC / DIT can get to them without disturbing the operator. A more limited set of controls is present on the operator’s side, too.

The base-level EVF. The two buttons are ZOOM and EXP.

Alexa will be offered in electronic and optical viewfinding models. This Alexa had a nonworking mockup of the electronic finder.

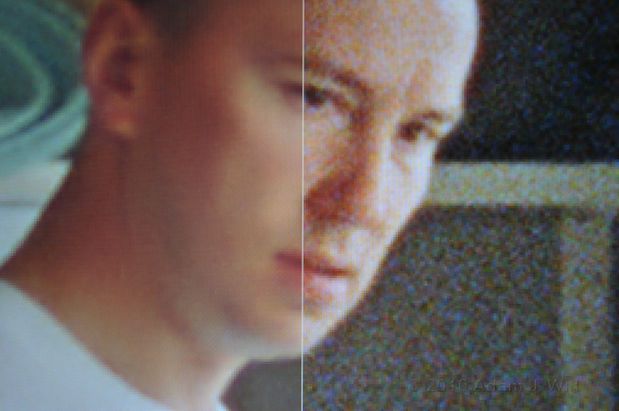

ARRI Relativity grain removal on a Super16mm frame.

Relativity is ARRI’s software suite for de-graining, re-graining, frame rate conversion, etc. It runs in real time or near real time (depending on the number of things it’s asked to do) using a GPU-accelerated Windows PC.

Canon video-capable DSLRs: Rebel T2i; 7D; 1D.

Canon had their HDSLRs present, including the just-announced Rebel T2i (a.k.a. EOS 550D). Same 1.6x sized sensor as in the 7D, but not exactly the same sensor; the T2i’s may be just a bit noisier in low light. Shipping soon, probably within a month.

The 1/3″ 3-chip camcorder with the new 50Mbit/sec 4:2:2 codec was at HDExpo, alas.

Panasonic stereo camcorder and 3D field monitor.

This $21,000 camcorder is already up to about 300 pre-orders, and it won’t ship until the fall. It’s essentially two HMC40s in one body: 3-CMOS, 1/4″ full-res 1920×1080 sensors, mated with a new stereo lens rig.

The 3D field monitors use passive 3D glasses, and will be around $8000-$9000 when they ship.

A jury-rigged remote control for the prototype camera.

The final camera will have a more elegant controller; the headline news here is that convergence will be tweakable independently of focus.

This binocular lens is the key element: lots of patent-pending effort has gone into making it work.

Panasonic’s Jan Crittenden Livingston, whose baby this is (in the US market at least), says it’ll be as big as the HVX200 was in its day. I disagreed; I think this’ll be bigger. It’s the first practical 3D camcorder; it doesn’t require a three-man crew, an oversize tripod, and two of everything to make it work. A single operator can shoot this camera, even handheld… and it can go mobile, recording 24 Mbit/sec AVCHD on SDHC cards or it can feed full uncompressed dual-channel HD out via two HD-SDI cables for external recording.
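To put the 24 Mbit/sec figure in perspective, here is a back-of-the-envelope sketch of recording time per card. It rests on assumptions that are mine, not Panasonic’s: that 24 Mbit/sec is the total recorded bitrate, that audio and container overhead are ignored, and that the card is a hypothetical 32 GB SDHC card (decimal gigabytes).

```python
def recording_minutes(card_gb: float, bitrate_mbit_s: float) -> float:
    """Approximate recording time in minutes for a card and a constant bitrate.

    Uses decimal units (1 GB = 1e9 bytes, 1 Mbit = 1e6 bits) and ignores
    audio, container, and file-system overhead.
    """
    if card_gb <= 0 or bitrate_mbit_s <= 0:
        raise ValueError("card size and bitrate must be positive")
    card_bits = card_gb * 1e9 * 8
    seconds = card_bits / (bitrate_mbit_s * 1e6)
    return seconds / 60


# Hypothetical 32 GB card at the quoted 24 Mbit/sec.
minutes_32gb = recording_minutes(32, 24)
print(f"about {minutes_32gb:.0f} minutes on a 32 GB card")
```

If the bitrate turns out to be per eye rather than total, halve the result.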

Across the hall, Gary Demos of Image Essence showed playback of wide-dynamic-range images using his new hybrid, floating-point wavelet/sinc codec. DCI StEM material was encoded at about 57 Mbit/sec; other clips ran the gamut from about 47 Mbit/sec up to 67 Mbit/sec if I recall correctly. Clips decoded in realtime on an Intel CPU running Linux to 16-bit RGB values, and output through a DVS Centaurus board to a Sony XBR8 and an HP Dreamcolor display in parallel, with synchronous audio.

The pix looked fine; no clipping, artifacting, ringing, blocking, banding, or other nastiness visible—so there’s not really any image I can show. It was just DPX-quality playback at Canon 5D data rates… ho, hum… (grin).

Exciting times. More tomorrow…


16 CFR Part 255 Disclosure

I attended the HPA Tech Retreat on a press pass, which saved me the registration fee. I paid for my own transport, meals, and hotel. The past two years I paid full price for attending the Tech Retreat (it hadn’t occurred to me to ask for a press pass); I feel it was money well spent.

No material connection exists between myself and the Hollywood Post Alliance; aside from the press pass, HPA has not influenced me with any compensation to encourage favorable coverage.
