Analytical vs creativity, from Shutterstock

It should come as a surprise to no one that an editor thinks about a project in a very different way than a systems administrator does. That’s not to say one is better than the other, but the person who’s accessing files on a daily basis isn’t always the one setting up the process to access those files, and that can lead to complications.

To sort through the best approach to making these kinds of decisions, regardless of perspective, I talked with Doug Hynes, Director of Product and Solution Marketing at StorageDNA. Doug has previously discussed the evolution of LTO and LTFS solutions, and the Infinity Series from StorageDNA is a comprehensive, one-stop solution that has helped countless professionals sort through and solve their workflow and storage issues. All of that made him an ideal person to talk with about how creative professionals can and should approach their workflow and storage needs. For anyone who wants to learn more about the Infinity Series specifically, this video lays out the details.

We discuss how attitudes around these processes have changed, how 4K has increased the need to have such logistics figured out, what sort of questions creative and technical professionals can and should be asking when they begin to research solutions, and plenty more.

ProVideo Coalition: File-based workflows have clearly become pervasive throughout the media and entertainment industry. How has that influenced the way in which creative professionals need to approach storage and workflow?

Doug Hynes: In general, I think creative and editorial teams are more cognizant of managing their content, and the storage it lives on, because everyone is under such pressure to be more efficient while cutting costs. Spinning disk that is fast enough for one or several editors to work directly from is very expensive on a dollar/gigabyte basis. With the amount of content coming in daily and the pervasiveness of higher-resolution digital cameras, more and more people are starting to wonder where they’re going to put all this stuff. They’ve quickly realized they can’t just keep buying expensive storage.

Being able to actually manage your content with a solution that spans not just spinning disk but multiple tiers of storage is important. That allows you to move and handle content according to how valuable it is at any moment, so that it sits at an appropriate level in terms of your cost/gigabyte of storage. It’s really the only way you can logistically and financially manage the deluge of content that’s coming in all the time now.
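To make the tiering idea concrete, here is a minimal Python sketch of a value-based placement rule. The tier names, the 30- and 180-day thresholds and the `Asset` fields are hypothetical choices for illustration, not StorageDNA’s logic; the only point is that placement follows how actively content is being used.

```python
from dataclasses import dataclass

# Hypothetical tier names; a real facility would map these to its own storage.
ONLINE = "online_disk"   # fast shared spinning disk that editors work from
NEARLINE = "nearline"    # cheaper disk for recently finished material
ARCHIVE = "lto"          # lowest cost per gigabyte, e.g. an LTO library


@dataclass
class Asset:
    name: str
    in_active_project: bool
    days_since_access: int


def choose_tier(asset: Asset, nearline_after: int = 30, archive_after: int = 180) -> str:
    """Place an asset according to how actively it is being used right now."""
    if asset.in_active_project or asset.days_since_access < nearline_after:
        return ONLINE
    if asset.days_since_access < archive_after:
        return NEARLINE
    return ARCHIVE


assets = [
    Asset("A001C003.R3D", in_active_project=True, days_since_access=2),
    Asset("promo_2014.mov", in_active_project=False, days_since_access=90),
    Asset("pilot_masters.ari", in_active_project=False, days_since_access=400),
]
plan = {a.name: choose_tier(a) for a in assets}
```

Running the rule periodically and moving anything whose tier has changed is what keeps expensive disk reserved for the material that is actually being cut.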

How have you seen attitudes and expectations change around storage and workflow? Are people more aware about the technology and/or what it can do for them specifically?

I think LTO and LTFS have become pretty familiar types of solutions. They’re both mature enough now that people look at them as a viable place to put their content. That’s in contrast to a couple of years ago, before LTO and LTFS became more popular, when people were just putting things onto USB and FireWire devices. We knew then, and we know even more now, that spinning disk sitting on a shelf is not any kind of reliable archive. It’s not nearly reliable enough to guarantee access to that content a few years down the road.

It’s really about people knowing that solutions are available, and knowing what tradeoffs might be associated with a particular solution. And for the most part, I think people are pretty educated these days.

I imagine you still run into a few people using spinning disk as their storage/archive, but that has to be less and less these days, isn’t it?

We still do see people doing it, but unlike in the past, they know it’s not the right thing to be doing. Those are people who are really looking for an alternative, because shoeboxes on the shelf, with paperwork listing what’s in those boxes, just don’t work on any level in this day and age.

What has really heightened people’s awareness of, and fears about, the danger of that approach is how commonplace 2K and 4K have become. You can go out and get a camera that shoots 4K pretty inexpensively, so the question then becomes where all these files are going to go. Previously, when people were shooting in more compressed formats and at lower resolutions, you could store a lot of material on a small removable drive, and you felt comfortable with that. But fast forward a few years and a few failed drives later, and now you’ve got an overflowing amount of material that’s important to your present and your future, and you don’t want to trust a removable drive with it.

When people start thinking about being able to repurpose 4K footage that they might have only had one or two days to shoot, they realize that if they lost those camera masters or can’t access them, it’s a significant issue. That’s material that could or in some cases will be counted on to continue generating revenue, so you really don’t want to take any unnecessary risks when you’re dealing with those kinds of stakes.

You mentioned the ubiquity of 4K, so how has that desire to capture content at this scale impacted the approach StorageDNA takes around your solutions?

One of the things that we’ve always done differently than a traditional archiving solution is that we’ve always tried to look at workflows and how our solution and our software can actually fit into people’s workflows.

Even before 2K and 4K resolutions, we had the ability to do what we call a “conform from LTO”. The high-res camera master footage gets put on LTO, and those same files are transcoded down to a lower resolution to work with in your editorial process. Then, when you’re done editing, you hand off a metadata representation of your timeline or sequence, and we can go find the associated high-res files.

You can hand off an XML, EDL, ALE or AAF (we work with all of the above), and we interpret it and go off and find the high-res equivalent files. So we’ve been offering, for some time now, the ability for users to work at a lower resolution than their camera master footage, which saves them a ton on storage costs. They don’t have to buy as much spinning disk capacity, yet they still have a very reliable, low dollar/gigabyte storage solution. That’s especially important today with larger file sizes.
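A rough sketch of what a conform from LTO involves: read the clip names out of an edit decision list, look each one up in an archive catalogue, and group the high-res masters by tape so each tape only needs to be loaded once. This assumes a CMX 3600-style EDL carrying `* FROM CLIP NAME:` comment lines and a hypothetical in-memory catalogue keyed by clip name; real EDL, XML, ALE and AAF handling, including matching proxy names to master names, is considerably more involved.

```python
from collections import defaultdict

CLIP_PREFIX = "* FROM CLIP NAME:"


def clip_names_from_edl(edl_text: str) -> list[str]:
    """Collect clip names from '* FROM CLIP NAME:' comments, in edit order, once each."""
    names: list[str] = []
    for raw in edl_text.splitlines():
        line = raw.strip()
        if line.startswith(CLIP_PREFIX):
            name = line[len(CLIP_PREFIX):].strip()
            if name and name not in names:
                names.append(name)
    return names


def conform_plan(edl_text: str, catalogue: dict[str, tuple[str, str]]):
    """
    Map every clip in the edit to its high-res master, grouped by tape.
    `catalogue` is a hypothetical lookup: clip name -> (tape barcode, path on tape).
    Returns (tape -> list of paths, clip names not found in the catalogue).
    """
    by_tape: dict[str, list[str]] = defaultdict(list)
    missing: list[str] = []
    for name in clip_names_from_edl(edl_text):
        entry = catalogue.get(name)
        if entry is None:
            missing.append(name)
        else:
            tape, path = entry
            by_tape[tape].append(path)
    return dict(by_tape), missing
```

Reporting missing clips instead of failing outright reflects the practical reality that an edit often contains graphics or temp media that were never archived as camera masters.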

The key is being able to bring content back quickly, and while we can do file-by-file restores, we also have other unique workflow-based capabilities, which I believe give us an edge over the other solutions out there.

What sort of capabilities are we talking about? How are they different from those other options?

We don’t pitch our solutions as a traditional archive. We talk about using the storage medium as another place to put your stuff that we can provide quick access to. The idea is that it’s another tier of storage, and once you make your initial investment in the hardware or overall solution, you’re buying capacity at less than two cents per gigabyte, which is incredible in terms of the storage savings that people can realize.

When we archive material, which means we’re writing it to tape, we keep track of it in a non-proprietary, XML-based catalogue system. We keep track of the tape and the file names, but we also extract metadata for over 180 different file and format types. That includes camera master footage from RED, ARRI RAW, GoPro, etc. We extract metadata for all of the standard formats and file types, all the way down to PDFs, so it doesn’t really matter what types of files are being archived. That metadata lives in our catalogue so it can be searched later.
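As an illustration of why an open, XML-based catalogue is useful, here is a minimal sketch using Python’s standard `xml.etree.ElementTree`: each archived file records its tape, its path and whatever metadata was extracted, and a search walks those records. The element and attribute names (`catalogue`, `file`, `meta`, `tape`, `path`) are hypothetical and are not StorageDNA’s actual schema.

```python
import xml.etree.ElementTree as ET


def add_entry(catalogue: ET.Element, tape: str, path: str, metadata: dict[str, str]) -> ET.Element:
    """Record one archived file: the tape it is on, its path, and its extracted metadata."""
    entry = ET.SubElement(catalogue, "file", tape=tape, path=path)
    for key, value in metadata.items():
        ET.SubElement(entry, "meta", name=key).text = value
    return entry


def search(catalogue: ET.Element, key: str, value: str) -> list[tuple[str, str]]:
    """Return (tape, path) for every file whose metadata field `key` equals `value`."""
    hits = []
    for entry in catalogue.iter("file"):
        if any(m.get("name") == key and m.text == value for m in entry.iter("meta")):
            hits.append((entry.get("tape"), entry.get("path")))
    return hits


catalogue = ET.Element("catalogue")
add_entry(catalogue, "TAPE001", "/cards/A001C003.R3D", {"camera": "RED", "resolution": "4096x2160"})
add_entry(catalogue, "TAPE002", "/cards/B001C001.ari", {"camera": "ARRI", "resolution": "3840x2160"})
xml_text = ET.tostring(catalogue, encoding="unicode")  # plain XML that any tool can read back
```

Because the catalogue is ordinary XML, it can be parsed back with `ET.fromstring(xml_text)` (or by any other XML tool) and searched the same way, independent of the software that wrote it.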

Giving people the ability to quickly and easily find what they’re looking for is the key, and that’s really where our solutions came from and what sets us apart. Our prior product was designed for WAN sync of content, so that a facility in New York could sync up with a facility in LA and see the same things. We took some of that IP and put it into DNAevolution to increase archive efficiency. It’s not something a lot of people think about, but if you’re archiving or backing up content every single night, you could be wasting a lot of your tape capacity, and even though “tape is cheap”, you’d rather not have countless copies of the same file.
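The general idea of not writing the same file to tape night after night can be sketched with content hashing: hash each candidate file, skip any whose hash is already on tape, and let the catalogue point the skipped paths at the existing copy. This is a generic deduplication technique offered for illustration under that assumption. The interview doesn’t describe how DNAevolution actually implements it, and the function names are hypothetical.

```python
import hashlib


def file_sha256(path: str, chunk_size: int = 8 * 1024 * 1024) -> str:
    """Hash a file in chunks so large camera masters never have to fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def plan_nightly_archive(digests: dict[str, str], on_tape: set[str]):
    """
    digests: path -> content hash for tonight's candidate files.
    on_tape: hashes already written to tape (updated in place).
    Returns (paths to write tonight, path -> hash for files skipped because an
    identical copy is already on tape; a catalogue would point these at that copy).
    """
    to_write: list[str] = []
    deduplicated: dict[str, str] = {}
    for path in sorted(digests):
        digest = digests[path]
        if digest in on_tape:
            deduplicated[path] = digest
        else:
            to_write.append(path)
            on_tape.add(digest)
    return to_write, deduplicated
```

Hashing every file nightly is itself expensive at 4K data volumes, so a practical system might first filter on size and modification time and only hash the candidates that changed.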

We only support LTFS with our solutions, and part of the reason is that it gives us the ability to offer direct access to that content. Our UI provides complete visibility into what is in the archive, and that’s key, because if you want a solution that’s going to be successful in the M&E space, you have to have a great cataloguing system. Metadata is what makes your content valuable, because if you can’t get at your content or don’t know what’s there, it’s not going to be of much use.

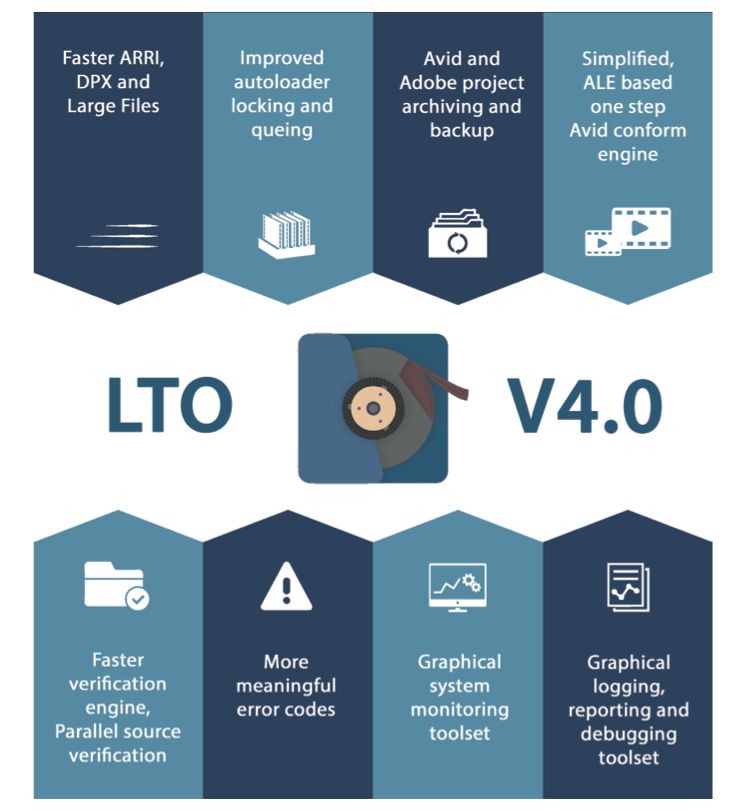

Looking through the “What’s New” infographic for your v4.0 release, the more meaningful error codes sound pretty great, as we’ve all seen far too many error codes that appear to mean absolutely nothing. What has you most excited about these updates/upgrades?

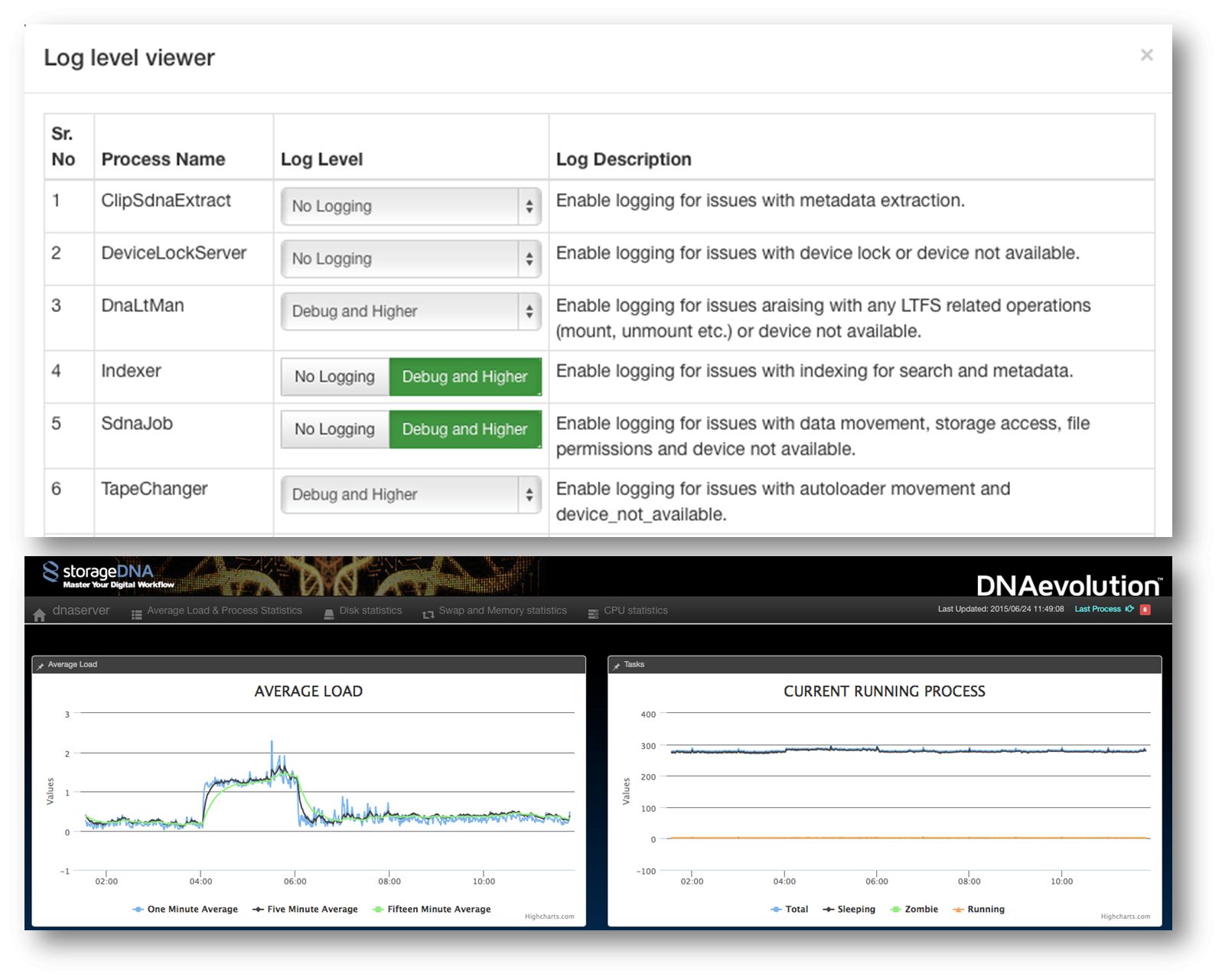

The error code piece is actually really big, and that was based on specific customer requests. They said how much they liked the solution, but that when they were stuck, they wanted to be a bit more self-sufficient. They wanted to see error codes that made sense, which would allow them to resolve issues rather than having to pick up the phone or send a service ticket and wait for a response. So that was a key difference.

One of the interesting things is that the error codes and all of the logging was always there, but now we’ve just exposed it to the end user. Now the end user can submit very specific logs of info to us if they aren’t able to sort out the issues themselves, so I think it really makes this solution easier and friendlier.

Aside from that, some of the newest features in 4.0 are centered on improving the user experience. We’ve tweaked the UI to be a little friendlier and to give you a better sense of where you are. You don’t necessarily have to drill down through several layers of pages or directory structures to get to what you’re looking for. Customers taking advantage of DNAevolution’s built-in HTML5 player for previewing content might have proxies available, but they don’t have proxies of every single file they’ve ever written to tape. So we now provide icons right next to clip names that indicate whether a proxy is available, so you aren’t going to waste your time looking for a proxy preview of a file that doesn’t have one. We’ve also added icons that indicate whether extra metadata has been added to a clip, and job status indicators right next to the archive name. We’ve really tried to make it more user-friendly, so that a typical user can just glance at the screen and see what’s going on.

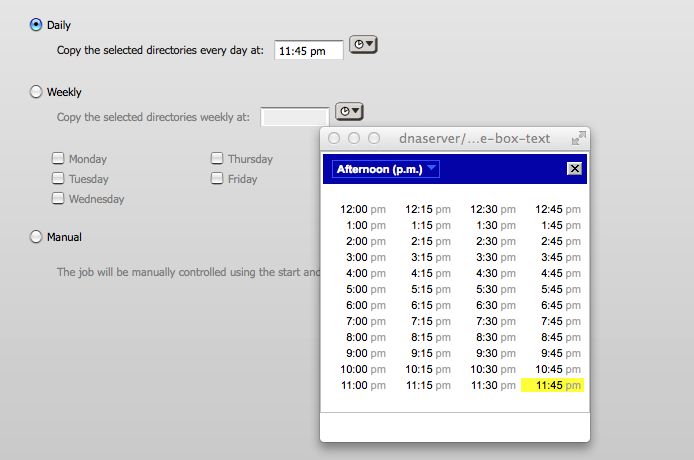

The other big thing we’ve done in 4.0, and it’s probably the most exciting thing for our customers as well as for anyone looking at us as an archiving solution, is that we’ve added both Avid and Adobe project-based archiving. With a simple, direct job setup where you just tell us where an Avid project or Adobe .prproj file resides, we’ll interpret that file and generate a list of all files and content related to that project. You can then run a single, one-time job, like a backup or archive of that entire project, or set it up to have us look at the project, say, every night at midnight. When we do that, we back up the project incrementally, so by the time you’re done with your project, the final night’s archive only picks up the changes you made that last day, and the entire project is fully backed up.
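Here is a minimal sketch of project-based, incremental archiving. It assumes the project file is gzip-compressed XML (as Premiere Pro `.prproj` files generally are) and that media paths sit in elements named `ActualMediaFilePath`; that tag name is an assumption to verify against a real project, and Avid bins, which use a different format, are not covered. Each nightly run archives only media modified since the previous run and flags anything that can’t be found, such as a file that lives on someone’s desktop.

```python
import gzip
import xml.etree.ElementTree as ET

# Assumption: the element name(s) that hold media paths inside the project XML.
# Verify against a real project file before relying on this.
MEDIA_PATH_TAGS = frozenset({"ActualMediaFilePath"})


def media_paths(project_bytes: bytes, tags: frozenset = MEDIA_PATH_TAGS) -> list[str]:
    """List every distinct media path referenced by a gzip-compressed XML project."""
    root = ET.fromstring(gzip.decompress(project_bytes))
    found = {
        el.text.strip()
        for el in root.iter()
        if el.tag in tags and el.text and el.text.strip()
    }
    return sorted(found)


def incremental_batch(paths: list[str], modified: dict[str, float], last_run: float):
    """
    Split a project's media into (files to archive tonight, files that are offline).
    `modified` maps path -> modification time (for example from os.path.getmtime);
    paths missing from it are reported as offline rather than silently skipped.
    Use last_run=float("-inf") for the first, full archive.
    """
    changed, offline = [], []
    for p in paths:
        if p not in modified:
            offline.append(p)
        elif modified[p] > last_run:
            changed.append(p)
    return changed, offline
```

Working from the project file rather than from a directory is what catches media scattered across several volumes, which is the gap between how editors and IT think about “the project”.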

Error Codes/Logs Image

Is this a system or setup that’s going to make sense to the various creatives that are going to be using and handling this content?

It certainly fits in line with the end users, and when I speak of end users I’m thinking of an editorial team which is using and intimately familiar with the content they’re working on, versus an IT guy who gets a request to do an archive or backup of a project.

Many other archive solutions, and we do this as well, allow you to go out to your storage volumes and pick either individual files or directories and folders of files and to write them to tape. But from the end user’s perspective, editors don’t really think that way. They think in terms of projects. They know what’s within the bounds of their project, and they assume anything related to a project should easily be copied over to an LTO tape. If you do things at the directory level you’re not guaranteed to get everything. Sometimes files live on the desktop. Sometimes they exist on one volume versus another. Sometimes they’re spread across different SAN volumes.

What’s great about our solution is that we generate the list from the project file itself, which gives us a comprehensive file list AND tells us where everything is, and we can do this for both Avid and Adobe projects. With Avid projects, we even break everything out into bins, so you can choose which bins of content you want if you don’t need to archive the entire project.

It’s interesting to hear that breakout of how an editor thinks about these files and process, versus an IT professional, because the differences there are in terms of the people using the system versus those who are taking care of it and dealing with it at a higher level, correct?

Yes, and when a full project restore is called for, the IT professional isn’t going to know what that means. They’ll ask what volume it’s on, what SAN storage it’s on, or which drive letter and directory the file lived in. That’s where a disconnect happens, which ultimately delays access to the content.

Now, using our system, a request can go to the IT person, who can use the UI to pinpoint exactly what the editor has asked for. Or the editor can go into the system and call up content at the directory level, which is the old-fashioned way, or look at clips and pick out one or two from the entire project. Or they can restore the entire project via AAF, XML, EDL or ALE, which is the newest functionality that we have.

You mentioned the auto-archiving that you can set up, but what role does automation play at a larger level in the functionality of the system?

It’s pretty big. The automation of jobs kind of goes hand in hand with the hardware. If you’re talking about a single LTO drive, it’s hard to automate something if you have to manually stick a tape into that device.

As part of its job, DNAevolution identifies and keeps track of all of the tapes that reside in an autoloader. They don’t have to be in the drives; they can be in any one of the slots. We identify those, and if a job that calls for a sequence restore is processed, we can handle it. Let’s say there are 100 files in it. Maybe 25 files are coming off of tape one, 25 are coming off of tape two, and the last 50 are coming off of tape three. As long as all of the tapes identified in the restore file listing reside in one of those slots or drives, a single button push is all it takes, and we’ll move those tapes in and out of their slots and into the drives as appropriate to get all the files restored. There’s no human intervention after that point, which is nice because it saves people the time and effort of manually fetching tapes.
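The restore Doug describes, with 100 files spread across three tapes, can be sketched as a planning step: group the requested files by tape using the catalogue, confirm every tape is somewhere in the library, and produce one load step per tape. The function and tape barcodes below are hypothetical, and a real system would also order files by their position on each tape and drive the library robotics itself.

```python
def plan_restore(file_locations: dict[str, str], library_tapes: set[str]) -> list[tuple[str, list[str]]]:
    """
    file_locations: requested file -> barcode of the tape holding it (from the catalogue).
    library_tapes: barcodes currently sitting in any slot or drive of the autoloader.
    Returns one (tape, files) step per tape, in the order tapes are first needed,
    so each cartridge is moved into a drive only once.
    """
    steps: dict[str, list[str]] = {}
    for name, tape in file_locations.items():
        steps.setdefault(tape, []).append(name)
    absent = sorted(set(steps) - library_tapes)
    if absent:
        # The job can't run unattended until someone loads these cartridges.
        raise LookupError("tapes not in library: " + ", ".join(absent))
    return list(steps.items())


# The 100-file example from the interview: 25 + 25 + 50 files on three tapes.
request = {
    f"clip_{i:03d}.mov": ("TAPE01" if i < 25 else "TAPE02" if i < 50 else "TAPE03")
    for i in range(100)
}
plan = plan_restore(request, library_tapes={"TAPE01", "TAPE02", "TAPE03", "TAPE07"})
summary = [(tape, len(files)) for tape, files in plan]
```

Checking for absent tapes before anything moves is the design choice that makes the “single button push” safe: the job either has everything it needs or tells you up front which cartridges to load.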

Editors just want to be able to get what they need without having to go through an entire ordeal, on their own or with IT. If it’s just a couple of button pushes, it makes a big difference. We see that even small workgroup customers typically go for at least a small autoloader in their environment, so they save themselves from having to do this stuff manually. You can load up a multi-slot autoloader, and you’ve got a nice chunk of storage sitting there that’s readily available to either archive to or retrieve material from.

Automation window Graphic

Speaking of your customers, what sort of scales and environments have you seen your solutions most effectively utilized in?

It really runs the gamut. We have solutions that scale from a single drive for a very small operation all the way up to our Infinity Series, a highly scalable OEM’ed Hewlett Packard Enterprise library that we sell as a turnkey solution.

One of the things we do see is that the mid-range has shifted from the 24-slot, two-drive unit to an 80-slot, 200TB configuration that easily and affordably scales to over a petabyte of capacity. When you get into automation, you want a fully scalable catalogue system built for you so you can do all your restores quickly and easily, and that’s where people see the value in our turnkey solutions. Or, if they already have a large investment in an LTO solution, we can control those as well.
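The capacity figures are simple arithmetic. 200TB across 80 slots works out to 2.5TB per cartridge, which matches LTO-6 native (uncompressed) capacity; that generation is an inference from the numbers, not something stated in the interview, so adjust the per-cartridge figure for the tapes you actually use.

```python
import math

# Assumption: 2.5 TB native (uncompressed) per cartridge, i.e. LTO-6, which is
# what 80 slots -> 200 TB implies. Change this for other LTO generations.
TB_PER_CARTRIDGE = 2.5


def library_capacity_tb(slots: int, tb_per_cartridge: float = TB_PER_CARTRIDGE) -> float:
    """Native capacity of a library with every slot holding one cartridge."""
    return slots * tb_per_cartridge


def slots_needed(target_tb: float, tb_per_cartridge: float = TB_PER_CARTRIDGE) -> int:
    """Smallest number of loaded slots that reaches the target capacity."""
    return math.ceil(target_tb / tb_per_cartridge)
```

At that cartridge size, the older 24-slot unit holds 60TB, and a petabyte (1,000TB) takes 400 loaded slots.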

When you mention your turnkey solutions, does that mean you’re delivering and supporting a product, soup-to-nuts? How many of the things we’ve been talking about do your customers need to be concerned with if they choose a turnkey solution?

Turnkey solutions, like our Infinity Series, work best for those who don’t already have a large investment in LTO hardware. Because we sell the entire solution, we support the entire solution, software and hardware, without question. When we are involved with LTO hardware we did not sell, it’s hard to know the status of that third-party hardware in terms of warranty, configuration, connectivity, etc., which in turn makes troubleshooting a bit harder, though not impossible, as we do have the best support in the business. There is also a financial difference: our turnkey solutions don’t have any added per-slot costs, whereas when we connect our server to someone else’s library, there is a per-slot charge to license the use of that hardware.

I know that professionals working to figure out what system and solution is going to be the right fit for them need to do their research and ask a ton of questions, but is there a good way to approach this research process?

The biggest thing is to have a handle on how much content is coming into the environment on a regular basis. That will help you decide how much you might have to spend to store that content. After you figure that out, the numbers are not hard to calculate. You can figure out on a dollar/gigabyte basis how much spinning disk costs versus LTO versus the variety of other types of storage. You really need to understand what sort of capacity you need, and then you can start running your numbers. You might find that it’s going to cost you, say, $30,000 a year to keep buying more spinning disk storage, when for that same price you could get two or three times that capacity on LTO.
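The comparison Doug describes can be run as a small model: each storage option has an up-front cost and a cost per gigabyte, and tape only wins once you store enough to pay off its hardware. All prices below are placeholders (the roughly two-cents-per-gigabyte figure for tape media comes from earlier in the interview; the disk price, hardware cost and ingest rate are hypothetical), so plug in real quotes before drawing conclusions.

```python
import math
from dataclasses import dataclass


@dataclass
class StorageOption:
    name: str
    fixed_cost: float      # up-front hardware, in dollars (hypothetical)
    dollars_per_gb: float  # incremental disk or media cost (hypothetical)

    def cost(self, gb: float) -> float:
        return self.fixed_cost + self.dollars_per_gb * gb

    def capacity_for_budget(self, budget: float) -> float:
        """Gigabytes a budget buys once the fixed cost is covered."""
        return max(0.0, (budget - self.fixed_cost) / self.dollars_per_gb)


def break_even_gb(cheap_start: StorageOption, cheap_per_gb: StorageOption) -> float:
    """Capacity above which the option with the lower per-GB cost is cheaper overall."""
    saving_per_gb = cheap_start.dollars_per_gb - cheap_per_gb.dollars_per_gb
    if saving_per_gb <= 0:
        return math.inf  # the per-GB option never catches up
    return max(0.0, (cheap_per_gb.fixed_cost - cheap_start.fixed_cost) / saving_per_gb)


# Placeholder prices: only the ~$0.02/GB tape media figure comes from the interview.
disk = StorageOption("spinning disk", fixed_cost=0.0, dollars_per_gb=0.10)
lto = StorageOption("LTO library", fixed_cost=20_000.0, dollars_per_gb=0.02)
yearly_ingest_gb = 500 * 120  # e.g. 500 GB per shoot day, 120 shoot days (hypothetical)
```

With these made-up numbers, tape pays off its hardware at about 250,000 GB (250TB). In the first year, $30,000 buys about 300TB of disk versus about 500TB of tape after the hardware, and once the hardware is paid for, the same budget buys far more tape capacity.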

The next question is always around what’s available. There are a lot of LTO solutions out there. There are a lot of solutions that are very traditional “store and restore”, which don’t offer a lot of workflow integration.

Then it comes down to who is driving the requirements for content to be moved back and forth? Is it at the editorial level or is it just being handed off to IT? If it’s handed off to IT, those guys will often choose one of their favorite solutions which tends to be very IT-centric. On the other hand, when you get into environments where there might not be a big IT presence, people often prefer to have a solution that’s centered around an understanding of everything that they’re used to working with. So that’s a big difference as well. Do you move forward with a solution that’s in the IT world, or one that understands things in the same way that editorial does?

Another big question is who owns the actual content. That often determines whether people need to keep archives of their content long-term, or even mid-term, say, a couple of years down the road. Some people we talk to tell us they don’t own the content they’re working with on a daily basis, and that they want to offer backup as a service to their clients, but those clients don’t want to pay for it. Then it becomes a question of value for both client and customer.

For people who are the content owners and are thinking about repurposing their content, it’s extremely important to go down this path and choose a solution that’s going to be the right fit. The concept of repurposing content has been around for a while because of the costs associated with gathering and creating the content in the first place. The question then becomes how easily you can reuse that content, and “easily” is the key, because there are plenty of solutions that allow you to store your content on tape, but then you have to deal with a restore request window that can take a long time. That’s one of the bigger differences between us and a more traditional IT-based solution. Our tool and workflow integration lets our system shorten the window between when you make a request and when you get your content.

Being able to identify how things are working for you and how you want them to work is a great starting place, because it leads to other important questions around budget and around the person who is ultimately tasked and responsible for making a decision. Are they an editor who is going to be thinking about the project at hand? Or an IT professional whose bigger concern is around the long-term viability of the system? Or a producer who wants to go with the cheapest thing that works?

There obviously needs to be collaboration and communication throughout this process, and regardless of whether or not these roles are actually separated by person and/or department, all of those perspectives have to be considered on projects of every size.

To learn more visit: http://www.storagedna.com/ or contact [email protected].
