Editing the Bioneers 25th Anniversary Conference

In October 2014, the Bioneers (www.bioneers.org) held their 25th anniversary conference in San Rafael, California. Bioneers is a non-profit educational organization that explores innovative solutions to planetary issues. The 5-day conference included 3 days of TED®-like presentations by well-known authors and speakers, watched by thousands of attendees.

My friend and producer/videographer Scott Stender (http://www.digitvideo.tv/) has been shooting this event for 15 years. This year he took on the role of co-producer/director for the recording of the event. He asked me to come on board as the video editor, supported by James Davey as assistant editor.

Thanks to an efficient post workflow, fast storage, and the horsepower of the Mac Pro, we were able to quickly ingest terabytes of footage on a daily basis, edit 6 HD camera angles in real time with no transcoding, and deliver a professional product to the client in record time.

Preproduction Planning

Before the event, we needed to determine how much hardware to acquire to handle the processing of 12 hours of presentations, all being recorded by 6 “cameras” (4 cameras, a graphics feed, and a switcher feed) as well as 3 additional camera operators shooting breakout sessions and b-roll.

After doing the math on different codec data rates, transfer times, and transcoding and render times, we determined that 6TB should be adequate for the primary editing station, so Scott purchased a Pegasus R4, which I configured and synchronized in its default RAID 5 configuration. We also chose to record in ProResLT, which takes up less than half the space of ProRes, to reduce storage requirements and improve playback performance; since we weren’t doing any heavy grading or effects work, there was no visible impact on video quality. We chose a Mac Pro as the primary editing machine because the combination of ProResLT and the Mac Pro eliminated the need to create optimized media, which saved us hours of time as well as the cost of additional storage space. We also used Scott’s iMac and my MacBook Pro, and we purchased additional drives for backing up all the source material and delivering final renders to the client.
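The storage decision comes down to simple arithmetic. The sketch below is a back-of-the-envelope check, not the calculation we actually ran. It assumes the R4 holds four 2TB drives (consistent with the 6TB effective capacity and the 2TB replacement drive described below), and it uses the roughly 250GB per KiPro drive and five recorded feeds per session described in the post workflow. GoPro media, render files, and optimized media are left out, so treat the remainder as rough headroom.

```python
def raid5_usable_tb(drive_count: int, drive_tb: float) -> float:
    """Usable RAID 5 capacity: one drive's worth of space goes to parity."""
    if drive_count < 3:
        raise ValueError("RAID 5 needs at least three drives")
    return (drive_count - 1) * drive_tb


# Assumption: four 2TB drives in the Pegasus R4.
usable_tb = raid5_usable_tb(drive_count=4, drive_tb=2.0)

# Five KiPro feeds per session, roughly 250GB (0.25TB) each, three sessions.
daily_ingest_tb = 5 * 0.25
show_ingest_tb = 3 * daily_ingest_tb

# Whatever is left must hold GoPro media, render files, and optimized media.
headroom_tb = usable_tb - show_ingest_tb

print(f"usable {usable_tb} TB, camera originals {show_ingest_tb} TB, "
      f"headroom {headroom_tb} TB")
```

That works out to 6TB usable, about 3.75TB of camera originals, and about 2.25TB left over for everything else.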

The Shoot

The presentations took place in Marin Veterans Memorial Auditorium, a 2,000-seat venue that was outfitted with 3 professional Sony studio cameras which fed into a video switcher located in a trailer outside the hall. These three streams were captured onto KiPro drives, along with a feed from the projected graphics and a feed of the live-switched program, for a total of 5 separate video feeds recorded for each 4-hour session on Friday, Saturday and Sunday. In addition, Scott mounted a GoPro to the stage each day and swapped out the microSD card during the intermission.

Figure 2: The 2,000 seat auditorium.

Figure 3: The production trailer parked outside.

Figure 4: Scott at the live switcher inside the production trailer.

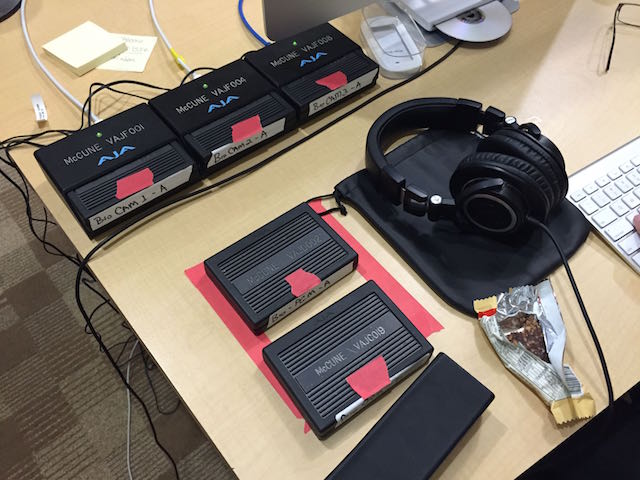

Figure 5: 4 of the 5 KiPro racks – each camera is fed to a rack and records to 250GB drives in user-selectable codecs.

Post Setup

Scott booked us a room with a conference table at the Embassy Suites a few hundred yards from the event. We removed most of the chairs and set up our editing stations the day before the first set of presentations in order to test our workflow.

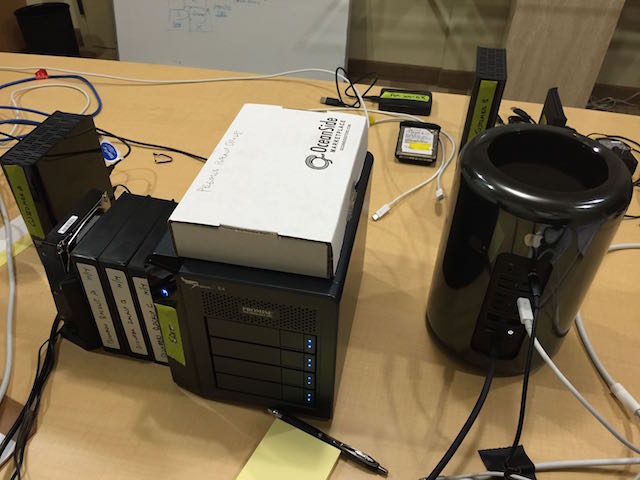

James used the iMac for setting up libraries, initial media ingest, creating multicam projects, and creating backups. I used the 8-core Mac Pro connected to a Pegasus R4 for the edit. In addition, we had 3 bare 2TB SATA drives for backups connected via a Seagate Thunderbolt dock, 2 MyBook drives for delivering final product to the customer, and a Hitachi 2TB drive as a backup for the R4, should one of its drives fail (this is one of the approved replacement drives according to Promise Technology).

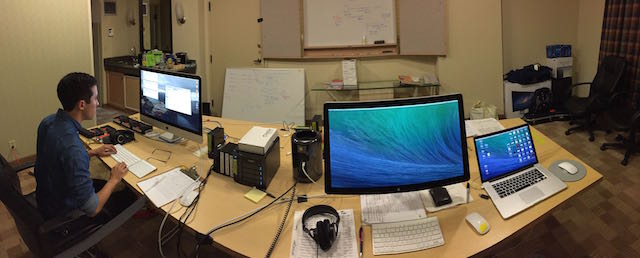

We set the equipment up in a straight left-to-right workflow: a taped red box at the far left for incoming media sources, the iMac for ingest, the Mac Pro for editing, and a taped green box at the far right for media sources that could be sent back for additional recording (in addition to the main conference, we had other camera operators capturing breakout sessions and b-roll and coming in at random times with SD cards).

Figure 6: Assistant editor James Davey at the far left ingesting the day’s footage to the Pegasus R4 RAID. Once completed, he switches the R4 to the Mac Pro so that I can start editing, and he then makes backups of all the footage while I am editing. Once all media has been ingested and backed up, it’s moved to the green square at the top right corner (thanks to Jem Schofield for this workflow tip). The MacBook Pro at the far right is my personal machine, used for creating and modifying graphics and for internet access.

Figure 7: All our storage for this project: the Pegasus R4 configured as RAID 5 with an effective capacity of 6TB; a backup 2TB drive on top, specifically for the Pegasus in case a drive fails; three 2TB SATA drives on the left containing duplicate copies of all the raw footage; and two 4TB customer drives for final outputted material.

Post Workflow

Efficiency was paramount: we needed to figure out how to get to editing as quickly as possible in order to make the tight delivery deadline.

Each day, immediately after the 4-hour session ended at about 1pm, James would head over to the production trailer, collect the 5 KiPro drives (marking them with red tape to make it clear they were NOT to be recorded over), and bring them back to the edit suite. Each drive contained close to 250GB of ProResLT video. Now, how could we get a total of about 1.3 terabytes backed up and onto the RAID as fast as possible?

While I am a big proponent of creating Camera Archives in Final Cut Pro X, I opted not to use them for this project, for two reasons. First, to get editing as fast as possible, we created our backups after copying the files to the RAID for editing. This way I could be editing while backups were still being made. With camera archives, you have to create the archive first, and then import from it, to get the full benefit of being able to restore from the archive. Second, because the cameras were recording continuously except for the intermission, each KiPro drive contained just 2 video files (albeit large ones, at over 100GB each!). So there really wasn’t any card structure to maintain.

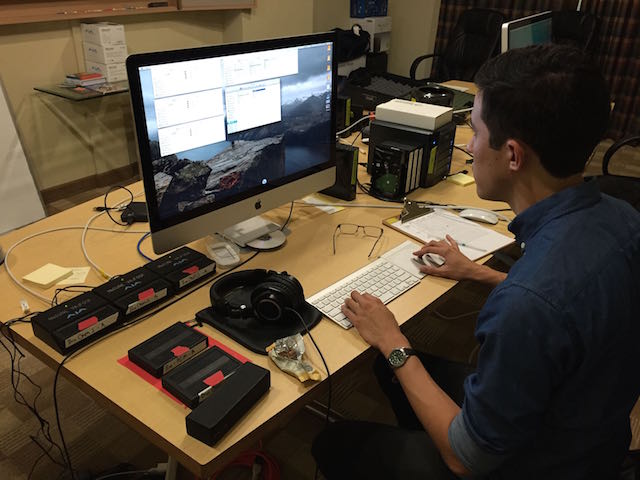

Figure 8: James at work ingesting media from the KiPro drives straight from the production truck.

Through our preproduction testing the day before the conference, we made a discovery that saved us hours of copying time. The KiPro drives were inserted into a dock that was connected by Thunderbolt to the iMac, and the R4 was also connected by Thunderbolt to the iMac. Copying one KiPro drive containing about 240GB of data took about 30 minutes. So if we did the copying serially, it would take 5 x 30 minutes, or about 2.5 hours, to copy the media – much too long! But we had a second KiPro dock and an unused Thunderbolt port on the R4, so we connected the second dock there and tried copying from both KiPro drives simultaneously – which took the same 30 minutes!

Intrigued, we were able to wrangle a third KiPro dock. But now we were out of Thunderbolt ports so we connected it to the iMac via USB and copied from 3 KiPro drives at the same time – and it still took the same 30 minutes, to copy over 700GB!

The reason this works is that the limiting factor in the data transfer is the speed of the KiPro hard drives: the Thunderbolt connection has lots of headroom, as does the write speed of the Pegasus RAID.
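The same idea, copying several source drives at once because each source drive rather than the bus or the RAID is the bottleneck, can also be scripted. The post doesn't say what tool performed the copies, so this is only a hypothetical Python sketch: the function names and the mount points in the commented usage line are invented, and `shutil.copy2` is used so file timestamps are preserved.

```python
import shutil
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def copy_volume(source: Path, dest_root: Path) -> list[Path]:
    """Copy every file on one source drive into its own folder under dest_root."""
    target = dest_root / source.name
    copied = []
    for src_file in sorted(p for p in source.rglob("*") if p.is_file()):
        out = target / src_file.relative_to(source)
        out.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src_file, out)  # copy2 also preserves timestamps
        copied.append(out)
    return copied


def copy_volumes_in_parallel(sources, dest_root, max_parallel=3):
    """Copy several source drives at once, one worker thread per drive.

    Threads fit because the work is waiting on disk I/O, not computing.
    max_parallel=3 mirrors the three docks that were available; any
    extra sources wait in the pool's queue until a worker frees up.
    """
    dest_root = Path(dest_root)
    sources = [Path(s) for s in sources]
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        results = pool.map(lambda src: copy_volume(src, dest_root), sources)
        return {src.name: files for src, files in zip(sources, results)}


# Hypothetical mount points, for illustration only:
# copy_volumes_in_parallel(
#     ["/Volumes/KIPRO_1", "/Volumes/KIPRO_2", "/Volumes/KIPRO_3"],
#     "/Volumes/PegasusR4/Day1",
# )
```

Raising `max_parallel` only helps while the destination and the connections still have headroom; past that point the copies would start competing with each other.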

There were no more docks available, but we were out of connection points anyway, and these docks cannot be daisy-chained. So it took 30 minutes to transfer the first 3 drives and 30 minutes for the next 2, which reduced the daily copy time from about 2.5 hours to 1 hour and saved us almost 5 hours over the course of 3 days.
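The time savings follow from simple batching arithmetic. This sketch assumes, as our testing showed, that a batch of drives copied together finishes in about the time of a single drive. The read rates at the end are rough estimates derived from the ~240GB-in-30-minutes figure, not measurements.

```python
import math


def copy_minutes(drives: int, parallel_slots: int, minutes_per_drive: float) -> float:
    """Total copy time when drives are copied in batches of `parallel_slots`.

    Assumes each batch finishes in the time of a single drive, i.e. the
    source drives (not Thunderbolt or the RAID) are the bottleneck.
    """
    batches = math.ceil(drives / parallel_slots)
    return batches * minutes_per_drive


serial = copy_minutes(drives=5, parallel_slots=1, minutes_per_drive=30)
batched = copy_minutes(drives=5, parallel_slots=3, minutes_per_drive=30)
saved_over_three_days = 3 * (serial - batched)
print(serial, batched, saved_over_three_days)  # 150 60 270

# Rough per-drive read rate: ~240GB in ~30 minutes (decimal units, MB/s).
per_drive_mb_s = 240_000 / (30 * 60)
three_drive_mb_s = 3 * per_drive_mb_s
print(round(per_drive_mb_s), round(three_drive_mb_s))
```

270 minutes is 4.5 hours, the "almost 5 hours" above. At roughly 133MB/s per drive, three drives at once ask the Thunderbolt connections and the RAID to absorb only about 400MB/s in total.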

Figure 9: Data being transferred from three drives simultaneously to the RAID. The other two in the red box are queued up to be copied.

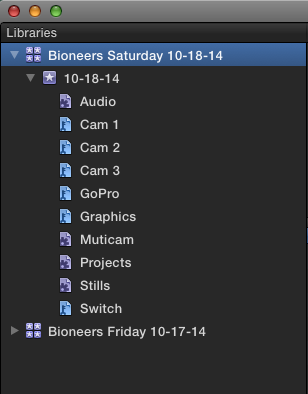

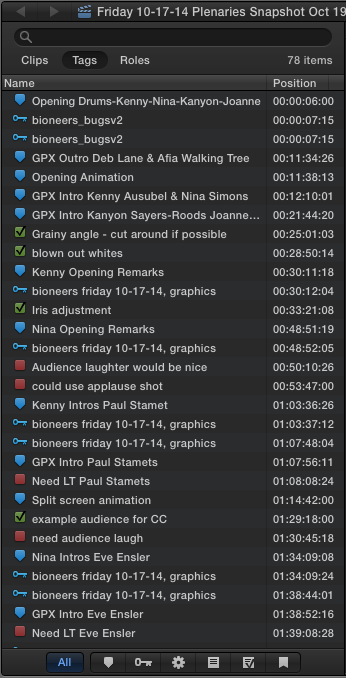

Once the data was copied to the RAID (and the GoPro footage was copied as well), James imported the clips into the library for that day, using “Leave in Place” to keep the libraries small and portable. He assigned camera names and angles, created keywords, and created the Multicam clips. Note that he had earlier created a library for each day of shoot, and created a set of smart collections that would automatically populate based on the clip metadata.

Figure 10: We set up a single library for each day’s shoot in advance. Each library contained a single event. Before importing any video, we created smart collections for Multicam clips, Projects, and Stills. Then we used keywords for each camera angle after import. We copied these collections to the other libraries so we didn’t have to recreate them each time.

The Sony cameras were jam-synced to each other, and the graphics and switcher feeds were sent the same matching timecode. So, rather than use audio for synchronization, James used timecode, and it took FCP X about 5 seconds to sync each 4-hour multicam clip perfectly. And because he had named the angles first, the separate clips for each camera were placed in the proper angles.
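Timecode sync is fast because it needs no analysis: each angle's position is simply its start timecode minus the earliest start. This is a conceptual sketch, not how Final Cut Pro X implements it. The start timecodes are invented, and it assumes 30 fps non-drop-frame timecode for simplicity (1080i material is often 29.97 fps drop-frame, which needs a different conversion).

```python
FPS = 30  # assumption: integer, non-drop-frame timecode for simplicity


def tc_to_frames(tc: str, fps: int = FPS) -> int:
    """Convert HH:MM:SS:FF timecode to an absolute frame count."""
    hh, mm, ss, ff = (int(part) for part in tc.split(":"))
    return ((hh * 60 + mm) * 60 + ss) * fps + ff


def angle_offsets(start_tc: dict[str, str], fps: int = FPS) -> dict[str, int]:
    """Frames to shift each angle so that identical timecodes line up.

    The angle that started recording earliest sits at offset 0.
    """
    starts = {angle: tc_to_frames(tc, fps) for angle, tc in start_tc.items()}
    earliest = min(starts.values())
    return {angle: frames - earliest for angle, frames in starts.items()}


# Hypothetical start timecodes for three angles.
offsets = angle_offsets({
    "Switch": "08:59:58:00",
    "Cam 1": "09:00:00:00",
    "Cam 2": "09:00:02:15",
})
print(offsets)  # {'Switch': 0, 'Cam 1': 60, 'Cam 2': 135}
```

Because this is pure arithmetic on a handful of numbers, the clip length doesn't matter, which is consistent with a 4-hour multicam clip syncing in seconds.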

Syncing the GoPro footage was unfortunately more involved. There was no timecode to use, and although the GoPro did record audio, FCP X took too long to sync using audio; in fact, we never found out how long, because we aborted the attempt after waiting several minutes. Instead, we found it was faster to create a new angle manually, drag all the GoPro clips into it, and then sync each clip individually using a visual reference. It was a laborious process with no obvious sync point to use, but still faster than waiting for Final Cut to analyze a 4-hour audio track. A follow-up note: I had forgotten that if you place a marker on each clip near the point where you want them to sync and then use audio synchronization, Final Cut will focus on the area around the markers and therefore sync much faster. I will certainly try this next time.
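The marker trick works because it shrinks the search. Final Cut's actual audio-sync algorithm isn't described here, so the following is only a toy sketch of the general idea: brute-force cross-correlation restricted to a window around the offset the markers suggest. The cost grows with the window size times the clip length, so a window of a few seconds instead of a 4-hour range skips most of the work. The "audio" is synthetic random data.

```python
import random


def best_lag(reference, clip, center, radius):
    """Return the lag in [center - radius, center + radius] at which `clip`
    best matches `reference` (clip[i] is compared with reference[i + lag])."""
    best, best_score = None, float("-inf")
    for lag in range(center - radius, center + radius + 1):
        score = 0.0
        for i, sample in enumerate(clip):
            j = i + lag
            if 0 <= j < len(reference):
                score += sample * reference[j]
        if score > best_score:
            best, best_score = lag, score
    return best


# Synthetic "audio": the clip is a 200-sample excerpt starting at sample 500.
rng = random.Random(42)
reference = [rng.choice((-1.0, 1.0)) for _ in range(2000)]
clip = reference[500:700]

# Markers placed near the same moment suggest a lag of about 495,
# so only 41 candidate lags are tested instead of thousands.
print(best_lag(reference, clip, center=495, radius=20))  # 500
```

Real audio sync would work on far longer signals and would likely use faster correlation methods, but the trade-off is the same: a good starting guess lets the search stay small.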

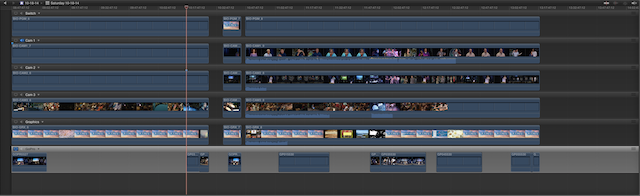

Figure 11: The Angle Editor. Each angle contains 4 hours of material. Note the short sections of GoPro footage at the bottom. Because each GoPro clip had to be synced manually, we only added GoPro clips where we knew we would use them (the dance/music performances).

After James copied all the data onto the RAID and set up the libraries (also on the RAID), we disconnected the RAID from the iMac and connected it to the Mac Pro. I could then start editing while James started backing up all the KiPro drives to the bare SATA drives (and made an additional temporary copy to the customer drives). The backup process was serial and took 30 minutes per drive, but the KiPro drives didn’t need to be back in the trailer until 8am the next morning, so that wasn’t an issue. While backing up data, James was able to help another group produce DVDs on the same iMac.

Copying in parallel to take advantage of the throughput of Thunderbolt and the R4, and relying on the power of the Mac Pro to avoid transcoding, meant that I could start editing 6 angles of HD material in real time within 2 hours of the end of each day’s 4-hour session.

A side note: for the third day’s session, I created proxies overnight on a bare SATA drive connected via a Thunderbolt dock so that James could edit 6 angles in real time on the iMac while I continued to edit the prior day’s show on the Mac Pro. I was able to place just the proxies on that drive by targeting it before transcoding. Once he had edited the show, I copied the library back to the RAID, switched back to original media, and exported.

The Edit

You might wonder what editing needs to be performed for an event that had a live switch cutting between cameras during the presentations. Couldn’t we just use the recording of the live-switched program? The reality is that a lot of small mistakes are made during a live switch: mistakes that aren’t obvious to an audience that is more focused on the live presentation itself rather than the screen above the presenters. But these small errors are much more obvious when watched in isolation. For example, the switcher might cut to audience applause too late, or cut to a camera that is still adjusting exposure or focus, or simply not cut to the best camera angle available. In addition, there are many parts of a live presentation that can be removed or tightened to make it more compact and easier to view: downtime between presenters, fixing a bad microphone, venue-specific instructions, etc.

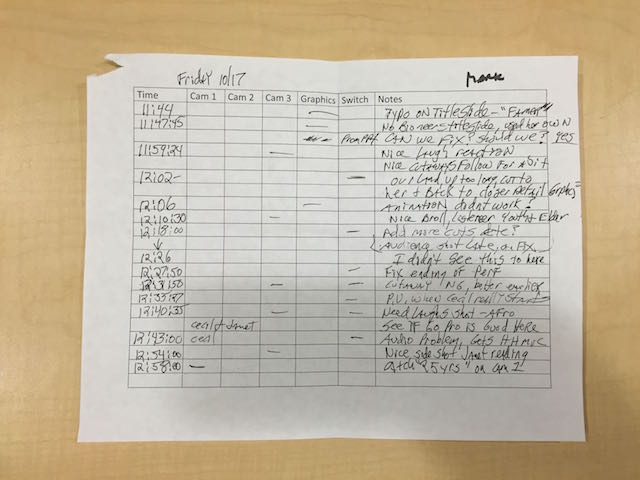

During the event, the producer takes notes on issues he catches that need to be fixed in the edit. Then the editor needs to watch the entire show carefully and make additional fixes. So although I used the switched angle as my primary angle and kept it about 80% of the time, there were many changes to make. In addition, I needed to add intro graphics and lower thirds for each presentation, watermark the entire show, color correct to match the cameras, and adjust the audio from one speaker to the next.

The producer notes included timecode that allowed me to quickly jump to the right location to fix the cut. Then, because I could play back all 6 angles in real time, I could watch the live switched show while checking out all the other angles. Each 4-hour day of presentations (a 4-hour timeline!) took me about 8 hours to work through.

Figure 12: A sample of producer notes from about an hour of material. I used the timecode to jump to the correct spot and the dash told me which angle he was interested in.

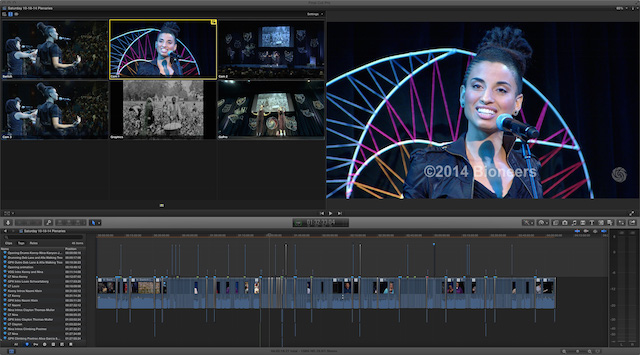

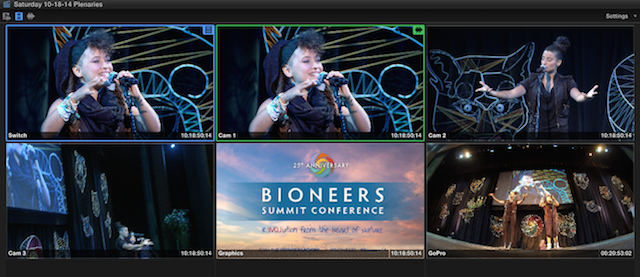

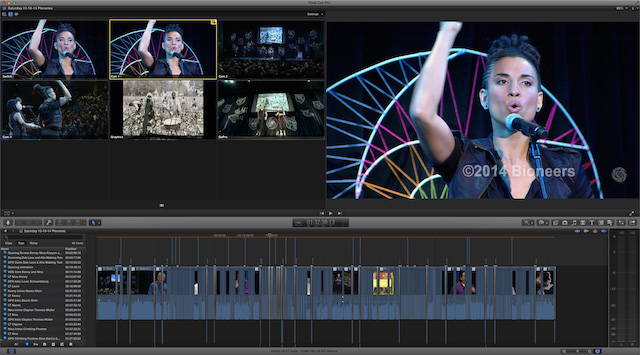

Figure 13: The Angle Viewer. Note the customized Angle Names at the bottom left of each angle which make it easy to identify the camera source. Also note the timecode at the bottom right that matches for all angles except the GoPro footage. The Switch is the live switched angle that I used as the starting point of the edit, and I made video-only switches and cuts, keeping the single clean audio from Cam 1 the entire edit.

Here are some strategies I employed during the edit:

Selective disclosure: I had just one Apple Thunderbolt display, so to maximize screen real estate, I used different layouts depending on the current task: organizing, editing, trimming, color correction, or audio work. For example, I would close both the Libraries List and the Event Browser while editing, since I didn’t need regular access to more source material. And when color correcting, I would close the Angle Viewer, which not only freed up space for the Video Scopes and Color Board but also improved performance, because I was playing back just a single stream.

Figure 14: The layout I used for editing with the Libraries list and Event Browser closed to maximize screen real estate. I kept the Timeline Index open as I used it constantly to jump to specific spots in the edit.

Double-speed playback: With our tight schedule, after my first pass, I wanted to check portions of presentations at double speed. Even though the Mac Pro played all 6 angles flawlessly in real time, when I tapped the L key to speed up playback, I lost audio. The solution was easy: I closed the Angle Viewer. By this point in the edit I had memorized the angles, so I could easily switch an angle or cut to an angle using keyboard shortcuts, or pop the Angle Viewer open quickly when needed. Also, choosing the smallest clip appearance eliminated thumbnails and audio waveforms, which improved high-speed playback.

Markers and the Timeline Index: I love these tools. The combination makes it easy to track your work. I used standard markers to identify the intros and outros of each presentation as well as the location of all the lower thirds. I used To-Do markers to identify all the producer’s notes. Then, with the Timeline Index, I could quickly jump to any individual presentation, fix a lower-third spelling error, or show the producer the fixes I had made.

Figure 15: The Timeline Index with just markers selected. I could jump to the start of any of the dozen or so presentations in the 4-hour timeline just by clicking the marker. Note the grey horizontal line that indicates the current playhead location.

Figure 16: Here I’ve enabled all tags so you can see keywords, to-dos, and completed to-dos.

Optimizing GoPro Footage: 5 streams of ProRes LT at 1080i were no problem for the Mac Pro without transcoding, but adding the GoPro angle sometimes caused choppy playback. So I optimized all the GoPro footage overnight, which took a significant additional chunk of storage space (close to a terabyte). I knew I could delete this extra media at any time if I had to make room, which didn’t turn out to be necessary (but it was close!).

Reimporting folders: this is a great trick. Although we were provided with the opening title graphics and lower thirds, many of them were incorrect and we had to make new ones ourselves. I had originally imported the folders of these graphics files as keyword collections so they would be easy to locate. Let’s say I have a folder of 40 graphics files, and I’ve updated 4 of them. I could locate those four and drag them to the right keyword collection. But even better, if I just reimport the folder, FCP X is smart enough to import only the new files and tag them with the keyword collection. Selecting the keyword collection and sorting by Content Created pops them to the top of the browser for a quick replace edit.

Field rendering: those high-end Sony studio cameras shot in 1080i – that’s right, interlaced footage – and the edit was destined for web delivery. Nasty jaggies on graphics and interlace artifacts in video can be hidden from view, because by default FCP X displays only a single field. I needed to remember to enable Field Rendering in the Viewer to check the graphics and video thoroughly before export.
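Why a single-field display hides the problem can be shown with a toy model. This sketch is a simplification that ignores how Final Cut actually scales and deinterlaces for display, and its numbers are invented. Each row of a frame is represented by the horizontal position of a moving edge, and the two fields were captured at different instants. Weaving the fields together exposes the offset between them as "combing", while line-doubling just one field does not.

```python
def split_fields(frame):
    """Split an interlaced frame (a list of rows) into its two fields."""
    return frame[0::2], frame[1::2]


def line_double(field):
    """Show one field at full height by repeating each of its rows."""
    shown = []
    for row in field:
        shown.extend([row, row])
    return shown


def comb_amount(frame):
    """Largest jump between adjacent rows; big values look like combing."""
    return max(abs(a - b) for a, b in zip(frame, frame[1:]))


# Toy frame: each row holds the x-position of a moving edge. The two fields
# were captured at different instants, so their rows disagree.
woven = [10, 14, 10, 14, 10, 14, 10, 14]
first_field, _ = split_fields(woven)

print(comb_amount(woven))                     # 4: visible combing
print(comb_amount(line_double(first_field)))  # 0: single-field view hides it
```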

Queued exports: I kept each day in a single, 4-hour timeline. The client wanted the presentations broken out separately, so I set ranges for each presentation and exported them one after the other, letting them queue up, essentially creating a batch export of dozens of files.
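Turning the intro and outro markers into a batch of export ranges is mechanical bookkeeping. I set these ranges by hand in Final Cut Pro X, so this is only a hypothetical sketch: the `Marker` class, the "intro"/"outro" naming convention, and the times are all invented, and the exports themselves would still be queued in Final Cut.

```python
from dataclasses import dataclass


@dataclass
class Marker:
    seconds: float
    name: str  # hypothetical convention: "<Presenter> intro" / "<Presenter> outro"


def presentation_ranges(markers):
    """Pair each intro marker with the next outro marker to form export ranges."""
    ranges = []
    open_intro = None
    for marker in sorted(markers, key=lambda m: m.seconds):
        if marker.name.endswith(" intro"):
            open_intro = marker
        elif marker.name.endswith(" outro") and open_intro is not None:
            title = open_intro.name[: -len(" intro")]
            ranges.append((title, open_intro.seconds, marker.seconds))
            open_intro = None
    return ranges


markers = [
    Marker(3605.0, "Speaker B intro"),
    Marker(0.0, "Speaker A intro"),
    Marker(3540.0, "Speaker A outro"),
    Marker(6900.0, "Speaker B outro"),
]
for n, (title, start, end) in enumerate(presentation_ranges(markers), 1):
    print(f"{n:02d} {title}: {start:.0f}s to {end:.0f}s")
```

Sorting by time first means markers can be added in any order during the edit; an outro with no preceding intro is simply ignored.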

What Worked Well

Here’s what I was most pleased with:

• Speed: We were able to start editing within two hours of the end of the morning’s shoot thanks to the throughput of Thunderbolt and the Pegasus R4 and the performance of the Mac Pro which eliminated the need to transcode media. Exports were also extremely fast (2X the speed of my MacBook Pro).

• Organization: Smart collections and Keyword collections made it fast and easy to locate material.

• Editing: Multicam editing was a joy: I was able to cut 6 angles of 1080 ProResLT footage on the Mac Pro with real time playback in 4-hour color-corrected timelines with graphics, without ever rendering.

• Markers and the Timeline Index made locating presentations and graphics and working through producer notes fast and easy.

What Didn’t Work So Well

We did have a few issues. Specifically:

When creating proxy footage for my assistant editor to cut on the iMac, Final Cut got confused about how much space GoPro proxy footage would take up. At first, it would not let me create proxies, warning me that they would fill the drive, which was not even close to the case.

At one point I decided to delete about 1TB of render files from completed projects to make room on the RAID. In the middle of the delete process, Final Cut froze. I had to force quit, Final Cut wouldn’t relaunch, and I had to hard reboot the Mac Pro.

One day, one of the projects would not open, and we had to restore from a backup. Luckily, Final Cut backs up libraries every 15 minutes. By the way, because we used external media, our libraries were very small, which allowed us to store them in the cloud as additional offsite backups.

Wish List

There are a few features that would have made this project easier:

Match frame: I’d like to be able to match frame from the multicam clip back to the original angle in the Browser in order to look through that clip for something nearby, for example, to grab an audience reaction shot.

3 issues with markers:

I really wanted to be able to move markers around. They disappear if I ripple an edit, or make a cut on a marker. It would be so helpful to be able to simply drag them left or right.

Jumping to markers by selecting them in the Timeline Index or using the keyboard shortcut kills existing In or Out points, so you can’t use markers to set a range, which would be very useful.

Finally, transitions placed over markers make them disappear from the timeline index – this must be a bug.

Conclusion

Overall I was tremendously pleased with how smoothly the post process went. With a minimum amount of resources (both human and hardware), we were able to produce a professional product in an extremely short timeframe.

Disclosure

I produce tutorials for Final Cut Pro X and Motion that are sold through Rippletraining.com, and plugins for Final Cut Pro X that are sold through FxFactory.com.
