
Feature: Does Taxonomy Matter in a New World of Search and Discovery?

By Suzanne BeDell and Libby Trudell

In a Google world, where people are accustomed to entering a few keywords into a web search box and retrieving relevant answers, even information professionals wonder if the traditional library information sources’ reliance on controlled vocabularies remains a viable, worthwhile, and cost-effective strategy.

Similar concerns arise with enterprise search. As many organizations begin, or extend, the process of building or choosing new platforms and applications to support search across the enterprise, information professionals assess the role of controlled vocabularies. How important are they in this world of keyword search?

At the SLA 2010 Annual Conference, a panel discussion that I led took on the pivotal question, “Does Taxonomy Matter in a New World of Search and Discovery?” Panelists (Jabe Wilson, Elsevier; Tim Mohler, Lexalytics; and Tyron Stading, Innography) considered how the industry is evolving, presenting their opinions on the value of investing in the creation of structured data. Do taxonomies still add value when keyword searching seems sufficient to many end users?

AIDING PRECISION

Information professionals and librarians rely on classification and controlled vocabularies to aid precision search; abstract and index (A&I) publishers make investments in indexing and thesauri to add value to their products. Given the costs of quality indexing, is an alternative technology available to provide similar value for achieving greater precision in search results? Many organizations are experimenting with semantic technologies in hopes of automatically extracting the meaning inherent in documents and supplementing, or even replacing, the human editorial process.

Although information professionals and many publishers believe in the power of indexing, most end users remain satisfied with simple text search and don’t recognize a need for controlled vocabularies. This article will look at the current state of the industry and will explore the outlook for blending taxonomy and other tools in the new world of search.

INDUSTRY GROWTH DRIVERS

Years ago, we used to speak of primary, secondary, and tertiary publishing (primary research journals, abstracting and indexing databases, and aggregated online search services) as driving the core of the information publishing industry.

All three elements remain important, but increasingly, growth comes from a new layer of analysis and data mining. This covers many applications. At its core, however, lies the use of current and past content, in conjunction with statistical, structural, or other analytics models and intelligence mining methods. Examples include Collexis (acquired by Elsevier in June 2010), Innography, LexisNexis TotalPatent, and Thomson Reuters’ ProfSoft. These hot new tools are driven by structured content.

Continues at http://www.infotoday.com/online/sep10/BeDell-Trudell.shtml

Related articles by Zemanta

- Taxonomy: The New Hip (digitalassetmanagement.org.uk)

- The logic of document capture (digitalassetmanagement.org.uk)

- Controlled Vocabularies for use with Digital Asset Libraries (daydream.co.uk)

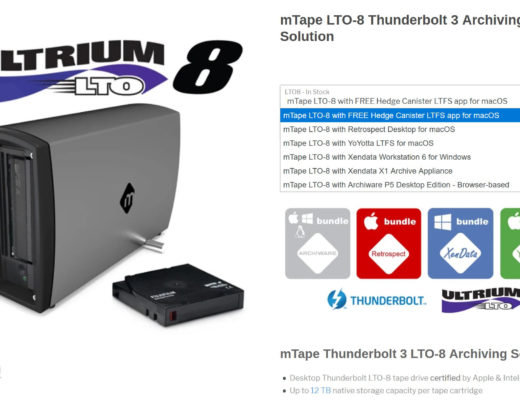

Filmtools

Filmmakers go-to destination for pre-production, production & post production equipment!

Shop Now