In our October 2014 Mailbag, readers asked questions about editing clips from different angles, the best approach to LUTs, Premiere Pro logistics and plenty more. Art Adams, Bruce Johnson, Scott Simmons and Adam Wilt weighed in to answer most of the questions this month, and you can see what they all have to say below.

If you have any questions you’d like to see our writers answer in an upcoming mailbag or as a Roundtable article, email them to us at [email protected] or tweet us with the hashtag #askpvc to have your question answered by an expert.

Q: With video and motion and OTT being the new darlings of marketing, I believe that there is a market for the 30+ years of experience I have in video and film production. Producing/Directing/Writing/Shooting/Editing – I have the usual quiver of skill sets for a network/corporate/cinema freelance/employee.

Where can I begin to market myself? I do not mind traveling.

I guess the question that comes to mind for me is this: if you have the usual quiver of skills, what makes you different? There are lots of people out there with the usual quiver of skills, and if you just have more of the same, you'll have a much harder time marketing yourself successfully.

Not long ago I worked with a branding consultant, and it's some of the best money I've ever spent. It's not enough to be “just another producer/director/writer/shooter/editor” because (1) you're hardly alone, and (2) many people who might hire someone like you are suspicious of anyone who does all that, and assume that you don't do any one thing terribly well.

Bruce Johnson: I started out in Orlando, FL, and while it was always a rush to be working in commercial TV, living in Florida just didn't do it for me. So I got a copy of Places Rated Almanac, pored over it, and decided a college town would probably suit me. As luck would have it, a shooter gig eventually opened up at the public TV station in Madison, and I managed to get the job. That was 27 years ago.

Of course, the tools of job-hunting have changed a bit since then. I found out about the job in Madison by “borrowing” the sales department’s copy of Broadcasting Magazine and reading the classified ads in the back. Today, all the jobs in the world are available at your fingertips. But I still stand by the message of “know what you want from life, go to where you can find that, and make the rest fall into place.”

Art Adams: You should also ask yourself the following questions:

(1) What market am I targeting? Network, corporate and cinema production are all wildly different markets requiring different skills. You can’t target all of them with the same business plan and marketing tools. Either choose one or get ready to run three marketing campaigns. (And doing this as an employee puts you in yet another niche, probably corporate, which would require a fourth kind of campaign.)

(2) Are you going for the high end or low end? A lot of hyphenates do it all and do so cheaply and in volume. You make less but you work more. You also tend to compete on price over quality. Do you want to work a lot but constantly fight to be the lowest bidder, or do you want to specialize, possibly work less often but for more money?

(3) What do you bring to the table that no one else brings? In other words, if you have the usual quiver of skills, why should anyone choose you over the next person? Until you can articulate that, you're not going to get anywhere quickly.

Where you market yourself depends on how specific you can be about what you want to do. Where you'd go for cinema projects is very different from where you'd land for corporate work, and the kind of corporate work you'd do depends on where you're based. (Some areas are known for high technology, others for medical and pharmaceutical, etc.)

Once you answer these questions about your goals, I think you'll be able to quickly figure out where to market yourself. If you're willing to do anything, you have a lot more options but a lot less that differentiates you from the competition. The more specific you can be about yourself and what you want to do, the more you narrow down not just who to approach and where to be, but also where you stand amongst the competition.

Q: I have two 1 hr. clips from different angles, and am trying to use the FCPX multicam for them and I got two clips combined in one angle viewer. Are they supposed to be in separate angles? Would you please tell me what I am doing wrong?

Adam Wilt: I’m not entirely sure what you mean by “two clips combined in one angle viewer.” Do the clips play one after the other in a single “source monitor” window, rather than side-by-side in two separate windows?

If you’ve assigned different camera angles to the clips, selected them both, and then created a “New Multicam Clip” (using either the right-click menu or File > New > Multicam Clip…), you’ll get what looks like a single new clip.

If you double-click the multicam clip, it’ll open up in its own timeline (the “Angle Editor”). In this timeline, you can add, delete, or re-order angles, adjust clip-to-clip sync, and make other tweaks.

If you don’t see both original clips in the Angle Editor, you may not have had both of them selected when you made the multicam clip. Drag in the missing clip and slide it into sync. Alternatively, FCPX may have put them one after the other instead of one above the other (perhaps because one camera’s timecode started after the other’s timecode ended, or FCPX couldn’t find any common audio sync). Simply drag them into the right relationship, one above the other, and line ’em up in sync.
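The timecode case Adam describes comes down to simple arithmetic: if one camera's timecode range ends before the other's begins, there is no shared stretch of timecode to line the clips up against. The sketch below is a hypothetical helper (nothing like it is built into FCPX) that assumes non-drop-frame timecode at a whole-number frame rate and reports how many frames two clips share.

```python
# Hypothetical helper, not part of FCPX. Assumes non-drop-frame
# timecode ("HH:MM:SS:FF") at a whole-number frame rate.


def tc_to_frames(tc: str, fps: int) -> int:
    """Convert non-drop-frame timecode to an absolute frame count."""
    hh, mm, ss, ff = (int(part) for part in tc.split(":"))
    if ff >= fps:
        raise ValueError(f"frame field {ff} is invalid at {fps} fps")
    return ((hh * 60 + mm) * 60 + ss) * fps + ff


def overlap_frames(start_a: str, dur_a: int, start_b: str, dur_b: int, fps: int) -> int:
    """Return how many frames two clips share in timecode (0 = no overlap).

    Durations are in frames; each clip covers [start, start + duration).
    """
    a0 = tc_to_frames(start_a, fps)
    b0 = tc_to_frames(start_b, fps)
    a1, b1 = a0 + dur_a, b0 + dur_b
    return max(0, min(a1, b1) - max(a0, b0))


if __name__ == "__main__":
    fps = 24
    one_hour = 60 * 60 * fps  # a 1-hour clip, in frames

    # Camera B started rolling 10 minutes after camera A: 50 minutes overlap.
    print(overlap_frames("01:00:00:00", one_hour, "01:10:00:00", one_hour, fps))

    # Camera B's timecode starts after camera A's ends: nothing to line up.
    print(overlap_frames("01:00:00:00", one_hour, "02:30:00:00", one_hour, fps))
```

The first call prints 72000 (50 minutes at 24 fps); the second prints 0, the situation in which clips may end up placed one after the other and have to be synced by audio or by hand.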

If neither of those fixes your problem, write back with more detail!

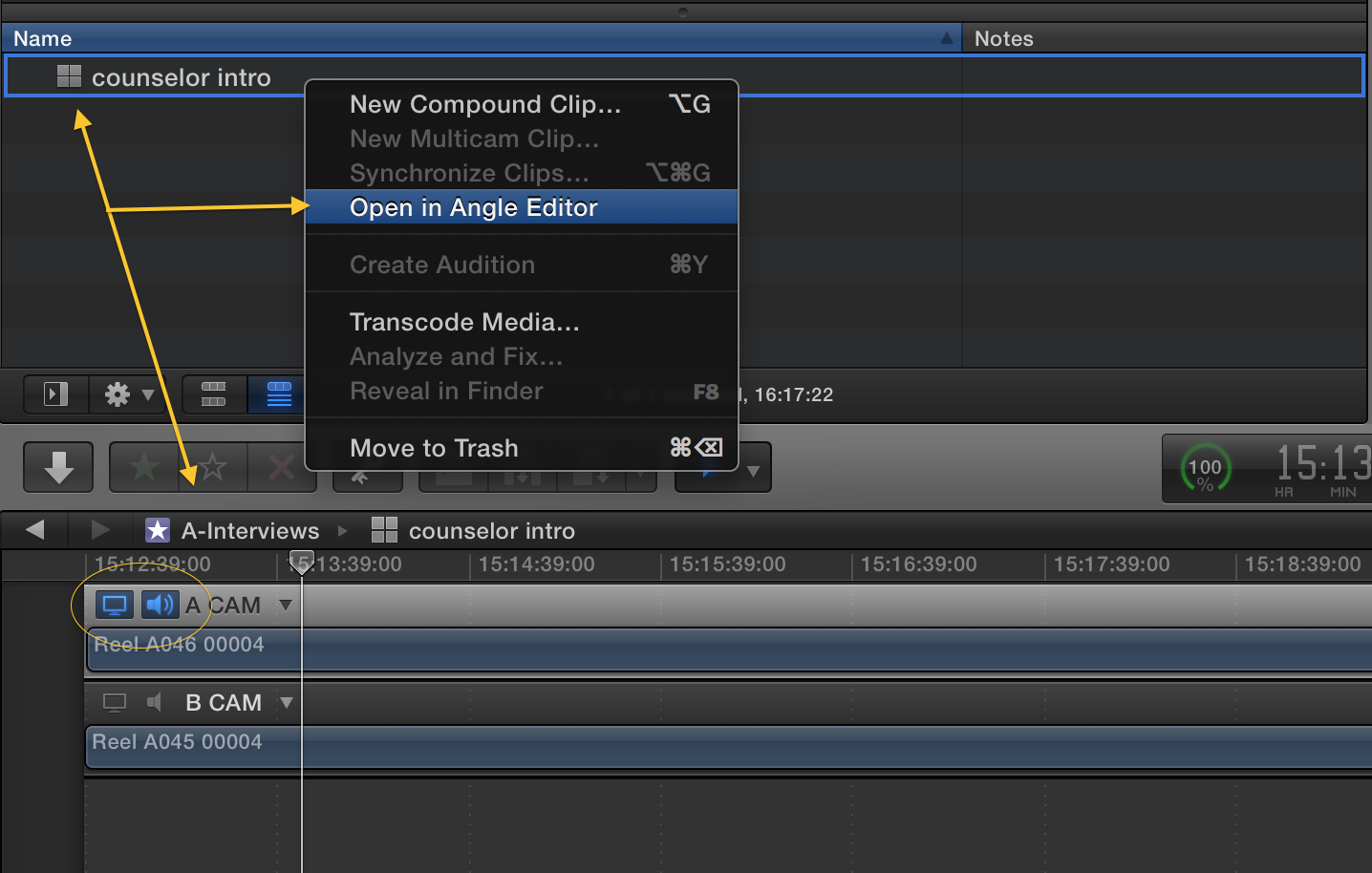

Scott Simmons: The key to working in FCPX multicam is getting the clips in sync. Most likely you’ll use the audio syncing capabilities and let FCPX compare the audio waveforms for sync but this is dependent on having some type of scratch audio (at the very least) for FCPX to sync with. If sync works you’ll get a new multicam clip. With that created you can open that multicam clip in the FCPX Angle Editor where you can add or remove angles, check sync and generally do most anything to individual clips like add color correction. See the image below for what to look for when opening a clip in the Angle Editor. This clip has two angles (A CAM and B CAM) and in this image I was checking the A CAM video in audio as you can see via what is circled.
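Comparing audio waveforms for sync is commonly done with cross-correlation: slide one recording against the other and keep the offset where the two line up best. Apple doesn't document the exact method FCPX uses, so treat this as a toy sketch of the general technique. It brute-forces every lag in a small window over short lists of samples; real tools work on full-length audio and typically use FFT-based, normalized correlation. The 48 kHz sample rate is an assumption for the seconds conversion.

```python
from typing import Sequence


def correlation(ref: Sequence[float], other: Sequence[float], lag: int) -> float:
    """Sum of ref[n + lag] * other[n] over the samples where both exist."""
    total = 0.0
    for n, sample in enumerate(other):
        m = n + lag
        if 0 <= m < len(ref):
            total += ref[m] * sample
    return total


def best_lag(ref: Sequence[float], other: Sequence[float], max_lag: int) -> int:
    """Return the lag in [-max_lag, max_lag] with the highest correlation.

    A positive result means a sound at sample n of `other` appears at
    sample n + lag of `ref`, i.e. `other` started recording `lag`
    samples after `ref`.
    """
    return max(range(-max_lag, max_lag + 1), key=lambda lag: correlation(ref, other, lag))


if __name__ == "__main__":
    # Toy "clap": a sharp spike followed by a smaller negative swing.
    a_cam = [0.0] * 20
    a_cam[12], a_cam[13] = 1.0, -0.5
    b_cam = [0.0] * 20
    b_cam[5], b_cam[6] = 1.0, -0.5

    lag = best_lag(a_cam, b_cam, max_lag=10)
    print(lag)  # 7: B CAM started rolling 7 samples after A CAM

    sample_rate = 48_000  # assumed audio sample rate
    print(lag / sample_rate)  # the same offset in seconds
```

This is also why scratch audio matters: with no common sound in both recordings, no lag stands out from the rest and the sync has nothing to lock onto.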

Q: I work as a senior technical manager at a large DI post house in Chennai, India. We use Quantel Pablos for our color grading work, and we have seven Quantel suites.

With the Pablo we have the option of applying a viewing LUT in software; however, this causes the waveforms on the Omnitek to change and show the LUT-applied signal. Our colorist prefers instead to apply the LUT in the reference monitor, which in our case is a Dolby PRM.

I would like your thoughts on which of the two methods above is the better way to grade.

We have custom LUTs matching the final DCP exhibited in theaters.

Art Adams: When I’m shooting I always run a LUT-modified signal through my waveform monitor. There was a time when I was concerned with checking the log or “raw” signal directly for over- and underexposure, but over time I realized that the application of a LUT is always a destructive process and I’m better off critically checking the LUT-modified image because that’s the closest representation to the final product that I have access to when shooting.

The same thing applies in a DI suite: if you’re grading through a LUT, why would you only monitor the waveform signal before the LUT? The critical part of the process comes after the LUT, when you’ve bent the image data into new and interesting shapes, and that’s where you need to see if what you’ve done is “legal,” or if you’ve clipped or crushed image data in what will be your final image.

At that point it doesn't really matter what the originating image data looks like. It's not like you're going to be able to go back and remake it. You have what you have and that's what you have to work with, period. All that matters is the image that you create through the LUT and through your grading process. That's what you need to monitor with your waveform, particularly as eyes get tired and deceptive during the grading process, while a waveform monitor never lies.

I’d suggest that your LUT should be applied in software so that it affects the image going to both your Omnitek and your reference monitor. Why would you want to do otherwise? Monitoring the image before processing makes no sense to me as it will never change. It’s the processed image you need to worry about.
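Art's point that a LUT can clip or crush data the source never did can be shown with a toy example. The sketch below applies a hypothetical, deliberately contrasty 1D LUT (the six table values are invented for illustration) to a handful of normalized code values using linear interpolation, then counts how many values sit at the floor or ceiling before and after the LUT. Real grading LUTs are usually 3D and a real scope measures far more than this, so it only illustrates why the measurement belongs after the LUT.

```python
from typing import Sequence

# Hypothetical contrasty LUT: six evenly spaced entries covering
# inputs 0.0, 0.2, 0.4, 0.6, 0.8 and 1.0 (values invented for illustration).
CONTRAST_LUT = [0.0, 0.0, 0.25, 0.75, 1.0, 1.0]


def apply_1d_lut(value: float, lut: Sequence[float]) -> float:
    """Map a normalized 0..1 value through a 1D LUT with linear interpolation."""
    value = min(max(value, 0.0), 1.0)
    pos = value * (len(lut) - 1)
    i = int(pos)
    if i >= len(lut) - 1:  # an input of exactly 1.0 lands on the last entry
        return lut[-1]
    frac = pos - i
    return lut[i] + (lut[i + 1] - lut[i]) * frac


def clipped_fraction(values: Sequence[float], low: float = 0.0, high: float = 1.0) -> float:
    """Fraction of values crushed to `low` or clipped to `high`."""
    hits = sum(1 for v in values if v <= low or v >= high)
    return hits / len(values)


if __name__ == "__main__":
    # Flat, log-like source values: nothing near black or white.
    source = [0.15, 0.30, 0.45, 0.60, 0.85]
    graded = [apply_1d_lut(v, CONTRAST_LUT) for v in source]

    print(clipped_fraction(source))  # 0.0: the pre-LUT signal looks safe
    print(clipped_fraction(graded))  # 0.4: two of five values hit the limits
```

Measured before the LUT, this toy source looks comfortably legal; measured after it, 40% of the values are pinned at black or white, which is the information Art wants the waveform to show.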

Adam Wilt: Your colorist may want to see what he’s doing to the signal before the LUT, and that’s perfectly valid, but it’s also necessary to have the objective picture of the post-LUT signal that the ‘scopes give. If I were delivering DCPs with those LUTs baked in, I would insist on being able to monitor the post-LUT signal on the Omnitek, and not trust the subjective impression I get from looking at the image on the Dolby display.

I'm not familiar with Pablo, but I would hope it lets you toggle an output LUT on and off reasonably easily. If that's not practical (or if it would be too disruptive for the client to see the image on the Dolby change), have you considered an outboard LUT box like the Fujifilm IS-100 or IS-mini? These allow you to take the “clean” signal out of Pablo, apply the LUT, and feed that to the monitor. The Omnitek can be switched between the Pablo's “clean” feed and the LUT box's post-LUT signal, so the colorist can see his choice of signals without affecting the image on the display.

Q: I am using Adobe Premiere Pro CS6 and have installed the latest upgrades. I am using a Mac with a USB mic.

I have an audio track selected in the timeline by having the speaker on it, but in the multi-track mixer with the channel selected, the “enable track for recording” button will not select. I have checked inputs, etc. but the multi-track mixer also doesn’t seem to recognize the USB mic or any other device at the very top . . .

Could you shed some light?

Jeremiah Karpowicz: First things first: you mention you've installed the latest upgrade for Premiere Pro CS6, but that would mean you're using Premiere Pro CC, which doesn't seem to be the case. So I'll focus on the information most relevant to you if you're working in CS6 rather than CC.

You really want to use the computer's regular mic input rather than USB. But you can also try setting the system default input to your USB mic and setting Premiere's audio to “System Default Input/Output”.

This behavior may have been fixed or changed in CC, so the right solution really depends on which version you're running.

Scott Simmons: I'm currently using Adobe Premiere Pro CC, and since you said you have installed the latest upgrades, I'm going to guess you're actually on the CC update as well. To enable PPro to record a voice-over to an audio track, go to Preferences > Audio Hardware and choose your input device from the Adobe Desktop Audio pop-up menu. With that input selected, PPro should be able to record to an audio track.

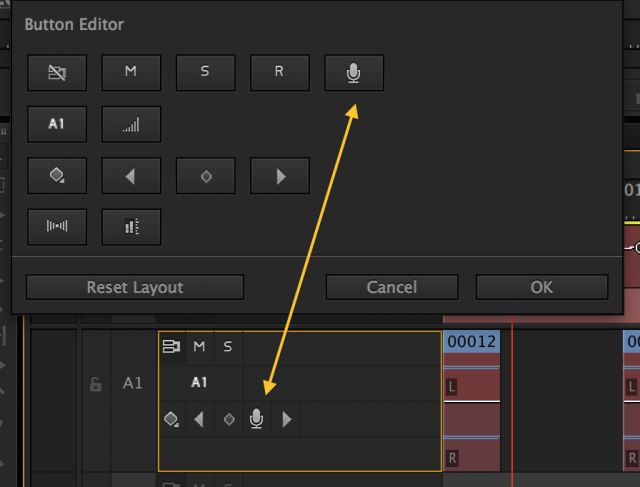

While you can use the Audio Track Mixer by turning on Enable track recording for the desired track, clicking the red Record button at the bottom and then hitting play in the timeline to record, I like to add the Voice-over Record button to an audio track and click that to make the recording. With that you target a single track with one click and get a countdown before recording. If you don’t see the Voice-over Record button right+click on the audio track controls and choose Customize. Then drag the Voice-over Record mic from the button list to the audio track control header. Then you can click that to record right to that selected audio track. See the image below about adding that button to a track.

Q: I'm building a PC workstation for editing HD with an Intel Xeon and two Nvidia cards. I'm going to run Adobe Premiere. Do I need a RED ROCKET card to play R3D files from RED cameras in real time?

Adam Wilt: Over two years ago I ran Premiere Pro CS6 on a 2009 Mac Pro with dual 2.26 GHz quad-core Xeons. I had previously installed a RED ROCKET for real-time R3D playback in FCP 7. CS6 ran very well, too… so well that I didn’t notice for a couple of weeks that the ROCKET card wasn’t enabled in CS6! Premiere’s software decode, spread across all eight cores, was keeping up with realtime playback. I then threw whatever switch I needed to throw to enable the ROCKET, and it worked, but I didn’t notice any vast performance difference.

A couple of caveats:

- PPro has (or had; I haven't drunk the CC Kool-Aid, so my knowledge stops at CS6) variable resolution settings for realtime playback: 1/16, 1/8, 1/4, 1/2, full-res. As I moved up the resolution ladder, PPro CS6 had a tendency to start bogging down, even with HD sources. I think I was doing 1/8 or 1/4 res RED playback in software and was happy with that for editing purposes. Threads on REDUser suggest that you can go to higher-res realtime playback settings with a ROCKET than without, and your full-res renders will be faster, but whether you “need” that is entirely up to you.

- I had the original ROCKET and was dealing with 4K and QHD M and M-X files. Nowadays there’s the ROCKET-X accelerator and you’ve got 5K and 6K files to deal with, so the speed tradeoffs may have changed entirely.

Based on all that, I’d suggest getting a couple of hefty Xeons and trying the software-only route first. If you find that Premiere CC starts bogging down on R3D playback at your desired resolution, or you need more speed in full-res renders, you can always add a ROCKET or ROCKET-X card later.

Have a question you’d like to see answered by a PVC Expert? Send it to us at [email protected] or tweet us with the hashtag #askpvc