ReadWriteWeb: The Age of Exabytes: Tools & Approaches for Managing Big Data.

We are experiencing a big data explosion, a result not only of increasing Internet usage by people around the world, but also of the connection of billions of devices to the Internet. Eight years ago, for example, there were only around 5 exabytes of data online. Just two years ago, that same amount of data passed over the Internet in a single month. And recent estimates put monthly Internet data flow at around 21 exabytes.

This explosion of data – in both its size and form – creates a multitude of challenges for both people and machines. No longer is data something accessed by a small number of people. No longer is the data that’s created simply transactional information; and no longer is the data predictable – either as it’s written, or when, or by whom or what it’s going to be read. Furthermore, much of this data is unstructured, meaning that it does not fit neatly into a predefined schema or database. How can this data move across networks? How can it be processed? The size of the data, along with its complexity, demands new tools for storage, processing, networking, analysis and visualization.

The Age of Exabytes explores how technologies are evolving to address the needs of managing big data, from innovations in storage at the chip and data center level, to the development of frameworks used for distributed computing, to the increasing demand for analytical tools that can glean insights from big data in near real time.

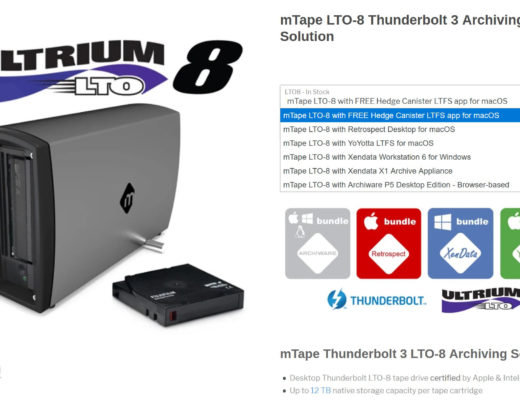

Filmtools

Filmmakers go-to destination for pre-production, production & post production equipment!

Shop Now