Olympic codec rankings. AVCHD is the most accurate… pic.twitter.com/ncRykgBznq

— Shane Ross (@comebackshane) July 16, 2014

Everything I know about codecs could fit on the head of a dime. That’s after compression, of course. Prior to compression I think we’re talking a knowledge base that’s about the size of a deck of cards.

There are some people (Adam Wilt) who know enough about codecs that they could design and build them. I know just enough to be dangerous and keep myself out of trouble, mostly. Here’s what I know, in a compressed nutshell.

The higher the bitrate the better the image

This seems like a no-brainer, and it is, but keep in mind that there’s considerable pressure to keep bitrates down not just in post but especially on the set, where data has to be backed up before being shipped off to editorial. That often means spending a few hundred dollars more while a data manager goes into overtime making sure all the footage lands, intact, on two or more drives. Some producers would rather spend less on overtime and settle on images that are good enough rather than excellent, and indeed there are projects where good enough really is good enough. (The trick is to recognize those projects when they come along and act accordingly.)

As transfer rates increase due to technologies like Thunderbolt this is less of an issue, and hard drives seem to get cheaper by the day.
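To get a feel for why bitrate turns into overtime, here is a back-of-the-envelope sketch. The bitrates and the 100 MB/s sustained drive speed are hypothetical round numbers, not the specs of any particular camera or drive, and the function names are made up for illustration.

```python
def gigabytes_per_hour(bitrate_mbps):
    """Size of one hour of footage at a constant bitrate, in decimal GB."""
    return bitrate_mbps * 3600 / 8 / 1000


def copy_minutes(size_gb, drive_mb_per_s, copies=2):
    """Minutes to write `copies` copies one after another at a sustained speed."""
    return size_gb * 1000 / drive_mb_per_s * copies / 60


# Hypothetical bitrates: a light long-GOP codec vs. a heavy mastering codec.
for mbps in (50, 440):
    size = gigabytes_per_hour(mbps)
    minutes = copy_minutes(size, drive_mb_per_s=100)
    print(f"{mbps} Mb/s: {size:.1f} GB per hour shot, {minutes:.0f} min to make two copies")
```

Under those assumptions the heavier codec takes roughly nine times as long to back up, which is the gap a producer is weighing against image quality.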

The lower the bitrate, the more danger you’re in

This, too, seems pretty obvious, but wishful thinking often leads us astray. Also–and I can’t emphasize this enough–sometimes good enough really is good enough. If the producer wants to shoot a lesser codec for budgetary reasons, or to speed up post, you need to know when you can make their day by saying “No problem” vs. when that choice may destroy the project.

The (hopefully long dead) DVCProHD and XDCAM codecs are good examples of this. First, here’s the secret truth behind how every codec operates: EVERY CODEC THROWS INFORMATION AWAY AND HOPES YOU WON’T NOTICE. The engineers who design codecs spend a lot of time learning what the average human will and won’t notice and exploiting that knowledge.

Neither DVCProHD nor XDCAM is good for shooting green screen. DVCProHD cheats on horizontal resolution (960×720 instead of 1280×720) because we tend to be less sensitive to it than vertical resolution.

Vertical resolution affects horizontal detail: imagine a little man with a pen moving from the top of a row of pixels to the bottom and leaving a dot of ink wherever he encounters a line; as he’s moving vertically the only lines he’s going to recognize run horizontally as he’ll bump right into them. Therefore, while he’s moving vertically, he leaves more ink where he encounters horizontal lines.

Vertical detail helps bring out eyes, which are wider than they are tall, and adds detail to horizons, which trend towards the horizontal, but we seem to be very tolerant of resolution loss in vertical lines. We don’t notice this loss of detail in normal live action footage, but put a person in front of a green screen and it quickly becomes clear that edges become very jagged very quickly.

XDCAM is just plain compressed. It’s a long-GOP codec, where GOP stands for “group of pictures.” When the codec compresses information it doesn’t look only within the frame (intra frame) but it looks between frames (inter frame) and tries to store only the changes that occur between the beginning and end frames. Normal live action looks fine, but once again the green screen reveals all: edges around objects in XDCAM appear as randomly moving clumps of blocks.
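The "store only the changes" idea can be sketched with a toy example. This is a deliberately simplified, lossless illustration, not how XDCAM or any real long-GOP codec is implemented (real codecs add motion prediction and lossy quantization on top); the frames are hypothetical 16-pixel rows and every function name is invented for the example.

```python
def encode_gop(frames):
    """Keep the first frame whole, then store only (index, new_value) changes."""
    key = list(frames[0])
    deltas = []
    prev = key
    for frame in frames[1:]:
        delta = [(i, v) for i, (p, v) in enumerate(zip(prev, frame)) if p != v]
        deltas.append(delta)
        prev = frame
    return key, deltas


def decode_gop(key, deltas):
    """Rebuild every frame by applying each delta to the previous frame."""
    frames = [list(key)]
    for delta in deltas:
        frame = list(frames[-1])
        for i, v in delta:
            frame[i] = v
        frames.append(frame)
    return frames


def stored_values(key, deltas):
    """Numbers that must be stored: the key frame plus two per changed pixel."""
    return len(key) + sum(2 * len(d) for d in deltas)


def make_frames(n_frames=4, width=16):
    """Flat background with a single bright pixel moving one step per frame."""
    frames = []
    for t in range(n_frames):
        frame = [10] * width
        frame[t] = 200
        frames.append(frame)
    return frames


frames = make_frames()
key, deltas = encode_gop(frames)
assert decode_gop(key, deltas) == frames  # this toy version loses nothing
print(stored_values(key, deltas), "values stored vs", sum(len(f) for f in frames), "intra-frame")
```

When almost nothing changes between frames the savings are large. When a lot changes at once (fine detail in motion, or noise), the deltas balloon, and a real codec working to a fixed bitrate has to start throwing information away.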

XAVC is an interesting beast. Found in the Sony F5 and F55, it works reasonably well for live action but–once again–fails the green screen test. A close look at high contrast edges reveals artifacts that remind me of what happens when I pull two pieces of paper apart: there’s a subtle “tearing” effect as the codec appears to repurpose bandwidth from surrounding areas in order to store more information related to the contrasty edge. Our eyes don’t notice this in normal scenarios but green screen reveals all.

The F5 and F55 offer SStP, Sony’s non-tape based HDCAM SR codec. It’s only available as an HD codec but it’s excellent: green screens look great, and it can stand up to heavy color grading (which is also a very destructive process). It handles green screens vastly better than 4K XAVC does, even though the bitrates are similar. This isn’t terribly surprising, as 4K requires four times the storage space of HD for similar results, so XAVC is cramming 4K into the same space that SStP uses for HD.
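The "four times the storage" point is easy to check with arithmetic. The sketch below assumes a 3840×2160 frame for 4K, 24 fps, and a made-up bitrate of 440 Mb/s shared by both formats; none of these are quoted camera specs, and bits per pixel is only a crude yardstick since different codecs spend their bits with different efficiency.

```python
def bits_per_pixel(bitrate_mbps, width, height, fps):
    """Average compressed bits available for each pixel of each frame."""
    return bitrate_mbps * 1_000_000 / (width * height * fps)


HD = (1920, 1080)
UHD = (3840, 2160)   # assumed "4K" frame size for this example
BITRATE_MBPS = 440   # hypothetical: same bitrate for both formats
FPS = 24

hd_bpp = bits_per_pixel(BITRATE_MBPS, *HD, FPS)
uhd_bpp = bits_per_pixel(BITRATE_MBPS, *UHD, FPS)
print(f"HD: {hd_bpp:.2f} bits/pixel, 4K: {uhd_bpp:.2f} bits/pixel, ratio {hd_bpp / uhd_bpp:.1f}x")
```

At equal bitrates the 4K frame gets a quarter of the bits per pixel, so the codec has to discard far more to fit.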

As you can surmise from the comments above, I’ve found that green screen breaks codecs faster than anything else. So does gentle motion in a detailed frame: Adam Wilt will test codecs by shooting trees in his backyard while gently nudging or bumping the camera, which turns leaves into blocks of color in the less robust codecs. The better codecs will retain leaf detail.

Some say it would be wonderful if every manufacturer standardized on the same codec, and while that would be convenient I’m not sure it’s completely desirable. Codecs are part of a comprehensive marketing strategy: high quality cameras will offer better codecs, while cheaper cameras will offer codecs that do an acceptable but not great job. As technology changes, the lower end of the market gets better codecs, so it’s beneficial to shake things up down there through competition. Also, every codec has a tradeoff and it’s important to match the tradeoff with what you’re shooting so as to get the best bang for the buck–and so you don’t screw up by trying to, say, shoot green screen in XDCAM on an EX3. (Just for the record, the 10-bit SDI output on the EX3 is really clean and keys great when recorded to an external deck.)

I’m not going to go into a huge amount of detail about codecs as I’ll either show my ignorance or else use big words to make it appear I’m smarter than I am. I do have some rules to share that I’ve found quite useful in my professional life:

(1) I use the highest bit rate possible at the greatest bit depth possible if the footage will be either graded or composited. Nothing shows poor edges faster than green screen; and as grading is inherently destructive I give the colorist the most information possible so that there’s something left after they throw most of it away.

(2) Live action is very forgiving because codecs rely on chaos: the more detail there is in a scene, and the fewer truly hard edges, the less you’ll notice that these details are missing. Your eyes love detail as long as there’s not a lot of it; too much and they lose track. Codecs take advantage of that.

(3) 4:2:2 is fine for live action. 4:4:4 is required for any kind of effects work. If you’re grading the image aggressively you’ll do much better with 4:4:4 than 4:2:2. (4:2:2 has half the horizontal color resolution of 4:4:4 in the Cb and Cr color-difference channels.) Also, note that some codecs (I’m looking at you, XAVC) will show a slight color shift compared to 4:4:4 codecs like SStP if the two are directly compared.

(4) 10-bit color is the minimum I want to work with, although I can make 8-bit work in a lot of situations. Some cameras record less than 10-bit, and there are situations where I can get away with that… but this kind of footage often falls apart faster when graded or composited. Any sort of contrast enhancement will often result in banding, and most color grading is contrast enhancement.

(5) Don’t assume all codecs are the same just because they are based on a similar standard. For example, H.264 has traditionally been considered a very good codec but both XAVC and SStP are based on it and they offer very different results. Codecs operate at different levels, and SStP is much more robust than XAVC even though they have the same roots. (Also, my understanding is that H.264 only defines how the footage must be presented to a player in order to be played back properly, and says nothing about how it is compressed. That’s completely up to the developer!) Make no assumptions about a new codec based on its roots: always test!

(6) While raw formats are awesome, I am happy to master in ProRes 4444 for non-raw projects. I’ll accept 422HQ as well, although I’m slightly less happy with it. I’ll never shoot lesser flavors of ProRes because I don’t consider them to be master formats: the bit rate is too low and the footage is too highly compressed. They’re editing proxy formats at best.

(7) XAVC is fine for light grading. SStP and ProRes 422HQ/4444 are better. Canon Log recorded to Canon’s XF codec is okay if run through a LUT before grading but trying to tweak it aggressively using lift/gamma/gain only can be… disappointing. (8-bit color can only stretch so far. I’ve found Canon’s Wide Dynamic Range gamma helpful as it adds a little bit of an S-curve during recording so I don’t have to create it so aggressively later.)

(8) When testing a new codec I always shoot a diagonal edge against green screen to look for jagged edges, and then shoot something with extremely high detail while jiggling the camera slightly to see how much detail disappears.

(9) A noisy signal is the enemy of a bad codec. Most codecs throw away shadow information as our eyes and brains forgive missing detail in dark tones. As codecs spend more bandwidth compressing moving objects than still ones, and as noise moves constantly, shadows become bland and featureless blobs as a stressed codec tries to store all that moving detail and simply gives up. If you’re shooting with a lesser codec you’ll be much more successful at retaining shadow detail if you reduce your camera’s ISO to make the image as noise free as possible.

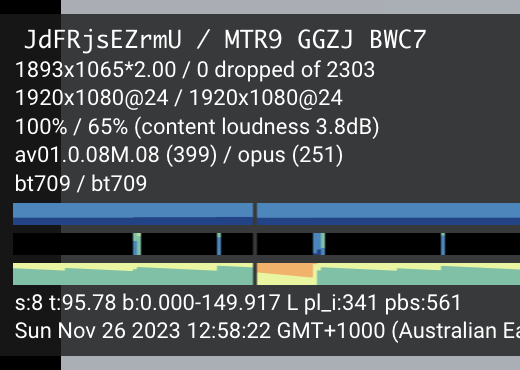

(10) Never assume that what you’re seeing on the monitor is what is being laid down in a file. You may be recording 4:4:4 16-bit RGB raw or 4:2:2 8-bit YCbCr XAVC and it’s going to look much the same because the monitor is ALWAYS showing you 4:2:2 YCbCr. It’s best to check playback once in a while to see what’s REALLY being recorded. (Bring a microscope.)
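Rules (3) and (4) can be put into rough numbers. The sketch below counts chroma samples per frame for common subsampling schemes, then counts how many distinct 8-bit display levels survive when a grade stretches one quarter of the recorded range to fill the whole display range. The stretch scenario is a hypothetical stand-in for aggressive contrast enhancement, and the function names are invented for illustration.

```python
def chroma_samples(width, height, scheme):
    """Samples in EACH color-difference channel (Cb or Cr) for one frame."""
    h_div, v_div = {"4:4:4": (1, 1), "4:2:2": (2, 1), "4:2:0": (2, 2)}[scheme]
    return (width // h_div) * (height // v_div)


def levels_after_stretch(bit_depth, lo_frac=0.25, hi_frac=0.5, out_bits=8):
    """Stretch the recorded codes between lo_frac and hi_frac of the range
    to the full output range and count the distinct output codes."""
    codes = 2 ** bit_depth
    lo = int(codes * lo_frac)
    hi = int(codes * hi_frac)
    max_out = 2 ** out_bits - 1
    out = {round((c - lo) / (hi - lo) * max_out) for c in range(lo, hi + 1)}
    return len(out)


for scheme in ("4:4:4", "4:2:2", "4:2:0"):
    print(scheme, chroma_samples(1920, 1080, scheme), "samples per chroma channel")

for depth in (8, 10):
    print(f"{depth}-bit source: {levels_after_stretch(depth)} distinct levels out of 256")
```

With these assumptions the 8-bit source fills the 256 display levels with only 65 distinct values, which shows up as banding, while the 10-bit source still covers all 256.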
