You’ve most likely already seen plenty of AI-generated animations made with tools like Runway Gen-2 and Kaiber, which either animate a still image for a few seconds or generate video directly from text in a style that tends to look somewhat like a psilocybin-induced trip. While they’re interesting and often artistic as a genre unto itself, I’ve found little practical use for the technology to date, especially for anything even remotely realistic or smooth in its delivery.

While I’m not looking for extreme realism like I might in VFX work, I do want my fantasy creations to feel smooth and have quality motion and engaging elements that aren’t distracting to the overall work.

That’s why AI-generated content often isn’t ready straight out of the box. You usually need to do further work on the elements of a rendered image in Photoshop or, if you’re animating objects, in After Effects. I work in After Effects almost daily, and 95% of that work involves 2D content and objects that simulate 3D environments and effects.

That’s where stumbling across the Zoom Out out-painting feature in Midjourney v5.2 comes in for some specific compositions. Midjourney is just the content generator, while After Effects is the animator.

Want to know how to use this new feature in Midjourney on Discord? This video tutorial for Midjourney Zoom-Out from All About AI’s YouTube channel walks you through the steps to produce them:

For this specific type of animation I’m looking to create, After Effects is necessary to “zoom” through multiple rendered layers from Midjourney to reveal a long track motion.

Initial Tests

So when it was announced that Midjourney v5.2 allowed for Zoom-out and Pan out-rendering capabilities, that triggered some fun experimentation in my quest for something unique.

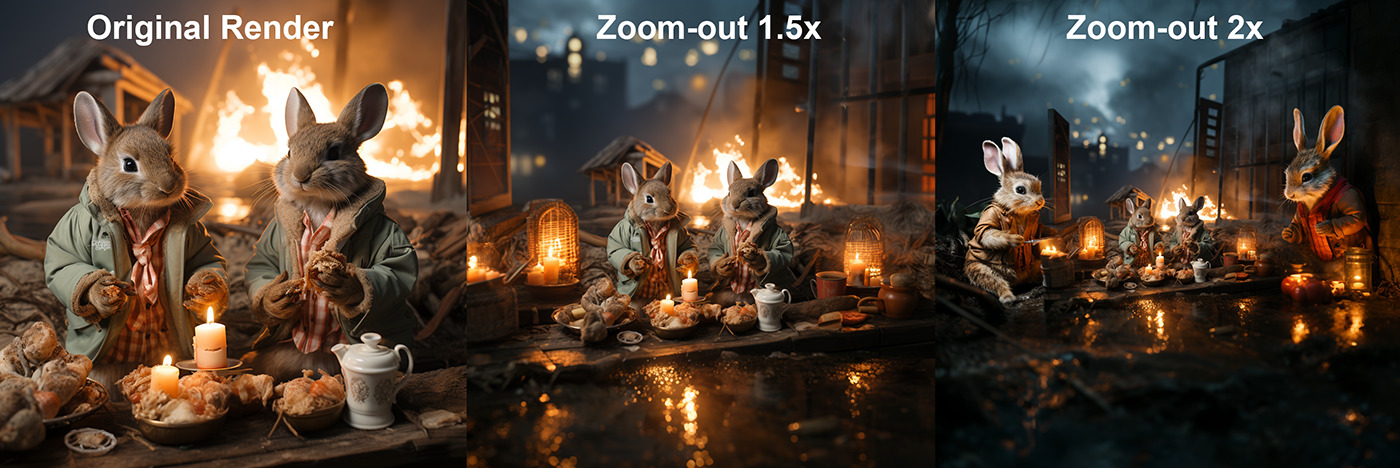

I started with a bizarre concept that surprised me when it first rendered, as it wasn’t anything that I prompted Midjourney for but it gave a delightful result nonetheless. It was the first of July and the beginning of a 4-day weekend, and on the first of each month I often do a fun take on the ritual of saying “rabbit rabbit” on social media with an image from Midjourney. In this instance, I asked for “two rabbits eating hotdogs”, thinking I’d be able to add some fireworks or something colorful in subsequent renditions. The resulting image you see below in the first frame is one of the results I got, and I was delighted at the choice of composition (even though it looks more like they’re eating an alien or squid). 😉

When Midjourney v5.2 was announced days later, I went back into Discord and looked through my completed images in search of something to try the new Zoom-Out feature on. The next two renders were sequential and surprised me even more! First, the 1.5x Zoom-Out gave me a nice outpainted scene around my initial feasting rabbits render. I then went 2x more without changing the prompt at all, and I got two more rabbits. I kept going 2x, 2x, 2x, 2x a bunch of times (13 to be exact) and it created a long street scene lined with rabbits. They were really multiplying, and they varied in their characteristics, clothing and surroundings. They went from primarily eating to primarily drinking, and the variety of clothing and facial expressions was delightful!

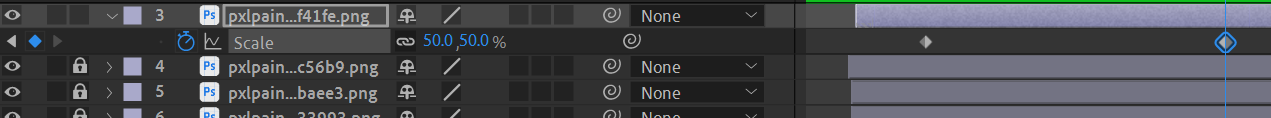

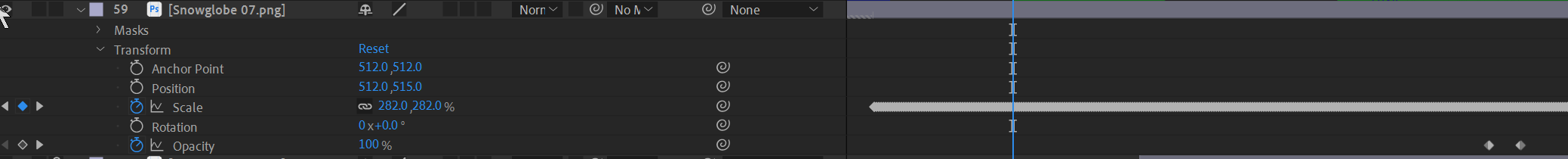

After collecting all these renders and stacking them on top of each other in sequence in After Effects, I knew I needed to align the zooming so that it always used the best-quality “center focal area” of each layer. Each layer scaled from 400% down to 50% before fading out to reveal the layer below, which was aligned to take over the zoom. I used a feathered mask about 100 pixels inside the edge of each frame to eliminate the artifacts and differences in edge pixel data between renders.

I repeated the process all the way through 14 different layers just to see if it would work…

…and it kinda did!

My first test render worked okay in theory, but scaling each layer between 400% and 50% made the speed ramp in waves as each subsequent layer’s zoom cycle was revealed: the motion slows down in the last 30% of the scaling path, then speeds up again over the next 30-50% of the scaling motion. Here’s the first test result:

So in essence, each layer on the timeline looked like this:

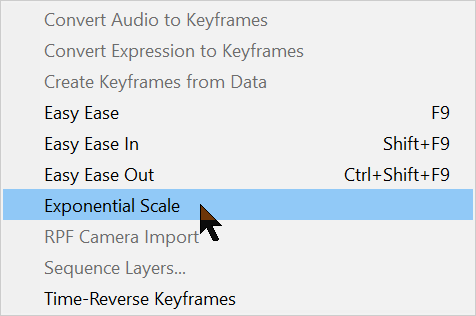

What I needed was a way to normalize this motion to achieve the correct steady speed through the range of scale motion. After a little snooping I discovered a feature in After Effects that I’ve never used before! “Exponential Scale” found under the Animation drop-down menu:

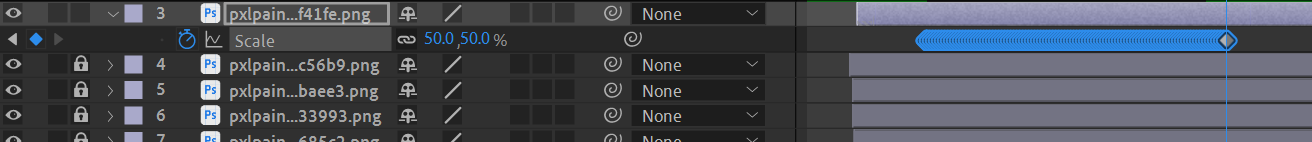

So by selecting the first and last keyframes for scaling in the timeline, then applying this option, it provides a full Exponential Scale speed ramping that’s normalized straight-through.
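To see why an exponential ramp reads as steady motion, here is a minimal Python sketch. It is only an illustration of the underlying math and not a description of how After Effects implements the feature; the function names are hypothetical. The assumption is that a zoom looks constant when the scale changes by the same ratio in every equal slice of time, so the sketch compares that relative rate for a straight-line ramp and an exponential ramp over the same 400% to 50% range.

```python
import math


def linear_scale(start, end, t):
    """Scale at normalized time t (0..1) along a straight-line ramp."""
    return start + (end - start) * t


def exponential_scale(start, end, t):
    """Scale at normalized time t when the scale changes by a constant ratio."""
    return start * (end / start) ** t


def zoom_rate(scale_fn, start, end, t, dt=1e-6):
    """Approximate relative rate of change, d(ln scale)/dt, at time t."""
    a = scale_fn(start, end, t)
    b = scale_fn(start, end, t + dt)
    return (math.log(b) - math.log(a)) / dt


if __name__ == "__main__":
    # Compare the relative zoom rate at a few points along a 400% -> 50% ramp.
    for t in (0.0, 0.5, 0.9):
        lin = zoom_rate(linear_scale, 400, 50, t)
        exp = zoom_rate(exponential_scale, 400, 50, t)
        print(f"t={t:.1f}  linear rate={lin:.2f}  exponential rate={exp:.2f}")
```

In this sketch the exponential ramp keeps the same relative rate at every point, while the straight-line ramp’s rate drifts across the range, which is the kind of uneven speed that shows up when layers are chained one after another.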

Applying that to all the layers and making a few tweaks and adjustments for timing on a few layers, I rendered it again after Exponential Scale:

So while that was a satisfying discovery and a good practice and proof of concept piece, I set out to make something very deliberate and much more immersive.

The Dimensions of Nature Animation Project

What started out as an experiment ended up as a collaborative project with my wife, Ellen Johnson, to make a short nature-inspired music video. I generated 20 different rendered frames (10 different “globes”) for a total zoom-out factor of 1,048,576x. Short of using 3D animation software, there’s really no way to create this, and certainly not with optics alone, especially at this resolution.
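For anyone curious where a number like that comes from, here is a quick back-of-the-envelope sketch in Python. It assumes each of the 20 frames contributes one 2x zoom-out step, and that the roughly 3:40 running time is split evenly across the steps; both are simplifying assumptions for illustration, and the function name is hypothetical.

```python
def total_zoom(steps, factor_per_step=2):
    """Cumulative zoom-out factor after a number of equal zoom steps."""
    return factor_per_step ** steps


if __name__ == "__main__":
    # Assumption: 20 frames, each doubling the field of view.
    print(f"{total_zoom(20):,}x")  # prints "1,048,576x"

    # Assumption: about 3:40 (220 seconds) divided evenly over 20 steps.
    print(220 / 20, "seconds per doubling")
```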

The animation, built from layers rendered out of Midjourney v5.2, zooms out from a snowglobe on a table through different miniature worlds of wonder, each inside another, eventually resting on a large glass jar on a carved stump. Ellen provided all the music scoring and sound design, with SFX that travel through these various “worlds”, while I incorporated animated segments for each environment to bring them to life a bit.

In essence, we’re sort of creating an animated Russian Doll effect where you see one globe/jar environment inside another, inside another, etc.

The base zoom-out animation followed the same process as the rabbit animation earlier: stacking layers, masking, scaling, applying Exponential Scale to each layer, etc.

Once all 20 rendered layers were aligned and set to complete in approximately 3:40 (the proposed length of the music soundtrack), we determined what additional animation segments would make sense traveling through the various “worlds” – which transitioned through different types of weather and flora/fauna. This laid the groundwork for the music and sound design, as well as the detailed animations that got composited later.

Here are the raw layers zoomed out and sped up 3X so you can see how smooth the scaling effect is before adding any other elements:

In this case, the generative AI imagery was not only the inspiration for this animation, but also the base content that was animated, along with some other 2D elements such as particle generators, masked effects on motion layers and some green screen video objects for various insects, etc. from Adobe Stock. There were over 100 layers in this project alone, before moving over to Adobe Premiere to edit in the recorded and mixed music track from Logic Pro, with another 12+ SFX tracks.

The completed music video/animation project is on YouTube. It’s best to watch it full-screen on YouTube directly rather than embedded, so you can see the details. Earbuds or headphones are also recommended if you don’t have a quality sound system.

Keep in mind, this is only 1080×1080, working from the native original dimensions of the frames Midjourney rendered, and it isn’t supposed to be truly realistic like a 3D render, but a fantasy exploration of imagery and sounds. Enjoy.
