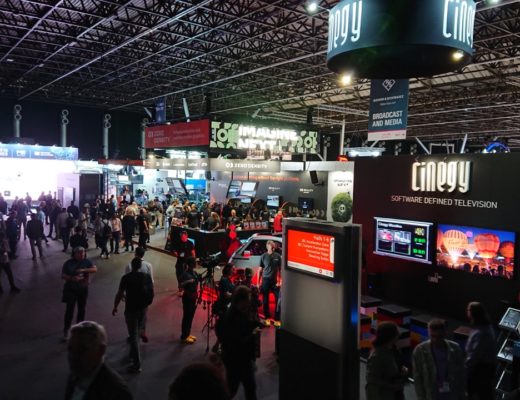

Going by the organisation’s own press releases, IBC 2022 has been something of a gift for anyone looking to impress a buzzword-focussed search engine. There have been new lens, camera and lighting releases, of course, but the show as a whole seems to have offered a cure for the gear acquisition syndrome that so frequently afflicts camera enthusiasts. Most of those buzzwords, things like “cloud” and “metaverse” and “IP”, are concerned almost exclusively with one specific idea: taking work that was once done by dedicated physical hardware and doing it on general-purpose computers.

Cloud has existed for a while

It’s an idea the film industry has been getting used to since at least the 1980s, when people started referring to a Mac II running nonlinear editing software as “the Avid” in the same way they’d previously referred to “the Steenbeck.” In that sense, the move from custom hardware to generic IT equipment wasn’t always obvious at first. When some of that infrastructure moved from big, expensive hardware to big, expensive software, the attitude came along with it, and it wasn’t long ago that software like Resolve, even after it had stopped being so expensive, still liked to annex every pixel of the display as if it were the only reason anyone would buy a workstation.

Resolve and many other killer apps have gradually drifted toward accepting that, in 2022, they’re all just, well, applications, albeit applications that do things which demanded racks of custom hardware costing as much as a house in the early 2000s. Nobody calls it “the Cinema 4D,” after all. And now they don’t even demand a workstation, or at least that’s what the cloud computing people would like us to believe. Purveyors of cloud resources tend not to mention that “the cloud” is really just a sophisticated way of referring to someone else’s workstation, set up in a neighbouring time zone where electricity is cheap, though for the user the result is much the same.

History buffs will be aware that this is effectively a return to the 1960s approach of putting a simple, low-cost device on the end of a long wire, as was standard with the earliest mainframes. Our thin clients probably have more computational muscle in their touchscreen controllers than a decades-old mainframe had in total, but the overall concept isn’t something to get excited about.

Replace x with tablet, where x is… anything

A much more recent development is the sheer extent to which commodity hardware is starting to displace even the most task-specific devices. Take one of Hollyland’s video transmitter-receiver sets, for instance: it can stream very-nearly-live images to Wi-Fi devices as well as to SDI and HDMI monitors, which raises the prospect of monitors being replaced by, well, more or less any simple tablet, and simple tablets can be had for under $50.

That doesn’t deliver the immediacy a camera operator needs, and it’s no coincidence that real-time performance is often where custom hardware still wins out. Still, if you’re a hair and makeup artist or a production designer, latency may not be much of a concern. Big productions will continue to supply real-time pictures on OLED displays because the cost of a PVM-A170 and a Teradek is trivial compared to the day rate of any Oscar winner, but, at the risk of swerving close to the word “democratisation,” there’s value in making it possible for every set to have a handful of radio-linked displays to scatter around.

It will also highlight the places where this sort of approach falls down. Lighting people will be painfully aware that using a cellphone as a lighting controller all day tends not to go well.

Your phone has a high-powered processor, a beautiful touchscreen, and about four radio modems, all of which make it a far better potential remote controller than most task-specific remote controllers. The problem is that cellphones are not engineered on the expectation that they’ll be kept active for hours on end. To a lesser extent, neither are tablets, so anyone planning a fleet of pocket-money AliExpress eyePadds as monitors might also want to invest in some equally inexpensive AliExpress power banks, and keep a fire extinguisher near the charging area.

Evaporating infrastructure

Most of the fallout from IBC, though, isn’t about comparatively small-scale situations like this. It’s about replacing the huge infrastructure of a television studio with the considerably smaller infrastructure of IP networking. That often means systems like NDI, frequently running over the same Ethernet wiring that everyone’s been using to send PDFs to each other for the last fifteen years. That’s what a lot of universities did when they unexpectedly needed to install streaming cameras in early 2020. Like the standby carpenter on a show photographed entirely on a virtual production stage, the infrastructure requirement has simply evaporated. It’s even possible to obviate the costume department, though, as Ryan Reynolds can attest, that’s possibly something best left to the future.

The long-held fantasy of production executives is probably to have the poster art drawn up, feed it into an AI, and have a finished DCP turn up on a network share the next day. Disturbingly, there’s nothing formally preventing that from becoming possible other than sheer computing power, and people have gone broke underestimating how quickly that arrives. In the meantime, there’ll still be some requirement to point cameras at things, so if you’re someone whose gear acquisition syndrome can only be assuaged by occasionally addressing the unplucked foam of a freshly delivered Pelican case, best acquire some gear while there’s gear left to acquire.
